We should celebrate accessibility tools, not hide them away

Whether in macOS, Apple Watch, Windows 11, or other platforms, there are swathes of great accessibility features available to everyone

As I crash into my 58th year on this planet, I am becoming more aware of the physical frailties of getting old. I am not getting old, of course. 60 is the new 40, according to my friends. They are too kind.

But we should be aware that the old assumption that ageing members of our society and workforce don’t use technology is about to come to a crashing halt. Those of us marching towards retirement are quite technology-aware, and the old excuses don’t wash.

With age comes some, shall we say, “adaptations” of our abilities. For me, real-time glucose monitoring, first with the Libre and now with the Dexcom G6, has been transformative in managing my diabetes, which requires insulin injections.

However, these are easy, mainstream solutions. We need to consider accessibility in a wider context. In a recent podcast, I sang the praises of the Microsoft Surface Adaptive Kit. It costs a few pounds, and it doesn’t have a record-smashing business processor or a high-resolution screen. It’s a set of stickers and raised, bumpy labels to stick on various parts of your computer. It even comes with stick-on pull tabs that make it easier to open the screen or pull out the kickstand.

The unfortunate reality is that a lot of mainstream IT products are quite difficult to use if you have accessibility needs, and adding just a few small items like labels and pull tabs can be transformative. Full marks to Microsoft for putting together this kit, which is useful on any computer and certainly isn’t limited to the latest devices such as the Microsoft Surface Pro 8.

All of this is a learning experience. Mine started when I was 20 and spent a year working with a chap who was blind. Realising that I was his eyes in the lab was a revelation, and it totally changed my perception of accessibility and of how to support people with such needs. If I could wave a magic wand, teenagers wouldn’t go on a gap year but would instead spend time working in the community with people who have accessibility needs.

We should remember that companies like Microsoft and Apple take accessibility incredibly seriously. Most of us never see this work, simply because we don’t need to look for these features, but their range and depth can be quite breathtaking. And don’t assume this is done for profit. I’m reminded of the comment Apple CEO Tim Cook made at the company’s annual shareholder meeting nearly a decade ago: “When we work on making our devices accessible by the blind, I don’t consider the bloody ROI.”

So I was delighted to see another wave of accessibility features coming from Apple. I know I could find similar work from Microsoft in Windows 11, but the Apple examples are to hand. For example, this morning I was looking through the Accessibility features in macOS, and found an item called “Enable Head Pointer” in the “Pointer Control” settings. This uses the camera in the laptop to work out where your head is, and then tracks your head movements. These movements are then used to drive the mouse pointer on the screen. To my astonishment, it just worked.

Or how about “Enable alternative pointer actions”, which allows facial expressions to be mapped to mouse clicks? I mapped “Smile” to “Left Click”, and it just works. I had no idea this sort of thing was baked into the operating system.

How about using gestures to trigger actions? On my Apple Watch, a range of hand movements can fire actions: clench your fist to perform one action, or double-clench for another. Pinch and double pinch can be mapped to answering or ending a phone call, taking a photo, playing or pausing media, or starting or resuming a workout. The watch detects all of these gestures itself. Then there is a huge array of zoom and magnifier tools, plus audio support ranging from screen reading to mixing stereo audio down to mono.

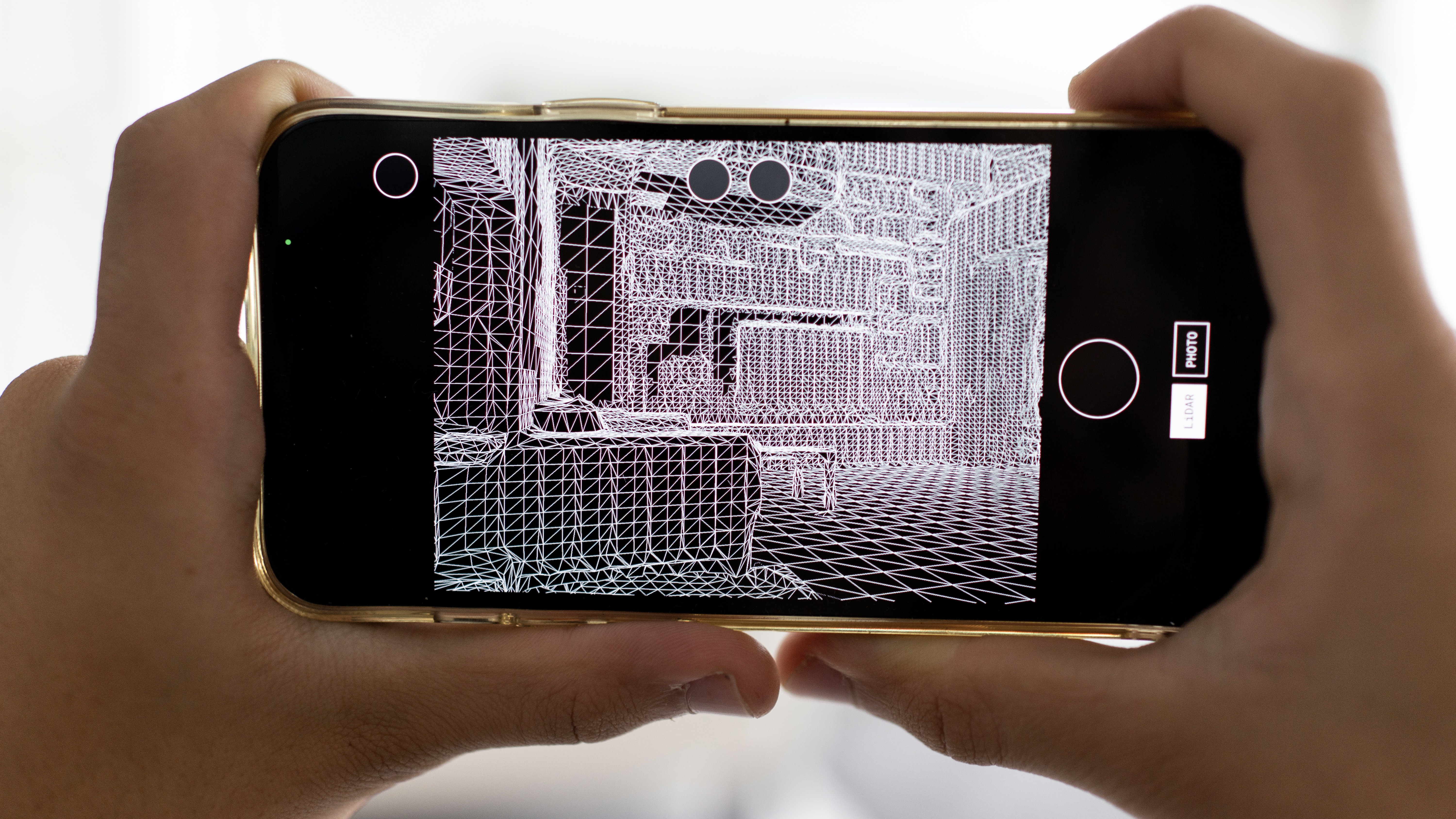

Some of the forthcoming features are simply astonishing, including Door Detection, which uses the LiDAR scanner in your iPhone to help people who are blind or have low vision. It scans the room and works out where the doors are. Not only that, it can describe each door and where it is, telling you whether it is open or closed, and whether it can be opened by pushing, turning a knob or pulling a handle. Door Detection can also read signs and symbols around a door, such as a room number at an office. This combination of LiDAR, camera and machine learning is truly transformative, and all of it can be spoken aloud to the user.

It soon becomes obvious that a whole heap of these functions are not just for those needing assistance, but for all of us. These features shouldn’t be hidden away behind an Accessibility menu item; they should be front and centre. I have come to think of the combination of my iPhone, Watch and CarPlay as an information and capability fabric. Any forthcoming augmented reality (AR) glasses will need to fit seamlessly into this fabric and increase the capability of every part of the system.

It is time to bring this capability into the mainstream, and to roundly criticise the apps, services and products that are unsupportive of accessibility, or downright hostile to it, because poor accessibility affects everyone.
