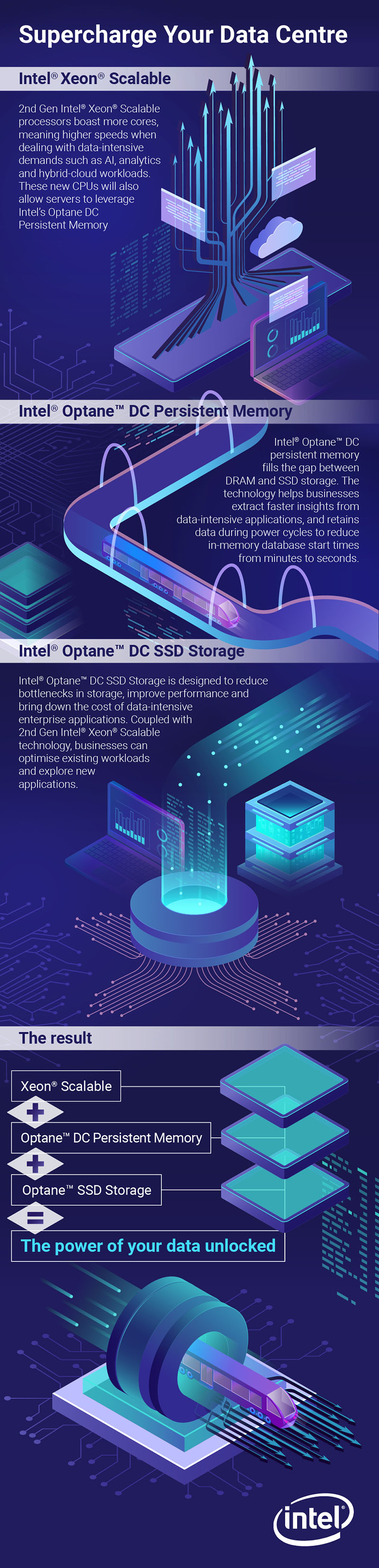

Raising the bar on enterprise computing

More than just a new CPU generation, 2nd Gen Intel® Xeon® Scalable processors could be revolutionary for enterprise computing

With its move to the Xeon Scalable architecture, Intel began a revolution in its enterprise processors that went beyond the normal performance and energy efficiency selling points. Combined with new storage, connectivity and memory technologies, Xeon Scalable was a step change. The new 2nd Gen Intel Xeon Scalable processors don't just continue that work but double down on it, with a raft of improvements and upgrades, some of them revolutionary, that add up to a significant shift in performance for today's most crucial workloads. Whether you're looking to push forward with ambitious modernisation strategies or embrace new technologies around AI, 2nd Gen Intel Xeon Scalable processors should be part of your plan. The revised architecture doesn't just give you a speed boost, but opens up a whole new wave of capabilities.

More cores and higher speeds meet AI acceleration

That's not to say this latest generation lacks conventional performance improvements. Across the line, from the entry-level Bronze processors to the new, high-end Platinum processors, there are increases in frequency, while models from the Silver family upwards get more cores at roughly the same price point, not to mention more L3 cache. For instance, the new Xeon Silver 4214 has 12 cores running at a base frequency of 2.2GHz with a Turbo frequency of 3.2GHz, plus 16.5MB of L3 cache. That's a big step on from the 10 cores, 2.2GHz and 2.5GHz of the old Xeon Silver 4114, which had just 13.75MB of cache, and it's a step that's replicated as you move upwards through the line.

At the high end, the improvements stand out even further. The new Platinum 9200 family has processors with up to 56 cores running 112 threads with a base frequency of 2.6GHz and a Turbo frequency of 3.8GHz. By any yardstick that's an incredible amount of power. What's more, these processors have 77MB of L3 cache and support for up to 2,933MHz DDR4 RAM, the fastest ever natively supported by a Xeon processor. Put up to 112 cores to work in a two-socket configuration, and you're looking at unbelievable levels of performance for a single-unit system.

From heavy-duty virtualisation scenarios to cutting-edge, high-performance applications, these CPUs are designed to run the most demanding workloads. Intel claims a 33% performance improvement over previous-generation Xeon Scalable processors, or up to a 3.5x improvement over the Xeon E5 processors of five years ago.

Yet Intel's enhancements run much deeper. The Xeon Scalable architecture introduced the AVX-512 instruction set, with double-width registers and double the number of registers compared with the previous AVX2 instruction set, dramatically accelerating high-performance workloads including AI, cryptography and data protection. The 2nd generation Intel Xeon Scalable processor takes that one stage further with AVX-512 Deep Learning (DL) Boost and Vector Neural Network Instructions (VNNI): new instructions designed specifically to enhance AI performance both at the data centre and the edge.
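To put those register figures in concrete terms, here is a rough sketch of the arithmetic. It assumes the architectural figures for 64-bit mode (sixteen 256-bit registers for AVX2, thirty-two 512-bit registers for AVX-512), which the article does not spell out; the helper name `lanes` is purely illustrative.

```python
# Illustrative arithmetic only: how many values fit in one vector register.
# Register widths and counts are the 64-bit-mode architectural figures
# (an assumption added here, not taken from the article).
AVX2_BITS, AVX2_REGISTERS = 256, 16
AVX512_BITS, AVX512_REGISTERS = 512, 32


def lanes(register_bits: int, element_bits: int) -> int:
    """Number of elements of a given width that fit in one register."""
    return register_bits // element_bits


for name, bits, count in (
    ("AVX2", AVX2_BITS, AVX2_REGISTERS),
    ("AVX-512", AVX512_BITS, AVX512_REGISTERS),
):
    print(
        f"{name}: {count} registers, each holding "
        f"{lanes(bits, 32)} x 32-bit or {lanes(bits, 8)} x 8-bit values"
    )
```

The 8-bit column hints at why lower-precision arithmetic matters for AI workloads: four times as many values fit in each register as with 32-bit floats.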

Deep learning has two major aspects, training and inference: the algorithm is first trained to assign different 'weights' to aspects of the data being input, then asked to draw inferences about new data based on what the AI learnt during that training. DL Boost and VNNI are designed specifically to accelerate the inference process by enabling it to work at lower levels of numerical precision, and to do so without any perceptible compromise on accuracy.
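As a rough illustration of what working at lower numerical precision means, the sketch below quantises a handful of made-up weights and inputs to 8-bit integers, does the multiply-accumulate in integers, and rescales the result once at the end. It is a simplified, symmetric signed-by-signed scheme written in plain Python, not Intel's implementation; all names and numbers are hypothetical.

```python
# A minimal sketch of symmetric 8-bit quantisation in pure Python.
# Function names and example values are hypothetical.

def scale_for(values):
    """Pick a scale so the largest magnitude maps to 127."""
    return max(abs(v) for v in values) / 127


def quantise(values, scale):
    """Map floats to signed 8-bit integers, clamping to [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


weights = [0.42, -1.30, 0.07, 0.88]
inputs = [1.50, 0.25, -0.60, 2.00]

w_scale, x_scale = scale_for(weights), scale_for(inputs)
w_q, x_q = quantise(weights, w_scale), quantise(inputs, x_scale)

# Full-precision reference result.
exact = sum(w * x for w, x in zip(weights, inputs))

# Integer multiply-accumulate, then a single rescale back to a float.
approx = sum(w * x for w, x in zip(w_q, x_q)) * w_scale * x_scale

print(f"float result: {exact:.4f}")
print(f"int8 result:  {approx:.4f}")
```

The two results differ only slightly for this toy example, which is the intuition behind the claim that inference can tolerate reduced precision. Real frameworks calibrate scales far more carefully than this.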

Using a new, single instruction to replace the work of three of the old ones, VNNI can offer serious performance upgrades for deep learning applications such as image recognition, voice recognition and language translation. In internal testing, Intel has seen boosts of up to 30x over previous-generation Xeon Scalable processors. What's more, these technologies are built to accelerate Intel's open-source MKL-DNN Deep Learning library, which can be found within the Microsoft Cognitive Toolkit, TensorFlow and BigDL libraries. There's no need for developers to rebuild everything to use the new instructions because they work within the libraries and frameworks DL developers already use.
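The "one instruction instead of three" idea can be sketched by emulating a single 32-bit lane in Python. The article does not name the instructions; the sketch assumes the three-step sequence is VPMADDUBSW, VPMADDWD and VPADDD, and that the fused replacement is VPDPBUSD. It ignores 32-bit overflow, and the function names are hypothetical.

```python
# Simplified emulation of one 32-bit lane; not real intrinsics.
# a_u8 holds four unsigned 8-bit values, b_s8 four signed 8-bit values.

def saturate16(x):
    """Clamp to the signed 16-bit range, as the 16-bit intermediate step does."""
    return max(-32768, min(32767, x))


def three_step_lane(acc, a_u8, b_s8):
    # Step 1 (VPMADDUBSW-style): multiply, add adjacent pairs, saturate to 16 bits.
    pair_sums = [
        saturate16(a_u8[i] * b_s8[i] + a_u8[i + 1] * b_s8[i + 1]) for i in (0, 2)
    ]
    # Step 2 (VPMADDWD-style, multiplying by ones): widen and add the two sums.
    widened = pair_sums[0] * 1 + pair_sums[1] * 1
    # Step 3 (VPADDD-style): add into the 32-bit accumulator.
    return acc + widened


def fused_lane(acc, a_u8, b_s8):
    # VPDPBUSD-style: four products summed straight into the accumulator,
    # with no 16-bit intermediate.
    return acc + sum(a * b for a, b in zip(a_u8, b_s8))


a, b = [12, 200, 7, 255], [3, -5, 127, -128]
print(three_step_lane(100, a, b), fused_lane(100, a, b))

# With large values the 16-bit intermediate saturates; the fused form does not.
big_a, big_b = [255, 255, 0, 0], [127, 127, 0, 0]
print(three_step_lane(0, big_a, big_b), fused_lane(0, big_a, big_b))
```

In the first case both paths agree; in the second, the three-step path loses information at the 16-bit stage, so the fused form is not only fewer instructions but also avoids that intermediate saturation.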

The next-gen platform

Of course, the processor isn't all that matters in a server or system, which is why 2nd Gen Intel Xeon Scalable processors are designed to work hand-in-hand with some of Intel's most powerful technologies. Perhaps the most crucial is Intel Optane DC Persistent Memory, which combines Intel's 3D XPoint memory media with Intel memory and storage controllers to bring you a new kind of memory, with the performance of RAM but the persistence and lower costs of NAND storage.

Optane is widely known as an alternative to NAND-based SSD technology, but in its DC Persistent Memory form it can replace standard DDR4 DIMMs, augmenting the available RAM and acting as a persistent memory store. Paired with a 2nd Gen Intel Xeon Scalable processor, you can have up to six Optane DC Persistent Memory modules per socket partnered with at least one DDR4 module. With 128GB, 256GB and 512GB modules available, you can have up to 32TB of low latency, persistent RAM available without the huge costs associated with using conventional DDR4.

The benefits almost speak for themselves. With such lavish quantities of RAM available, there's scope to run heavier workloads or more virtual machines; Intel testing shows that you can run up to 36% more VMs on 2nd Gen Intel Xeon Scalable processors with Intel Optane DC Persistent Memory. What's more, this same combination opens up powerful but demanding in-memory applications to a much wider range of enterprises, giving more companies the chance to run real-time analytics on near-live data or scour vast datasets for insight. Combine this with the monster AI acceleration of Intel's new CPUs, and some hugely exciting capabilities hit the mainstream.

Yet there's still more to these latest Xeon Scalable chips than performance: they are the foundation of a modern computing platform, built for a connected, data-driven business world. Intel QuickAssist technology adds hardware acceleration for network security, routing and storage, boosting performance in the software-defined data centre. There's also support for Intel Ethernet with scalable iWARP RDMA, giving you up to four 10Gbits/sec Ethernet ports for high data throughput between systems with ultra-low latency. Add Intel's new Ethernet 800 Series network adapters, and you can take the next step into 100Gbits/sec connectivity, for incredible levels of scalability and power.

Security, meanwhile, is enhanced by hardware acceleration for the new Intel Security Libraries (SecL-DC) and Intel Threat Detection Technology, providing a real alternative to expensive hardware security modules and protecting the data centre against incoming threats. This makes it tangibly easier to deliver platforms and services based on trust. Finally, Intel's Infrastructure Management Technologies provide a robust framework for resource management, with platform-level detection, monitoring, reporting and configuration. It's the key to controlling and managing your compute and storage resources to improve data centre efficiency and utilisation.

The overall effect? A line of processors that covers the needs of every business, and that provides each one with a secure, robust and scalable platform for the big applications of tomorrow. This isn't just about efficiency or about delivering your existing capabilities faster, but about empowering your business to do more with the best tools available. Don't let the 2nd generation name fool you. This isn't just an upgrade; it's a game-changer.
