Google is getting worse as it does more

Search is Google’s heart, but the algorithms that run it are clearly struggling to keep up with the web

The internet is broken, though that's hardly news. Facebook doesn't bring the world closer together, as Mark Zuckerberg once hoped, but drives people with slightly divergent opinions further apart. Twitter was designed to share news and information instantly and across borders, but it's much better for sharing abuse. As for Google's goal, to "organise the world's information and make it universally accessible and useful"? Searching for anything specific is nigh on impossible.

Don't believe that Google is useless? Here's an example. Try to find out the date and time of your local Bonfire Night fireworks display. The first result I see when I search "Walthamstow Bonfire Night 2019" is a Google-made "Events" box informing me that Bonfire Night is the fifth of November, a fact I very much remember. Next in the results is a list of questions that other "people also ask", which includes the suggestion "what time are the Crystal Palace fireworks" – while I'm sure they're lovely, I live in northeast London.

Both of those results were generated automatically by Google's systems, and both are useless to me. But after that algorithmic garbage, we finally have some actual results from the web, namely a series of articles and blog posts finely tuned to ride the SEO train to advertising profit. The first result says there are no fireworks in Walthamstow this year; that is not true. The second and third results don't feature the word "Walthamstow" anywhere on the linked page – so why are they ranking for that term? If my editor would let me use emoji in my columns, there'd be a "shrug" here.

The fourth website in the ranking does include all the key terms, but only in a photo caption; it's an explainer about what Bonfire Night is, rather than where I might partake. The fifth result is the local council's Bonfire Night listing on Facebook... for last year's event. Bing, on the other hand, had an accurate result second in the list. It's enough to make anyone want to chuck Google on the bonfire; the only fireworks are my temper.

Those fruitless Google results have two causes, and both are issues Google needs to address. First, the article results are there because sites are trying to game Google's ranking algorithm, the dark art known as search engine optimisation (SEO). It's easy to blame publishers, but if sites are ranking for "Walthamstow" without using the word at all, we need to dole out some blame to Google's clearly broken algorithm, too.

The second cause of those terrible results is also to do with Google's algorithm: data ages, eroding and going out of date. It's much easier to find information about Bonfire Night displays from 2018 and before than about this year's, but that isn't of much use for those of us without time machines.

Don't get me wrong: I haven't forgotten what life online was like before Google. Hotmail, Yahoo and Ask Jeeves all pale in functionality and utility compared to Gmail and Google Search. But that leap forward hasn't been maintained. The web isn't what it was back in 1997 when Google Search launched, and plenty of that change and added complexity is down to Google itself. Back then, it was easy to dig out the link to the council's web page about Bonfire Night, but now that same system must also define Bonfire Night, sort through the many SEO-designed pages gaming the system and sift through social posts. Google's search algorithm is creaking under the weight.

All of this is important. Under its wider Alphabet umbrella company, Google is building driverless cars, with Waymo running trials in Arizona. In Toronto, its subsidiary Sidewalk Labs is designing a new neighbourhood from the ground up as a smart city lab. It bought British machine-learning startup DeepMind to expand into AI healthcare and Nest to shift into smart homes. And that's just Google. Algorithms are slipping into our homes via voice-activated assistants, into our cities via automated traffic lights and dynamic transport timetables, and touching every aspect of our lives. Your bank processes your salary via algorithms. Your medical scans are examined by machine-learning systems.

Many of those algorithms aren't fit for purpose. Others may be today, but won't be in the near future. They must learn how to evolve with change and spot the erosion of data quality. We hear a lot about avoiding bias in algorithms and AI, and that's vital, but we also need to make sure systems actually work – and keep working.

After all, if I can't trust Google's famously powerful search algorithm to find such basic information, why would I trust its systems to drive me, organise my city, or keep me healthy?

Freelance journalist Nicole Kobie first started writing for ITPro in 2007, with bylines in New Scientist, Wired, PC Pro and many more.

Nicole is the author of a book about the history of technology, The Long History of the Future.

-

UK firms ponder offshoring AI workloads as energy costs surge

UK firms ponder offshoring AI workloads as energy costs surgeNews A CUDO Compute report confirms power prices are impacting the ability to rollout AI

-

Cisco is expanding its sovereign infrastructure suite for EMEA customers

Cisco is expanding its sovereign infrastructure suite for EMEA customersNews Customers will get greater choice and control over their data and digital infrastructure, according to Cisco

-

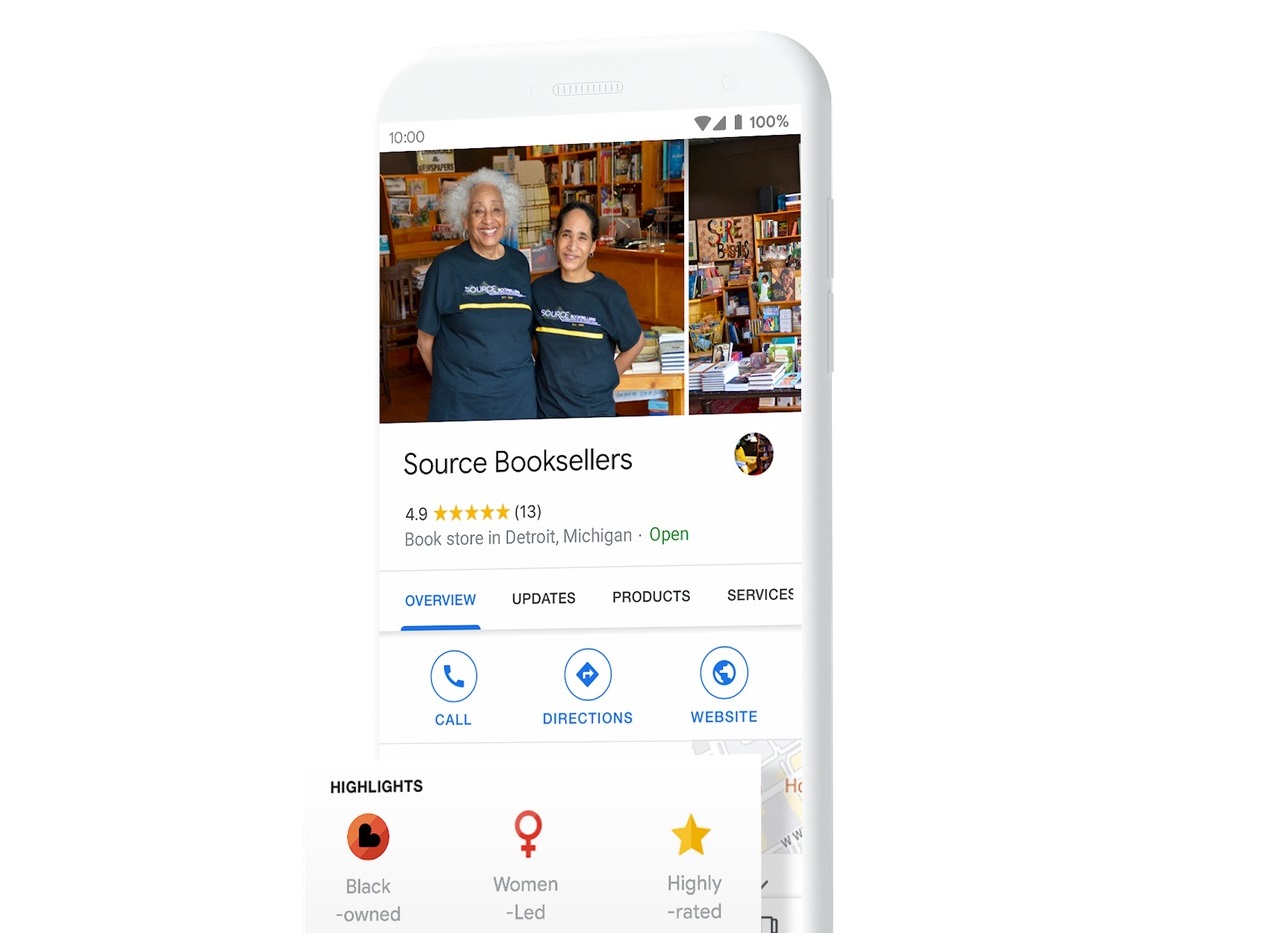

Google expands minority support by labeling Black-owned companies

Google expands minority support by labeling Black-owned companiesNews Gives customers a way to easily find and support Black-owned businesses

-

Fact-check information now added to Google Images

Fact-check information now added to Google ImagesNews Articles with fact-checked images to include a label

-

DuckDuckGo vs. Google: Privacy or popularity?

DuckDuckGo vs. Google: Privacy or popularity?Vs Google may reign as king, but it’s not the only option in the world of search

-

Google warns businesses not to disable their websites

Google warns businesses not to disable their websitesNews Drastic action amid COVID-19 crisis could damage search rankings

-

Google's 'mobilegeddon' update release date arrives

Google's 'mobilegeddon' update release date arrivesNews Google has updated its mobile-friendly algorithm, which will penalise many prominent sites in the process