What is quantum computing?

A revolution in computing is just around the corner - but how do quantum computers work, and what can they help us achieve?



No longer just a futurist’s fantasy, the peculiar and paradoxical physics of quantum computing is far more a part of this world than many would expect.

Quantum computing is one of the fastest-growing areas of the tech industry, with some of the biggest companies investing billions of dollars in the technology. In spite of this, it remains one of the quietest areas of innovation.

The simplest way to picture a quantum computer is as an exceptionally powerful machine that can process immense amounts of data because its construction relies on the principles of quantum mechanics.

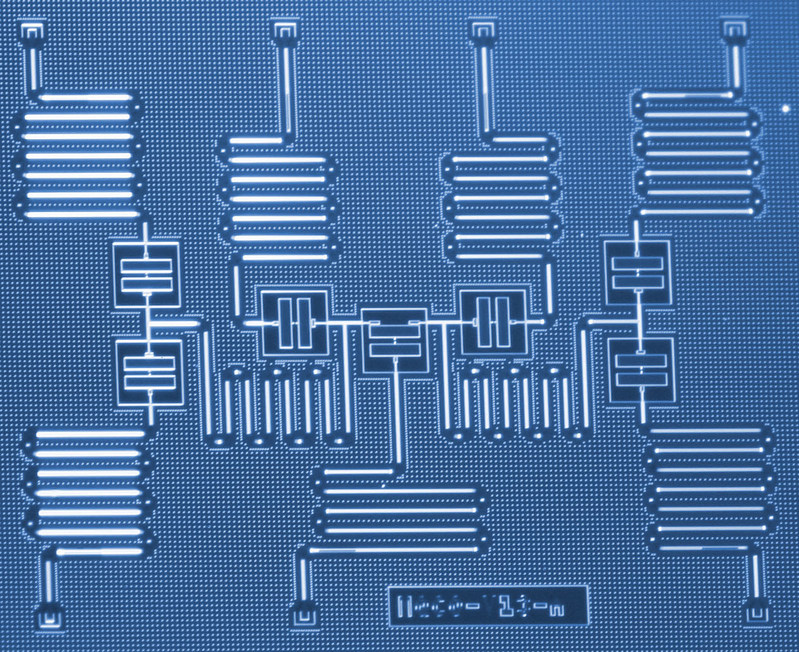

One of the most famous and most powerful quantum computers to date is Google's 72-qubit quantum chip named Bristlecone. Google announced the chip in March 2018, promising that the machine could be used to “solve real-world problems”. However, although impressive, Bristlecone was not quite able to showcase the progress required to beat some of the fastest classical computers.

Nowadays, plenty of tech giants - from IBM to D-Wave - are building chips similar to Bristlecone. These chips aim to serve as models for future quantum computers, which engineers plan to scale up to hundreds of thousands or even millions of qubits. With companies actively working to build computers capable of solving complex problems in seconds, not decades, the technology is progressing all the time.

However, how much do we really know about these mysterious machines? How do they work and what is their role in the technology of tomorrow?

What makes quantum computing different from 'classical' computing?

Quantum computing has a hugely rich history anchored in hardcore theoretical physics; more specifically, quantum mechanics. And after decades of research and development by a succession of physicists and engineers, we now have a handful of relatively small - but promising - machines serving as models for what quantum computers may one day become.


Computers as we know them, from bulky desktop machines to the iPhone X, all work in much the same way, regardless of their power or size. They perform operations by storing information as conventional bits, taking the form of either 0 or 1.

But because calculations are performed linearly - with each possibility explored sequentially - these computers take their time in solving complex mathematical problems. Quantum computers, on the other hand, can perform many calculations simultaneously, and produce results at a much faster rate.

They stand apart from classical computers in that they are predicated on a completely different set of scientific principles: namely, the strange and counterintuitive behaviour of the subatomic world.

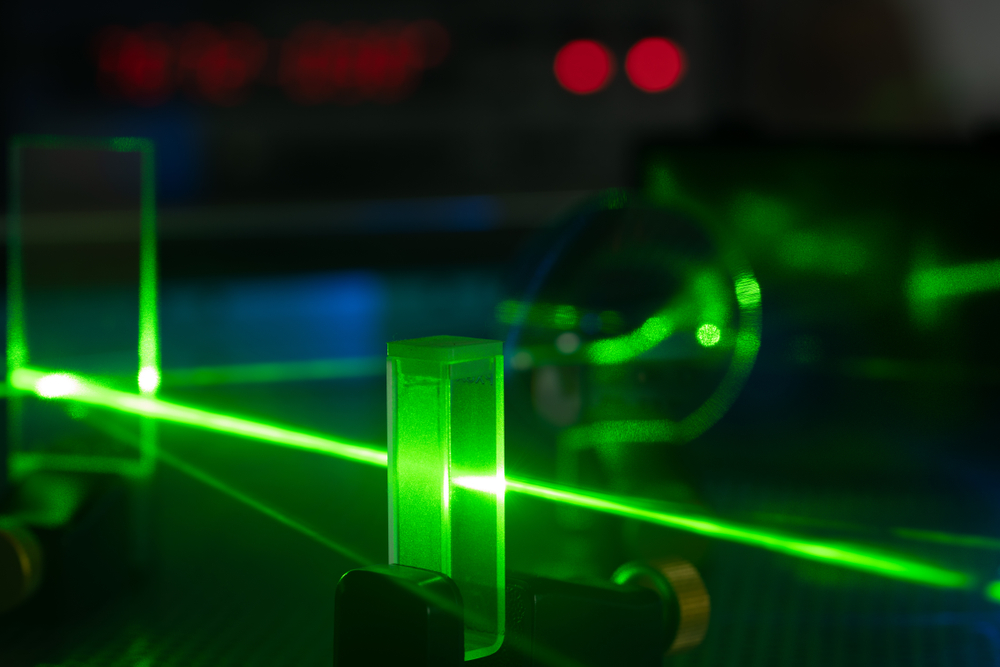

How can subatomic matter, such as photons, behave as both particles and waves - existing in more than one state simultaneously? Add to that the baffling idea that some physical properties of subatomic particles do not take definite values until they are measured. Quantum entanglement, meanwhile, describes how the states of particles can remain linked regardless of how far apart they are.

These principles are hard to bend your mind around because they are fundamentally confusing. Even Nobel Prize-winning physicist Richard Feynman famously remarked: "I think I can safely say that nobody understands quantum mechanics".

Yet regardless of how fuzzy-headed they might make you feel, researchers have endeavoured to create a physical manifestation of these properties in machines we call quantum computers.

How do quantum computers work?

While classical computers work by encoding information into bits, quantum computers run using aptly-named quantum bits or qubits. But there's a big difference between the two.

A bit can be encoded as either 1 or 0, but a qubit can take the form of 1, 0, or what is termed a 'quantum superposition of 1 and 0', in which the qubit exists in a combination of both states simultaneously.

This isn't to say a qubit is both 1 and 0 at the same time - but neither is this wrong. Rather, when the qubit is read out, the superposition collapses and you get either 1 or 0, each with a probability set by the superposition. So far so Primer. But how does this work in practice?
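As a rough illustration of the read-out step, the sketch below treats a qubit as nothing more than a probability of returning 1. It is a classical toy model, not a quantum simulation: the function name `measure`, the fixed seed and the 50/50 split are all assumptions chosen for the example.

```python
import random


def measure(p_one, rng):
    """Simulate one read-out: return 1 with probability p_one, otherwise 0."""
    return 1 if rng.random() < p_one else 0


# Assumed values for illustration: an equal superposition, read out many times.
rng = random.Random(42)   # fixed seed so the run is repeatable
p_one = 0.5               # probability of reading 1
shots = 10_000            # number of repeated read-outs

outcomes = [measure(p_one, rng) for _ in range(shots)]
fraction_of_ones = sum(outcomes) / shots
print(f"fraction of 1s over {shots} read-outs: {fraction_of_ones:.3f}")
```

Each individual read-out is a plain 0 or 1; the probabilities only become visible when the same preparation is measured many times.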

Two bits, when stitched together, can exist as 00, 01, 10 or 11; and only one of these states at any one time. A quantum computer with two qubits, however, can be in a superposition of all four states - 00, 01, 10 and 11 - simultaneously.

When multiple qubits work in tandem to process calculations, the combination of states that can exist at once ramps up exponentially. A machine with three qubits, for instance, can exist in a superposition of eight states and one with four qubits in 16, while a 32-qubit machine can exist in a quantum superposition of nearly 4.3 billion simultaneous states.
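The doubling with every added qubit is simply 2 to the power of the qubit count. The short sketch below lists the two-qubit labels and counts the states for the machine sizes mentioned above; the helper names `basis_states` and `state_count` are made up for this example.

```python
from itertools import product


def basis_states(n_qubits):
    """List every bit-string label an n-qubit register can be read out as."""
    return ["".join(bits) for bits in product("01", repeat=n_qubits)]


def state_count(n_qubits):
    """Number of distinct labels: doubles with each extra qubit."""
    return 2 ** n_qubits


print(basis_states(2))  # ['00', '01', '10', '11']

for n in (2, 3, 4, 32):
    print(f"{n} qubits -> {state_count(n):,} states")
```

For 32 qubits the count is 4,294,967,296, which is where the "nearly 4.3 billion" figure comes from.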

IBM's Dr Talia Gershon illustrates the enhanced capabilities of a quantum computer by comparing it to seating guests around a dinner table: how many ways are there to seat ten people, and what is the best way to arrange them?

While a classical computer will attempt to solve this problem by exploring every possible combination in order, then comparing them, a quantum computer can model all 3.6 million combinations at the same time - and find the best answer almost instantaneously. It's for this reason that quantum computing doesn't just mean building a faster computer - but one that works in a fundamentally different way to a classical computer.
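The 3.6 million figure is 10 factorial, the number of ways to assign ten guests to ten distinct seats. The sketch below shows that count and the classical brute-force approach on a smaller table. The guest names, the `FRIENDS` pairs and the `friendliness` score are hypothetical, invented only to give the search something to optimise.

```python
import math
from itertools import permutations


def count_seatings(n_guests):
    """Ways to place n guests in n distinct seats: n factorial."""
    return math.factorial(n_guests)


# Hypothetical data: pairs of guests who would like to sit side by side.
FRIENDS = {("Ann", "Bob"), ("Cat", "Dan")}


def friendliness(order):
    """Score a seating by counting adjacent pairs who are friends."""
    neighbours = zip(order, order[1:])
    return sum((a, b) in FRIENDS or (b, a) in FRIENDS for a, b in neighbours)


def best_seating(guests, score):
    """Classical brute force: score every ordering one by one, keep the best."""
    return max(permutations(guests), key=score)


print(count_seatings(10))  # 3628800
best = best_seating(["Ann", "Cat", "Bob", "Dan"], friendliness)
print(best, friendliness(best))
```

Four guests mean only 24 orderings to check, but every extra guest multiplies the work, which is why this style of search becomes impractical so quickly.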

What does a quantum computer physically look like, and how is it built?

There's an eerie likeness between the sepia-toned shots of the first classical computers and the first quantum computers engineers have put together today. But under the hood the technological differences are staggering.

A single qubit itself comprises what physicists call a two-level quantum-mechanical system; a single subatomic particle that can shift from a ground state to an excited state when energy is applied.

There are a number of candidates - including a photon, a nucleus, or even an electron - and each will have a 1 and 0 equivalent. The nucleus, for instance, represents 1 or 0 via its magnetic direction: spin-up or spin-down.

In one experiment, scientists used a single phosphorus atom carefully encapsulated within a silicon chip, and fitted next to a tiny transistor, while two years ago MIT experimented with a qubit that relied on shaving an electron off this atom and suspending it in free space using an electromagnetic field.

The electron, to use this example, is first suspended in a strong magnetic field, using a superconducting magnet or a large solenoid, and then cooled down to near-absolute zero. Since at room temperature any particle used as the qubit would be flipping wildly between spin-up and spin-down, or 1 and 0, temperatures of 0.0015 Kelvin (a shade above -273 degrees Celsius) are needed to fix it in either a spin-up or spin-down position.
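To put that temperature in more familiar units, the conversion from Kelvin to Celsius is a fixed offset of 273.15. The helper name below is an assumption for this example.

```python
ABSOLUTE_ZERO_CELSIUS = -273.15  # 0 Kelvin expressed in degrees Celsius


def kelvin_to_celsius(kelvin):
    """Convert a temperature from Kelvin to degrees Celsius."""
    return kelvin + ABSOLUTE_ZERO_CELSIUS


operating_temp_c = kelvin_to_celsius(0.0015)
print(f"{operating_temp_c:.4f} degrees Celsius")
```

The result is roughly -273.1485 degrees Celsius, just fifteen ten-thousandths of a degree above absolute zero.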

Only now, in this context, can energy be applied to the electron - delivered via microwaves resonating with the frequency of the magnetic field - to record information and change it from spin-down to spin-up, or vice versa.

Quantum superposition is achieved by hitting the qubit with a pulse of energy and then stopping, so the electron is somewhere between spin-down and spin-up. The superposition means the qubits can exist in many simultaneous states to process information and perform calculations - with readings then taken from the transistor.

Incidentally, the reason quantum computers are so big, despite for the most part handling tiny, subatomic particles, is the machinery required to reach near-absolute zero - the dilution refrigerator. For this reason, the likelihood of ever building a quantum computer the size of a smartphone is about as slim as it gets.
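One simplified way to picture the pulse is as a rotation of the spin by some angle: a full flip corresponds to an angle of pi, and stopping halfway leaves an equal superposition. The sketch below uses that textbook single-qubit rotation with real amplitudes and ignores phase, noise and the actual pulse hardware, so it is an idealised model rather than a description of any particular experiment. The function names are assumptions.

```python
import math


def amplitudes_after_pulse(theta):
    """Start in spin-down and rotate by angle theta (radians).

    Returns (amplitude_down, amplitude_up) for an idealised, noise-free qubit.
    """
    return math.cos(theta / 2), math.sin(theta / 2)


def readout_probabilities(theta):
    """Probability of reading spin-down and spin-up after the pulse."""
    down, up = amplitudes_after_pulse(theta)
    return down ** 2, up ** 2


for label, theta in (("no pulse", 0.0),
                     ("half pulse", math.pi / 2),
                     ("full pulse", math.pi)):
    p_down, p_up = readout_probabilities(theta)
    print(f"{label}: P(down)={p_down:.2f}, P(up)={p_up:.2f}")
```

In this model the half pulse gives a 50/50 chance of either reading, while the full pulse flips the spin from down to up.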

What can quantum computing help us achieve?

The technology and physics principles needed to run quantum computers mean these machines will never be fitted into day-to-day consumer goods like 2-in-1s, notebooks, or desktop PCs. This doesn't mean that individuals and employees can't access the power they offer.

Tech firms invested in the quantum computing field have been in competition with one another to up the qubit count since day dot. Somewhere along the line, efforts will be siphoned into improving accessibility and practicality, presumably once the technology reaches a stage at which it's far more practical in a business context than it is today.

One clear example of work already being done on this front comes from IBM and its cloud-based platform. The IBM Q Experience gives any individual, no matter how experienced, the scope to experiment and fiddle with an actual quantum computer that's kept in a research facility.

Using a cloud platform, users are given the tools to write their own algorithms and run quantum experiments from home or the office. Once quantum computing can be harnessed in the mainstream, the applications could range from what is termed 'optimisation' to biomedical simulation.

Because quantum computers are essentially geared up to process extremely complex mathematical problems, optimisation is perhaps the most common interaction consumers and the average business user will have with them, via the remote-access model currently employed by IBM, or something similar.

The dinner table example IBM's Dr Talia Gershon used is the perfect example of this kind of problem. Another is the travelling salesman who wishes to find the most efficient route through a string of cities; say, more than 200.
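To see why the travelling salesman problem is so hard for a classical machine, the sketch below solves a tiny instance by brute force and counts how many distinct round trips exist for larger ones. The city names and coordinates are hypothetical, and the round-trip count assumes the salesman returns to the start and that a route and its reverse are the same trip.

```python
import math
from itertools import permutations


def route_length(route, coords):
    """Total length of a closed tour that returns to its starting city."""
    legs = zip(route, route[1:] + route[:1])
    return sum(math.dist(coords[a], coords[b]) for a, b in legs)


def shortest_route(coords):
    """Brute force: fix the first city, try every order of the rest."""
    start, *rest = coords
    candidates = ((start, *perm) for perm in permutations(rest))
    best = min(candidates, key=lambda route: route_length(route, coords))
    return best, route_length(best, coords)


def distinct_round_trips(n_cities):
    """Number of different closed tours through n cities (n >= 3)."""
    return math.factorial(n_cities - 1) // 2


# Hypothetical cities on the corners of a unit square.
cities = {"A": (0, 0), "C": (1, 1), "B": (0, 1), "D": (1, 0)}
best_route, best_length = shortest_route(cities)
print(best_route, best_length)

digits = len(str(distinct_round_trips(200)))
print(f"200 cities: a route count with {digits} digits")
```

Four cities give just three distinct round trips, but the count for 200 cities runs to hundreds of digits, far beyond anything an exhaustive search could cover.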

Further applications may be in mapping how materials behave, as well as training machine learning systems far more effectively than we can right now.

Regardless of the problems they can help us solve, researchers agree quantum computers won't be very effective until these machines can house thousands, or even millions, of qubits - while also maintaining low error rates. IBM has even coined the term 'quantum volume' as a way to judge how effective a quantum chip may be - taking into account a range of factors beyond just the number of qubits.

Although the Bristlecone chip is only 72 qubits in size, it is a considerable step up from where we were just ten years ago - and progress in this field is only expected to accelerate. What makes Google's chip so promising, however, is its low error rate. This is crucial for the prospects of scaling up quantum computers, so it is no wonder that the company sees it as a blueprint for scalable machines.

With efforts across the industry only ramping up, the prospects for building better and more efficient quantum computers in the not-too-distant future remain highly promising.

IBM and quantum computing

IBM is arguably the leader in quantum research. Through its quantum computing division, IBM Q, the company is working to make the technology more widely accessible, and it could shed some light on what the future of quantum computing may hold.

One way it does this is through its Qiskit extensions. The open source program allows researchers and developers to explore quantum computing through Python scripts. You can even collaborate on requests for quantum computing interactions. This means that you don't have to be a physicist to get involved - you can get experience composing programs from your laptop.

In addition to developing quantum computers themselves, IBM is also creating a network of companies and academic institutions in order to spur innovation in quantum computing. The network's aim is to prepare everyone from students to Fortune 500 companies to startups for the rise of this technology. Participants include Honda, Massachusetts Institute of Technology and JP Morgan Chase & Co.

The network has three primary focus areas: accelerate research, develop commercial applications, and educate and prepare. To accelerate research, IBM provides participating organisations with knowledge and tools in order to encourage widespread adoption. In terms of developing commercial applications, organisations have access to the IBM cloud as well as Qiskit extensions, helping them to create their own innovations. To educate and prepare, IBM trains members of participating organisations and provides support.

While commonplace quantum computing might not be around the corner just yet, IBM is working to help the infant industry flourish through accessible education and programming.

Keumars Afifi-Sabet is a writer and editor who specialises in public sector, cyber security, and cloud computing. He first joined ITPro as a staff writer in April 2018 and eventually became its Features Editor. Although a regular contributor to other tech sites in the past, these days you will find Keumars on LiveScience, where he runs its Technology section.
