EU puts human rights at the heart of tougher tech export rules

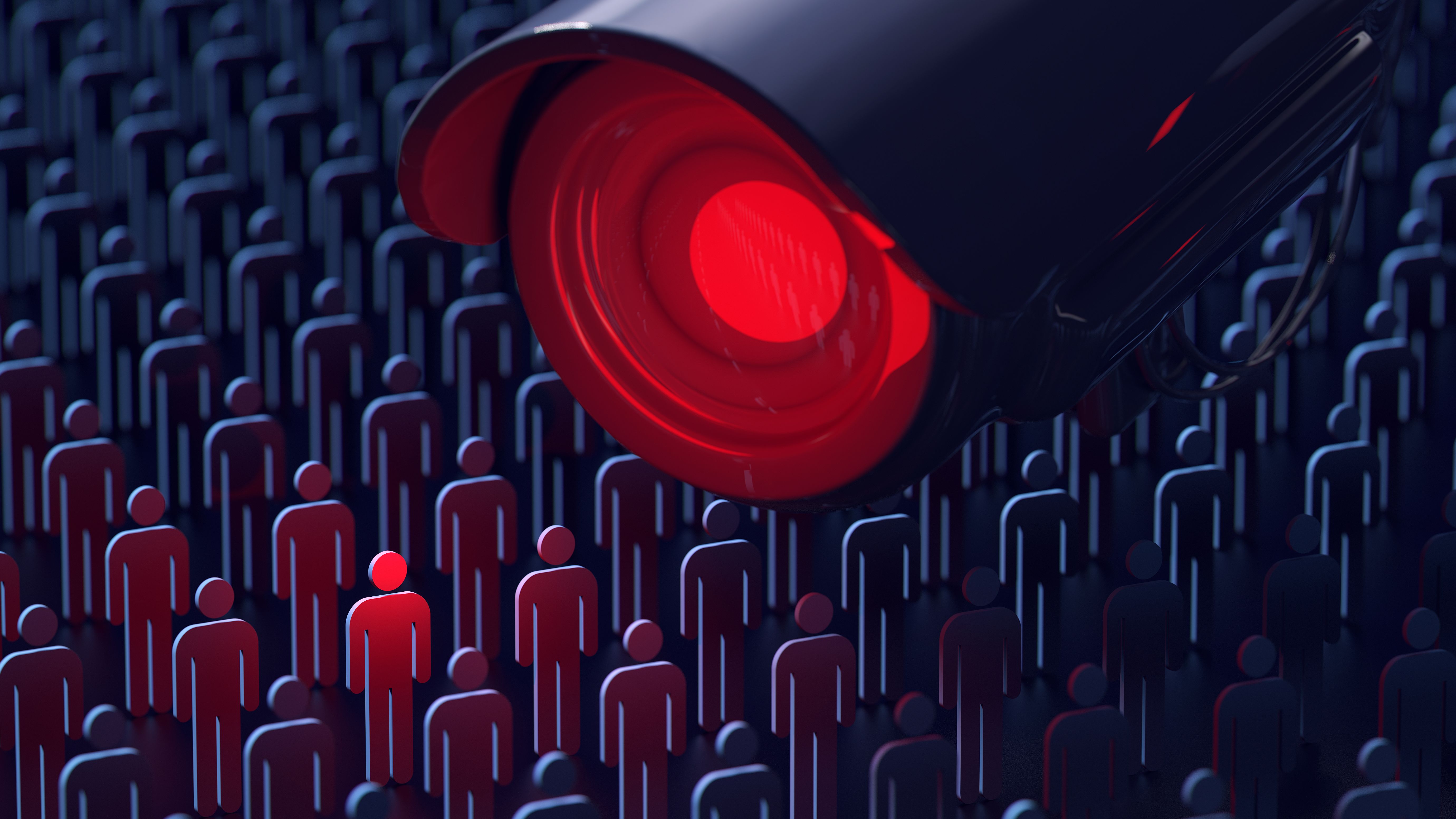

Lawmakers tighten up export controls, meaning technologies such as facial recognition and spyware face a higher bar when being sold

Europe-based technology vendors must apply for government licenses to export certain ‘dual-use’ products and consider whether the use of their products in any deal poses a risk to human rights.

New rules established by EU lawmakers and the European Council beef up the export criteria for so-called ‘dual-use’ technologies, meaning vendors will have to clear a much higher bar when striking licensing deals.

These technologies include high-performance computing (HPC), drones, and software such as facial recognition and spyware, spanning systems with civilian applications that can be repurposed for nefarious ends. They also include certain chemicals.

The beefier update to existing controls includes new criteria to grant or reject export licenses for certain items, including whether or not the technologies will be used in potential human rights abuses.

The regulation includes guidance for EU nations to “consider the risk of use in connection with internal repression or the commission of serious violations of international human rights and international humanitarian law”. Member states must also be more transparent by publicly disclosing details about the export licenses they grant.

The rules can be swiftly changed to cover emerging technologies, and an EU-wide agreement has also been reached on controlling cyber surveillance products not listed as ‘dual-use’, in order to better safeguard human rights.

“Parliament’s perseverance and assertiveness against a blockade by some member states has paid off: respect for human rights will become an export standard,” said German MEP Bernd Lange. “The revised regulation updates European export controls and adapts to technological progress, new security risks and information on human rights violations.

“This new regulation, in addition to the one on conflict minerals and a future supply chain law, shows that we can shape globalisation according to a clear set of values and binding rules to protect human and labour rights and the environment. This must be the blueprint for future rule-based trade policy.”

Technologies such as facial recognition have attracted major opposition due to the way they’re being used by law enforcement agencies. In light of the Black Lives Matter movement, for instance, a swathe of technology vendors announced they’d be curbing their own facial recognition projects due to the public backlash.

Keumars Afifi-Sabet is a writer and editor who specialises in public sector, cyber security, and cloud computing. He first joined ITPro as a staff writer in April 2018 and eventually became its Features Editor. Although a regular contributor to other tech sites in the past, these days you will find Keumars on LiveScience, where he runs its Technology section.
