Half of agentic AI projects are still stuck at the pilot stage – but that’s not stopping enterprises from ramping up investment

Organizations are stymied by issues with security, privacy, and compliance, as well as the technical challenges of managing agents at scale

Agentic AI projects are failing to get past the pilot stage because enterprises can't govern, validate, or safely scale them.

Adoption of this latest iteration of the technology is still in its early stages but is growing rapidly, a Dynatrace survey of 919 senior global leaders has revealed, with 26% of organizations running 11 or more projects.

Organizations expect the biggest return on investment (ROI) from agentic AI in system monitoring (44%), cybersecurity (27%) and data processing (25%), and three-quarters are expecting an increase in their AI budget next year.

However, this may all be wishful thinking. Around half of these projects are stuck at the proof-of-concept (PoC) stage.

The main barriers to full implementation, respondents said, are concerns with security, privacy, or compliance, cited by 52%, followed by technical challenges to managing agents at scale, at 51%.

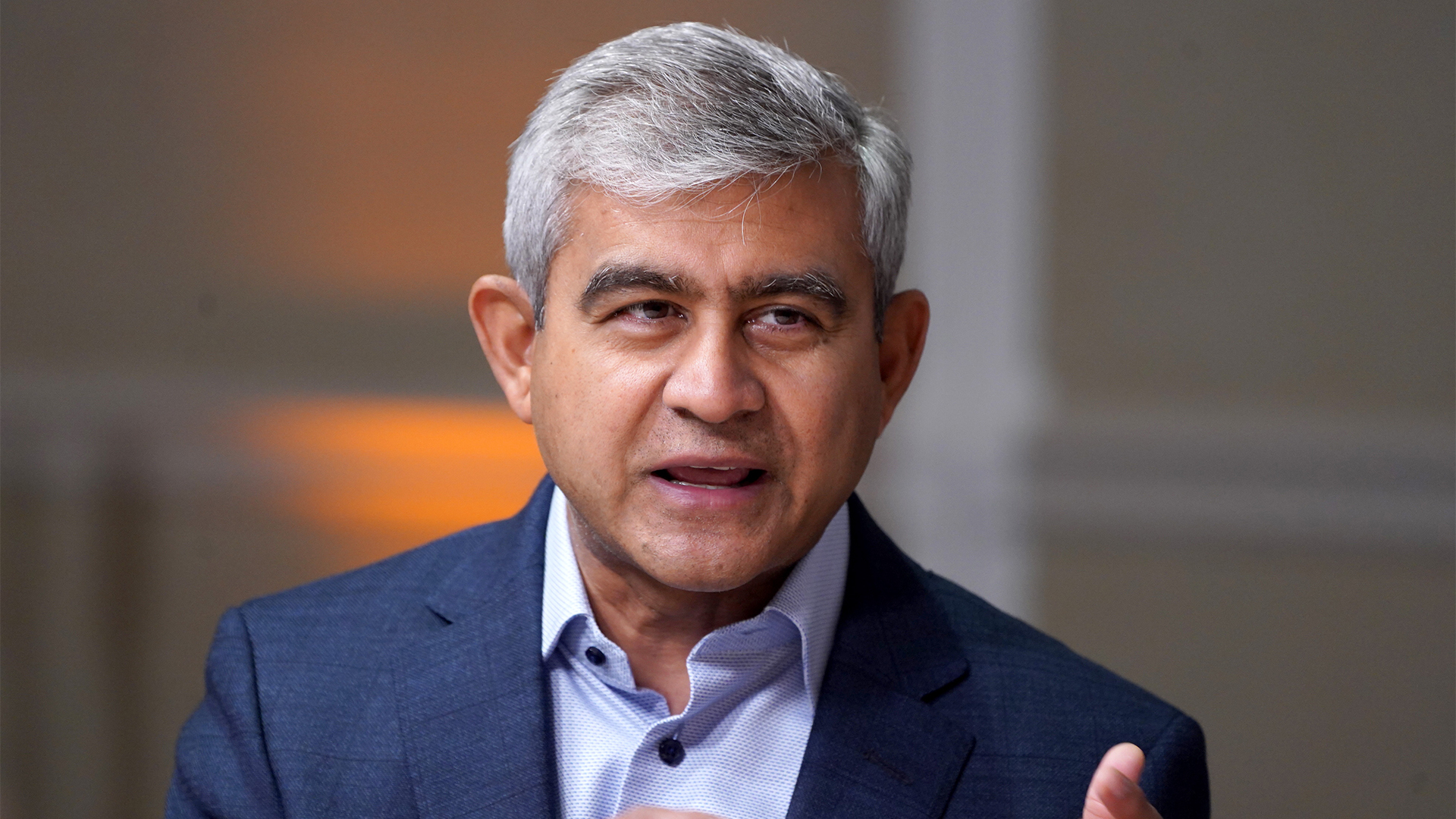

“Organizations are not slowing adoption because they question the value of AI, but because scaling autonomous systems safely requires confidence that those systems will behave reliably and as intended in real-world conditions,” said Alois Reitbauer, chief technology strategist at Dynatrace.

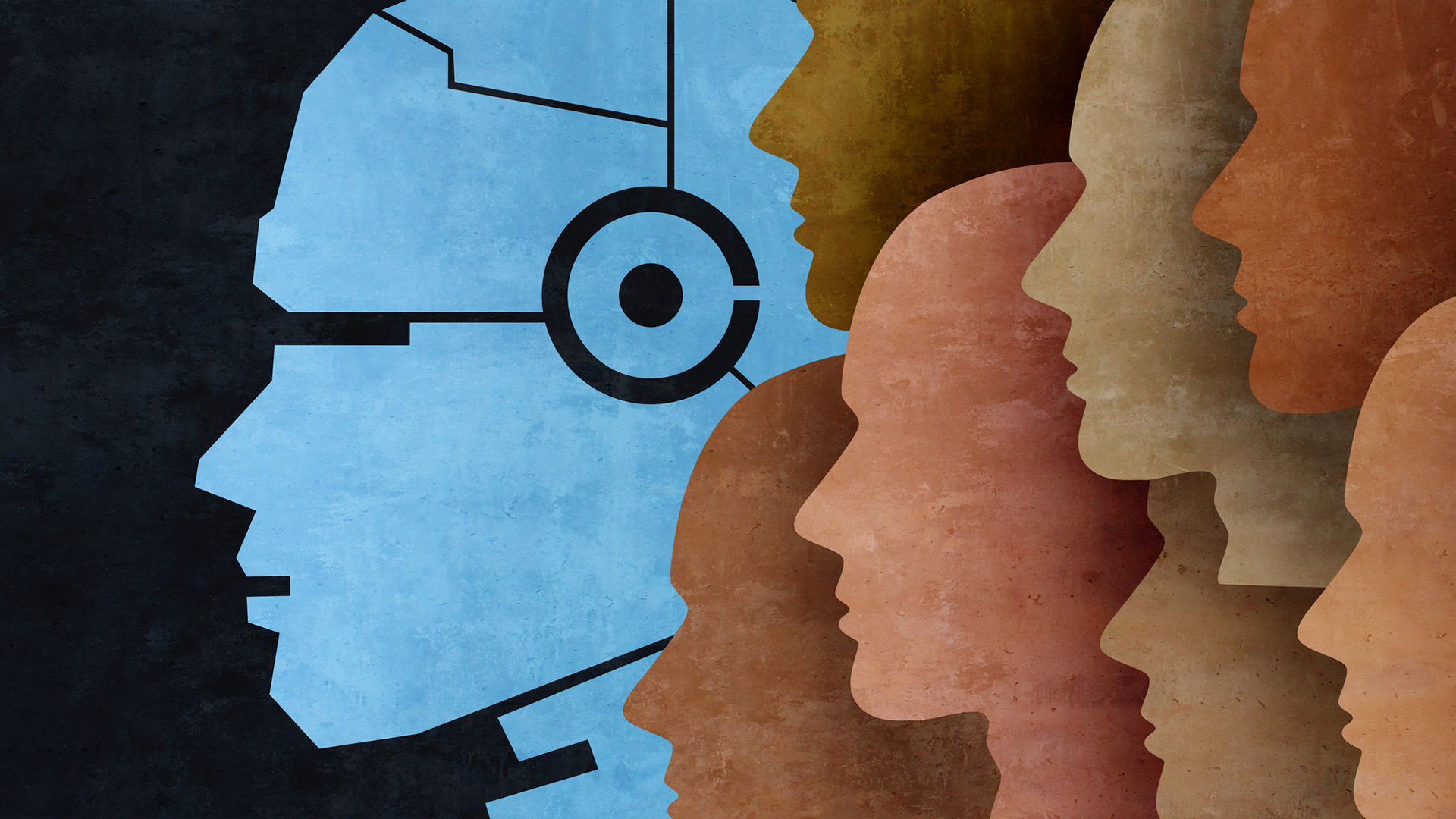

Seven-in-ten agentic AI–powered decisions are still verified by humans, and 87% of organizations are actively building or deploying agents that require human supervision.


Leaders said they expected a 50-50 human–AI collaboration for IT and routine customer support applications, and a 60-40 human–AI level of collaboration for business applications.

“With most enterprises now spending millions of dollars annually and planning further budget increases, agentic AI is becoming a core part of digital operations. At the same time, the data shows a clear shift underway,” said Reitbauer.

“While human oversight remains essential today, organizations are increasingly preparing for more autonomous, AI-driven decision-making. The focus is now on building the trust and operational reliability needed to scale agentic AI responsibly.”

Observability is a problem with agentic AI

A recurring pain point for enterprises tinkering with agentic AI tools lies in observability, according to Dynatrace. Observability of these autonomous systems is needed across every stage of the life cycle, from development and implementation through to operationalization.

Observability is most commonly used during implementation, at 69%, followed by operationalization at 57% and development at 54%.

“Observability is a vital component of a successful agentic AI strategy. As organizations push toward greater autonomy, they need real-time visibility into how AI agents behave, interact, and make decisions,” Reitbauer said.

“Observability not only helps teams understand performance and outcomes, but it provides the transparency and confidence required to scale agentic AI responsibly and with appropriate oversight.”

AI pilot purgatory is a recurring theme amongst executives. A study from Informatica last year revealed that fewer than two-fifths of AI projects have successfully made it into production, while two-thirds of firms have seen fewer than half of their pilot schemes make it into the real world.

More than four-in-ten cited a lack of data quality and readiness as the biggest obstacle to reaching production, while 43% also blamed a lack of technical maturity.


Emma Woollacott is a freelance journalist writing for publications including the BBC, Private Eye, Forbes, Raconteur and specialist technology titles.
