OpenAI takes on Midjourney with DALL-E 3 image generation enhanced by ChatGPT

As AI images become more lifelike, OpenAI claims it is working to curb misuse

OpenAI has announced an updated version of its AI image generation model DALL-E, which aims to compete with some of the most detailed image models available and will be built into ChatGPT.

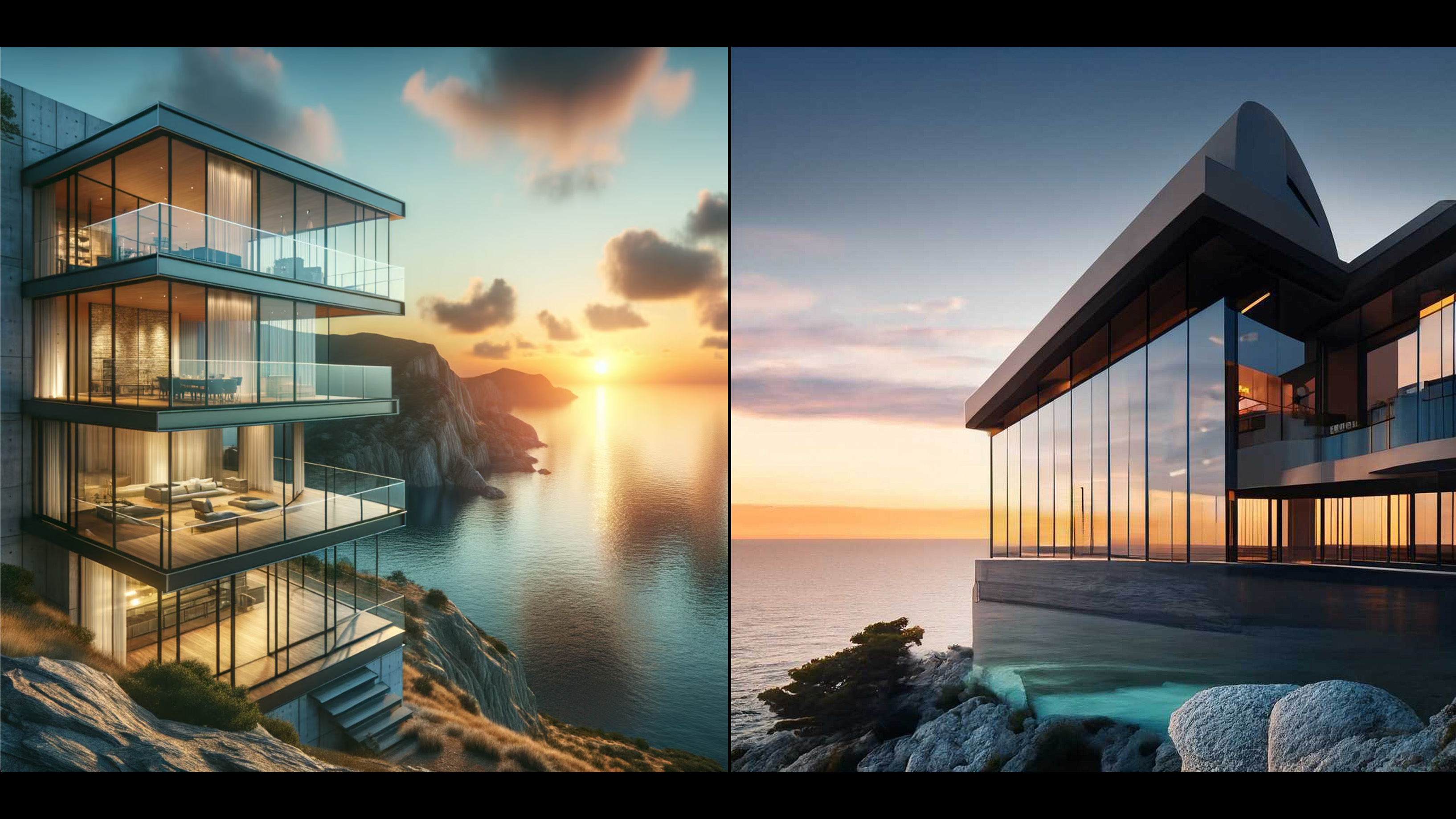

With DALL-E 3, OpenAI has promised its most capable image generation model yet, producing more detailed and photorealistic outputs than its predecessor, DALL-E 2.

The improvements put the model head to head with Midjourney 5 and Firefly, Adobe’s image generation model for enterprise.

The model will be available directly within ChatGPT, and the chatbot will be able to write detailed prompts for the image model so that its output more closely matches what users are looking for.

Generative AI text-to-image models generally produce more impressive results when fed long inputs of text descriptors, produced through a careful trial-and-error process called prompt engineering.

The ability to generate these prompts directly within ChatGPT could let less-skilled users create complex images easily, help businesses keep images in line with their style guides, and reduce the chance of the model producing confusing or unwanted content.

“Modern text-to-image systems have a tendency to ignore words or descriptions, forcing users to learn prompt engineering,” wrote OpenAI.

“DALL-E 3 represents a leap forward in our ability to generate images that exactly adhere to the text you provide.”

DALL-E 3 output (left) and an Adobe Firefly output (right) for the prompt "A modern architectural building with large glass windows, situated on a cliff overlooking a serene ocean at sunset".

Pairing DALL-E 3 with the familiar ChatGPT interface could also make the model usable without any training. OpenAI hopes that this, combined with the new, more powerful model, could give DALL-E 3 an edge over competitors.

It will be made available to ChatGPT Plus and Enterprise customers in October, and to developers via OpenAI’s API later in the fall.

Analysis: Where is AI image generation headed?

Rory Bathgate covers artificial intelligence for ITPro, and has written extensively on the topic of generative AI since the technology's steep rise over the past year.

The first-generation DALL-E model was released in January 2021 and offered limited image generation, with outputs that could readily be identified as computer-generated.

In the nearly three years since, the quality of AI images has dramatically improved and tools for generating AI images have become easier to access and more widely used by businesses.

At Appian World 2023, Appian used Midjourney for every image in its opening keynote. Despite the advances that made this possible, Malcolm Ross, director and SVP of product strategy, told ITPro that the final images shown to the audience were the product of a lengthy back-and-forth of prompt engineering, the kind of step OpenAI is attempting to eliminate.

These advances have not been without controversy. Many artists are concerned about the impact of AI on their work, particularly those with a signature style that AI models can replicate on request.

To combat this, OpenAI stated that DALL-E 3 will reject prompts that specifically ask for an image in the style of a living artist, and the firm has provided an opt-out form for artists who don’t want their work to be used to train future image generation models.

It is not clear whether redress will be offered in future to artists whose work has already been used to train models such as DALL-E 3. AI developers can struggle to remove the influence of specific training data from a model, even in cases where the relevant model weights can be identified.

In theory, this could allow workarounds in which users effectively generate images in the style of any artist already present in the training data, using carefully curated terms that evoke the artist’s craft without naming them.

The EU’s AI Act contains provisions that would compel developers to reveal copyrighted content they used to train generative AI models, and OpenAI itself is currently being sued by a number of authors who allege that ChatGPT was trained on their works without consent.

AI developers will be subject to a complex legal regime in the coming months and years, and text-to-image models such as DALL-E 3 will face close scrutiny.

OpenAI said that it is working on a “provenance classifier” so that images made with DALL-E 3 can be easily identified as artificial, and that it has put in place mitigations to prevent users from generating images of public figures.

Developers across the industry are working on tools that can accurately detect AI-generated content such as text, images, audio, and video, amid fears that such content could otherwise be misused.

Mandiant researchers recently warned that generative AI could fuel a new wave of malicious information campaigns, and AI images of public figures such as political leaders have already been used for small-scale disinformation campaigns on social media.

Rory Bathgate is Features and Multimedia Editor at ITPro, overseeing all in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

In his free time, Rory enjoys photography, video editing, and good science fiction. After graduating from the University of Kent with a BA in English and American Literature, Rory undertook an MA in Eighteenth-Century Studies at King’s College London. He joined ITPro in 2022 as a graduate, following four years in student journalism. You can contact Rory at rory.bathgate@futurenet.com or on LinkedIn.