The history of search engines

From a CERN list to Google and now Wolfram Alpha, the online search engine market has seen much innovation in its short history.

The launch of Wolfram Alpha this month has some wondering if we're moving into a new age of search. We certainly could be, but it's unlikely it'll be a Google-free world.

Indeed, this latest development, cool as it is, continues a long string of innovation the sector has seen since the birth of the web. Wolfram Alpha is likely to fill one niche area of the market, while other innovations step up to solve other problems. That may still leave Google holding the bulk of the market, but at the very least it's encouraging that so many are continuing to develop new ideas despite such a near monopoly.

How search works

Every search engine is a bit different, much to the annoyance of search engine optimisers the world over. That said, search engines basically send out a robot, or spider, across the web to follow each and every link. The service then indexes what it finds, with different engines storing different bits: Google, for example, stores everything found in the source of the page, while others just look at what's displayed.
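To make the crawl-then-index idea concrete, here is a minimal sketch in Python. It is not how any particular engine works: the three-page "web" (`TOY_WEB`), its `.example` addresses and the function names are all made up for illustration, and the pages live in memory so no real network requests are made.

```python
from collections import deque

# Hypothetical three-page "web": address -> (visible text, outgoing links).
TOY_WEB = {
    "a.example": ("search engines crawl the web", ["b.example", "c.example"]),
    "b.example": ("spiders follow every link", ["c.example"]),
    "c.example": ("an index makes the web searchable", ["a.example"]),
}


def crawl_and_index(start, web):
    """Follow links breadth-first from `start` and build an inverted index."""
    index = {}              # word -> set of addresses containing that word
    seen = {start}          # pages already queued, so none is visited twice
    queue = deque([start])
    while queue:
        url = queue.popleft()
        text, links = web[url]
        # Index every word that appears on the page.
        for word in text.lower().split():
            index.setdefault(word, set()).add(url)
        # Queue each link that has not been seen yet.
        for link in links:
            if link in web and link not in seen:
                seen.add(link)
                queue.append(link)
    return index


def search(index, query):
    """Return the addresses of pages containing every word in `query`."""
    words = query.lower().split()
    if not words:
        return set()
    results = index.get(words[0], set()).copy()
    for word in words[1:]:
        results &= index.get(word, set())
    return results


index = crawl_and_index("a.example", TOY_WEB)
print(sorted(search(index, "the web")))
```

A real spider would also have to fetch pages over the network, parse HTML and respect each site's crawling rules; this sketch only shows the follow-links-and-index loop described above.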

When a user searches all these indexed pages, relevancy comes into play. Google's PageRank is the most famous algorithm, but every engine has its own way of finding the best results.
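As a rough illustration of link-based relevancy, the sketch below runs the simplified, textbook form of PageRank over a tiny made-up link graph. It is an assumption-laden toy, not Google's production algorithm: the `LINKS` graph, the function name and the iteration count are invented for the example, and the damping factor of 0.85 is simply the value commonly quoted for the original formulation.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of scores.

    `links` maps each page to the list of pages it links to. Every link
    target is assumed to appear as a key in `links` as well.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page gets a small baseline share regardless of links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            # A page with no outgoing links spreads its score over all pages.
            targets = outgoing if outgoing else pages
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical link graph: page -> pages it links to.
LINKS = {
    "a.example": ["b.example", "c.example"],
    "b.example": ["c.example"],
    "c.example": ["a.example"],
}

ranks = pagerank(LINKS)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this toy graph the page with the most incoming links ends up with the highest score, which is the intuition behind ranking by links rather than by keywords alone.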

But it's not just the web that's searched. Wolfram Alpha is promising to return answers, not just documents, from across the web, while many other searches look at more than the web or even limit themselves to specific niche areas of it. Consider the Pirate Bay. The now-notorious site offers a search of BitTorrent files, a specific file type that is key to its users. Or consider Google Maps: it searches for anything that can be geographically pinpointed. Even services like Twitter are changing the way search works, by letting us search what people are tweeting about.

Before the web

Quite simply, the world didn't need search in the early days. In the beginning, all web servers were listed on a CERN website that was edited by a man then known as Tim Berners-Lee (those were the days before he was knighted).

Once that list became unwieldy, a search tool dubbed Archie (that's 'Archive' without the 'v') searched via a database of web servers. Soon after, Gopher's rise led to a pair of new search tools, comically dubbed Veronica and Jughead, which searched using file names and menu titles.

It wasn't until 1993 that the first robot came about. It was called the World Wide Web Wanderer, but it was for measuring the web, not searching it.

Modern search kicked off in December of that year with JumpStation, which used a robot to crawl the web, index it and make it searchable: the three key aspects of modern, or at least current, search. JumpStation was limited to titles, but another system called WebCrawler took it a step further the next year, managing to search full text.

Going commercial

Lycos kicked off the money making in 1994. The Carnegie Mellon project not only robotically indexed every word on a page for searching, but it was also used by the public and went commercial.

But it had competition. Among the pack that emerged over the next few years were Excite, Magellan and Infoseek, in addition to Altavista and Yahoo. Perhaps surprisingly now, at the time Yahoo didn't search via full pages and keywords, but instead used a web directory system.

By 1996, dominant browser Netscape was struggling to keep things fair, so for a fee of $5 million it let search engines buy the search spot on the Netscape page in rotation.

But the search engine market was set for a shakeup. In that same year, Larry Page and Sergey Brin teamed up at Stanford University to develop a search engine based on relevancy, initially dubbing it BackRub. Two years later, Google was incorporated as a company with investment of $1 million; a year later, they had $25 million to play with.

Google time

In 2000, the market as we know it now started to take shape. The dot-com bust took down some, but Google's PageRank bumped it into the limelight, and it started offering advertising that year.

Freelance journalist Nicole Kobie first started writing for ITPro in 2007, with bylines in New Scientist, Wired, PC Pro and many more.

Nicole is the author of a book about the history of technology, The Long History of the Future.

-

Developers warned to avoid 'early-access' Google Gemini tools

Developers warned to avoid 'early-access' Google Gemini toolsNews Attackers are tempting would-be users into downloading reverse shell malware

-

Researchers warn millions of RDP and VNC servers are wide open to exploitation

Researchers warn millions of RDP and VNC servers are wide open to exploitationNews Researchers at Forescout spotted millions of RDP and VNC servers exposed online

-

Google looks to shake up the way the tech industry classifies skin tones

Google looks to shake up the way the tech industry classifies skin tonesNews The tech giant is pursuing better ways to test for racial bias in tech products

-

DuckDuckGo vs. Google: Privacy or popularity?

DuckDuckGo vs. Google: Privacy or popularity?Vs Google may reign as king, but it’s not the only option in the world of search

-

What is the semantic web?

What is the semantic web?In-depth The semantic web is another idea from the inventor of the web, but what does it mean for the rest of us?

-

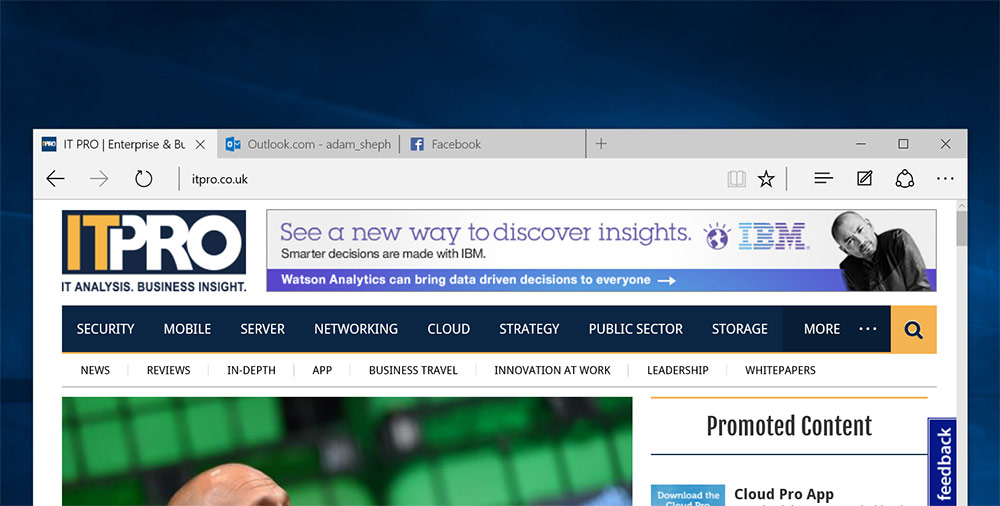

How to change your search engine in Microsoft Edge

How to change your search engine in Microsoft EdgeTutorials If you'd rather search through Google than Bing, here's how to change your default search provider in Windows 10's new browser

-

Google's top 2014 search trends revealed

Google's top 2014 search trends revealedNews Google 2014 trends have been unveiled, and include the year’s biggest sporting events, tech releases, cat stats and more

-

Google right to be forgotten rule extends to Bing & Yahoo

Google right to be forgotten rule extends to Bing & YahooNews The EU’s controversial right to be forgotten ruling against Google will now also apply to Yahoo and Bing

-

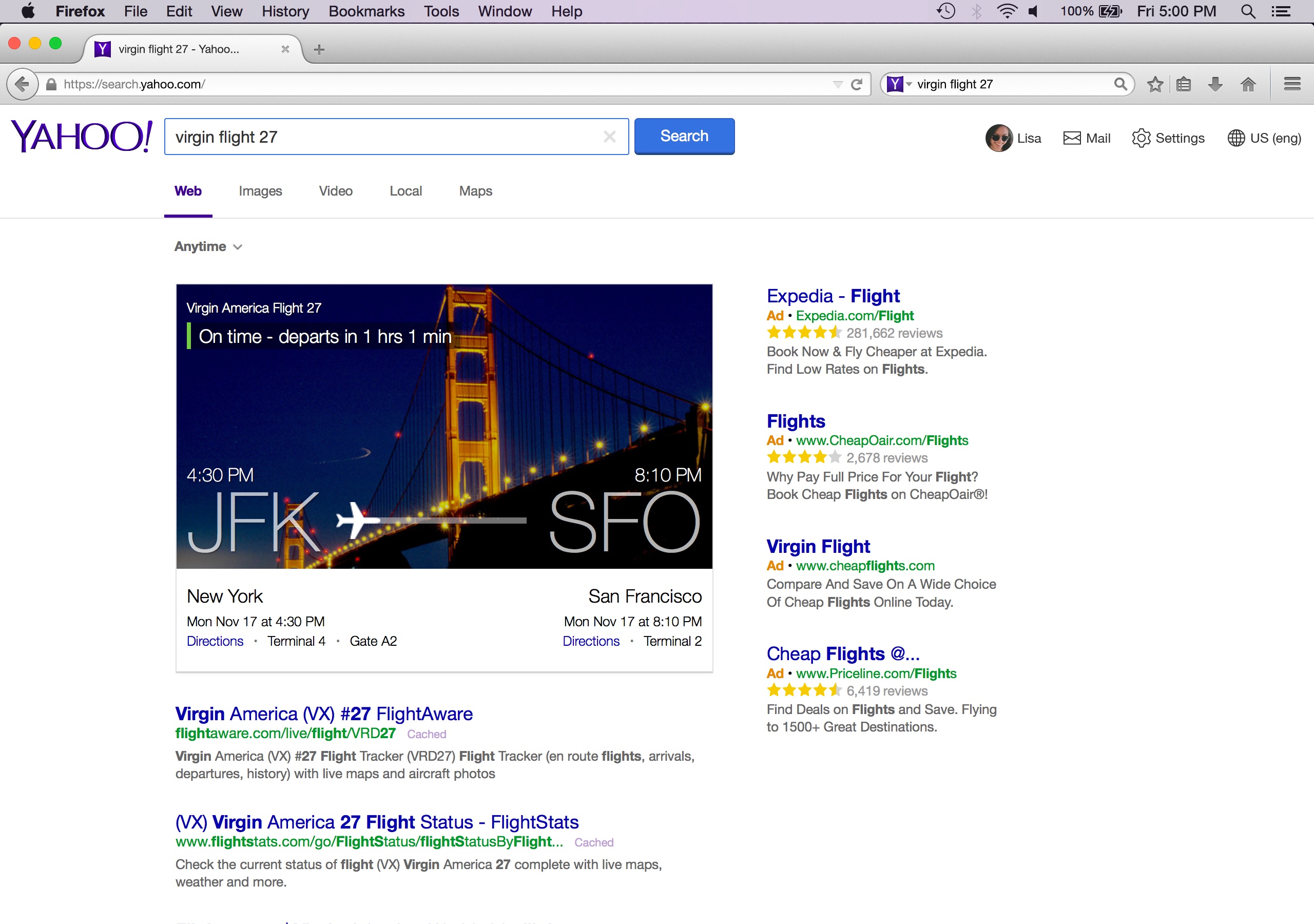

Firefox to switch default search engine from Google to Yahoo

Firefox to switch default search engine from Google to YahooNews Mozilla signs five-year agreement with Yahoo

-

Google declares Amazon its biggest search rival

Google declares Amazon its biggest search rivalNews Google has dubbed Amazon its biggest rival above other traditional search engine companies