The long goodnight: what happens when a supercomputer becomes obsolete?

With the number of supercomputer and AI data centers mushrooming around the world, what happens when these behemoths reach the end of their lives?

E-waste has been a problem when it comes to office and consumer devices for over 20 years, with concerns about the volume of defunct electronics being sent to landfill raised as early as 1999.

This is all a valid concern. According to the United Nations Institute for Training and Research (Unitar), 62 million tonnes of e-waste was produced in 2022. That was 82% more than just 12 years before and the figure is projected to reach 82 million tonnes by 2030 – a further increase of 32%.

While this is primarily an office IT and consumer device issue, supercomputers also make a contribution – and the number of supercomputers in operation is growing.

The pace of innovation in the technology used by supercomputers is accelerating and the workloads they’re being used for are evolving. While high-performance computing (HPC) and parallel processing were once the norm, tasks like running AI-enabled digital twins or researching frontier energy technologies are increasingly common.

Concerns are already growing over the amount of water and energy consumed by supercomputers. Increased e-waste heading from these facilities to landfill could raise further questions about the benefits of the technology versus the potential environmental damage it causes.

The diminishing life expectancy of a supercomputer

“Modern supercomputers typically have a five-year lifecycle, driven by rapid advances in processor, interconnect, and cooling technologies,” says Forrester VP principal analyst Charlie Dai. “After installation, performance leadership erodes quickly and planning for replacement begins almost immediately, and energy efficiency and AI workloads further compress refresh cycles.”

This is roughly half the lifecycle of supercomputers that came online in the mid- and late-20th Century. One of the earliest supercomputers, Titan, was up and running for nine years at the University of Cambridge Mathematical Laboratory before being retired in 1973.

By contrast, the US Department of Energy’s (DoE) Summit supercomputer came online in June 2018 and immediately took the title of the fastest supercomputer in the world in the Top500 rankings. A little over six years later, in November 2024, it was decommissioned.

As Dai explains: “Competitive pressure and scientific demand for AI-driven simulations accelerate this trend, creating a near-continuous upgrade cycle.”

The role of CPUs and GPUs

Simon McIntosh-Smith, professor of High Performance Computing at the University of Bristol and director of the Bristol Centre for Supercomputing, knows more about this than most.

The facility has housed four supercomputers since 2018, two of which – Isambard 1 and Isambard 2 – have been retired. Isambard 3, which came online in December 2024, is one of the most powerful CPU-based supercomputers, while Isambard-AI is the most powerful supercomputer in the UK.

As McIntosh-Smith explains, when the plans for Isambard 1 were originally laid out he and his team anticipated it would last a fraction of the time the supercomputers of the past had.

“If you go back ten years, when we wrote the proposal for Isambard 1 … you would replace supercomputers roughly every three years or so, because in that time the technology moved on so far, you were massively uncompetitive after three years,” McIntosh-Smith explains. “You would be better off deploying your key resources – your space and your power – with the latest technology that’s moved on a long way in three years.”

However, during that time an unexpected change took place that challenged one of the fundamental ‘rules’ of computing.

“If you use the data from the Top500, the computers are actually lasting longer and longer because they're big, expensive things, and in fact Moore's Law has slowed down a little bit,” says McIntosh-Smith.

“All that means that after three years, now your computer's actually still very competitive,” he explains. “If you look at the average age of big computers in the Top500 they're now not three years. They're sort of five years, or even six years.”

Mark Parsons, associate dean for e-research at the University of Edinburgh and director of its supercomputing center, EPCC, has a similar viewpoint.

“The time between generations of processors, be they CPUs or GPUs, has definitely accelerated,” says Parsons. “The cadence used to be maybe two years, and it's more often than not one year nowadays, so the next best processors are just appearing much, much faster.

“But,” he adds, “I don't think that leads to a shorter lifetime of supercomputers.”

The second life of supercomputer hardware

Whether a supercomputer is CPU-driven and lasting longer or GPU-driven and turning over hardware much faster, they all reach the end of the road eventually. What happens next depends somewhat on where the facility is and who it’s run by.

The life-extension phenomenon outlined by McIntosh-Smith only applies to what he describes as “traditional supercomputers” – ones that are driven by CPUs rather than GPUs and make up the majority of the Top500.

“Hyperscalers are doing the exact opposite,” McIntosh-Smith continues. “They buy billions of dollars' worth of the very latest Nvidia or AMD [chips]. Then, as soon as the next one comes out, they basically retire that and … replace all of it with the very latest Nvidia or AMD.”

This retirement doesn’t mean disposal, however. “They don't throw it away, they move it to a different part of their organization,” he explains. “It goes to all the other stuff. It might actually be running services for their customers and things like that, rather than them using them internally.”

In the case of national supercomputers, Parsons says they have a longer life than it might appear.

“National supercomputing centers [exist] in all countries and at any time a national supercomputing center will generally have a single national supercomputer they own and operate,” explains Parsons.

“In some countries, however, what you find are two or three national supercomputing centers and the government organizes them so that each center will get a new one about every 18 months,” he continues. “There’s always a relatively new capability and the previous center that was in the lead last time is now second in the lead, if you like.”

Dai gives the example of Frontier, the US Department of Energy supercomputer housed at Oak Ridge National Laboratory (ORNL) that is currently ranked as the second most powerful in the world.

When it came online in 2022, Frontier was the world’s first exascale computer, as well as the fastest. In October 2025, however, it was announced Frontier was being replaced by a new supercomputer – Discovery – which is likely to come online some time in 2027.

“‘Replacement’ is partly accurate, but oversimplifies the strategy,” Dai explains. “HPE’s Discovery will succeed Frontier as Oak Ridge’s flagship, yet Frontier will likely remain operational for secondary workloads during transitions.”

“Similarly, Nvidia-backed systems often augment existing clusters for AI-specific tasks,” he adds.

The final mile… and beyond

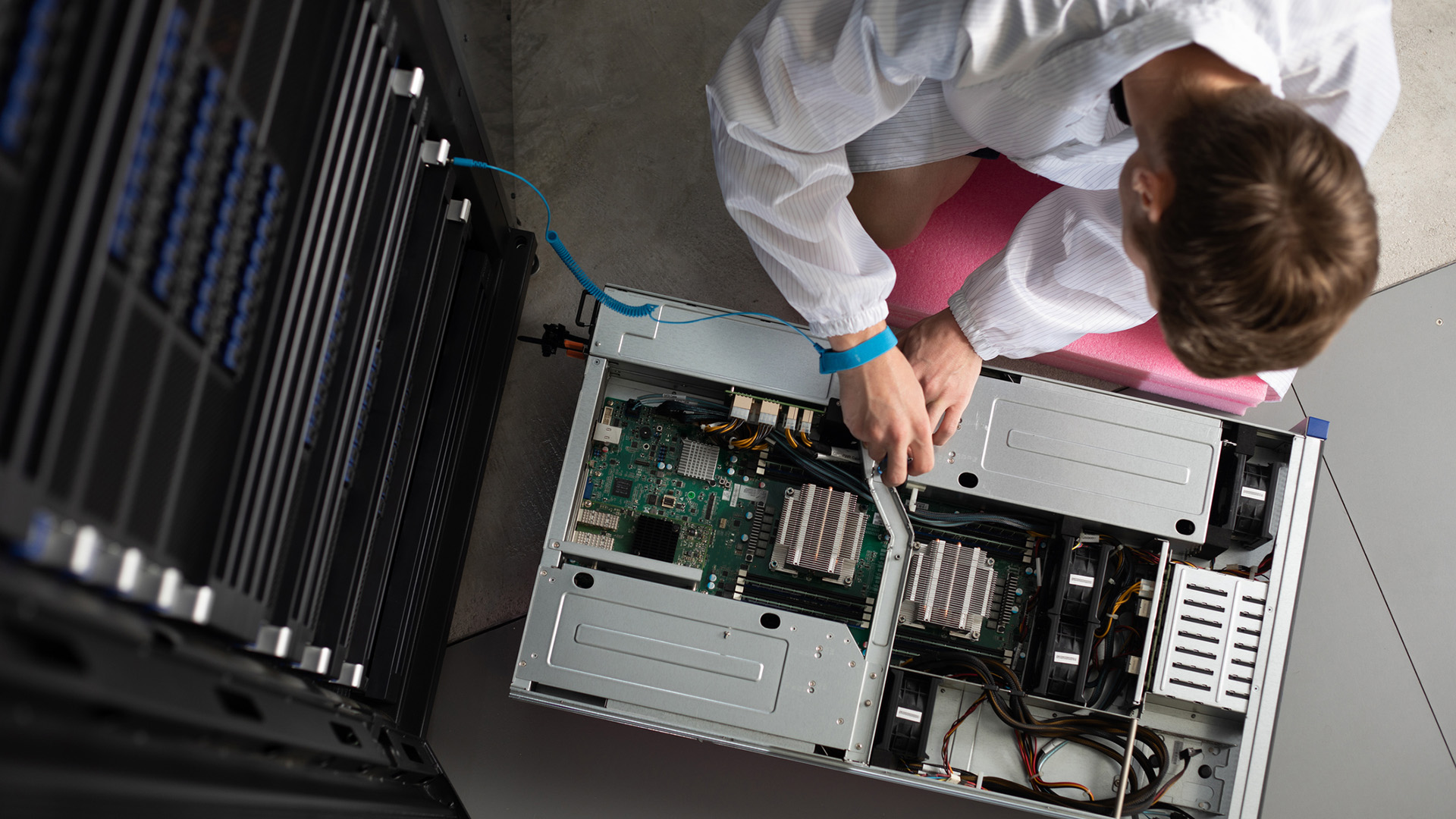

While there’s a level of life-extension that goes on with supercomputers, there will always come a day when the hardware is no longer fit for purpose. When this happens, some supercomputer centers will take charge of disposing of the system. Many, however, will hand the hardware back to the manufacturer.

“Normally, certainly for big systems, the disposal rights vest with the provider of the system,” says Parsons. This can be better value for the supercomputer operator, he says, as often the hardware is made available at a lower price. It also opens up the possibility of keeping the components in circulation for a much longer time.

“If you are going to dispose of it, generally, you would just recycle it using the normal recycling programs for IT equipment,” explains Parsons. “The big companies, though – the HPEs and Dells of this world – have financial services organizations that will buy kit from you. Then they will either recycle it or resell it or use it for maintenance and other systems of that age that are still in operation.”

Two facilities that carry out these recycling activities are HPE’s Technology Renewal Centers, located in Erskine, Scotland, and Andover, Massachusetts.

According to Jim O’Grady, VP of HPE Financial Services Global Asset Management, the company repurposes about 4.3 million assets – both customer owned and leased – between the two sites per year. Of those 4.3 million assets, about half is workplace technology, while the other half is from data centers, which is “highly concentrated in high performance compute product”.

“In general, we repurpose about 83% of all processed assets,” O’Grady says. The remaining 17% is sent for safe and sustainable recycling, with only a small fraction ever ending up as e-waste.

“There are caveats,” he says, “customers at end of use are interested in getting any remaining value from their equipment.” Once the decision is taken to decommission, though, O’Grady says organizations want to “give their assets to a sustainable vendor that will strive to repurpose these assets and minimize e-waste”.

HPE has been recycling materials at the Erskine facility for 15 years, having converted it from a production plant that had been running for several decades prior.

“We’re very proud of what we’ve done, certainly, here in Erskine,” Jackie Rafferty, EMEA delivery manager and technology renewal center operations manager at Erskine, says. “It was a very small community when we first started, but it’s one of the biggest in the world now. We’re really doing some great things for the customer and the environment.”

“I’ve been part of this site for over 38 years,” he adds, “so we’ve had huge amounts of experience … with knowledge of processing IT because we worked in the supply chain. Now we’re using that experience to maximize assets and get them back into the cycle.”

For supercomputers, a remarkable amount of the components can be given a second life.

“We did quite a few last year and the remarketing rates on these supercomputers were amazing,” says Rafferty. “It was 98% remarketing rate on one of them and 90% on the other. One of those supercomputers generated over 100,00 assets, which really shows our capabilities and what we’re putting back into the cycle. It’s really our experience that allows us to do that.”

While only 17% of assets that come through Erskine and Andover are sent for recycling rather than repurposed, AI and – perhaps ironically – advances in cooling are starting to create new challenges.

“AI technology is getting more complex,” says O’Grady. “We’re starting to get into the direct liquid cooling technology versus air cooled technology, which is a whole other level of complexity. I don’t believe a lot of other IT players in the future are going to be able to deal with that.”

As the world continues to weigh the many upsides and drawbacks of the AI revolution, this is yet another issue it will have to face head on sooner rather than later.

Jane McCallion is Managing Editor of ITPro and ChannelPro, specializing in data centers, enterprise IT infrastructure, and cybersecurity. Before becoming Managing Editor, she held the role of Deputy Editor and, prior to that, Features Editor, managing a pool of freelance and internal writers, while continuing to specialize in enterprise IT infrastructure and business strategy.

Prior to joining ITPro, Jane was a freelance business journalist writing as both Jane McCallion and Jane Bordenave for titles such as European CEO, World Finance, and Business Excellence Magazine.
