What is exascale computing?

Exascale computing is several orders of magnitude beyond the previous generation of supercomputers – bringing with it exciting new possibilities

Max Slater-Robins

Exascale computing represents the next frontier in high-performance computing (HPC). It delivers unprecedented processing power, capable of executing at least one quintillion (10¹⁸) calculations per second, marking a significant leap forward and enabling breakthroughs in fields such as artificial intelligence (AI), climate and weather modelling, drug discovery, and nuclear fusion research.

For years, nations have raced to develop sovereign exascale supercomputers, recognizing their strategic importance in scientific progress, economic competitiveness, and national security. The EU, UK, US, China, and Japan have all made significant advances, with multiple exascale machines now operational.

As the world faces challenges such as climate change, supercomputing power is an advantage that nations want, and one they are likely to guard closely once they have it.

Despite its immense power, exascale computing is not just about speed: it is about solving previously intractable problems. With the ability to process vast datasets and run complex simulations, exascale systems are expected to accelerate scientific discoveries, from modelling the universe’s origins to simulating new materials at the atomic level.

What exactly is exascale computing?

Exascale computing refers to a new generation of supercomputers capable of sustaining at least one exaFLOPS: one quintillion (10¹⁸) floating-point operations per second. This represents a thousandfold increase over the previous HPC benchmark, petascale computing (10¹⁵ operations per second), which remains very powerful in its own right.
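To make the thousandfold gap concrete, the short sketch below compares how long a fixed job would take at each scale. The workload size is a hypothetical figure chosen for illustration, and the calculation assumes each machine sustains exactly its headline rate, which real applications rarely achieve.

```python
# Headline rates in floating-point operations per second (FLOPS).
PETA = 10**15  # petascale threshold
EXA = 10**18   # exascale threshold


def seconds_to_finish(total_operations: int, flops: int) -> float:
    """Idealized run time: total work divided by a perfectly sustained rate."""
    return total_operations / flops


# Hypothetical workload of 10^21 operations (an assumption, not a real benchmark).
workload = 10**21

peta_time = seconds_to_finish(workload, PETA)
exa_time = seconds_to_finish(workload, EXA)

print(f"Speed ratio: {EXA // PETA}x")
print(f"Petascale: {peta_time / 86_400:.1f} days")
print(f"Exascale:  {exa_time / 60:.1f} minutes")
```

Under these assumptions, a job that occupies a petascale machine for more than a week finishes on an exascale machine in well under an hour.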

At its core, exascale computing is about unprecedented processing power, enabling highly complex simulations, real-time data analysis, and AI model training at a scale never seen before. These machines rely on thousands of interconnected processors and accelerators, such as GPUs and custom-built AI chips, to distribute workloads efficiently, and require vast memory bandwidth and energy-efficient architectures to operate.
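The idea of distributing a workload across many processors can be sketched on a very small scale. The example below is an illustration only: the function names are hypothetical, it uses Python threads on a single machine as stand-ins for processors, and it says nothing about how any particular exascale system schedules work. It shows the basic pattern of splitting a problem into near-equal chunks, computing each chunk independently, and combining the partial results.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(n_items: int, n_workers: int) -> list[tuple[int, int]]:
    """Return (start, stop) pairs covering range(n_items) as evenly as possible."""
    base, extra = divmod(n_items, n_workers)
    chunks = []
    start = 0
    for worker in range(n_workers):
        # The first `extra` workers each take one additional item.
        size = base + (1 if worker < extra else 0)
        chunks.append((start, start + size))
        start += size
    return chunks


def partial_sum_of_squares(bounds: tuple[int, int]) -> int:
    """Work done by one worker on its own slice of the problem."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def distributed_sum_of_squares(n_items: int, n_workers: int) -> int:
    """Split the work, run each chunk on a worker, then combine the results."""
    chunks = split_range(n_items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


print(split_range(10, 3))
print(distributed_sum_of_squares(1000, 8))
```

Threads are used here only to keep the sketch self-contained. On real HPC systems the same split, compute, and combine pattern is typically expressed with message passing between nodes and offloaded to accelerators, where the cost of moving data between workers becomes a central concern.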

The transition to exascale computing has been driven by growing global demand for faster and more detailed simulations in areas such as climate modelling, materials science, and medical research.

Traditional supercomputers, even at petascale levels, struggle with the increasing complexity of modern datasets and AI models. Exascale systems bridge this gap, allowing scientists and engineers to simulate entire planetary systems, predict weather patterns with greater accuracy, or model atomic interactions for next-generation medicines.

Beyond its scientific applications, exascale computing is also a matter of national strategy and security. Countries investing in exascale tech are using it to advance AI, develop next-generation encryption, and enhance defence capabilities.

Which countries have exascale capabilities?

The US remains the leader in exascale computing, with three operational systems. Frontier, launched in 2022, was the world’s first exascale supercomputer, delivering 1.1 exaFLOPS. In 2024, the Aurora system reached 1.012 exaFLOPS, followed in late 2024 by El Capitan, which became the world’s fastest supercomputer at 1.742 exaFLOPS.

These machines drive AI research, climate modelling, and national security initiatives.

Europe has also made strides, with Jupiter, the EU’s first exascale supercomputer, having gone live in September 2025. France is also building its own exascale supercomputer, with AMD and Atos subsidiary Eviden starting assembly on the Alice Recoque supercomputer in 2026 with an aim to solve complex scientific and industrial problems.

Meanwhile, China and Japan continue to advance their HPC capabilities, though China’s exascale progress remains largely undisclosed.

The UK, however, has struggled to keep pace. Despite announcing a £1.3 billion exascale project in 2023, the government withdrew funding in 2024, shifting priorities to other AI initiatives. In 2025, the project was revived with £750 million in government backing – though with “exascale” notably missing from the announcement.

Industry applications of exascale computing

Exascale computing is revolutionizing scientific research, enabling simulations and analyses at unprecedented scale and accuracy. In climate science, researchers can now model entire planetary systems, predicting extreme weather events and long-term climate changes with greater precision.

Similarly, in astrophysics, exascale supercomputers are used to simulate the formation of galaxies and black holes, helping scientists better understand the universe’s origins.

The healthcare sector is another key beneficiary. Exascale computing accelerates drug discovery and genomic research, allowing scientists to simulate the behavior of proteins and molecular interactions in record time, which has major implications for cancer treatment, precision medicine, and the development of new vaccines.

AI-driven medical diagnostics also stand to benefit, as exascale systems can process vast amounts of patient data, leading to earlier disease detection and more personalized treatment plans.

In the energy sector, exascale computing is driving advances in nuclear fusion, renewable energy optimization, and oil and gas exploration. By running highly detailed simulations, researchers can improve the efficiency of wind farms, optimize energy grids, and develop next-generation nuclear reactors. Companies in the oil and gas industry also use exascale models to better predict underground reserves and reduce environmental impact.

How powerful is exascale computing?

Exascale computing represents a monumental leap in classical supercomputing, providing the raw processing power to perform one quintillion (10¹⁸) calculations per second. To put this into perspective, an exascale supercomputer can complete in one second what would take a human, working at one calculation per second without a break, over 31 billion years to calculate manually.
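The 31 billion year figure can be checked with a few lines of arithmetic. The sketch below assumes a person performs one calculation every second without stopping, and uses a 365.25-day year; both are simplifying assumptions for illustration.

```python
# One second of exascale work, expressed as a number of calculations.
OPERATIONS = 10**18

# Assumed human pace: one calculation per second, with no breaks.
HUMAN_OPS_PER_SECOND = 1

# Seconds in an average year of 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

human_seconds = OPERATIONS / HUMAN_OPS_PER_SECOND
human_years = human_seconds / SECONDS_PER_YEAR

print(f"{human_years / 1e9:.1f} billion years")
```

The result is a little under 32 billion years, consistent with the figure quoted above and more than twice the estimated age of the universe.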

Compared to petascale systems, the previous high-performance computing benchmark, exascale machines are around 1,000 times faster, making them capable of handling unprecedented levels of data analysis, simulation, and AI model training.

However, while exascale computing pushes classical computing to its limits, quantum computing takes an entirely different approach.

Instead of using traditional binary processing (0s and 1s), quantum computers leverage qubits, which can exist in multiple states simultaneously, allowing them to solve certain complex problems exponentially faster. While exascale supercomputers excel in areas like physics simulations, AI, and cryptography, quantum computing is expected to outperform classical systems in highly specialized tasks, such as molecular modelling, financial risk analysis, and encryption breaking.

Despite its potential, quantum computing is still in its infancy, with most systems limited by error rates and hardware constraints. Exascale computing, on the other hand, is already a proven technology, actively driving advancements in AI, medicine, climate science, and engineering.

For the foreseeable future, quantum computers are likely to work in tandem with classical exascale supercomputers rather than replace them, combining their strengths to tackle some of the world’s most complex computational challenges.

Where next?

Exascale computing represents a major leap in high-performance computing, driving advances in climate modelling, medical research, and AI. With the ability to perform one quintillion calculations per second, exascale systems are revolutionizing scientific discovery.

As competition in supercomputing intensifies, exascale is shaping the future of AI, advanced simulations, and scientific breakthroughs. The energy demands and software challenges of these systems remain key hurdles, but continued innovation in hardware efficiency and scalable algorithms is pushing the industry forward.

Ross Kelly is ITPro's News & Analysis Editor, responsible for leading the brand's news output and in-depth reporting on the latest stories from across the business technology landscape. Ross was previously a Staff Writer, during which time he developed a keen interest in cyber security, business leadership, and emerging technologies.

He graduated from Edinburgh Napier University in 2016 with a BA (Hons) in Journalism, and joined ITPro in 2022 after four years working in technology conference research.

For news pitches, you can contact Ross at ross.kelly@futurenet.com, or on Twitter and LinkedIn.

-

Lloyds Bank touts quantum potential in anti-fraud activities

Lloyds Bank touts quantum potential in anti-fraud activitiesNews The bank said quantum algorithms showed long‑term promise, especially when used to complement AI and classical machine learning

-

IBM is targeting 'quantum advantage' in 12 months – and says useful quantum computing is just a few years away

IBM is targeting 'quantum advantage' in 12 months – and says useful quantum computing is just a few years awayNews Leading organizations are already preparing for quantum computing, which could upend our understanding of linear mathematical problems

-

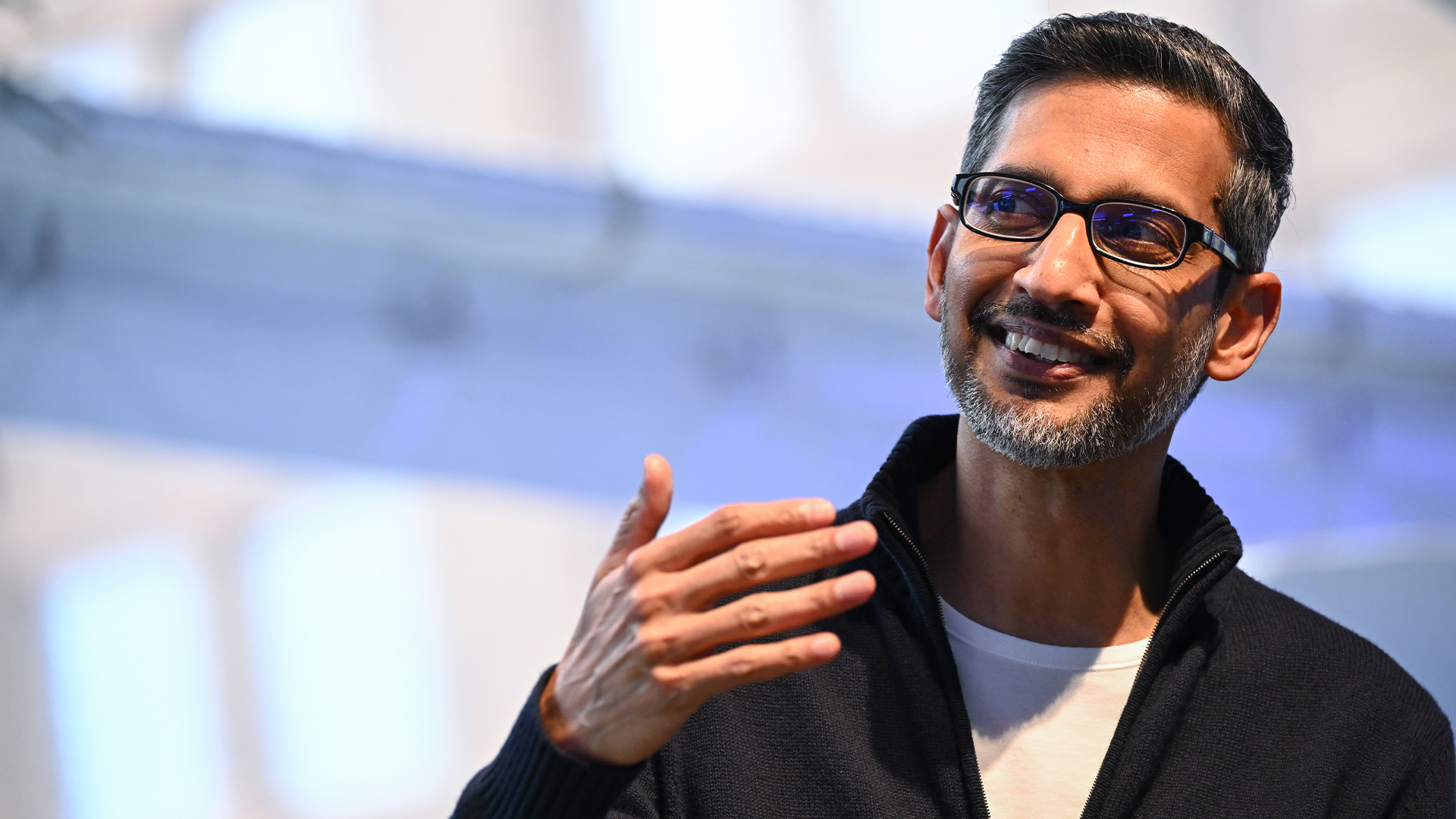

Sundar Pichai thinks commercially viable quantum computing is just 'a few years' away

Sundar Pichai thinks commercially viable quantum computing is just 'a few years' awayNews The Alphabet exec acknowledged that Google just missed beating OpenAI to model launches but emphasized the firm’s inherent AI capabilities

-

Future-proofing cybersecurity: Understanding quantum-safe AI and how to create resilient defenses

Future-proofing cybersecurity: Understanding quantum-safe AI and how to create resilient defensesIndustry Insights Practical steps businesses can take to become quantum-ready today

-

IBM and AMD are teaming up to champion 'quantum-centric supercomputing' – but what is it?

IBM and AMD are teaming up to champion 'quantum-centric supercomputing' – but what is it?News The plan is to integrate the two technologies to create scalable, open source platforms

-

Destination Earth: The digital twin helping to predict – and prevent – climate change

Destination Earth: The digital twin helping to predict – and prevent – climate changeSupported How the European Commission is hoping to fight back against climate change with a perfect digital twin of our planet, powered by Finland's monstrous LUMI supercomputer

-

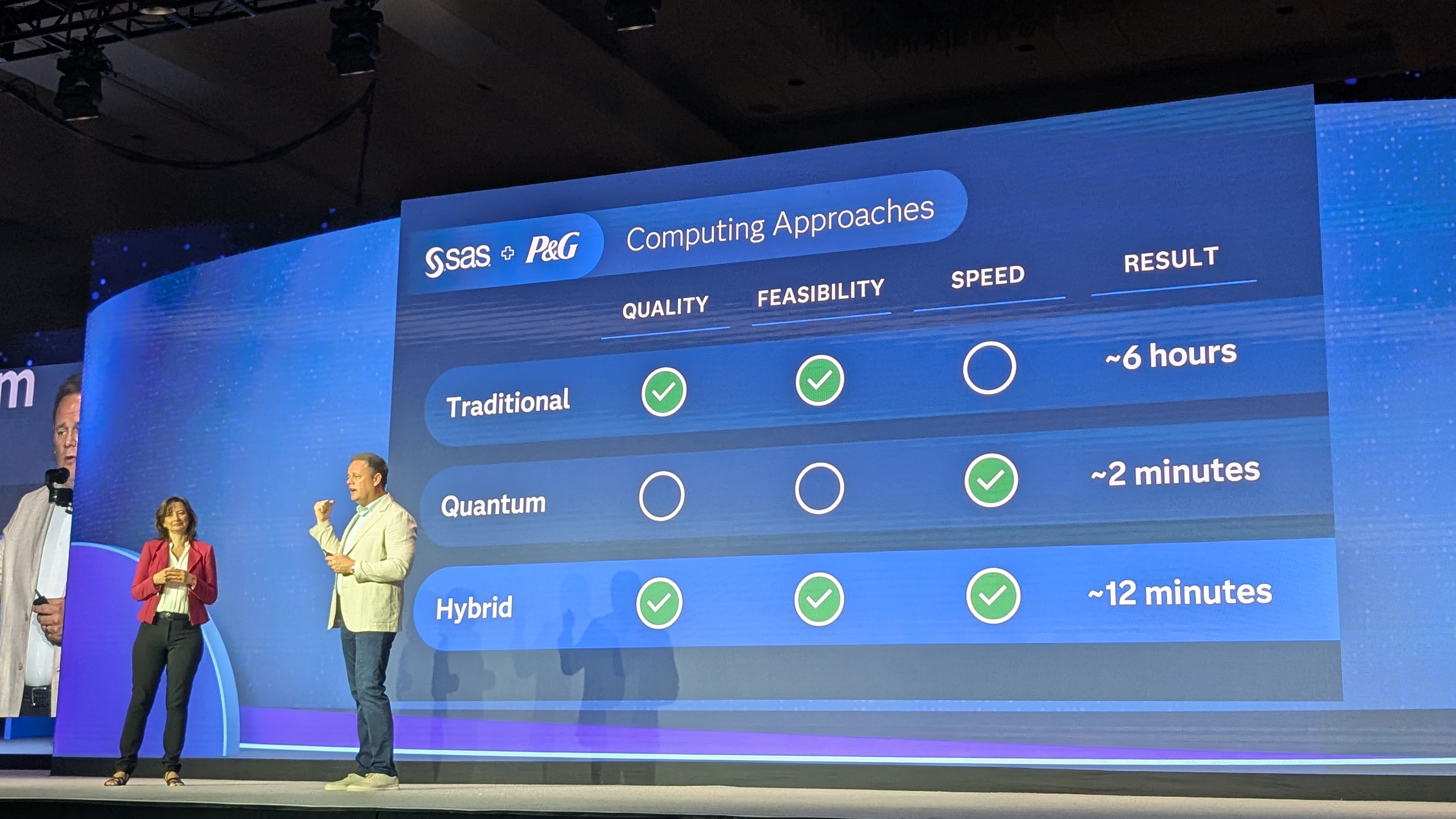

SAS thinks quantum AI has huge enterprise potential – here's why

SAS thinks quantum AI has huge enterprise potential – here's whyNews The analytics veteran has shone a light on three crucial quantum partnerships, as it warns organizations must innovate or fall prey to new threats

-

The UK government wants quantum technology out of the lab and in the hands of enterprises

The UK government wants quantum technology out of the lab and in the hands of enterprisesNews The UK government has unveiled plans to invest £121 million in quantum computing projects in an effort to drive real-world applications and adoption rates.