Fujitsu Primergy CX400 S1 review

Cramming up to four Xeon E5 servers in only 2U of rack space, the Fujitsu Primergy CX400 S1 gives blade servers a run for their money.

Cheaper and more versatile than blade servers, the Primergy CX400 S1 offers good rack density with a wide range of storage options. Power consumption is commendably low and it’s better built than Supermicro’s and Dell’s multi-node servers.

Pros: Good value and build quality; two- or four-node configurations; wide choice of storage; low power usage

Cons: Non-hot-plug fans

Multi-node servers have been eating into the blade server market for years thanks to their lower costs, higher rack density and greater flexibility. Fujitsu wants to enter this lucrative territory with the Primergy CX400 S1 Cloud eXtension server.

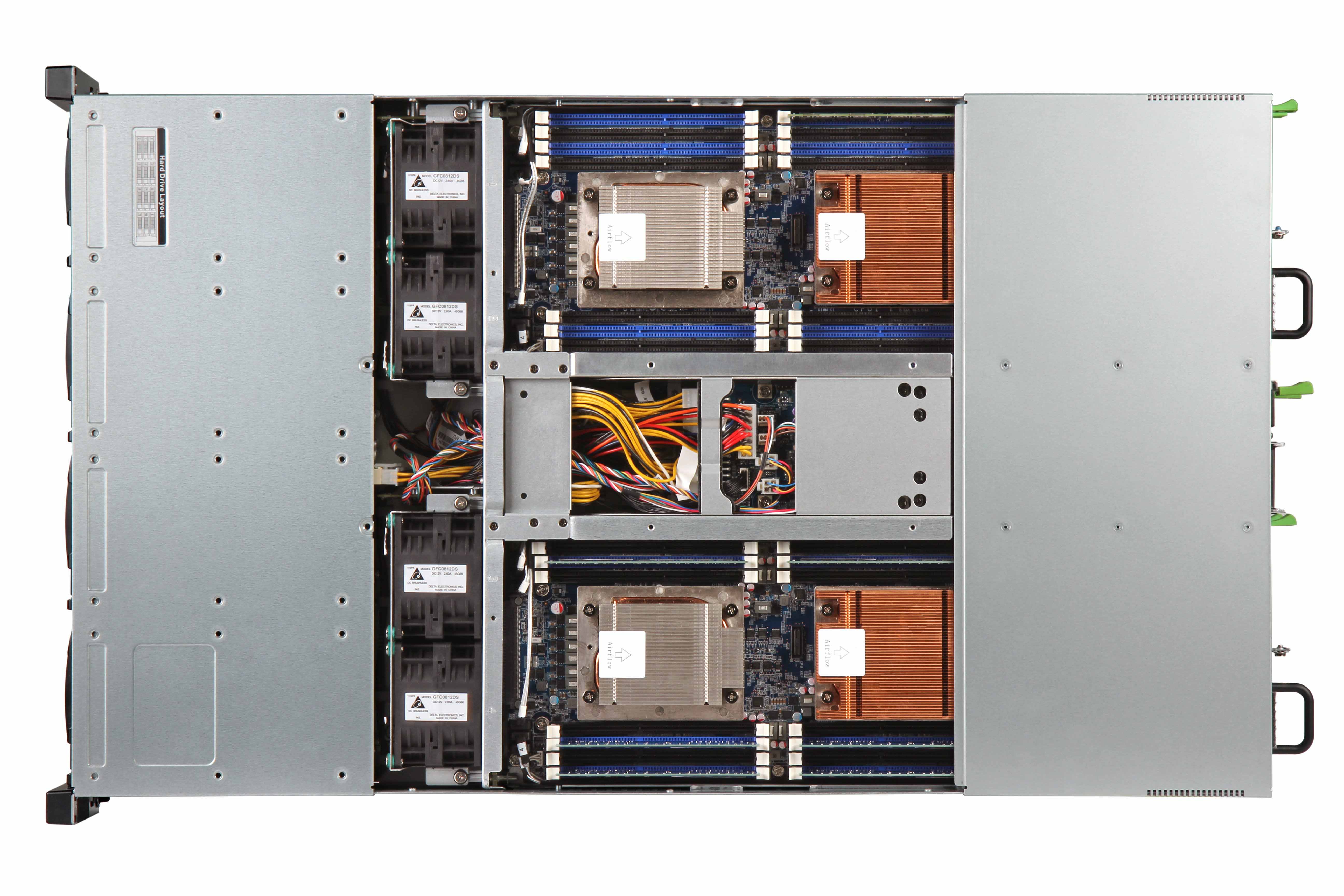

The CX400 squeezes up to four servers and 24 hard disks into 2U of rack space and targets an extensive range of applications including cloud computing, HPC and big server farms. The nodes are hot-pluggable for easy maintenance while power and cooling are shared across all nodes.
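To put the density claim in rack-level terms, here is a minimal sketch of the arithmetic. The per-chassis figures come from the review; the 42U rack, and the idea that every unit is free for CX400 chassis, are assumptions made purely for illustration.

```python
# Figures from the review: each CX400 chassis is 2U and holds up to
# four server nodes and 24 hard disks.
CHASSIS_HEIGHT_U = 2
NODES_PER_CHASSIS = 4
DISKS_PER_CHASSIS = 24


def rack_capacity(usable_rack_units: int) -> dict:
    """Return chassis, node and disk counts for the given usable rack space."""
    chassis = usable_rack_units // CHASSIS_HEIGHT_U
    return {
        "chassis": chassis,
        "nodes": chassis * NODES_PER_CHASSIS,
        "disks": chassis * DISKS_PER_CHASSIS,
    }


# Assumption: a 42U rack with every unit available to CX400 chassis.
full_rack = rack_capacity(42)
print(full_rack)
```

In practice some rack units would go to switches and power distribution, so real-world totals would be lower.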

There are currently two node choices and, if you want four in your chassis, go for the CX250 S1. There's also the double-height CX270 S1, which has three PCI-Express slots, but you can't mix the two node types in the same chassis.

The nodes slip in from the back and mate with a midplane in front of the cold-swap cooling fans

Chassis design

The design isn't new: Supermicro introduced this format a couple of years ago with its Twin system, which we reviewed as the Boston Quattro 2296-T, and Dell's PowerEdge C6220 uses the same format.

First impressions are that Fujitsu's build quality is superior. The system looks and feels more solid than the Supermicro and Dell alternatives.


The CX400 comes as standard with two 1400W hot-plug PSUs. These are cabled through to the chassis midplane, where each node receives power and its hard disk allotment through its edge connector.

Cooling is handled by four dual-rotor fans fitted in front of the midplane. These are not hot-pluggable and access to them requires twelve grub screws to be removed before the centre panel above can be lifted off.

Little and large: the CX400 supports two CX270 S1 or four CX250 S1 nodes

Storage permutations

The chassis is available with twelve LFF or 24 SFF drive bays. The LFF model supports SATA III and Nearline SAS hard disks whereas the SFF model can handle SATA III, NL-SAS, SAS 2 and SSDs.

The drive backplane is split vertically into four groups, each with its own connector. The CX250 S1 nodes have one connector and are allotted either three LFF bays or six SFF bays.

The CX270 S1 nodes have two connectors and can take six LFF drives but there are limitations on the number of SFF drives supported. A backplane expander version is due out but in the current non-expander chassis model, each CX270 S1 supports a maximum of six SFF drives and the nodes must be fitted with a Fujitsu PCI-Express RAID card.

There are also thermal limitations for CPUs with a 130W TDP or higher. If you go for E5-2680 or E5-2690 Xeons then the CX250 S1 nodes are only permitted to have two SFF drives each.
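The storage rules above can be summarised in a short sketch. This is a simplified model built only from the figures in this review, not a Fujitsu configuration tool: the function name `max_drives` and its parameters are hypothetical, and the review does not say how the thermal limit affects LFF bays or CX270 S1 nodes, so those cases are left uncapped here.

```python
# Bays per backplane group: 12 LFF or 24 SFF bays split into four groups.
BAYS_PER_GROUP = {"LFF": 12 // 4, "SFF": 24 // 4}

# Backplane connectors per node type, as described in the review.
CONNECTORS = {"CX250 S1": 1, "CX270 S1": 2}


def max_drives(node: str, bay_type: str, cpu_tdp_watts: int) -> int:
    """Estimate the drive bays one node can use in the non-expander chassis."""
    drives = CONNECTORS[node] * BAYS_PER_GROUP[bay_type]
    if node == "CX270 S1" and bay_type == "SFF":
        # Non-expander chassis: a CX270 S1 is capped at six SFF drives.
        drives = min(drives, 6)
    if node == "CX250 S1" and bay_type == "SFF" and cpu_tdp_watts >= 130:
        # Thermal limit the review states for 130W-plus CPUs in CX250 S1 nodes.
        # Other node and bay combinations are not covered by the review.
        drives = min(drives, 2)
    return drives


print(max_drives("CX250 S1", "SFF", 95))   # six SFF bays per node
print(max_drives("CX250 S1", "SFF", 130))  # thermal limit applies
print(max_drives("CX270 S1", "LFF", 95))   # two connectors, three LFF bays each
```

The 95W TDP used in the usage lines is an arbitrary value below the 130W threshold, chosen only to show the uncapped case.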

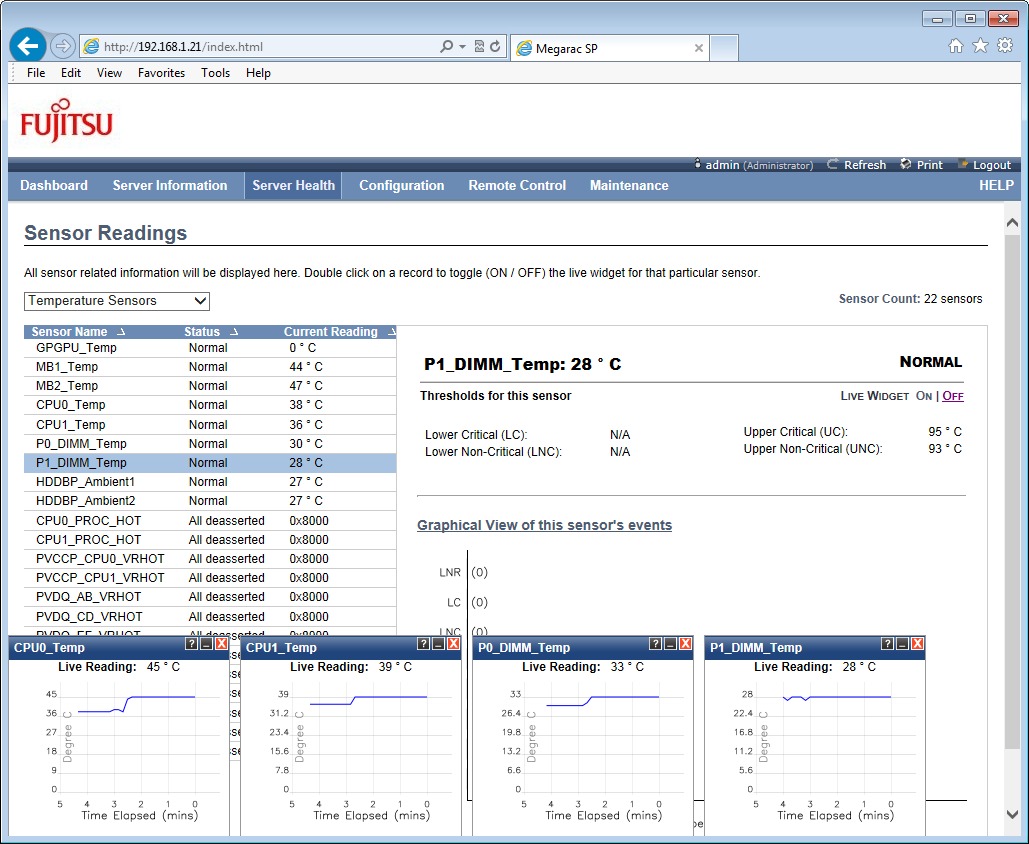

The node's embedded IPMI chip provides handy remote control and component monitoring tools

Nodes and power usage

Our review system came with a pair of CX270 S1 nodes, each kitted out with dual 2GHz E5-2620 Xeons and 32GB of DDR3, which can be expanded to 512GB. At the rear you have dual Gigabit, serial, VGA and two USB2 ports.

Node design and build quality are very good and the CX270 S1 has two PCI-Express slots for half-length cards. A third PCI-Express slot is fitted further back and is specifically designed for Nvidia's mighty Tesla GPGPU cards.

The review system performed well in our power tests where we linked it to our inline meter and then powered each node up. We took measurements with them running in idle and with SiSoft Sandra pushing all cores on each one to near maximum utilisation.

In idle we saw one and two nodes draw a total of 140W and 237W respectively. Under load these figures rose to only 248W and 432W.
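A quick bit of arithmetic on these readings shows what the second node adds. The sketch below uses only the four measured totals; reading the one-node figure as including shared chassis overhead (fans, PSUs, midplane) is our interpretation, not something the meter reports separately.

```python
# Measured wall power in watts from the review: (one node, two nodes).
IDLE_W = (140, 237)
LOAD_W = (248, 432)


def second_node_cost(readings: tuple) -> int:
    """Extra watts drawn when the second node is powered up."""
    one_node, two_nodes = readings
    return two_nodes - one_node


def per_node_average(readings: tuple) -> float:
    """Average watts per node with both nodes running."""
    _, two_nodes = readings
    return two_nodes / 2


print(second_node_cost(IDLE_W), second_node_cost(LOAD_W))  # 97 184
print(per_node_average(IDLE_W), per_node_average(LOAD_W))  # 118.5 216.0
```

The second node costs less than the first in both states, which is consistent with power and cooling being shared across the chassis.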

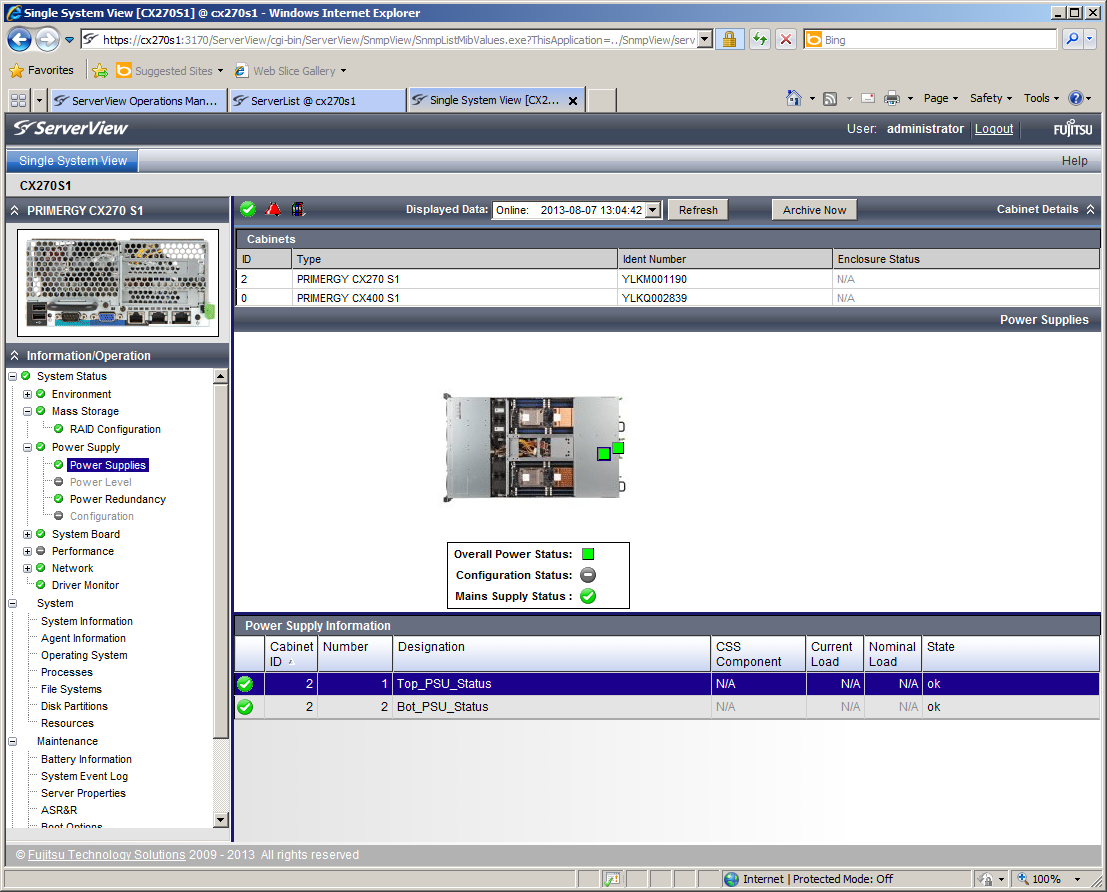

Fujitsu's ServerView software provides more management tools and can also monitor chassis fans and PSUs

Remote management

The chassis itself has no remote management features but each node sports an embedded IPMI chip routed to a dedicated network port at the back. You don't get Fujitsu's iRMC controller and the web interface for this MegaRAC chip bears more than a passing resemblance to that used by Supermicro.

It's not as informative as the iRMC interface but does provide readouts of sensor data for all critical components. Each sensor has thresholds which can be linked to SNMP traps and email alerts if breached.

Supermicro recently added power graphing and monitoring to its web interface but this is absent on the CX270 S1. However, you can remotely control power and it includes KVM-over-IP remote control and virtual media services as standard and not as options.

For general server management Fujitsu offers its ServerView Suite software which monitors all nodes running the agent software. It lists all hardware components and a useful feature is its ability to monitor both chassis PSUs and cooling fans.

Conclusion

With the review system coming in at well under eight grand, the CX400 S1 beats blade servers for value and its plentiful storage choices make it a lot more flexible. Support for E5-2600 Xeons allows it to pack an impressive processing density and we found build quality to be superior to the competition.

Verdict

Cheaper and more versatile than blade servers, the Primergy CX400 S1 offers good rack density with a wide range of storage options. Power consumption is commendably low and it’s better built than Supermicro’s and Dell’s multi-node servers.

Chassis: 2U rack

Power: 2 x 1400W hot plug PSUs

Storage: 12 x 3.5in. SATA III hot-swap drive bays

Server Nodes: 2 x CX270 S1 (each with the following)

CPU: 2 x 2GHz Xeon E5-2620

Memory: 32GB DDR3 (max 512GB)

Storage: 2 x 1TB WD Enterprise SATA hot-swap hard disks

RAID: Fujitsu D2607 Ctrl SAS 6G 0/1 PCI-Express card

Array support: RAID0, 1, 10

Network: 2 x Gigabit, IPMI

Expansion slots: 3 x PCI-Express

Management: Embedded MegaRAC IPMI

Warranty: 3yrs on-site NBD

Dave is an IT consultant and freelance journalist specialising in hands-on reviews of computer networking products covering all market sectors from small businesses to enterprises. Founder of Binary Testing Ltd – the UK’s premier independent network testing laboratory - Dave has over 45 years of experience in the IT industry.

Dave has produced many thousands of in-depth business networking product reviews from his lab which have been reproduced globally. Writing for ITPro and its sister title, PC Pro, he covers all areas of business IT infrastructure, including servers, storage, network security, data protection, cloud, infrastructure and services.
