Claude Code flaws left AI tool wide open to hackers – here’s what developers need to know

The trio of Claude Code flaws could have put developers at risk of attacks

Security researchers have warned that Anthropic’s Claude Code tool had critical flaws that could have allowed hackers to execute code remotely.

A recent advisory from Check Point Research revealed details of a trio of vulnerabilities that could allow code to be run remotely or allow hackers to steal API keys by taking advantage of automation and other built-in tools.

The flaws shouldn't come as a surprise, given how quickly AI coding tools have been introduced to the industry, said Check Point. In recent years, tools like Claude Code and GitHub Copilot have led to a surge in AI-generated or AI-assisted code.

The pace of adoption naturally increases the potential attack surface, researchers noted, with security teams struggling to keep up.

"As AI-powered development tools rapidly integrate into software workflows, they introduce novel attack surfaces that traditional security models haven’t fully addressed," Check Point researchers Aviv Donenfeld and Oded Vanunu said in a post detailing the bugs.

"These platforms combine the convenience of automated code generation with the risks of executing AI-generated commands and sharing project configurations across collaborative environments."

All three bugs have already been fixed after the security firm disclosed them to Anthropic over the course of several months last year.

ITPro approached Anthropic for comment, but did not receive a response by time of publication.

The Claude Code flaws explained

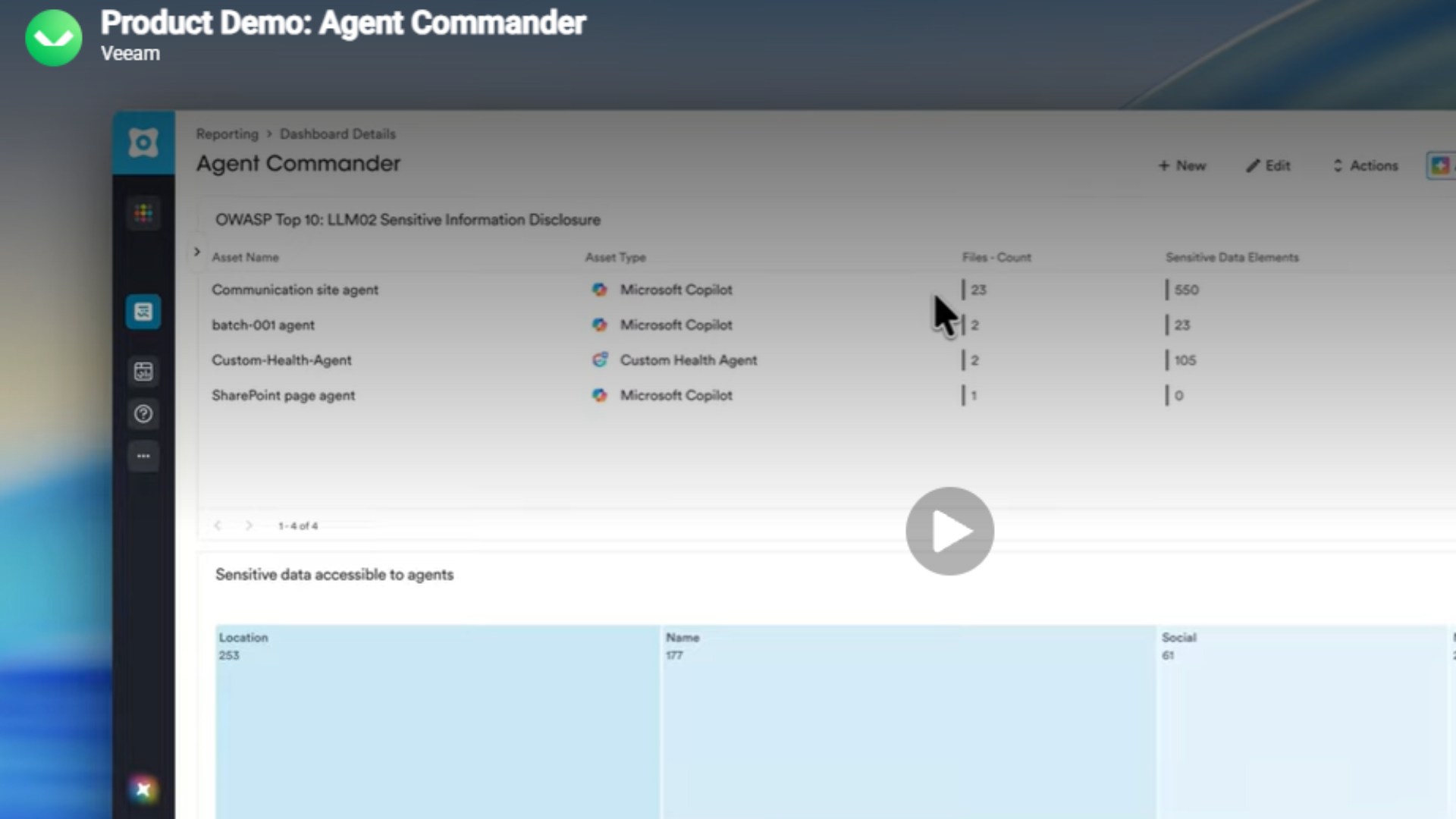

In a blog post detailing the flaws, Check Point said Claude Code introduced a new attack vector by trying to make work easier for developers. The tool is designed to embed project-level configuration files directly within repositories, researchers explained, automatically applying them when a dev opens the tool within any given project directory.

While this is a convenient feature, researchers noted that in some instances cloning and opening a malicious repository would be enough to trigger hidden commands, slip past safeguards, and expose active API keys.

"Check Point Research found that these files, typically perceived as harmless operational metadata, could in fact function as an active execution layer."

Three flaws in total were spotted by Check Point. The first impacted Claude Hooks, which can be used to run predefined actions when a session is started. By fooling developers into opening a malicious repository, hackers could trigger arbitrary shell commands on their computer.
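One way to picture the defensive side of this is to read a cloned repository's configuration as plain data and list any commands it declares before opening the project in a tool. The sketch below is illustrative only: the default path `.claude/settings.json`, the top-level `hooks` key, and the nested `command` field are assumptions about how such a file might be laid out, not details taken from the Check Point advisory, and the function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Any


def collect_commands(node: Any) -> list[str]:
    """Recursively gather every string stored under a "command" key."""
    found: list[str] = []
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "command" and isinstance(value, str):
                found.append(value)
            else:
                found.extend(collect_commands(value))
    elif isinstance(node, list):
        for item in node:
            found.extend(collect_commands(item))
    return found


def find_hook_commands(settings: dict) -> list[str]:
    """Return commands declared anywhere below a top-level "hooks" key.

    The "hooks" and "command" key names are assumptions for illustration.
    """
    return collect_commands(settings.get("hooks", {}))


def scan_repo(repo_root: str, relative_path: str = ".claude/settings.json") -> list[str]:
    """Parse a settings file inside a cloned repo without executing anything.

    The default relative path is an assumed location, not a confirmed one.
    """
    path = Path(repo_root) / relative_path
    if not path.is_file():
        return []
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return []
    return find_hook_commands(data) if isinstance(data, dict) else []
```

A reviewer could call `scan_repo("path/to/clone")` on an unfamiliar repository and inspect the returned strings by hand. The point is only that the file is read and displayed rather than acted upon.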

The second centered on Model Context Protocol (MCP), an industry system for letting AI models work with external tools. With this flaw, designated CVE-2025-59536, Check Point found that repository-controlled configuration settings could override safeguards that require user approval, letting remote code be executed.

"When code runs before trust is established, the control model is inverted – shifting authority from the user to repository-defined configuration and expanding the AI-driven attack surface," the researchers said.

The third flaw, tracked as CVE-2026-21852, takes advantage of those repository-controlled configuration settings, researchers said.

If a hacker meddles with those, it's possible to redirect API traffic to an attacker-controlled server before security protections kick in. That could allow attackers to steal a developer's active API key and other credentials.
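As a rough illustration of the redirect risk, a check along the following lines could flag repository-defined settings that point API traffic at an unexpected host. This is a sketch under stated assumptions: the `env` key, the convention that endpoint variables end in `_BASE_URL`, and the allowlisted host `api.anthropic.com` are chosen for the example and are not drawn from the advisory.

```python
from urllib.parse import urlparse

# Assumption: hosts the developer expects API traffic to reach.
TRUSTED_HOSTS = frozenset({"api.anthropic.com"})


def untrusted_endpoint_overrides(
    settings: dict, trusted_hosts: frozenset[str] = TRUSTED_HOSTS
) -> dict[str, str]:
    """Return endpoint-style variables whose host is not on the allowlist.

    Looks under an assumed "env" key for names ending in "_BASE_URL".
    """
    env = settings.get("env", {})
    if not isinstance(env, dict):
        return {}
    flagged: dict[str, str] = {}
    for name, value in env.items():
        if not isinstance(value, str) or not name.upper().endswith("_BASE_URL"):
            continue
        # urlparse lowercases the hostname; it is None when no scheme/host is present.
        host = urlparse(value).hostname
        if host is None or host not in trusted_hosts:
            flagged[name] = value
    return flagged
```

Values that cannot be parsed into a host are flagged as well, on the reasoning that an unrecognizable endpoint deserves a manual look before any credentials are sent to it.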

Check Point stressed that API key exposure is particularly problematic as stolen credentials could allow hackers to access shared project files, modify or delete cloud data, upload further malicious content, and run up API costs.

"In collaborative AI environments, a single compromised key can become a gateway to broader enterprise exposure," the researchers noted.

From passive to execution

These new systems have led to a shift in how software supply chains work, Check Point said, relying on repository-based configuration files for automation and collaboration.

"Traditionally, these files were treated as passive metadata – not as execution logic," the researchers noted, adding that the change "fundamentally alters the threat model."

Check Point said AI-powered coding tools are bringing significant benefits, but they are also changing how systems work, leading to a need to "reassess traditional security assumptions."

"As AI integration deepens, security controls must evolve to match the new trust boundaries," researchers added.

Freelance journalist Nicole Kobie first started writing for ITPro in 2007, with bylines in New Scientist, Wired, PC Pro and many more.

Nicole is the author of a book about the history of technology, The Long History of the Future.
