The best Python test frameworks

Make your Python code shine with these testing tools


Testing isn't a software developer's favourite job. The fun part is writing the code; who wants to jump through hoops that tell you just how little of it works? Nevertheless, it's a mandatory practice for good programming. It will help prevent bugs that could affect the performance of your code, make it less usable, or worse still, allow cyber attackers in through the back door.

Building testing into your programming from the beginning makes it easier and less time consuming to fix bugs, but how do you do it? For Python developers, the language comes with its own testing framework called Unittest, but there are also third-party testing frameworks that aim to improve on the original. Here's a look at some of them.

These testing tools aren't just useful on their own. You can use some of them in combination with each other. For example, Selenium is good for testing user interactions, but is at its most powerful when used with other frameworks. Because Selenium has Python bindings, you can parameterise it by calling it from your Pytest functions. You can also fold it into behaviour-driven development by calling Selenium from within your Behave session's steps file.

Whether you're using unit testing, behavioural testing, or both, building these tests into your coding process throughout can help to increase the quality of your final product.

Unittest (PyUnit)

As Python's baked-in testing module, Unittest's main advantage is that you don't need to install anything else to use it out of the box. It handles unit testing (as you could probably guess), which ensures that a software function produces the correct output, even when presented with unusual input.

This testing tool requires you to create a class that holds your tests by subclassing its TestCase class, like so:

| class TestStringMethods(unittest.TestCase): |

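You then create a method for each of your tests inside the class, giving each one a name that starts with test so that the framework can find it. You use one of the class's assert methods to determine test outcomes. For instance, self.assertEqual() tests whether a function's output equals your expected output (the older spelling, assertEquals, was a deprecated alias that has been removed from recent Python versions). There are lots of these, including boolean assertions (testing whether something is true or false), greater-than and less-than comparisons, and matches for regular expressions. A separate package, unittest2, backported the newer assertions to older Python versions.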
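To make that concrete, here is a minimal sketch of a complete test class. The method names and sample strings are purely illustrative; the assert methods themselves are part of the standard library.

```python
import unittest


class TestStringMethods(unittest.TestCase):
    """Groups related checks; methods whose names start with 'test' are run as tests."""

    def test_upper(self):
        # assertEqual compares the actual output with the expected output.
        self.assertEqual("foo".upper(), "FOO")

    def test_isupper(self):
        # Boolean assertions check for true or false directly.
        self.assertTrue("FOO".isupper())
        self.assertFalse("Foo".isupper())

    def test_order_reference_format(self):
        # assertRegex passes when the regular expression matches the text.
        self.assertRegex("order-1042", r"^order-\d+$")

    def test_length_comparison(self):
        # assertGreater checks that the first value exceeds the second.
        self.assertGreater(len("hello"), 3)
```

Saved as, say, test_strings.py, this can be run from the command line with python -m unittest test_strings.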

One useful feature of this tool is its ability to support fixtures. These are environments that you set up to support the program. For example, you might need to log into a database and retrieve a record before you can test whether a function updates it correctly with a given input. A fixture enables you to do that.
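As a rough sketch of the database scenario, the example below uses Unittest's setUp and tearDown methods as the fixture. The update_email function, the customers table and the email addresses are all hypothetical, and an in-memory SQLite database stands in for whatever database your program really uses.

```python
import sqlite3
import unittest


def update_email(conn, customer_id, new_email):
    """Hypothetical function under test: change one customer's email address."""
    conn.execute(
        "UPDATE customers SET email = ? WHERE id = ?", (new_email, customer_id)
    )
    conn.commit()


class TestUpdateEmail(unittest.TestCase):
    def setUp(self):
        # Fixture: runs before every test method, so each test starts from the same record.
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
        self.conn.execute("INSERT INTO customers (id, email) VALUES (1, 'old@example.com')")
        self.conn.commit()

    def tearDown(self):
        # Runs after every test method, whether the test passed or failed.
        self.conn.close()

    def test_email_is_updated(self):
        update_email(self.conn, 1, "new@example.com")
        row = self.conn.execute("SELECT email FROM customers WHERE id = 1").fetchone()
        self.assertEqual(row[0], "new@example.com")

    def test_unknown_customer_changes_nothing(self):
        update_email(self.conn, 99, "new@example.com")
        row = self.conn.execute("SELECT email FROM customers WHERE id = 1").fetchone()
        self.assertEqual(row[0], "old@example.com")
```

Because the fixture rebuilds the table for each test, the two tests cannot interfere with each other, whatever order they run in.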

Unittest is a great framework for writing basic tests. Its use of a class for test cases allows you to group tests that map to software features. However, it quickly gets unwieldy thanks to its insistence that every test lives in a class inheriting from TestCase.

Nose2

Unittest's limitations left room for improvement, so third-party developers built on it with their own testing framework called Nose, which was subsequently replaced by Nose2.

Because this testing framework extends Unittest, it can run Unittest tests alongside its own. Nose2 is extensible, providing support for plugins that give it the potential to offer more functions than unittest. It also adds another important feature: parameterisation.

Most tests need multiple inputs to be sure that no strange elements slip through. For example, if you're testing a function that validates a product price, you might want to try entering various prices with different numbers of digits. You might also want to enter other prices that might fail the test, such as 0.00, a negative price, and perhaps prices with non-numeric characters. Rather than creating a single test for each of these inputs, it would be better to make a test that could rerun itself with inputs from a list. Nose2 allows you to do this.
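The idea of one test rerunning itself over a list of inputs can be sketched with nothing but the standard library. Note that this uses Unittest's subTest feature as a stand-in rather than Nose2's own parameterisation helpers, and that is_valid_price and its rules (a positive amount with at most two decimal places) are assumptions made up for the example.

```python
import unittest
from decimal import Decimal, InvalidOperation


def is_valid_price(text):
    """Hypothetical validator: accept positive amounts with at most two decimal places."""
    try:
        price = Decimal(text)
    except InvalidOperation:
        # Non-numeric input such as "abc" cannot be parsed at all.
        return False
    if not price.is_finite():
        return False
    return price > 0 and price == price.quantize(Decimal("0.01"))


class TestPriceValidation(unittest.TestCase):
    # Each pair is (input, expected result); add new cases here instead of new tests.
    CASES = [
        ("4.99", True),
        ("12", True),
        ("1050.50", True),
        ("0.00", False),
        ("-5.00", False),
        ("12.345", False),
        ("abc", False),
        ("4.99p", False),
    ]

    def test_prices(self):
        for text, expected in self.CASES:
            # subTest reports every failing input separately instead of stopping at the first.
            with self.subTest(price=text):
                self.assertEqual(is_valid_price(text), expected)
```

Covering a new edge case then means adding one line to the list rather than writing another test method.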

Pytest

Pytest is an alternative unit testing framework to Unittest and is designed for simple but highly functional testing. Even the developers of Nose2 recommend it on their GitHub page as a good option for those new to testing.

Because Pytest is a third-party open-source library, you must install it before using it. One difference from Unittest is its use of plain functions without the need for a class. This means that Pytest requires less code to create tests than Unittest does.

Let's say we have a function to add 1 to an input:

| def inc(x): return x + 1 |

Whereas Unittest requires you to wrap a test for this function in a class, Pytest lets you write the test as a plain function in the same file, using a simple assert statement to check the result:

| def test_answer(): assert inc(3) == 4 |

As with Unittest's assert methods, the assert statement lets you describe what a test looks like when it passes. In this case, calling the function inc with an input of 3 should return a result of 4. If it doesn’t, then Pytest will report that the test has failed.

Pytest is also extensible and features a full plugin library.

DocTest

For an alternative method of testing code, how about writing your tests directly into your documentation? DocTest directly references your code documentation for its tests. You write your documentation as you normally would, but you embed examples of function calls with appropriate input and results within it.

You can embed these tests in docstrings at the function or module level. Then you can either invoke it at the command line or you can include instructions to run it at the bottom of your modules. Alternatively, you can embed the tests in separate .rst help files for larger documentation files.
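A minimal sketch of the docstring approach, reusing the small inc function from the Pytest section, might look like this. The examples and their expected outputs are illustrative.

```python
def inc(x):
    """Return x plus one.

    The lines starting with >>> read as ordinary usage examples, but doctest
    also runs them and compares the real output with the line underneath.

    >>> inc(3)
    4
    >>> inc(-1)
    0
    """
    return x + 1


if __name__ == "__main__":
    import doctest

    # Runs every docstring example in this module; prints nothing when all pass.
    doctest.testmod()
```

The same examples can be run without the block at the bottom by invoking the module from the command line, for instance python -m doctest -v yourmodule.py, where yourmodule.py is whatever file holds the function.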

Like Nose2, DocTest has the advantage of integrating with existing Unittest tests, and it provides an elegant way of combining tests and documentation. On the downside, however, it doesn't include fixture management.

Behave

Those tools are all useful for unit testing, where you test individual functions against basic inputs. However, they might not enable you to test combinations of functions that produce business results your users care about. A business executive might not care that a function validates a customer's login email address, but they'd like to be sure that the program lists a user's outstanding orders after they've logged in.

This is where behaviour-driven development comes in. It tests scenarios and outcomes that map closely to tasks that software users care about. These are known in development parlance as user stories.

The Behave testing library uses specification files to test these stories, which developers must write in a language called Gherkin. It defines application scenarios, and then uses a structure of steps to define the tests, such as:

| Given I log in |
| When I have an outstanding order |
| Then I see my outstanding order |

The given statement sets up the environment to run the test. In this example, ‘I log in’ specifies that the user should be logged into the system before the test is run. The when statement then describes the condition that you’re testing (in this case, it’s testing what should happen when a logged-in user has an outstanding order). Finally, the then statement describes the desired outcome when the condition is met (the user should see their outstanding order).

You define the code tested in these steps within a separate steps file using Python decorators. For example:

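| from behave import given, when, then |
| @given('I log in') |
| def step_impl(context): |
|     # call the necessary login functions here |
|     pass |
| @when('I have an outstanding order') |
| def step_impl(context): |
|     # call the necessary order retrieval functions here, |
|     # storing the result on context.order_list |
|     pass |
| @then('I see my outstanding order') |
| def step_impl(context): |
|     assert len(context.order_list) > 0 |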

As with other testing frameworks, you can write these tests before putting together any of your code. They'll all fail, of course, until you then write the functions to support them.

The great part of behaviour-driven development is that by writing the tests, you're documenting your user requirements in a structured way up front. You can then use these tests as a guide when writing your application.

Selenium

These tools are all very well suited to testing application code running locally, but how do you relate them to user actions in browser-based applications? You might write tests to run at the controller level if using a model-view-controller architecture, as we documented in our series on Flask development, but a more useful, intuitive way to test browser-based inputs is to simulate the browser itself. Browser automation enables you to test program input from the user's perspective, and there's one go-to solution for that in Python: Selenium.

Selenium drives a real browser that you can program to automatically carry out common user actions, including entering text into web forms and clicking buttons. You do this by writing scripts in one of several languages, including Java, Ruby, and Python. The script finds elements in a web page using their names or other attributes, and then takes actions on them.

Rather than functioning as a testing tool itself, Selenium is a library that you can call from within your Python testing framework of choice, such as Pytest.

For example, you might use this tool to test a form with one field that asks for a user’s postcode and another that asks for their country. You could use Pytest to supply the relevant test data, such as ‘90210’ and ‘United States’. You’d then call Selenium to find the field named postcode and enter the postcode into it, select the relevant country from the drop-down country list, and finally find the submit button and click it.

Then, you’d call Selenium to interpret the browser’s output, passing it to an assert statement in Pytest so that you could compare it against your expected result.

Selenium enables you to test your program from a user’s perspective. By marrying it with parameterisation in your testing framework, you can submit dozens or hundreds of combinations to test a multitude of complex form entries and other browser interactions.


Danny Bradbury has been a print journalist specialising in technology since 1989 and a freelance writer since 1994. He has written for national publications on both sides of the Atlantic and has won awards for his investigative cybersecurity journalism work and his arts and culture writing.

Danny writes about many different technology issues for audiences ranging from consumers through to software developers and CIOs. He also ghostwrites articles for many C-suite business executives in the technology sector and has worked as a presenter for multiple webinars and podcasts.

-

Cohere's Aleph Alpha merger could create a transatlantic sovereign AI powerhouse

Cohere's Aleph Alpha merger could create a transatlantic sovereign AI powerhouseAnalysis The merger between Cohere and Aleph Alpha aims to capitalize on the burgeoning sovereign AI market

-

Everything you need to know about OpenAI's new workspace agents

Everything you need to know about OpenAI's new workspace agentsNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

Anthropic says Claude Code can help streamline 'cost-prohibitive' COBOL modernization, but IBM says it's not that simple – 'decades of hardware-software integration cannot be replicated by moving code'

Anthropic says Claude Code can help streamline 'cost-prohibitive' COBOL modernization, but IBM says it's not that simple – 'decades of hardware-software integration cannot be replicated by moving code'News Research from Anthropic claims Claude Code can simplify modernization of COBOL systems

-

UK government launches industry 'ambassadors' scheme to champion software security improvements

UK government launches industry 'ambassadors' scheme to champion software security improvementsNews The Software Security Ambassadors scheme aims to boost software supply chains by helping organizations implement the Software Security Code of Practice.

-

So much for ‘trust but verify’: Nearly half of software developers don’t check AI-generated code – and 38% say it's because it takes longer than reviewing code produced by colleagues

So much for ‘trust but verify’: Nearly half of software developers don’t check AI-generated code – and 38% say it's because it takes longer than reviewing code produced by colleaguesNews A concerning number of developers are failing to check AI-generated code, exposing enterprises to huge security threats

-

‘1 engineer, 1 month, 1 million lines of code’: Microsoft wants to replace C and C++ code with Rust by 2030 – but a senior engineer insists the company has no plans on using AI to rewrite Windows source code

‘1 engineer, 1 month, 1 million lines of code’: Microsoft wants to replace C and C++ code with Rust by 2030 – but a senior engineer insists the company has no plans on using AI to rewrite Windows source codeNews Windows won’t be rewritten in Rust using AI, according to a senior Microsoft engineer, but the company still has bold plans for embracing the popular programming language

-

AWS says ‘frontier agents’ are here – and they’re going to transform software development

AWS says ‘frontier agents’ are here – and they’re going to transform software developmentNews A new class of AI agents promises days of autonomous work and added safety checks

-

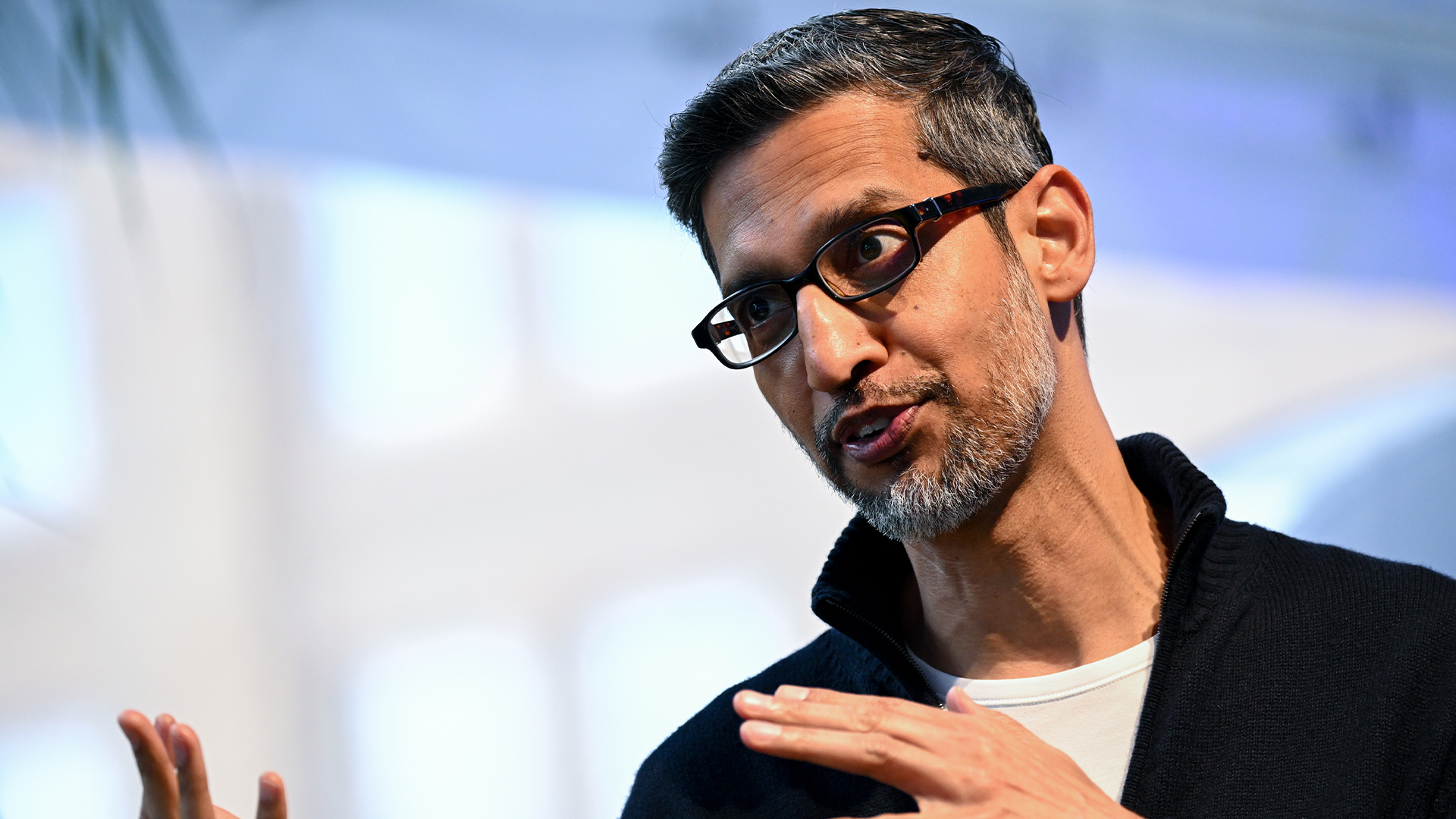

Google CEO Sundar Pichai thinks software development is 'exciting again' thanks to vibe coding — but developers might disagree

Google CEO Sundar Pichai thinks software development is 'exciting again' thanks to vibe coding — but developers might disagreeNews Google CEO Sundar Pichai claims software development has become “exciting again” since the rise of vibe coding, but some devs are still on the fence about using AI to code.

-

Google Brain founder Andrew Ng thinks everyone should learn programming with ‘vibe coding’ tools – industry experts say that’s probably a bad idea

Google Brain founder Andrew Ng thinks everyone should learn programming with ‘vibe coding’ tools – industry experts say that’s probably a bad ideaNews Vibe coding might help lower the barrier to entry for non-technical individuals, but users risk skipping vital learning curves, experts warn.

-

Anthropic’s new Claude Code web portal aims to make AI coding even more accessible

Anthropic’s new Claude Code web portal aims to make AI coding even more accessibleNews Claude Code for web runs entirely in a user’s browser of choice rather than in a command-line interface and can be connected directly to chosen GitHub repositories.