Presented by AMD

Data center efficiency should start at the processor level, report claims

Choosing a processor that can deliver the required computational work on fewer physical servers can immediately reduce a data center’s carbon footprint

Choosing processors that complete more work with fewer physical servers could help data centers reduce their overall power consumption and, ultimately, their carbon footprints.

That’s according to the Vanguard report from 451 Research, which also concluded that businesses stand a better chance of offsetting their carbon footprints simply by examining their on-prem and cloud technologies.

Data centers commonly measure energy efficiency with the power usage effectiveness (PUE) metric, which compares the total energy a facility consumes, including cooling and lighting, with the energy delivered to its IT equipment. Factors such as server room design feed into the result.
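PUE is conventionally defined as total facility energy divided by the energy delivered to IT equipment, so a value of 1.0 would mean every kilowatt-hour reaches the servers. The short sketch below shows that arithmetic; the function name and the monthly figures are hypothetical, chosen only for illustration.

```python
def power_usage_effectiveness(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Return PUE = total facility energy / IT equipment energy.

    Both arguments should cover the same metering period (hypothetical helper).
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("facility total cannot be below the IT load it includes")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical month: 1,500 MWh metered for the whole facility, 1,000 MWh at the IT equipment.
pue = power_usage_effectiveness(1_500_000, 1_000_000)

# Share of facility energy spent on overhead such as cooling and lighting.
overhead_share = 1 - 1 / pue
print(f"PUE = {pue:.2f}, overhead = {overhead_share:.0%}")  # prints: PUE = 1.50, overhead = 33%
```

Lowering PUE trims the overhead, but it does nothing about the IT load itself, which is why the processor choice discussed below matters as well.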

In 2019, US data centers consumed approximately 268TWh of energy, representing 6.3% of America’s total energy usage, according to 451 Research; that is more energy than the entire country of Mexico consumes.
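The two quoted figures together imply the size of the national total they are measured against. The calculation below simply back-solves that total from 268TWh and 6.3%; it is a consistency check on the arithmetic, not an independent estimate.

```python
# Figures as quoted from 451 Research for 2019.
data_center_twh = 268.0
share_of_us_total = 0.063  # 6.3%

# If 268 TWh is 6.3% of the national total, the total is 268 / 0.063.
implied_us_total_twh = data_center_twh / share_of_us_total
print(f"Implied US total: {implied_us_total_twh:,.0f} TWh")  # prints: Implied US total: 4,254 TWh
```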

These numbers continue to climb despite new government regulations and the introduction of corporate ‘green’ initiatives.

IT operations are a growing contributor to climate change, and many in the IT industry are now concerned about the environmental impact of their tech stacks.

451 Research’s Digital Pulse user survey, for example, found that nearly half of the IT decision-makers it polled said that their IT operations now account for most or all of their environmental impact.

The survey also found that modernizing core IT infrastructure and adopting public cloud services were the strategies customers most commonly used to meet their environmental objectives.

John Abbot, principal research analyst at 451 Research, noted in the report: “Simply driving up power consumption to deliver higher performance in new generations of CPUs is unsustainable.”

A potential solution lies in the processors themselves. Chip manufacturers are breaking new ground with smaller, more powerful, and more capable processors that build on the traditional x86-64 architecture.

New workload-specific accelerator chips are used as part of a heterogeneous computing architecture to complement general-purpose CPUs, offering new levels of performance and greater control over power draw.

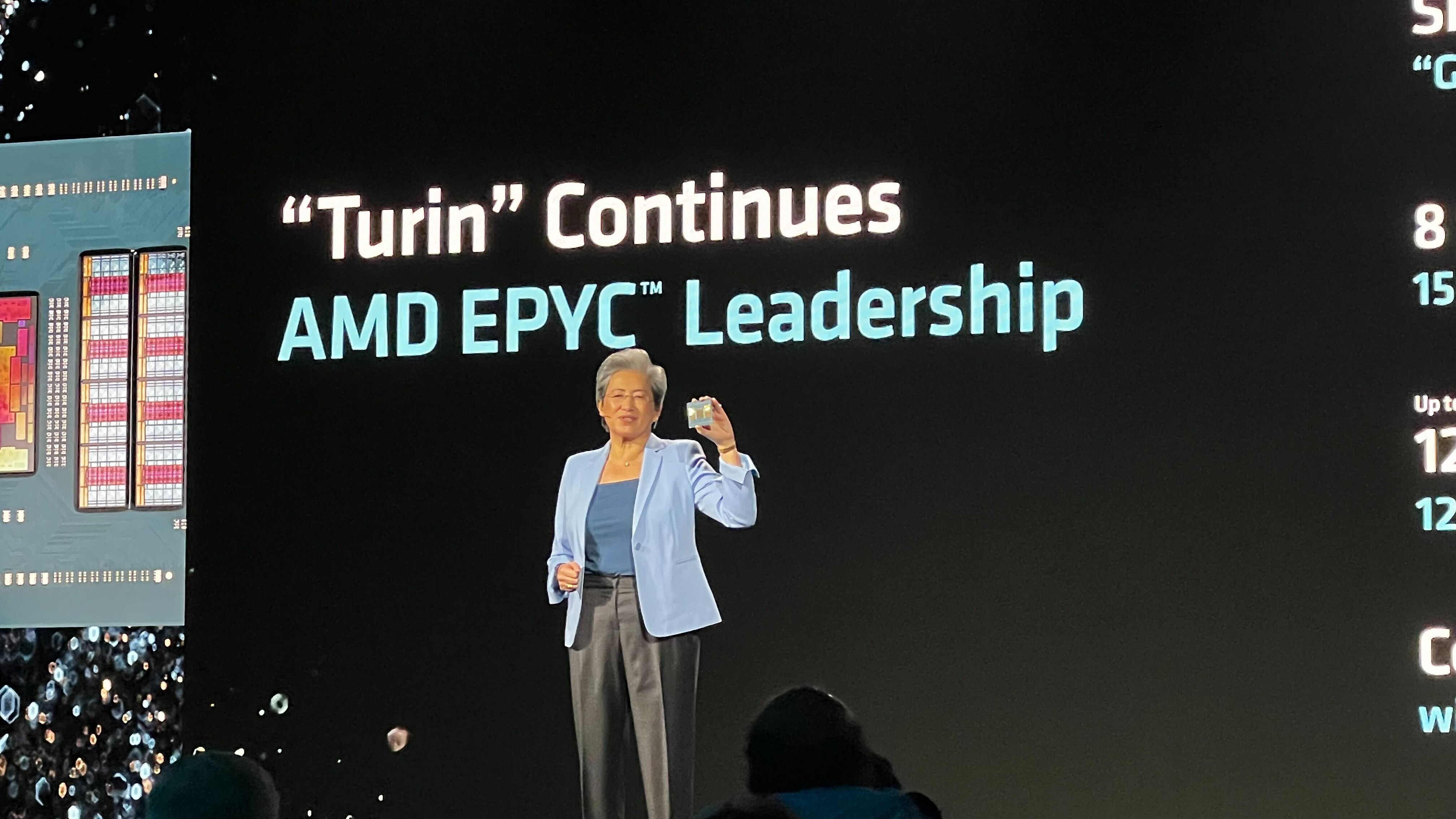

AMD is one of these companies; in 2020, the chipmaker said its goal was to deliver a 30x increase in energy efficiency by 2025 for AI training and high-performance computing applications running on accelerated compute nodes.
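Assuming “energy efficiency” here means useful work per unit of energy (the usual reading of such targets, though the article does not define it), a 30x gain cuts the energy needed for a fixed workload to one-thirtieth of the baseline. The sketch below works this through; the helper name and the 90,000kWh baseline job are hypothetical.

```python
def energy_after_gain(baseline_kwh: float, efficiency_multiplier: float) -> float:
    """Energy for the same work once work-per-kWh improves by efficiency_multiplier.

    Assumes efficiency = work / energy, so energy scales as 1 / multiplier.
    """
    if efficiency_multiplier <= 0:
        raise ValueError("efficiency multiplier must be positive")
    return baseline_kwh / efficiency_multiplier


# Hypothetical AI training job that needed 90,000 kWh on baseline hardware.
baseline = 90_000.0
after = energy_after_gain(baseline, 30)
reduction = 1 - after / baseline
print(f"{after:,.0f} kWh for the same job, a {reduction:.1%} reduction")
# prints: 3,000 kWh for the same job, a 96.7% reduction
```

Note that a 30x gain is a roughly 97% cut in energy per job, not a 30% one; total consumption only falls if the amount of work does not grow to absorb the savings.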

If the goal is achieved, billions of kilowatt-hours of electricity could be saved by 2025, according to 451 Research.

“While it takes some effort to leverage these CPU and workload accelerator combinations, the cost savings offered can make it worthwhile for enterprises to adopt them from a purely budgetary standpoint,” Abbot noted in the report.

Considering what technology sits at the heart of the data center – the processor – should be the logical first step, according to AMD.

Choosing a processor that can deliver the required level of computational work on fewer physical servers can immediately reduce a data center’s carbon footprint and help lower the facility’s overall power consumption.
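The consolidation argument is essentially arithmetic: if each server does more work, fewer servers cover the same workload, and the fleet can draw less power even when each individual server draws more. The sketch below illustrates this with entirely hypothetical throughput and wattage figures (not vendor data), and it ignores cooling overhead, utilization headroom, and embodied carbon.

```python
import math


def servers_needed(total_work_units: float, work_per_server: float) -> int:
    """Smallest whole number of servers whose combined capacity covers the workload."""
    return math.ceil(total_work_units / work_per_server)


def fleet_power_kw(server_count: int, watts_per_server: float) -> float:
    """IT power draw of the fleet in kilowatts."""
    return server_count * watts_per_server / 1000


# Hypothetical workload, in arbitrary units of work per second.
workload = 10_000

old_count = servers_needed(workload, work_per_server=100)  # older, less capable server
new_count = servers_needed(workload, work_per_server=250)  # denser, more capable server

old_kw = fleet_power_kw(old_count, watts_per_server=400)
new_kw = fleet_power_kw(new_count, watts_per_server=600)
print(f"{old_count} servers at {old_kw:.0f} kW vs {new_count} servers at {new_kw:.0f} kW")
# prints: 100 servers at 40 kW vs 40 servers at 24 kW
```

Multiplying each fleet’s IT power by the facility’s PUE would give a rough facility-level comparison, since cooling and other overhead scale with the IT load.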

ITPro is a global business technology website providing the latest news, analysis, and business insight for IT decision-makers. Whether it's cyber security, cloud computing, IT infrastructure, or business strategy, we aim to equip leaders with the data they need to make informed IT investments.
