What is an artificial neural network?

A look at the role of neural nodes and how deep learning is used to create algorithms

At the heart of many artificial intelligence computer systems are artificial neural networks (ANNs). These networks are inspired by the biological arrangements found in the human brain.

Using a structure of connected "neurons", these networks can recognise numerical patterns by 'learning' how to process certain stimuli, and can then make assessments without human involvement.

One practical instance of this is the use of an ANN to identify objects in images. In a system built to identify images of cats, an ANN is trained on a data set of images labelled “cat”, which serves as a reference point for any later analysis. Just as people learn to recognise a cat from distinctive features, such as a tail or fur, so too does an ANN, by breaking each image down into its component parts, such as colours and shapes.

In practical terms, a neural network offers a sorting and classification level that sits on top of your managed data, aiding the clustering and grouping of data based on resemblances. It's possible to produce complex spam filters, algorithms to find fraudulent behaviour and customer relationship tools that precisely measure mood, all using an artificial neural network.

How an artificial neural network works

ANNs draw inspiration from the neurological organization of the human brain. They are constructed from neuron-like computational nodes that communicate with each other along channels, much as neurons do across synapses. This means the output of one computational node will affect the processing of another.

Neural networks represented an enormous leap in the development of artificial intelligence, which had until then relied on pre-defined processes and regular human intervention to produce the desired outcome. An ANN allows the analytical load to be spread across a net of several interconnected layers, each containing interconnected nodes. As information is processed and contextualised, it's passed along to the next node, and down through the layers. The idea is to allow additional contextual information to be drip-fed into the network to inform processing at every stage.

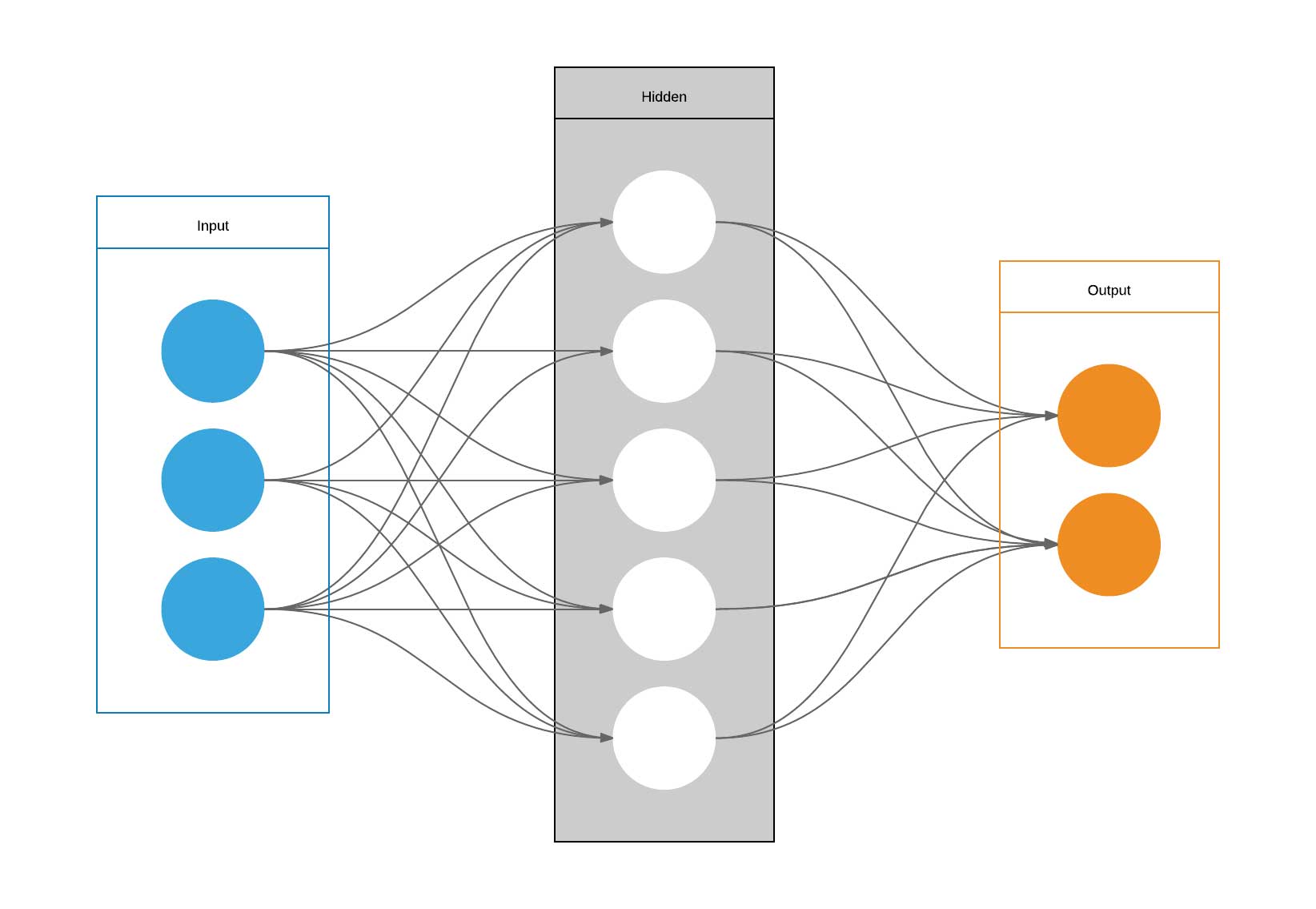

A basic structure of a single 'hidden' layer neural network

Much like the structure of a fishing net, a single layer of a neural network connects processing nodes together using strands. The vast number of connections enables enhanced communication between these nodes, increasing accuracy and data processing throughput.

ANNs will then pile a number of these layers on top of each other to analyse data, creating an input and output flow of data from the first layer to the last. Although the number of layers will vary depending on the nature of the ANN and its task, the idea is to pass data from one layer to another, with additional contextual information being added as it goes. This deviates slightly from the human brain, which is connected using a 3D matrix, rather than a series of layers.

Like an organic brain, nodes 'fire' across an ANN when they receive specific stimuli, passing the signal over to another node. However, in the case of ANNs, each input signal is defined as a real number, and a node's output is computed from the sum of its various inputs.

The contribution of each input depends on its weighting, which serves to increase or decrease the importance placed on that input for the task being performed. In the simplest model, the goal is to take an arbitrary number of binary inputs and translate them into a single binary output.
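One common way to write this down is sketched below. The notation is introduced here for illustration and does not come from the article: $x_i$ are the inputs, $w_i$ their weights, and $\theta$ a firing threshold for a single node.

$$
y =
\begin{cases}
1 & \text{if } \sum_{i=1}^{n} w_i x_i \ge \theta \\
0 & \text{otherwise}
\end{cases}
$$

Whether the comparison is $\ge$ or $>$ is a convention; $\ge$ is assumed here.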

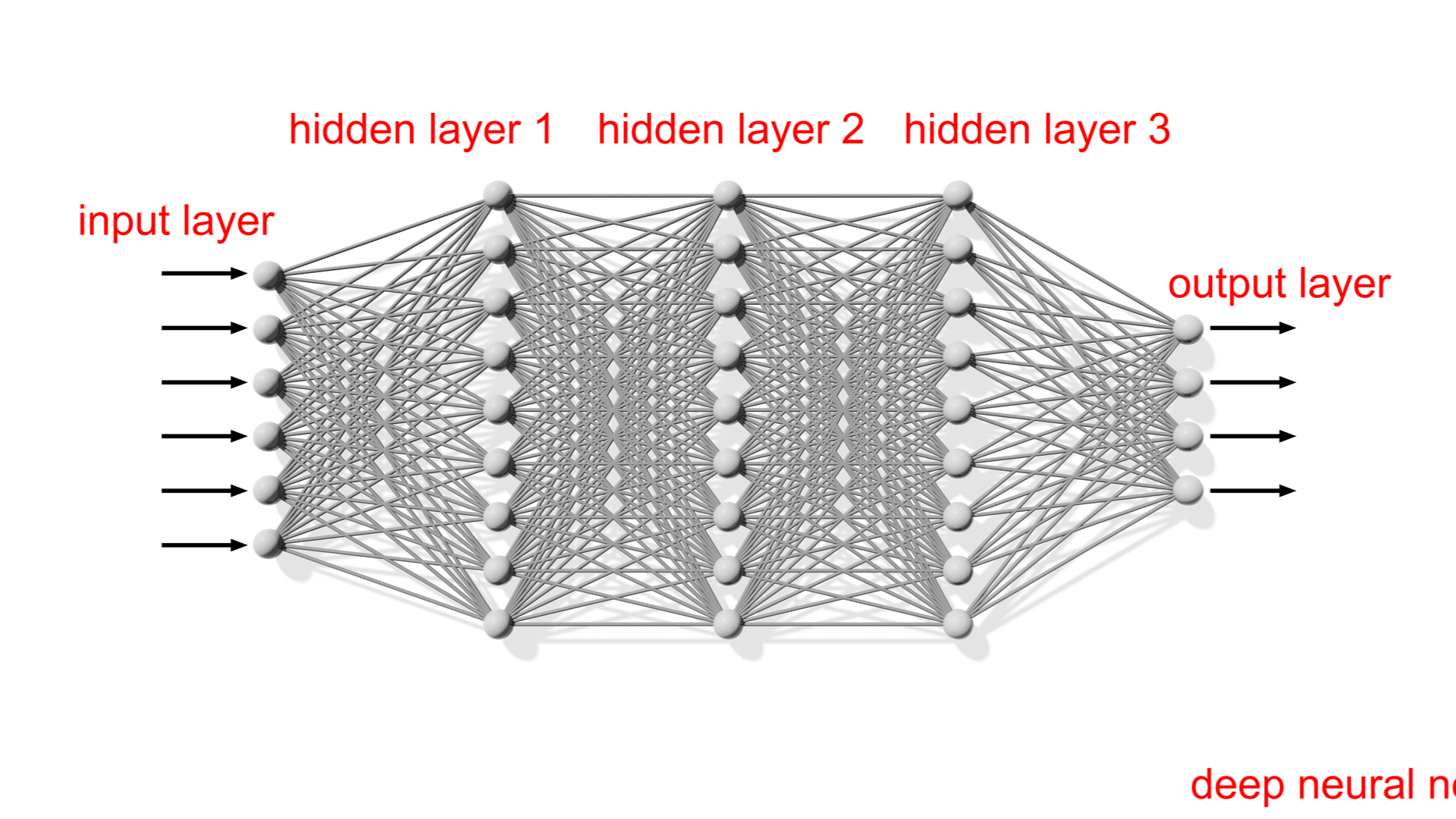

A more complex neural network, increasing the sophistication of its processing

Earlier models of neural networks used shallow structures, with only an input layer and an output layer. Modern systems comprise an input layer, where data first enters the network, multiple 'hidden' layers, which add complexity to the analysis, and an output layer.

This is where the term 'deep learning' comes from: the 'deep' part specifically refers to any neural network that uses more than one 'hidden' layer.
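To make the layered flow concrete, here is a minimal Python sketch of data passing from an input layer, through a hidden layer, to an output layer. The function names and the hand-picked weights are hypothetical, chosen only so that the small network computes an exclusive-or of two binary inputs. For brevity it uses a single hidden layer; a deep network would simply add more entries to the list of layers.

```python
def step(value, threshold):
    """Fire (1) if the value reaches the threshold, otherwise stay silent (0)."""
    return 1 if value >= threshold else 0


def layer_forward(inputs, node_weights, node_thresholds):
    """Compute one layer: every node takes a weighted sum of all inputs."""
    return [
        step(sum(x * w for x, w in zip(inputs, weights)), threshold)
        for weights, threshold in zip(node_weights, node_thresholds)
    ]


def network_forward(inputs, layers):
    """Pass the data through each layer in turn, first to last."""
    activations = inputs
    for node_weights, node_thresholds in layers:
        activations = layer_forward(activations, node_weights, node_thresholds)
    return activations


# Hand-picked (not learned) values, assumed purely for illustration.
xor_layers = [
    # Hidden layer: first node acts as OR, second node acts as AND.
    ([[1, 1], [1, 1]], [1, 2]),
    # Output layer: fires when OR is on but AND is off.
    ([[1, -1]], [1]),
]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, network_forward([a, b], xor_layers))
```

No single threshold node can compute exclusive-or on its own, which is one reason hidden layers matter.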

The evening party example

To explain how a neural network works in practice, let's boil it down to a real-world example.

Imagine you have been invited to a party and you are trying to decide whether to go. It's highly likely that you are weighing up pros and cons and pulling a variety of factors into the decision process. For the sake of this example, we'll pick just three – 'will my friends be going?', 'is the party easy to get to?', 'is the weather going to be good?'

It's possible to model this process using an artificial neural network by translating these considerations into binary numbers. For example, we could assign a binary value to 'weather', namely '1' for clear weather and '0' for severe weather. The same format will be repeated for each deciding factor.

Neural threshold

However, it's not enough to just assign values, as this doesn't help us arrive at a decision. For that, we would need to define a threshold – that is, the point at which the number of positive factors outweighs the number of negative factors.

Based on the binary values, a suitable threshold could be '2' – in other words, you would need two factors to return a '1' before deciding to attend the party. If your friends were to attend the party ('1') and the weather was good ('1'), this would be enough to pass the threshold.

If the weather was bad ('0'), and the party was difficult to get to ('0'), this would not meet the threshold and you would decide against attending the party, even if your friends were to attend ('1').
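The unweighted version of this decision can be sketched in a few lines of Python. The function and parameter names are hypothetical, and treating "meets the threshold" as greater-than-or-equal is an assumption.

```python
def decide_to_attend(friends_going, easy_to_reach, good_weather, threshold=2):
    """Each factor is 1 (positive) or 0 (negative); attend if enough are positive."""
    positive_factors = friends_going + easy_to_reach + good_weather
    return positive_factors >= threshold


# Friends are going and the weather is good: two positives reach the threshold.
print(decide_to_attend(friends_going=1, easy_to_reach=0, good_weather=1))

# Only the friends factor is positive: one positive falls short.
print(decide_to_attend(friends_going=1, easy_to_reach=0, good_weather=0))
```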

Neural weighting

Admittedly, that is a very basic example of the fundamentals of a neural network, but hopefully, it helps to highlight the idea of values and thresholds. However, real decision-making is far more complex than this example, and it's often the case that one factor will be more influential than another.

To create this variation, we can use 'neural weighting' – this dictates how important a factor's binary value is in relation to other factors by multiplying it by its weighting.

While each of the considerations in our example could sway you one way or another, it's likely that you will place greater importance on one or two of the factors. If you are entirely put off by the idea of leaving the house during a heavy downpour, then the inclement weather will outweigh the other two considerations. In this example, we could place greater importance on the weather value by giving it a higher weighting:

- Weather: weight of 5

- Friends: weight of 2

- Distance: weight of 2

If we imagine that the threshold has now been set to 6, poor weather (a 0 value) would prevent the rest of the inputs from reaching the desired threshold, since friends and distance together contribute at most 4. The node would therefore not 'fire' (you would decide against going to the party).
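The weighted decision can be sketched in Python using the weights of 5, 2 and 2 and the threshold of 6 from the example. The names below are hypothetical, and firing when the weighted sum is greater than or equal to the threshold is an assumption.

```python
WEIGHTS = {"weather": 5, "friends": 2, "distance": 2}
THRESHOLD = 6


def weighted_sum(factors, weights=WEIGHTS):
    """Multiply each binary factor by its weight and add up the results."""
    return sum(weights[name] * value for name, value in factors.items())


def node_fires(factors, weights=WEIGHTS, threshold=THRESHOLD):
    """The node fires (you go to the party) if the weighted sum reaches the threshold."""
    return weighted_sum(factors, weights) >= threshold


# Bad weather: friends and distance add up to 4, short of 6.
print(node_fires({"weather": 0, "friends": 1, "distance": 1}))

# Good weather plus friends attending: 5 + 2 = 7, which reaches 6.
print(node_fires({"weather": 1, "friends": 1, "distance": 0}))
```

With these particular numbers, good weather alone (a weighted sum of 5) is still not enough: at least one other factor must also be positive.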

Again, it's a simple example, but it provides an overview of how decisions are made based on the weight of evidence provided. If you extrapolate this to, say, an image recognition system, the various considerations of whether to attend a party (the inputs) become the broken-down characteristics of a given image, whether that's colour, size, or shape. A system trained to identify a dog, for example, may give greater weighting to shapes or colour.

When a neural network begins training, the weights and thresholds are initially set to random values. These are then continually adjusted as training data is passed through the network until a consistent output is achieved.
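As a rough illustration of that adjustment loop, the sketch below trains a single node with the classic perceptron update rule. This is one simple training method chosen for illustration, not necessarily what any particular system uses. The training data, function names, learning rate and random seed are all assumptions: the labels are generated from the party example's weighted rule (weights 5, 2, 2 and a threshold of 6).

```python
import itertools
import random


def predict(inputs, weights, threshold):
    """Fire (1) if the weighted sum of the inputs reaches the threshold."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    return 1 if weighted_sum >= threshold else 0


def train(examples, learning_rate=0.5, max_epochs=1000, seed=0):
    """Start from random values, then nudge them after every wrong answer."""
    rng = random.Random(seed)
    weights = [rng.uniform(-1, 1) for _ in examples[0][0]]
    threshold = rng.uniform(-1, 1)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, target in examples:
            error = target - predict(inputs, weights, threshold)
            if error != 0:
                mistakes += 1
                # Raise the weights of active inputs if the node should have
                # fired, lower them if it should not have.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                # Lowering the threshold makes the node fire more easily.
                threshold -= learning_rate * error
        if mistakes == 0:  # consistent output on every training example
            break
    return weights, threshold


# Every combination of (weather, friends, distance), labelled by the weighted rule.
examples = [
    (combo, 1 if 5 * combo[0] + 2 * combo[1] + 2 * combo[2] >= 6 else 0)
    for combo in itertools.product([0, 1], repeat=3)
]

weights, threshold = train(examples)
print(all(predict(x, weights, threshold) == y for x, y in examples))
```

The learned values will generally differ from 5, 2, 2 and 6; what matters is that they reproduce the same decisions on the training data.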

Benefits of neural networks

Neural networks are not completely limited by the data they feed on; you can go as far as saying they learn organically. And, as they can generalize from their inputs, they are highly valuable for pattern recognition systems.

This includes the ability to find shortcuts when tasked with answering computationally intensive questions and to infer relations between data points. The ANN doesn't need the data it works on to be unambiguously connected.

They can also be fault tolerant, in that they can respond to failures and continue operating despite the error. In effect, such a network can, to a degree, diagnose and work around its own faults.

If they are scaled over multiple systems, they can route around missing or non-communicative nodes. This is in addition to finding ways around non-functioning parts of the network, which they can do by recreating data by inference to determine where the non-functioning nodes are.

Perhaps the biggest advantage of deep neural networks is the capability to process and cluster unstructured data. This includes images, text, audio, and also numerical data.

Examples of neural networks

Artificial neural networks are an area undergoing immense advancement, particularly with newer developments in generative AI. From modeling complex systems to processing ever larger datasets, the evolution of neural networks has been rapid, especially in fields like image processing, speech recognition, the financial and medical sectors, and content creation.

Image processing is arguably the easiest of these to explain, as anyone with a modern smartphone will know. ANNs are used here for image classification and object recognition.

In the financial sector, ANNs can be used for credit scoring and stock market predictions. Investors and risk managers can use ANNs to process large volumes of financial data and find telling insights that can inform investment decisions. When it comes to credit scores, it's about data-driven assessments that improve the accuracy of default predictions. Both examples require high-quality data, and there is a black-box nature to these financial systems in that they tend to give results without revealing much about how they were reached.

Medical use cases for ANNs mostly deal with detection technologies, such as image recognition for MRI scans. With vast medical datasets, ANNs can enhance the accuracy of diagnostics and potentially catch diseases in their infancy.

ANNs are also helping to advance drug discovery. Here they are used to speed up the identification of potential drug candidates, and to help formulate treatment plans and even financial outlays.

Another area of growth for ANNs is content creation. However, there are a few issues here, particularly around the use of deep learning models to learn and then 'create' the work of an artist. Essentially this is the power to create the ideas, images, and sounds of someone without needing the actual person. Examples like DALL-E, which is a deep neural network trained on millions of images and volumes of text, can be used to create various artworks - though often it's a mishmash of real-life works.

Dale Walker is a contributor specializing in cybersecurity, data protection, and IT regulations. He was the former managing editor at ITPro, as well as its sibling sites CloudPro and ChannelPro. He spent a number of years reporting for ITPro from numerous domestic and international events, including IBM, Red Hat, Google, and has been a regular reporter for Microsoft's various yearly showcases, including Ignite.
