Facebook facing scrutiny amid claims it encourages spread of misinformation

Despite extensive efforts from Facebook to combat disinformation, Mozilla is just one company that demands more

Facebook is facing mounting pressure from health experts to combat the rise of anti-vaccination groups that are setting up shop on the social network's platform and spreading disinformation.

Known as 'anti-vaxxers', these campaigners unite en masse to promote often ill-informed claims in order to persuade others to join their movement, a movement that experts say Facebook is not doing enough to halt.

These groups are large and growing in number. One such group, called 'Stop Mandatory Vaccination', has more than 150,000 members, and reports say that hundreds of protesters appeared in Olympia, Washington last week to oppose a bill that would make it more difficult for families to opt out of vaccinations for their children.

The claims being made on these pages are controversial, to say the least. Some link vaccinations to autism diagnoses, while others claim that large quantities of vitamin C can cure the negative effects of vaccinations, despite the vaccines themselves being completely safe.

Many of the Facebook groups with large followings are members-only, with approval resting in the hands of the group admins.

Facebook is facing the ethical decision of whether to take action against these harmful groups and eliminate them from the platform, just as it has done in the past with Iranian and Russian-linked pages and groups.

Facebook's previous policy on page deletion left workarounds for admins who wished to continue spreading their message. If a page owner had more than one page and only one of them violated Facebook's rules, the offending page would be taken offline, leaving the other(s) untouched.

The problem with this is that page owners could simply migrate the same community over to the 'backup' page and continue as if nothing happened.

But that all changed last month, when Facebook published a blog post addressing changes to its policy. It said that it "may now also remove other Pages and Groups even if that specific Page or Group has not met the threshold to be unpublished on its own."

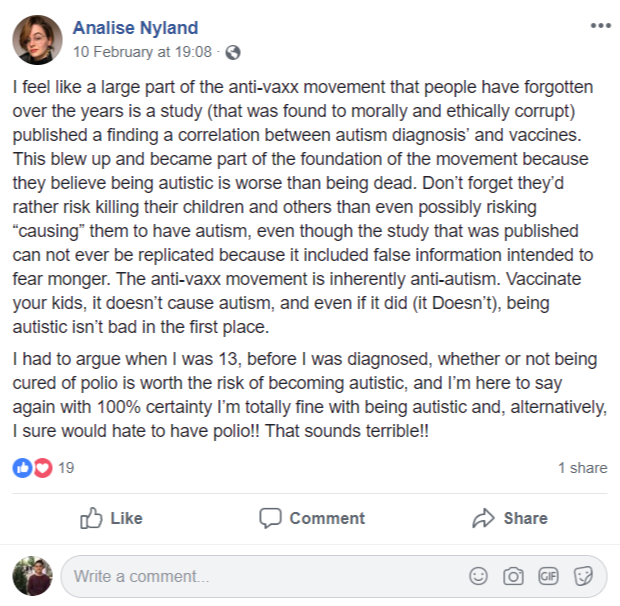

The effort to remove these pages for good is welcomed by those who are supposedly affected by the 'negative effects' of vaccines. One Facebook user posted that she would rather live with autism than die of polio.

Recently, Ethan Lindenberger, an Ohio teen, took to Reddit for advice after his mother wouldn't allow him to be vaccinated.

The post received thousands of interactions, and most commenters said that he had to be 18 years of age in order to get vaccinations for himself. Lindenberger did just that and has now had five vaccinations.

"If I get whooping cough I may be able to handle it because I'm older and I have a good immune system, but who's to say I don't cough on my two-year-old sister?" he asked when speaking to the BBC. "That's an extremely scary thought," he added.

The disinformation situation

Many different companies and bodies have hopped on the disinformation bandwagon, and the pressure has never been greater for companies, especially Facebook, to be more transparent and proactive in quelling the spread of dangerous misinformation.

The world's eyes have been on Facebook since the Cambridge Analytica scandal. One recent step forward was its announcement of five new partnerships ahead of the general election in India, to be held in May. The partnerships were made to bolster its fact-checking abilities and stop disinformation from swaying voter opinion.

The five partners are India Today Group, Vishvas.news, Factly, Newsmobile, and Fact Crescendo. All are internationally certified fact-checking networks, which will monitor and review news stories on Facebook for facts and accuracy.

In just the past two weeks, Facebook announced it has removed hundreds of pages engaged in coordinated inauthentic behaviour originating from countries such as Iran, Indonesia and Myanmar.

Google has also made strides in the effort to secure elections. It announced it will set its sights on disinformation campaigns and state-sponsored phishing attacks in an effort to prevent abuse of elections.

Measures will also be taken to promote genuine, informative content related to elections and actively combat the effects of disinformation.

These measures include adding a 'Fact Check' tag to news stories it indexes, which helps users verify the information they read in big news stories. Also, in response to a rise in scammers trying to make money from the growing popularity of online news, Google has prohibited websites in its ad network from advertising misrepresentative content.

Despite the moves in the right direction, others still aren't happy. Mozilla recently signed an open letter addressed to the European Commission, imploring it to compel Facebook to be more transparent and do more to fight disinformation on its platform.

While Mozilla says it appreciates "the considerable work that Facebook and others have done to fight disinformation on their platforms", it believes that more could be done.

The letter references Facebook blocking third parties from conducting analysis of ads on its platform, and argues that Facebook must "develop an open, functional API that can be used by any developer, researcher, or organisation to develop tools, critical insights, and research designed to educate and empower users to understand and therefore resist targeted disinformation campaigns."

Connor Jones has been at the forefront of global cyber security news coverage for the past few years, breaking developments on major stories such as LockBit’s ransomware attack on Royal Mail International, and many others. He has also made sporadic appearances on the ITPro Podcast discussing topics from home desk setups all the way to hacking systems using prosthetic limbs. He has a master’s degree in Magazine Journalism from the University of Sheffield, and has previously written for the likes of Red Bull Esports and UNILAD tech during his career that started in 2015.
