AWS re:Invent: All the big updates from the rapid-fire day-two keynote

AWS re:Invent has had no shortage of talking points so far

While the curtain may have closed on the day two keynote at AWS re:Invent, momentum at the annual flagship conference shows no sign of slowing.

AWS CEO Adam Selipsky began proceedings on Tuesday in the opening keynote, showcasing the cloud giant’s meteoric performance so far this year and its unrelenting pursuit of dominance in the generative AI space - and it certainly appears to be working.

A slew of new product announcements flowed throughout his enthusiastic two-hour keynote, including the launch of Amazon Q, the firm’s AI assistant, which could prove to be a game-changing move for AWS in the ongoing AI race.

Updates to Amazon S3, deeper ties with Nvidia, and more intuitive, security-focused features for Amazon CodeWhisperer made for a truly captivating day. And we were just hours into the conference.

Bedrock steals the show at AWS re:Invent

Day two at AWS re:Invent saw Swami Sivasubramanian, VP for data and AI at the cloud giant, take to the stage. Sivasubramanian’s keynote was almost too much to take in at times, giving attendees a series of rapid-fire product announcements spanning virtually every aspect of AWS operations.

Amazon Bedrock, the firm’s LLM framework which gives users access to a host of both in-house and third-party foundation models, saw two big announcements.

Anthropic’s Claude 2.1 foundation model, which launched last week, will now be available for customers using the platform. Claude 2.1 is a behemoth of a foundation model. With a 200k-token context window, it offers twice the capacity of the previous iteration, and more than three times that of GPT-4.

And it’s far more accurate. This latest model has halved the number of ‘hallucinations’, whereby a model presents false information as factually correct.

Meanwhile, Meta’s Llama 2 70B model will also be plugged into the platform. An equally impressive open source model, Llama 2 is specifically fine-tuned for chat use cases, Sivasubramanian told attendees.

Bedrock customers could soon become swamped with a plethora of tantalizing options, but this is far from a bad thing. The framework is fast becoming an unrivaled platform for organizations seeking to dip their toes into generative AI and unlock marked improvements to operational efficiency.

Amazon SageMaker announcements

In addition to Bedrock announcements, Sivasubramanian revealed a slew of new capabilities for Amazon SageMaker, the firm’s managed service that allows customers to build AI tools in a cost-effective manner.

The launch of SageMaker HyperPod will likely be an impactful addition to the AWS portfolio, offering users the ability to speed up model training by around 40% and significantly reduce both the strain and cost of training AI models.

Model training has proven a cumbersome, intensive process for organizations so far, and AWS’ attempts to reduce this burden could prove vital for customers.

Research from CCS Insight in October suggested that “prohibitive” deployment and training costs could even harm adoption rates in 2024.

"This is a big deal," Sivasubramanian told attendees.

This was complemented by the launch of SageMaker Clarify. The application allows customers to evaluate and compare the best models based on their unique business needs.

Throughout the first two days, AWS has been keen to emphasize the importance of experimentation at enterprises to help them identify the best models based on their unique requirements. Clarify could be critical for those still on the fence during those tentative early adoption phases.

A growing number of Titan models

New Titan foundation model launches topped off what was an action-packed keynote session on day two.

Amazon’s powerful in-house models - available via Bedrock - are joined by the new Amazon Titan Multimodal Embeddings model and the Titan Image Generator.

The first of these will help customers produce more accurate and contextually relevant search experiences for end users, Sivasubramanian said.

"This enables you to build richer multimodal search and recommendation options," he said. Customers are already using the foundation model, he added, noting that it has enabled some to unlock marked improvements in user search experiences.

"Companies like Opera are using Titan multimodal embeddings to enhance search experience for customers."

The Titan Image Generator appears perfect for organizations operating in the retail, media, or advertising spaces. This model can produce realistic images based on natural language prompts and will also include invisible watermarks to ensure transparency.

A brief run-through of the process for creating images with Titan Image Generator showed it to be a highly intuitive and easy-to-use platform.

Sivasubramanian said the model is specifically trained for a “large array of domains”, meaning that businesses spanning multiple industries will likely benefit.

ITPro is live on the ground in Las Vegas for AWS re:Invent 2023. To keep tabs on all of our coverage at the conference, subscribe to our newsletter or follow our daily live blog.

Ross Kelly is ITPro's News & Analysis Editor, responsible for leading the brand's news output and in-depth reporting on the latest stories from across the business technology landscape. Ross was previously a Staff Writer, during which time he developed a keen interest in cyber security, business leadership, and emerging technologies.

He graduated from Edinburgh Napier University in 2016 with a BA (Hons) in Journalism, and joined ITPro in 2022 after four years working in technology conference research.

For news pitches, you can contact Ross at ross.kelly@futurenet.com, or on Twitter and LinkedIn.

-

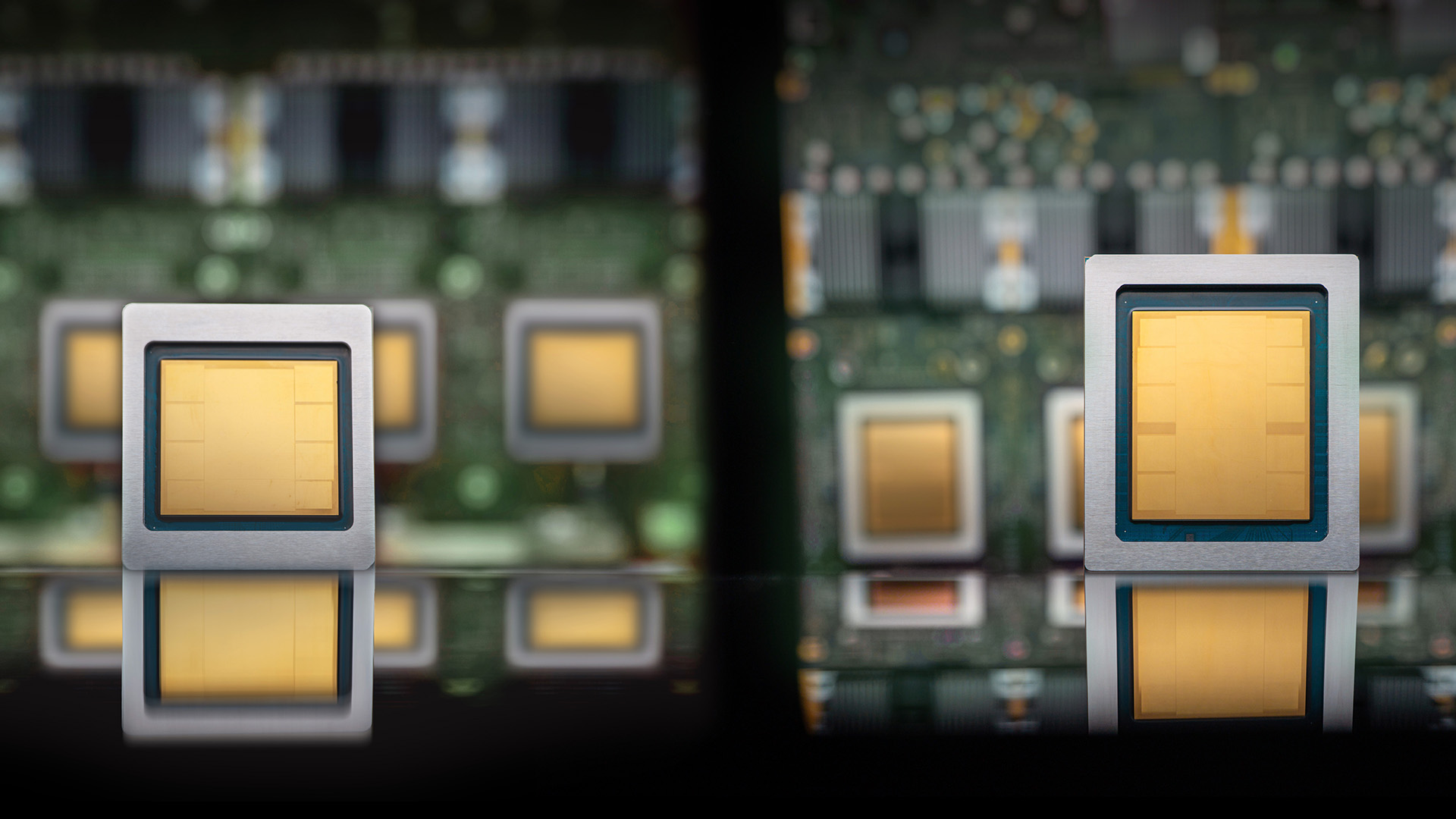

Google Cloud announces eighth-generation TPUs, boasting AI training and inference leaps

Google Cloud announces eighth-generation TPUs, boasting AI training and inference leapsNews Alongside chip improvements, Google Cloud has overhauled its data center fabric and file systems to support enterprise-scale AI deployment

-

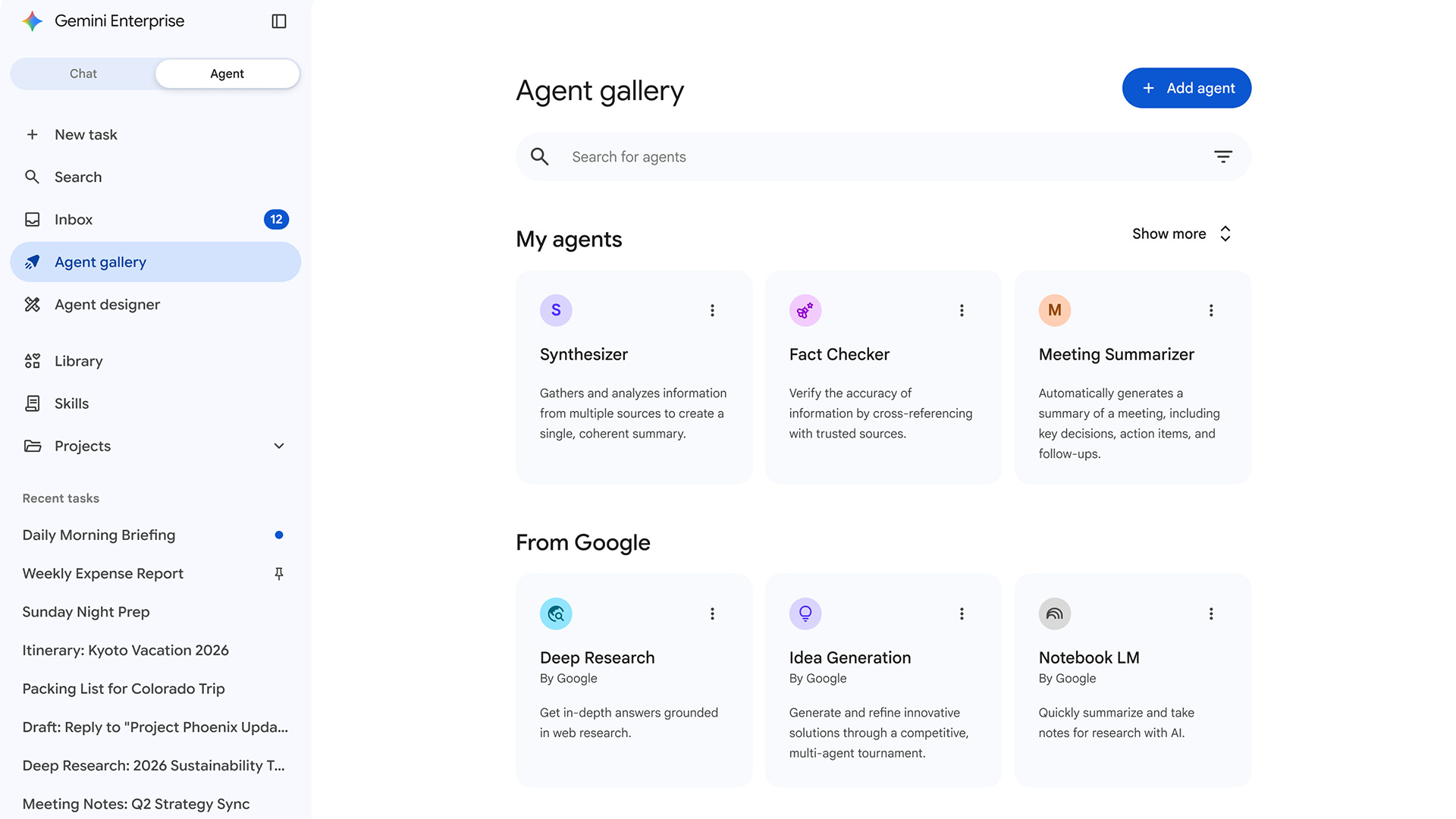

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise push

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise pushNews OpenAI and Amazon are both vying to offer customers DIY-style AI development services

-

Amazon’s rumored OpenAI investment points to a “lack of confidence” in Nova model range

Amazon’s rumored OpenAI investment points to a “lack of confidence” in Nova model rangeNews The hyperscaler is among a number of firms targeting investment in the company

-

Infosys teams up with AWS to fuse Amazon Q Developer with internal tools

Infosys teams up with AWS to fuse Amazon Q Developer with internal toolsNews Combining Infosys Topaz and Amazon Q Developer will enhance the company's internal operations and drive innovation for customers

-

AWS has dived headfirst into the agentic AI hype cycle, but old tricks will help it chart new waters

AWS has dived headfirst into the agentic AI hype cycle, but old tricks will help it chart new watersOpinion While AWS has jumped on the agentic AI hype train, its reputation as a no-nonsense, reliable cloud provider will pay dividends

-

Want to build your own frontier AI model? Amazon Nova Forge can help with that

Want to build your own frontier AI model? Amazon Nova Forge can help with thatNews The new service aims to lower bar for enterprises without the financial resources to build in-house frontier models

-

AWS CEO Matt Garman says AI agents will have 'as much impact on your business as the internet or cloud'

AWS CEO Matt Garman says AI agents will have 'as much impact on your business as the internet or cloud'News Garman told attendees at AWS re:Invent that AI agents represent a paradigm shift in the trajectory of AI and will finally unlock returns on investment for enterprises.

-

AWS targets IT modernization gains with new agentic AI features in Transform

AWS targets IT modernization gains with new agentic AI features in TransformNews New custom agents aim to speed up legacy code modernization and mainframe overhauls

-

Moving generative AI from proof of concept to production: a strategic guide for public sector success

Moving generative AI from proof of concept to production: a strategic guide for public sector successGenerative AI can transform the public sector but not without concrete plans for adoption and modernized infrastructure