Gartner says 40% of enterprises will experience ‘shadow AI’ breaches by 2030 — educating staff is the key to avoiding disaster

Staff need to be educated on the risks of shadow AI to prevent costly breaches

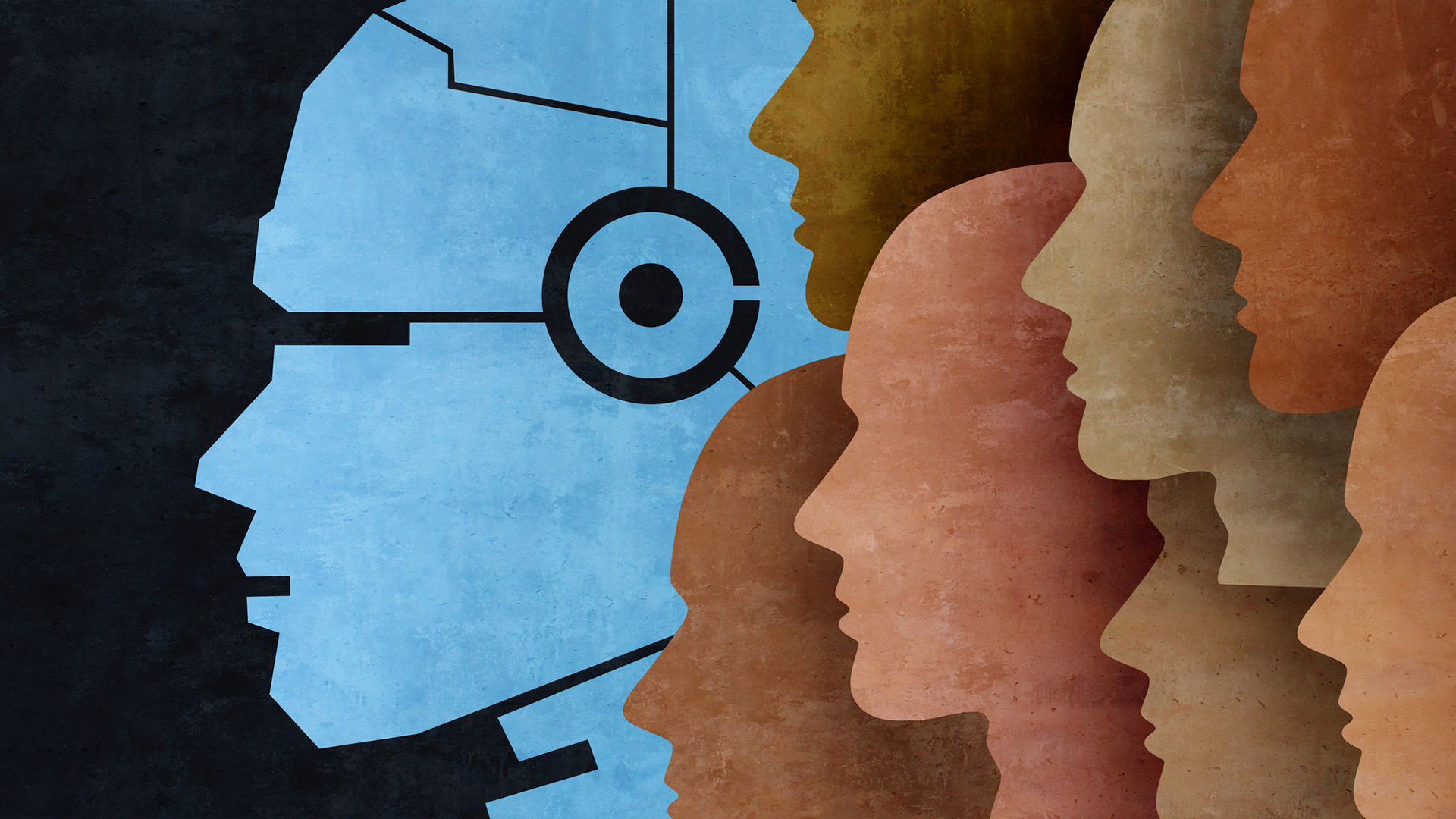

Four in ten enterprises could face serious security or compliance-related incidents as a result of shadow AI by 2030, prompting calls for more robust governance practices.

Analysis from Gartner shows 40% of businesses could fall foul of unauthorized AI usage as employees continue to use tools not monitored or cleared by security teams.

The findings from Gartner come in the wake of a survey of cybersecurity leaders which underlined growing concerns about the rise of shadow AI. More than two-thirds (69%) of respondents said their organization either suspects – or has evidence to prove – that employees are using prohibited tools.

These tools, the consultancy said, can increase the risk of IP loss and data exposure, as well as causing other security and compliance issues.

Gartner said the trend will require a concerted effort to educate staff on the use of these tools, clearer guidelines, and more detailed monitoring.

“To address these risks, CIOs should define clear enterprise-wide policies for AI tool usage, conduct regular audits for shadow AI activity and incorporate GenAI risk evaluation into their SaaS assessment processes,” said Arun Chandrasekaran, distinguished VP analyst at Gartner.

How to tackle shadow AI

The Gartner report is the latest in a string of industry studies warning about the use of unauthorized AI solutions.

A recent Microsoft study, for example, found nearly three-quarters (71%) of UK-based workers admitted to using shadow AI tools rather than those offered by their employer.

Notably, the report found that 22% of workers had used unauthorized tools for risky finance-related tasks, placing their employer at huge risk.

Guidance from the British Computer Society (BCS) on shadow AI aligns closely with the advice from Gartner. The BCS said organizations should adopt a comprehensive approach to tackling the problem, one that combines policy development, employee education, and technological oversight.

Policies should cover all aspects of AI use, from data input to output, and be flexible enough to respond to advancements in AI technology and regulatory changes.

Similarly, reviews should be carried out regularly. Blacklists of websites and tools that organizations don't want their employees to use can also help, along with continuous monitoring.

Emma Woollacott is a freelance journalist writing for publications including the BBC, Private Eye, Forbes, Raconteur and specialist technology titles.
