Microsoft joins competitors in handing over AI models for advanced testing

US and UK government agencies will evaluate the firm's frontier models, along with those from Google and xAI

Microsoft, Google, and xAI have agreed to hand over their AI tools to the US Center for AI Standards and Innovation (CAISI) and the UK's AI Security Institute (AISI) for pre-deployment testing.

The agencies will evaluate the firms' frontier models, assess their safeguards, and help mitigate national security and large-scale public safety risks, Microsoft said.

"Well-constructed tests help us understand whether our systems are working as intended and delivering the benefits they are designed to provide. Testing also helps us stay ahead of risks, such as AI-driven cyber attacks and other criminal misuses of AI systems, that can emerge once advanced AI systems are deployed in the world," said Natasha Crampton, Microsoft’s chief responsible AI officer.

"While Microsoft regularly undertakes many types of AI testing on its own, testing for national security and large-scale public safety risks necessarily must be a collaborative endeavor with governments. This type of testing depends on deep technical, scientific, and national security expertise that is uniquely held by institutions like CAISI in the US and AISI in the UK and the government agencies they work with."

In the US, Microsoft and the National Institute of Standards and Technology (NIST) will collaborate with CAISI on improving methodologies for adversarial assessments.

This work involves probing AI systems for unexpected behaviors, misuse pathways, and failure modes. It also includes developing more systematic and reproducible approaches to evaluation, such as shared frameworks, datasets, and workflows for assessing safety, security, and robustness risks in advanced AI systems.

“Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications,” said CAISI director Chris Fall. “These expanded industry collaborations help us scale our work in the public interest at a critical moment.”

Microsoft strikes agreement with UK researchers

In the UK, Microsoft will collaborate with AISI on research related to frontier safety and security, including ways of evaluating high-risk capabilities and the effectiveness of the safeguards used to address them.

"The partnership will also include research into societal resilience, examining how conversational AI systems interact with users in sensitive contexts," said AISI.

"As AI systems become increasingly capable, sustained two-way collaboration between government and companies developing and deploying frontier AI is essential to advance our joint understanding of large-scale risks to public safety and national security."

Microsoft said future plans include collaborating with other AI institutes around the world, sharing priorities and methodologies for testing through the International Network for AI Measurement, Evaluation and Science.

The company is also working with the Frontier Model Forum (FMF), an initiative dedicated to advancing the science and practice of frontier AI safety and security, to support independent research and promote transparency around risk mitigation strategies.

It is also contributing to MLCommons, a multistakeholder non-profit that develops and operationalizes testing tools such as AILuminate, a family of safety and security benchmarks.

"As AI capabilities advance, so too must the rigor of the testing and safeguards that underpin them. We will apply what we learn from these partnerships directly into how we design, test, and deploy AI systems, ensuring that progress in evaluation science translates into safer, more secure products for our customers," said Crampton.

"As these partnerships progress, we will share what we learn and look for opportunities to apply insights and best practices to AI testing more broadly."

Emma Woollacott is a freelance journalist writing for publications including the BBC, Private Eye, Forbes, Raconteur and specialist technology titles.

-

Panasonic Toughbook marks 30th year with new European partner program

Panasonic Toughbook marks 30th year with new European partner programNews The revamped initiative introduces a new deal registration system, enhanced training programs, and fresh growth incentives

-

Enterprises keep cutting staff for AI — and they keep regretting it

Enterprises keep cutting staff for AI — and they keep regretting itNews Gartner research shows many companies are reducing headcount due to AI, but it’s not all it’s cut out to be

-

AI adoption is accelerating in the UK, but ‘trust is not keeping pace’

AI adoption is accelerating in the UK, but ‘trust is not keeping pace’News Organizations need to do more to reassure customers over governance

-

Google is building its own OpenClaw alternative — Remy ‘elevates the Gemini app into a true assistant’

Google is building its own OpenClaw alternative — Remy ‘elevates the Gemini app into a true assistant’News The OpenClaw-style agent, dubbed ‘Remy’, is reportedly being tested by developers internally

-

The AI operations gap is reshaping the Microsoft channel

The AI operations gap is reshaping the Microsoft channelHow are AI advancements shaping the moves channel partners are making and need to make going forward?

-

Liz Kendall: The UK is in prime position to become a global leader in AI — but greater tech industry support is needed to avoid falling behind

Liz Kendall: The UK is in prime position to become a global leader in AI — but greater tech industry support is needed to avoid falling behindNews Tech secretary Liz Kendall has pledged greater investment in the chip and semiconductor technologies that underpin AI

-

Accenture has been trialling Microsoft Copilot since 2023 – now it’s rolling out the AI tool to all 743,000 staff

Accenture has been trialling Microsoft Copilot since 2023 – now it’s rolling out the AI tool to all 743,000 staffNews Accenture will roll out Microsoft Copilot to nearly three quarters of a million employees after years of testing

-

UK firms accelerate ‘sovereign AI’ plans amid concerns over dependence on overseas tech

UK firms accelerate ‘sovereign AI’ plans amid concerns over dependence on overseas techNews A Red Hat report shows firms are prioritizing sovereign AI over fears that foreign providers could restrict access

-

UK organizations are failing to move past basic AI use-cases

UK organizations are failing to move past basic AI use-casesNews Businesses in the UK are ramping up AI adoption, but they’re falling at key hurdles

-

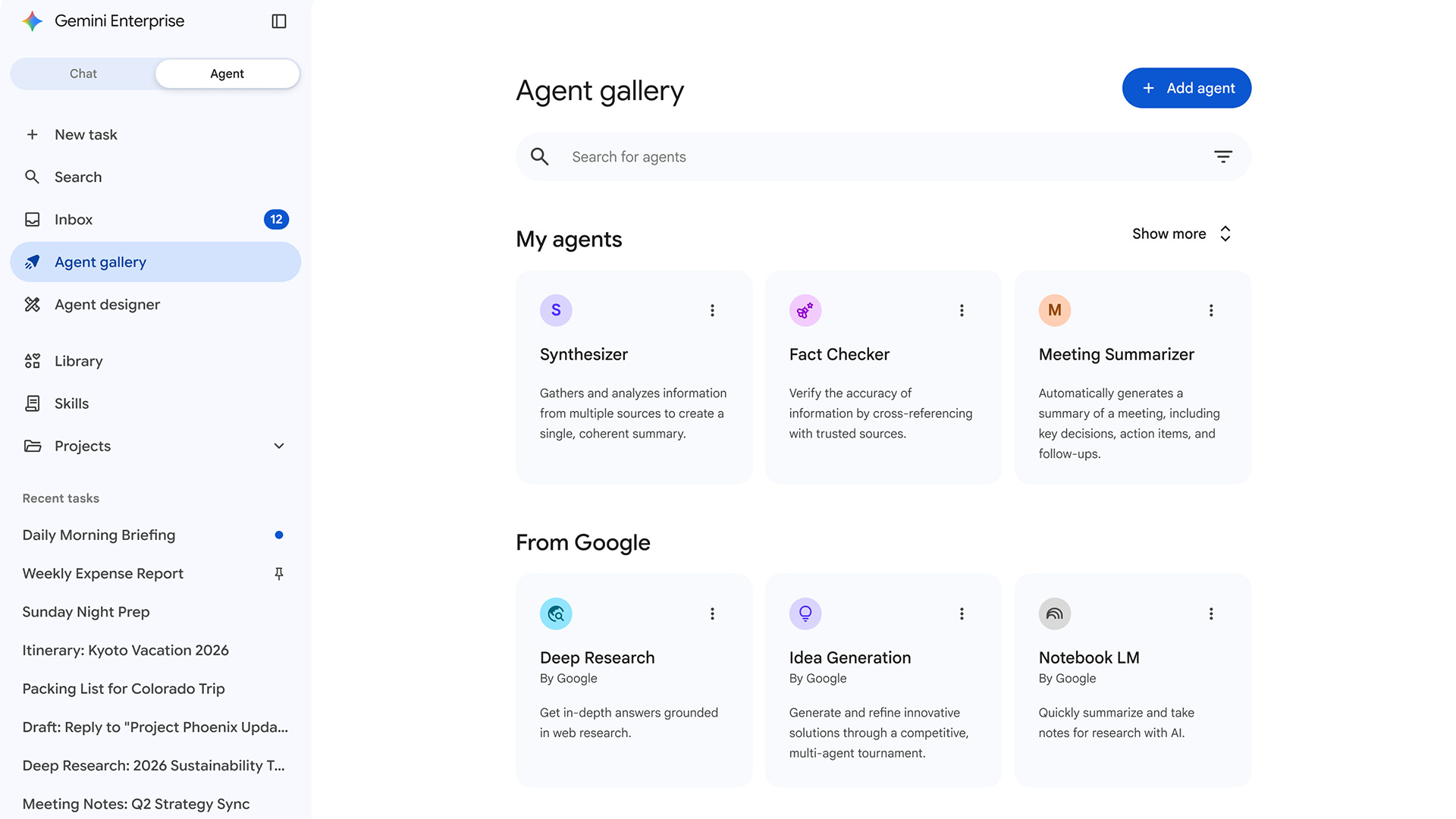

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents