Some of the most popular open weight AI models show ‘profound susceptibility’ to jailbreak techniques

Open weight AI models from Meta, OpenAI, Google, and Mistral all showed serious flaws

A host of leading open weight AI models contain serious security vulnerabilities, according to researchers at Cisco.

In a new study, researchers found that these models, which are publicly available and can be downloaded and modified by users to suit their own needs, displayed “profound susceptibility to adversarial manipulation”.

Cisco evaluated models by a range of firms including:

- Alibaba (Qwen3-32B)

- DeepSeek (v3.1)

- Google (Gemma-3-1B-IT)

- Meta (Llama 3.3-70B-Instruct)

- Microsoft (Phi-4)

- OpenAI (GPT-OSS-20b)

- Mistral (Large-2)

All of the aforementioned models were put through their paces with Cisco’s AI Validation tool, which is used to assess model safety and probe for potential security vulnerabilities.

Researchers found that, for all models, susceptibility to “multi-turn jailbreak attacks” was a key recurring issue. This is a method whereby an individual can essentially force a model to produce prohibited content.

The attacker achieves this with a series of specially crafted instructions that gradually manipulate the model’s behavior over the course of a conversation. It is a more laborious process than “single-turn” techniques, which rely on one effective malicious prompt.
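The structural difference between the two approaches can be shown with a minimal Python sketch. This is an illustration only, not Cisco’s AI Validation tool or the study’s methodology: the function names, the message format, and the `echo_model` stub are all assumptions, and the prompts are harmless placeholders.

```python
from typing import Callable, Dict, List

# Hypothetical message format: {"role": "user" | "assistant", "content": str}
Message = Dict[str, str]
# Hypothetical model interface: takes the full history, returns a reply.
ModelFn = Callable[[List[Message]], str]


def run_single_turn(model: ModelFn, prompt: str) -> List[Message]:
    """Send one prompt with no prior context and record the reply."""
    history: List[Message] = [{"role": "user", "content": prompt}]
    history.append({"role": "assistant", "content": model(history)})
    return history


def run_multi_turn(model: ModelFn, prompts: List[str]) -> List[Message]:
    """Send prompts one at a time; each reply is conditioned on everything so far."""
    history: List[Message] = []
    for prompt in prompts:
        history.append({"role": "user", "content": prompt})
        history.append({"role": "assistant", "content": model(history)})
    return history


def echo_model(history: List[Message]) -> str:
    """Stub standing in for a real model: reports how many user turns it has seen."""
    user_turns = sum(1 for message in history if message["role"] == "user")
    return f"reply after {user_turns} user turn(s)"


if __name__ == "__main__":
    print(run_single_turn(echo_model, "placeholder prompt")[-1]["content"])
    print(run_multi_turn(echo_model, ["step 1", "step 2", "step 3"])[-1]["content"])
```

The point of the sketch is that in the multi-turn case the model sees the whole accumulated conversation on every call, which is what gives an attacker room to steer it gradually.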

Multi-turn jailbreak techniques have been observed in the wild before, particularly with the use of the Skeleton Key method, which allowed hackers to convince an AI model to produce instructions for making a Molotov cocktail.


Multi-turn success rates varied widely between models, the study noted. Researchers recorded a 25.86% success rate against Google’s Gemma-3-1B-IT model, for example, compared with 92.78% against Mistral Large-2.

Researchers also recorded the highest success rate for single-turn attack methods with both these models.
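For readers unfamiliar with the metric, a success rate of this kind is typically the share of attack attempts that produced prohibited output. The Python sketch below shows that arithmetic under stated assumptions: the `attack_success_rate` helper is hypothetical, and the outcome lists are invented toy data, not figures from the Cisco study.

```python
from typing import Sequence


def attack_success_rate(outcomes: Sequence[bool]) -> float:
    """Return the percentage of attempts marked successful (True)."""
    if not outcomes:
        raise ValueError("at least one attempt is required")
    return 100.0 * sum(outcomes) / len(outcomes)


# Invented toy outcomes for one hypothetical model (True = attack succeeded).
single_turn_outcomes = [True, False, False, False]
multi_turn_outcomes = [True, True, True, False]

single_rate = attack_success_rate(single_turn_outcomes)
multi_rate = attack_success_rate(multi_turn_outcomes)

# The gap between the two rates indicates how much weaker a model's
# defences are over a sustained conversation than against a single prompt.
gap = multi_rate - single_rate

if __name__ == "__main__":
    print(f"single-turn: {single_rate:.2f}%")
    print(f"multi-turn:  {multi_rate:.2f}%")
    print(f"gap:         {gap:.2f} percentage points")
```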

Different strokes for different folks

The variation in success rates reflects how these models are designed and typically used, researchers noted. Two factors are key: alignment and capability.

'Alignment' refers to how closely an AI model acts in line with human intentions and values. 'Capability', meanwhile, refers to the model’s ability to perform a specific task.

For example, models such as Meta’s Llama range, which place a lower focus on alignment, showed the highest susceptibility to multi-turn attack methods.

Researchers noted that this is because Meta made a conscious decision to place developers “in the driver seat” in terms of tailoring the model’s safety mechanisms based on individual use-cases.

“Models that focused heavily on alignment (e.g., Google Gemma-3-1B-IT) did demonstrate a more balanced profile between single- and multi-turn strategies deployed against it, indicating a focus on ‘rigorous safety protocols’ and ‘low risk level’ for misuse,” the study said.

AI model flaws have real-world implications

Researchers warned that flaws contained in these models could have real-world ramifications, particularly with regard to data protection and privacy.

“This could translate into real-world threats, including risks of sensitive data exfiltration, content manipulation leading to compromise of integrity of data and information, ethical breaches through biased outputs, and even operational disruptions in integrated systems like chatbots or decision-support tools,” the study noted.

Notably, in enterprise settings, they warned these vulnerabilities could “enable unauthorized access to proprietary information”.

Concerns over AI model manipulation have been a recurring theme since the advent of generative AI in late 2022, with new jailbreak techniques emerging regularly.


Ross Kelly is ITPro's News & Analysis Editor, responsible for leading the brand's news output and in-depth reporting on the latest stories from across the business technology landscape. Ross was previously a Staff Writer, during which time he developed a keen interest in cyber security, business leadership, and emerging technologies.

He graduated from Edinburgh Napier University in 2016 with a BA (Hons) in Journalism, and joined ITPro in 2022 after four years working in technology conference research.

For news pitches, you can contact Ross at ross.kelly@futurenet.com, or on Twitter and LinkedIn.

-

Liz Kendall: The UK is in prime position to become a global leader in AI — but greater tech industry support is needed to avoid falling behind

Liz Kendall: The UK is in prime position to become a global leader in AI — but greater tech industry support is needed to avoid falling behindNews Tech secretary Liz Kendall has pledged greater investment in the chip and semiconductor technologies that underpin AI

-

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your own

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your ownNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

UK firms accelerate ‘sovereign AI’ plans amid concerns over dependence on overseas tech

UK firms accelerate ‘sovereign AI’ plans amid concerns over dependence on overseas techNews A Red Hat report shows firms are prioritizing sovereign AI over fears that foreign providers could restrict access

-

UK organizations are failing to move past basic AI use-cases

UK organizations are failing to move past basic AI use-casesNews Businesses in the UK are ramping up AI adoption, but they’re falling at key hurdles

-

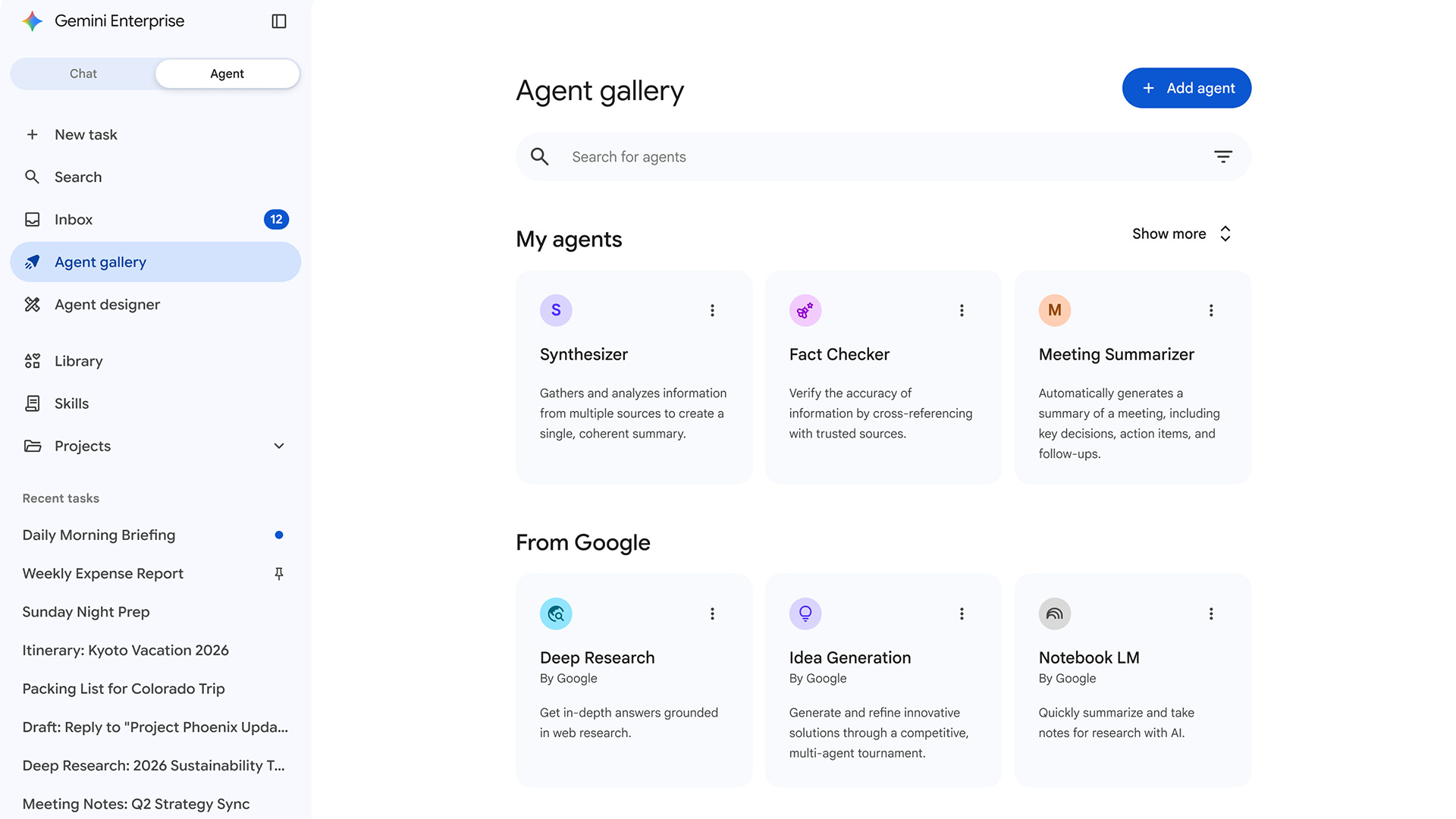

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

‘AI is not making IT simpler – it's making it more consequential’: IT workers are feeling the heat as AI raises expectations

‘AI is not making IT simpler – it's making it more consequential’: IT workers are feeling the heat as AI raises expectationsNews A SolarWinds survey suggests AI makes IT work more strategic, but also adds friction and raises expectations

-

AI adoption rates aren’t matching IT hype

AI adoption rates aren’t matching IT hypeNews The appetite for AI is there, but a range of issues are hampering adoption

-

Meta engineer trusted advice from an AI agent, ended up exposing user data

Meta engineer trusted advice from an AI agent, ended up exposing user dataNews The internal security incident exposed sensitive user data to unauthorized employees