What is Kubernetes?

We explain the power of Kubernetes, the Google-developed open source platform powering containerization at scale

Max Slater-Robins

Kubernetes is an open source platform that has become the cornerstone of modern application deployment. Originally developed by Google and now maintained by the Cloud Native Computing Foundation (CNCF), it helps organizations automate the deployment, scaling, and management of containerized workloads, a critical function in today’s cloud-driven IT environments.

As businesses increasingly adopt microservices, hybrid cloud, and DevOps practices, Kubernetes has emerged as the de facto solution for orchestrating containerized applications at scale. Its flexibility, extensibility, and vendor-neutral design have helped it maintain a stronghold across both startups and enterprise IT.

But while Kubernetes remains central to modern infrastructure, it’s not without complexity.

Understanding what it is, how it works, and how it’s used in practice is essential for any IT professional looking to work in a cloud-native environment, or simply make sense of how their organization’s applications are deployed.

How does Kubernetes work?

At its most basic level, Kubernetes – often abbreviated to K8s – is an open source container orchestration platform designed to manage the deployment, scaling, and operation of containerized applications.

Containers help software run reliably across different computing environments – whether on-premises, in the cloud, or somewhere in between – by packaging applications with everything they need to run.

Originally developed by Google, Kubernetes was open-sourced in 2014 and is now managed by the CNCF. It builds on years of internal experience at Google with a system called Borg and has since become the industry standard for managing containers in production.

At the heart of Kubernetes is the cluster – a group of machines that work together to run containerized workloads. Each cluster includes a control plane, which handles scheduling, scaling, and orchestration, and a set of worker nodes that run application containers.

Kubernetes uses several key components to maintain application availability and performance, including pods, which group one or more containers as a unit of deployment; services, which enable network access to a set of pods; controllers, which ensure the desired state of the system is maintained over time; and the scheduler, which decides where workloads should run based on available resources.
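
To make the scheduling step more concrete, here is a minimal Python sketch of the idea: filter out nodes that cannot fit a pod's resource request, then pick the one with the most free capacity. It is a toy illustration only, not how Kubernetes itself is implemented; the real scheduler applies a much richer set of filtering and scoring rules. The names Node, PodRequest, and pick_node are hypothetical and exist purely for this example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical view of a worker node's spare capacity."""
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    """Hypothetical resource request for a pod awaiting placement."""
    name: str
    cpu_millicores: int
    memory_mib: int


def pick_node(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    """Return the name of a node that can fit the pod, or None if none can."""
    # Filter: keep only nodes with enough free CPU and memory.
    candidates = [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_millicores
        and n.free_memory_mib >= pod.memory_mib
    ]
    if not candidates:
        return None  # In this sketch the pod simply stays unscheduled.
    # Score: prefer the node with the most free CPU, then the most free memory.
    best = max(candidates, key=lambda n: (n.free_cpu_millicores, n.free_memory_mib))
    return best.name
```

Given two nodes with 500 and 2,000 free millicores respectively, a pod requesting 1,000 millicores would land on the second node, while a pod requesting more than either node can offer would not be placed at all.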

Kubernetes can monitor the health of workloads, restart failed containers, and scale applications up or down as needed, and supports rolling updates, load balancing, and automatic failover – all with minimal human intervention.
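
The self-healing and scaling behavior described above comes down to comparing a desired state with what is actually running, then acting on the difference. The following Python sketch shows one pass of such a loop under simplifying assumptions: pods are either "Running" or "Failed", and the only actions are creating and deleting pods. The reconcile function and the action names are hypothetical, chosen for illustration rather than taken from the Kubernetes codebase.

```python
from typing import Dict, List, Tuple


def reconcile(desired_replicas: int, pods: Dict[str, str]) -> List[Tuple[str, str]]:
    """Run one pass of a toy control loop.

    pods maps a pod name to its status ("Running" or "Failed").
    Returns the list of (action, pod_name) steps needed to reach the desired state.
    """
    actions: List[Tuple[str, str]] = []
    healthy = [name for name, status in pods.items() if status == "Running"]

    # Self-healing: clear out anything that is no longer running.
    for name, status in pods.items():
        if status != "Running":
            actions.append(("delete", name))

    diff = desired_replicas - len(healthy)
    if diff > 0:
        # Scale up (or replace failed pods) until the desired count is met.
        actions.extend(("create", f"replacement-{i}") for i in range(diff))
    elif diff < 0:
        # Scale down by removing surplus healthy pods, in name order.
        for name in sorted(healthy)[:-diff]:
            actions.append(("delete", name))
    return actions
```

Calling reconcile(3, {"web-1": "Running", "web-2": "Failed"}) would delete the failed pod and create two replacements. Running such a pass repeatedly, rather than once, is what allows a system to converge on the desired state without manual intervention.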

Crucially, Kubernetes is vendor-agnostic and supports any platform that conforms to the Open Container Initiative (OCI) standards, allowing applications to run consistently and reliably across on-premises, public cloud, or hybrid environments.

Given all of this, it’s easy to see why Kubernetes has been such a smash hit.

How in-demand are Kubernetes skills?

Kubernetes remains one of the most sought-after skills in today’s IT job market. As more organizations embrace cloud-native development, infrastructure as code, and containerization, demand for professionals with the relevant skills to handle Kubernetes environments continues to rise.

Kubernetes-related roles offer an average global salary ranging from $124,000 to $185,000, with the highest salaries seen in North America, according to Kube Careers’ Q4 2024 report.

Job titles most commonly requiring Kubernetes skills include DevOps engineer, site reliability engineer (SRE), and platform engineer, all of which are pivotal to modern IT.

Certifications remain an important differentiator for candidates. The Certified Kubernetes Administrator (CKA), Certified Kubernetes Application Developer (CKAD), and Certified Kubernetes Security Specialist (CKS) are widely recognized and often requested in job listings, and completing one or more of these can significantly enhance job prospects.

Hybrid and remote working models are also contributing to this demand. The New Stack reported that, by late 2024, nearly 30% of Kubernetes job listings explicitly offered hybrid working arrangements, a notable increase from the previous year.

While more professionals are entering the space, Kubernetes remains complex enough that skilled engineers are still in short supply. Teams with strong Kubernetes knowledge are better equipped to manage resilient infrastructure, scale services efficiently, and support fast-moving development teams.

How much does it cost to deploy Kubernetes?

Kubernetes itself is free and open source. However, deploying and running it at scale comes with real-world costs that largely depend on whether your organization opts to manage Kubernetes in-house or use a managed service from a public cloud vendor.

Managed Kubernetes platforms – such as Amazon EKS, Google Kubernetes Engine (GKE), and Microsoft’s Azure Kubernetes Service (AKS) – simplify setup and maintenance, but charge for control plane management, compute, storage, and networking.

At the time of writing, Amazon EKS costs $0.10 per hour per cluster for the control plane alone – around $72 per month – with additional costs based on node usage and data transfer. AKS and GKE offer similar pricing, although Azure still provides a free control plane tier with limited service guarantees.
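
The monthly figure follows from simple arithmetic, which the short Python sketch below reproduces. It assumes a 30-day month, which appears to be how the roughly $72 estimate is reached, and it covers the control plane fee only. The function name and parameters are hypothetical, and the hourly rate should be checked against the provider's current price list.

```python
HOURS_PER_DAY = 24


def monthly_control_plane_cost(hourly_rate_usd: float, clusters: int = 1, days: int = 30) -> float:
    """Estimate the monthly control plane fee in US dollars.

    Excludes node (compute), storage, and data transfer charges.
    """
    return round(hourly_rate_usd * HOURS_PER_DAY * days * clusters, 2)


# One cluster at the $0.10 per hour rate quoted above.
single_cluster = monthly_control_plane_cost(0.10)

# Three clusters, for example separate development, staging, and production environments.
three_clusters = monthly_control_plane_cost(0.10, clusters=3)
```

Because the fee is charged per cluster, organizations running many small clusters can see control plane costs add up even before any workloads are deployed.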

If you run Kubernetes on your own infrastructure – either on-premises or in virtual machines – you’ll avoid managed service fees but face increased operational overhead. You’ll need to maintain the control plane, secure the environment, and manage updates and scaling, which often requires dedicated platform engineering resources.

What are the best Kubernetes alternatives?

Although Kubernetes has become the dominant choice for container orchestration, it isn’t the only option. Several alternative platforms exist that may be better suited to specific workloads, team sizes, or operational preferences.

First up, Docker Swarm, once a popular lightweight alternative, provides native orchestration as part of the Docker platform, and is easier to set up and understand than Kubernetes, making it appealing for smaller teams or simpler projects. However, it lacks many of the advanced features, flexibility, and community support that Kubernetes offers, and has largely fallen out of favor for production use.

Another notable option is HashiCorp Nomad, a general-purpose orchestrator that can manage both containerized and non-containerized workloads. It’s known for being lightweight, scalable, and operationally straightforward, making it a good fit for organizations looking for simplicity without sacrificing performance.

Red Hat OpenShift, by contrast, is built on Kubernetes but provides additional tooling, enhanced security features, and enterprise support, making it a popular choice for organizations operating in regulated industries or those that prefer a more integrated development platform.

Where next?

Kubernetes has reshaped the way organizations build, deploy, and manage modern applications. Its ability to automate operations, scale seamlessly, and run consistently across on-premises, cloud, and hybrid environments has made it a cornerstone of today’s IT infrastructure.

While it’s not without its complexities, Kubernetes continues to evolve, with a growing ecosystem of tools designed to make it more accessible and efficient. Alternatives do exist, but for most large-scale or cloud-native workloads, Kubernetes remains the most capable and widely supported solution.

For teams embracing DevOps, platform engineering, or microservices at scale, Kubernetes is here to stay and will likely only grow more influential in the months and years ahead.

Dale Walker is a contributor specializing in cybersecurity, data protection, and IT regulations. He is the former managing editor of ITPro, as well as its sibling sites CloudPro and ChannelPro. He spent a number of years reporting for ITPro from numerous domestic and international events, including those hosted by IBM, Red Hat, and Google, and has been a regular reporter at Microsoft's various yearly showcases, including Ignite.

-

Enterprises are shipping a torrent of untested AI-generated code

Enterprises are shipping a torrent of untested AI-generated codeNews With speed routinely prioritized over quality, organizations often respond by taking shortcuts

-

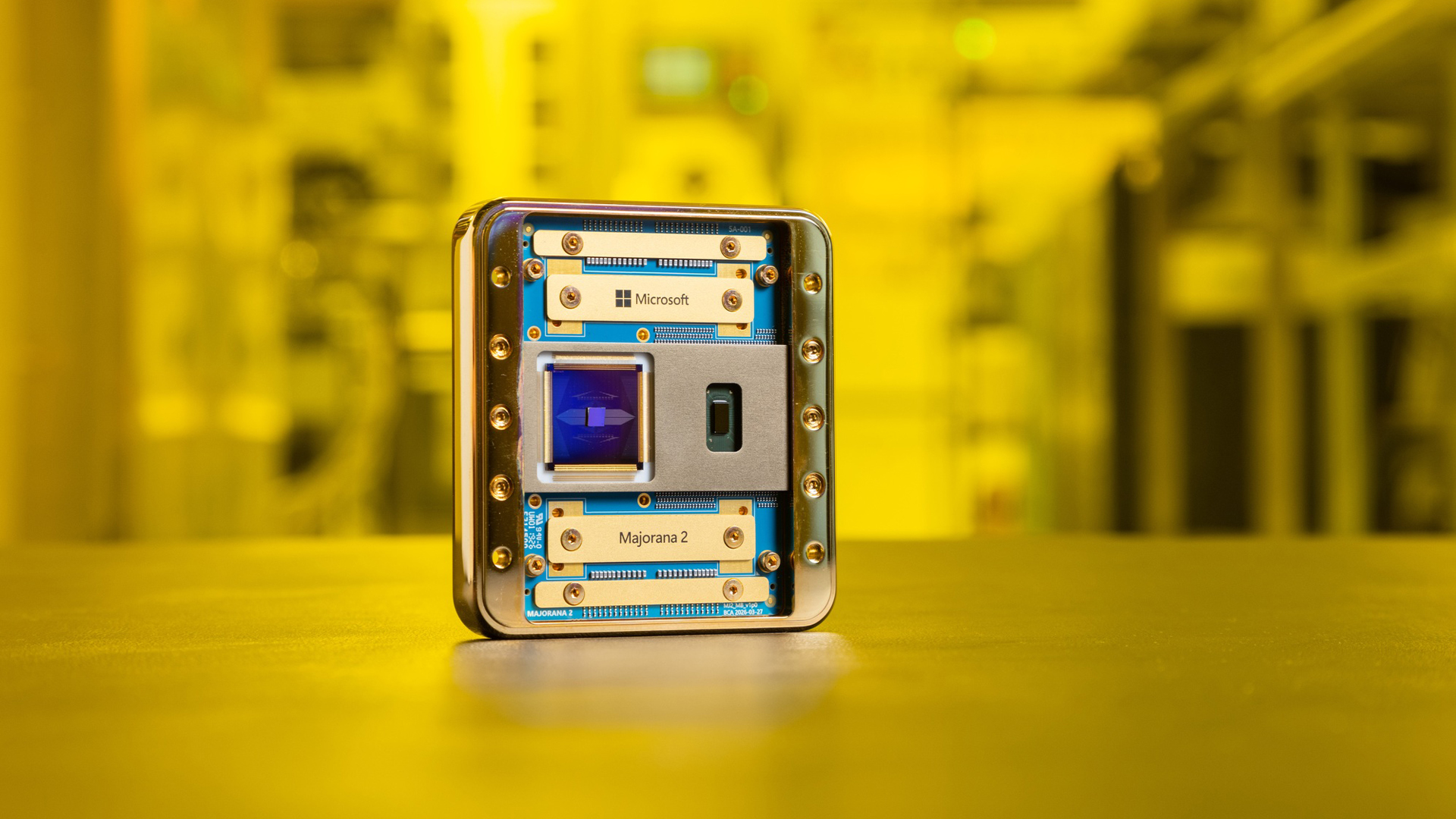

Microsoft touts huge performance breakthroughs with Majorana 2 quantum chip

Microsoft touts huge performance breakthroughs with Majorana 2 quantum chipNews The Majorana 2 quantum chip was developed with the help of the Microsoft Discovery platform

-

Red Hat, Google Cloud eye legacy modernization gains with partnership expansion

Red Hat, Google Cloud eye legacy modernization gains with partnership expansionNews Red Hat wants to make it easier to migrate and modernize applications

-

Maximizing Microsoft 365 Security: How Cloudflare enhances protection and adds value

Maximizing Microsoft 365 Security: How Cloudflare enhances protection and adds valueWebinar Strengthen your defenses, proactively block attacks, and reduce risks

-

VPN replacement phases: Learn others’ real-world approaches

VPN replacement phases: Learn others’ real-world approachesWebinar Accelerate Zero Trust adoption

-

UK enterprises lead the way on containerization, but skills gaps could hinder progress

UK enterprises lead the way on containerization, but skills gaps could hinder progressNews The UK risks fumbling its lead on cloud native deployments due to skills issues, according to Nutanix’s Enterprise Cloud Index (ECI) survey.

-

Understanding NIS2 directives: The role of SASE and Zero Trust

Understanding NIS2 directives: The role of SASE and Zero TrustWebinar Enhance cybersecurity measures to comply with new regulations

-

From legacy to leading edge: Transforming app delivery for better user experiences

From legacy to leading edge: Transforming app delivery for better user experiencesWebinar Meet end-user demands for high-performing applications

-

Navigating evolving regional data compliance and localization regulations with Porsche Informatik

Navigating evolving regional data compliance and localization regulations with Porsche InformatikWebinar A data localization guide for enterprises

-

Strategies for improving security team efficiency

Strategies for improving security team efficiencyWebinar Get more value from your digital investments