Nvidia and Microsoft team up to build 'most powerful' AI supercomputer

The firms seek to accelerate the training of AI models using Azure's supercomputer infrastructure and Nvidia GPUs

Nvidia has announced that it will collaborate with Microsoft on a multi-year project to build an AI supercomputer that could rank among the world’s most powerful, helping organisations train and deploy AI at scale.

The deal will see Nvidia contribute tens of thousands of GPUs, networking technology, and its full stack of AI software to Microsoft Azure’s cloud supercomputing infrastructure, which already uses ND- and NC-series virtual machines optimised for artificial intelligence (AI) and deep learning workloads.

Nvidia will use Azure’s virtual machine (VM) instances to train generative AI, a field that includes language models such as OpenAI’s GPT-4 and Nvidia’s own Megatron-Turing NLG.

The company’s full AI stack, containing workflows and development kits certified for use on Azure, will be made available to Azure enterprise customers.

The collaboration will also see improvements to DeepSpeed, Microsoft’s deep learning optimisation software suite used to accelerate model training. Going forward, DeepSpeed will use the Transformer Engine in Nvidia’s H100 GPUs to accelerate large models, including generative AI, at up to twice the speed previously possible.

“AI is fueling the next wave of automation across enterprises and industrial computing, enabling organisations to do more with less as they navigate economic uncertainties,” said Scott Guthrie, executive vice president of the Cloud + AI Group at Microsoft.

“Our collaboration with Nvidia unlocks the world’s most scalable supercomputer platform, which delivers state-of-the-art AI capabilities for every enterprise on Microsoft Azure.”

Azure VM instances currently use Nvidia A100 GPUs, connected through Quantum 200Gbit/sec InfiniBand networking. The addition of H100s will see this networking speed double through the use of Quantum-2 400Gbit/sec InfiniBand networking, capable of handling larger AI training sets and workloads.

Natural language processing (NLP) models like GPT-3 and its upcoming successor GPT-4 have been associated with controversial deployment in the past. At one point, models such as these were thought to be too dangerous for general release, due to their ability to convincingly craft fake news pieces and propensity for spouting hate speech.

Researchers have expressed hope that, through intensive training on supercomputers, NLP models could prove invaluable for business use in text and speech comprehension, the future of virtual assistants, and the partial or full automation of tasks such as computer programming.

This is not the first supercomputer Nvidia has worked on, nor the first AI supercomputer. In 2021, the chip giant switched on the UK’s fastest supercomputer, aimed at using AI for intensive health research, and in January 2022 it was announced that Meta would build the “world’s fastest” AI supercomputer in collaboration with Nvidia.

More recently still, Hewlett Packard Enterprise (HPE) lifted the lid on ‘Champollion’, an AI supercomputer designed in collaboration with Nvidia that it plans to make available to scientists and engineers.

Nvidia has invested in a wide range of AI and supercomputing projects, with the company’s own ‘Selene’ supercomputer having consistently ranked in the top ten most powerful computers in the world since its 2020 creation.

Nvidia declined to give further details on the timeline of its agreement with Microsoft.

“We are not elaborating on the terms of the deal beyond saying that it is a multi-year agreement,” Paresh Kharya, senior director of product management at Nvidia, told IT Pro.

"The goal is to accelerate the most important AI applications. Working with Microsoft Azure, we gain speed and the ability to do things at scale using less energy. We can then take AI applications such as speech recognition and large language models to advance generative AI."

Rory Bathgate is Features and Multimedia Editor at ITPro, overseeing all in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

In his free time, Rory enjoys photography, video editing, and good science fiction. After graduating from the University of Kent with a BA in English and American Literature, Rory undertook an MA in Eighteenth-Century Studies at King’s College London. He joined ITPro in 2022 as a graduate, following four years in student journalism. You can contact Rory at rory.bathgate@futurenet.com or on LinkedIn.

-

Nvidia targets open source interoperability with new model coalitions, agentic frameworks

Nvidia targets open source interoperability with new model coalitions, agentic frameworksNews A new open model development coalition and agentic frameworks target enterprise interoperability

-

Will AI hiring entrench gender bias?

Will AI hiring entrench gender bias?ITPro Podcast This International Women's Day, it's more important than ever to consider the inherent biases of training data

-

February rundown: SaaS-pocalypse now?

February rundown: SaaS-pocalypse now?ITPro Podcast Geopolitical uncertainty is intensifying public and private sector focus on true sovereign workloads

-

HPE and Nvidia launch first EU AI factory lab in France

HPE and Nvidia launch first EU AI factory lab in FranceNews The facility will let customers test and validate their sovereign AI factories

-

Dell Technologies doubles down on AI with SC25 announcements

Dell Technologies doubles down on AI with SC25 announcementsAI Factories, networking, storage and more get an update, while the company deepens its relationship with Nvidia

-

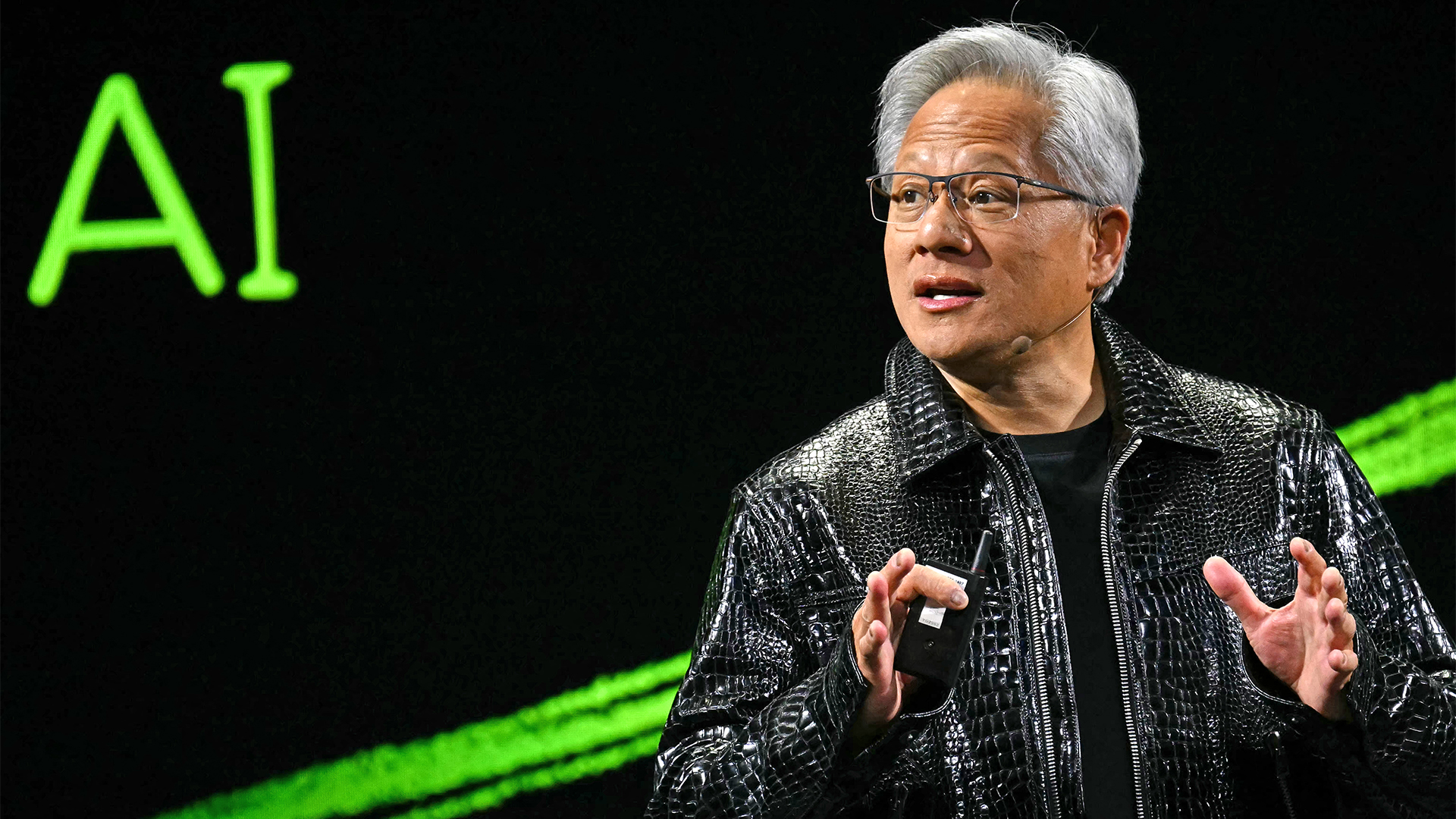

Nvidia CEO Jensen Huang says future enterprises will employ a ‘combination of humans and digital humans’ – but do people really want to work alongside agents? The answer is complicated.

Nvidia CEO Jensen Huang says future enterprises will employ a ‘combination of humans and digital humans’ – but do people really want to work alongside agents? The answer is complicated.News Enterprise workforces of the future will be made up of a "combination of humans and digital humans," according to Nvidia CEO Jensen Huang. But how will humans feel about it?

-

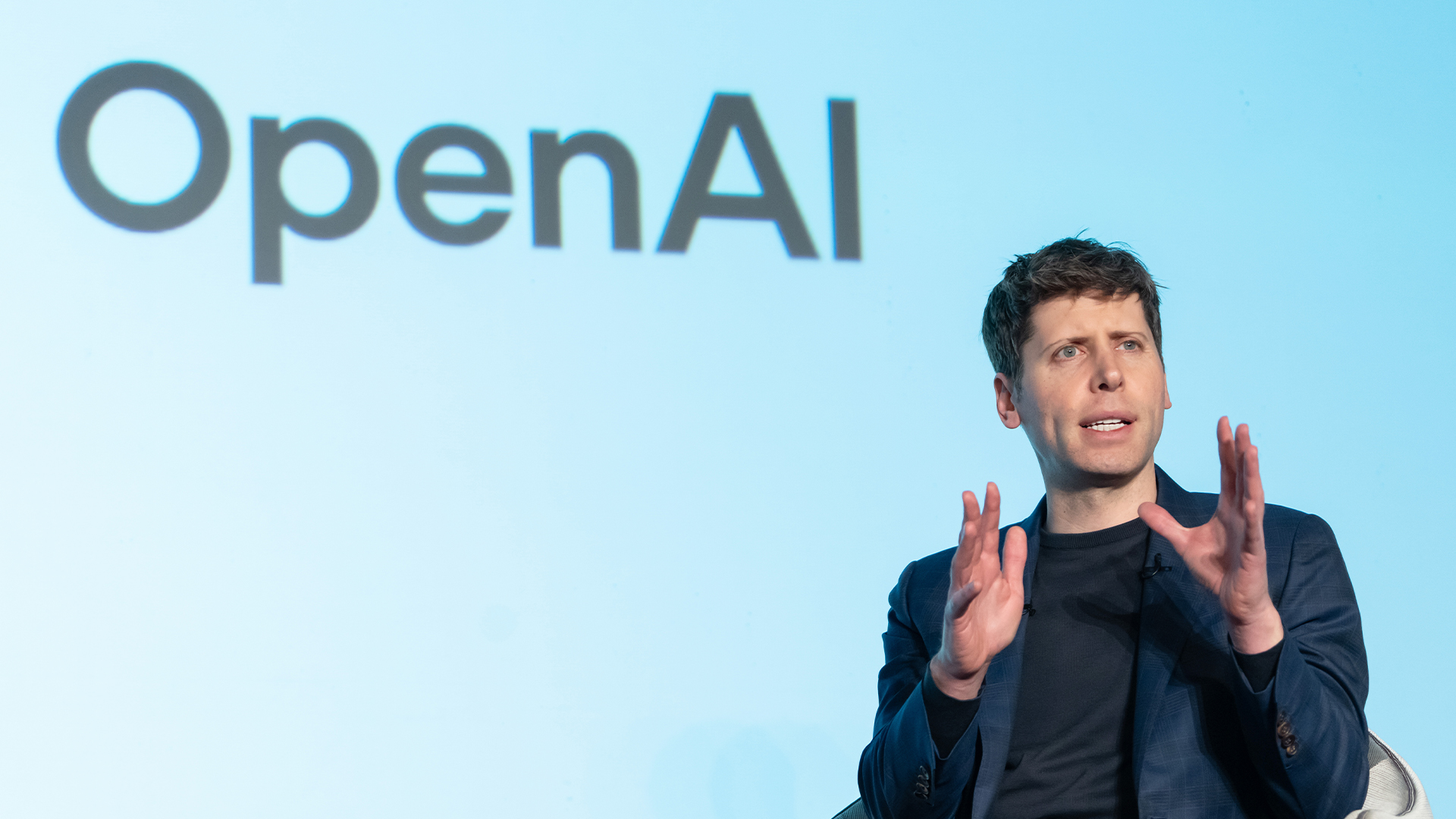

OpenAI signs another chip deal, this time with AMD

OpenAI signs another chip deal, this time with AMDnews AMD deal is worth billions, and follows a similar partnership with Nvidia last month

-

Why Nvidia’s $100 billion deal with OpenAI is a win-win for both companies

Why Nvidia’s $100 billion deal with OpenAI is a win-win for both companiesNews OpenAI will use Nvidia chips to build massive systems to train AI