Fraudsters use AI voice manipulation to steal £200,000

Bold social engineering attack could be the first of many powered by machine learning

Cyber criminals have used artificial intelligence (AI) and voice technology to impersonate a UK business owner, resulting in the fraudulent transfer of $243,000 (£201,000).

In March this year, an as-yet unidentified hacker group is said to have used AI-powered software to mimic the prominent business leader's voice and fool his subordinate, the CEO of a UK-based energy subsidiary, according to the Wall Street Journal (WSJ).

The hackers were then able to convince the CEO to carry out transactions in the guise of urgent funds destined for its German parent company.

It's believed that the fraudsters phoned the UK-based CEO to demand a transfer to a Hungarian supplier. They contacted him again, still impersonating the parent company's chief executive, to reassure him the transfer would be reimbursed.

The CEO was then contacted a third time, before any reimbursement funds had appeared, to request a second urgent transfer. It was at this point the CEO became suspicious and declined to make any further payments.

The funds that were transferred to Hungary, however, were soon moved on to Mexico and various other locations, with law enforcement still looking for suspects.

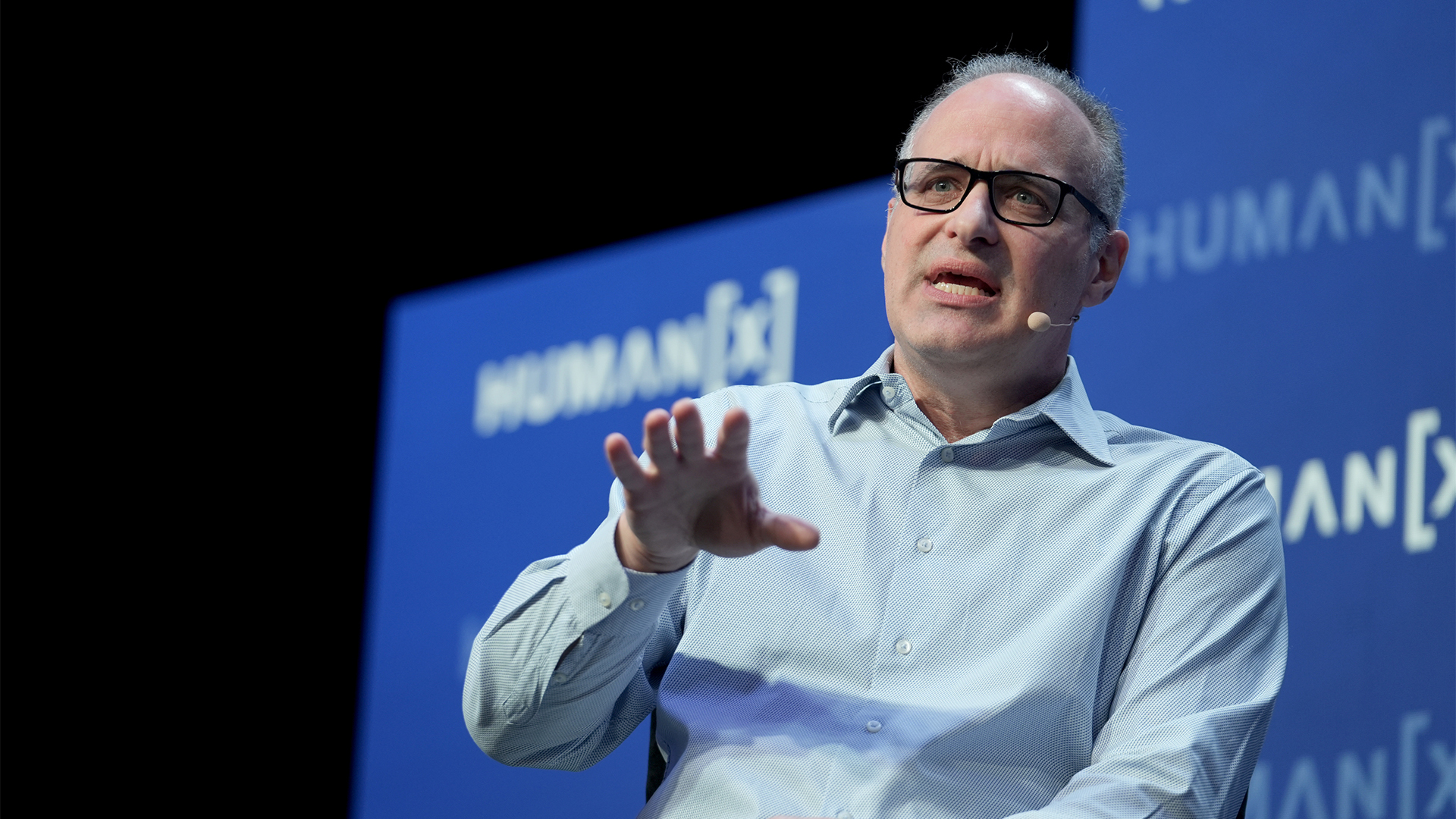

This social engineering attack could be a sign of things to come, according to ESET cyber security specialist Jake Moore, who expects to see a huge rise in machine-learned cyber crimes in the near future.


"We have already seen DeepFakes imitate celebrities and public figures in video format, but these have taken around 17 hours of footage to create convincingly," he said.

"Being able to fake voices takes fewer recordings to produce. As computing power increases, we are starting to see these become even easier to create, which paints a scary picture ahead."

With enterprise security practices becoming more robust with time, criminals may increasingly look to staff as the most easily-exploitable gaps in an organisation's defence.

Social engineering has, indeed, grown to be far more sophisticated in recent years with employees faced with slicker phishing campaigns and highly targeted attempts at deception.

"To reduce risks it is imperative not only to make people aware that such imitations are possible now, but also to include verification techniques before any money is transferred," Moore added.

Keumars Afifi-Sabet is a writer and editor who specialises in public sector, cyber security, and cloud computing. He first joined ITPro as a staff writer in April 2018 and eventually became its Features Editor. Although a regular contributor to other tech sites in the past, these days you will find Keumars on LiveScience, where he runs its Technology section.
