Dreamforce 2023: Benioff says generative AI needs a ‘trust revolution’

Salesforce wants to do things “very, very differently” with responsible AI innovation, Marc Benioff told Dreamforce 2023 attendees

The generative AI boom of the last 12 months has given rise to a growing appetite for a ‘trust’-based counter-revolution, according to Salesforce chief executive Marc Benioff.

Speaking at Dreamforce 2023, the outspoken chief executive fired a broadside at industry counterparts when discussing the topic of ‘hallucinations’, whereby a generative AI system presents false information as factually correct.

Benioff said AI hallucinations are a lingering issue that the industry must deal with if it is to tackle a widening “trust gap” that has emerged in recent months.

Salesforce research published earlier this year revealed that 73% of employees believe generative AI raises “new security risks”, while 60% of those who plan to use the tech “don’t know how to keep data secure.”

This hesitancy among workers shows there is still work to be done to build trust and emphasize the potential benefits of generative AI, Benioff noted.

“Like a lot of new technologies in our industry, there appears to be this thing called a trust gap,” he said. “Somehow there might not have been as much trust built into these technologies as was widely expected. That’s not unusual in our industry.

“They call them hallucinations. I call them lies. These LLMs are very convincing liars. They can turn very toxic, very quickly. That’s not necessarily great in a customer situation, not great in an enterprise employee situation.”


It was a bold statement from Benioff, and one that will likely have hit a nerve among industry counterparts such as OpenAI CEO Sam Altman, who has been repeatedly questioned by lawmakers on the dangers of generative AI.


Benioff’s position appears to hinge on the idea that large language model (LLM) developers are unconcerned by the ethics of, for example, training models on data trawled from the web.

These firms, he suggested, have an insatiable thirst for data, and once they’re done they simply move on without regard for where it came from or whether it was legitimately obtained.

“I don’t have to tell you, I don’t have to explain to anybody here,” he said. “It’s a well-known secret at this point that they’re using your data to make money.”

Critically, Benioff suggested that the question of data security and the use of data in the training of LLMs should be one that executives across the industry pay close attention to.

“Where is the data going when I’m using my LLM? Where did that data go? The model is using it to get smarter and you’ll never see your data again. These LLMs are hungry for our data, it’s how they get smarter,” he said.

“The LLM companies are just algorithm companies. They only work when they have data and the data that they get, they’ve mostly stolen, or just go out onto the net and get whatever data they can get.”

Benioff warned attendees that the “next set of data” LLM developers want is corporate data. This, he suggested, represents the holy grail for developers.

Access to corporate data would “make them even smarter”, he said, benefiting only a select few organizations rather than the broader enterprise industry.

With this in mind, Benioff underlined the importance of closing the “trust gap” and pursuing a responsible approach to AI development and use.

It’s an area that Salesforce has focused on heavily in recent years. The tech giant created a dedicated ‘ethical and humane use officer’ role to examine and assess the responsible use and development of AI tools.

Similarly, the firm created a chief trust officer “way before anyone” else in a bid to guide and direct its responsible AI goals.

“We created a chief trust officer in our company because we recognize at Salesforce that your data is not our product,” he said. “That is not what we do here. You control access to your data.”

“We prioritize accurate, verifiable results, our product policies protect human rights, and we advance responsible AI globally, just as we always have. Transparency builds trust.”

Salesforce’s trust-based approach to AI is one that differentiates it from industry counterparts, Benioff suggested. And it’s a policy that has fostered valuable relationships with customers over the years.

“We want to turn AI over to you, but only with trust,” he said. “That is our mission on AI. We want to build a trusted AI platform for customer companies so that everyone is Einstein and more productive.”

Salesforce’s recent product announcements appear to align closely with what Benioff discussed during his keynote.

Promotion of the firm’s recently launched Einstein Copilot assistant was frequently accompanied by comments highlighting the ‘Einstein Trust Layer’, a privacy-focused, “zero-retention architecture” that underpins Salesforce’s Einstein AI tools.

Moving forward, continuing its trust-based approach is a key objective at Salesforce, Benioff told attendees. The tech giant wants to “do something very, very different” and stick to long-established values rather than favoring development at all costs.

“As we go through this next wave of generative AI and as we start to head towards autonomy, my engineering teams have said ‘we’re going to do something very, very different’,” he said.

“We are going to stand behind our values.”

Ross Kelly is ITPro's News & Analysis Editor, responsible for leading the brand's news output and in-depth reporting on the latest stories from across the business technology landscape. Ross was previously a Staff Writer, during which time he developed a keen interest in cyber security, business leadership, and emerging technologies.

He graduated from Edinburgh Napier University in 2016 with a BA (Hons) in Journalism, and joined ITPro in 2022 after four years working in technology conference research.

For news pitches, you can contact Ross at ross.kelly@futurenet.com, or on Twitter and LinkedIn.

-

Salesforce ramps up agentic AI research with new foundry project

Salesforce ramps up agentic AI research with new foundry projectNews Researchers are already working on new tools for agent-to-agent interaction and “ambient intelligence”

-

Salesforce targets unified customer support automation with Agentforce Contact Center

Salesforce targets unified customer support automation with Agentforce Contact CenterNews Combining AI agents, telephony, and CRM, Salesforce is making a firm case for automated customer interactions and controlled

-

Salesforce targets telco gains with new agentic AI tools

Salesforce targets telco gains with new agentic AI toolsNews Telecoms operators can draw on an array of pre-built agents to automate and streamline tasks

-

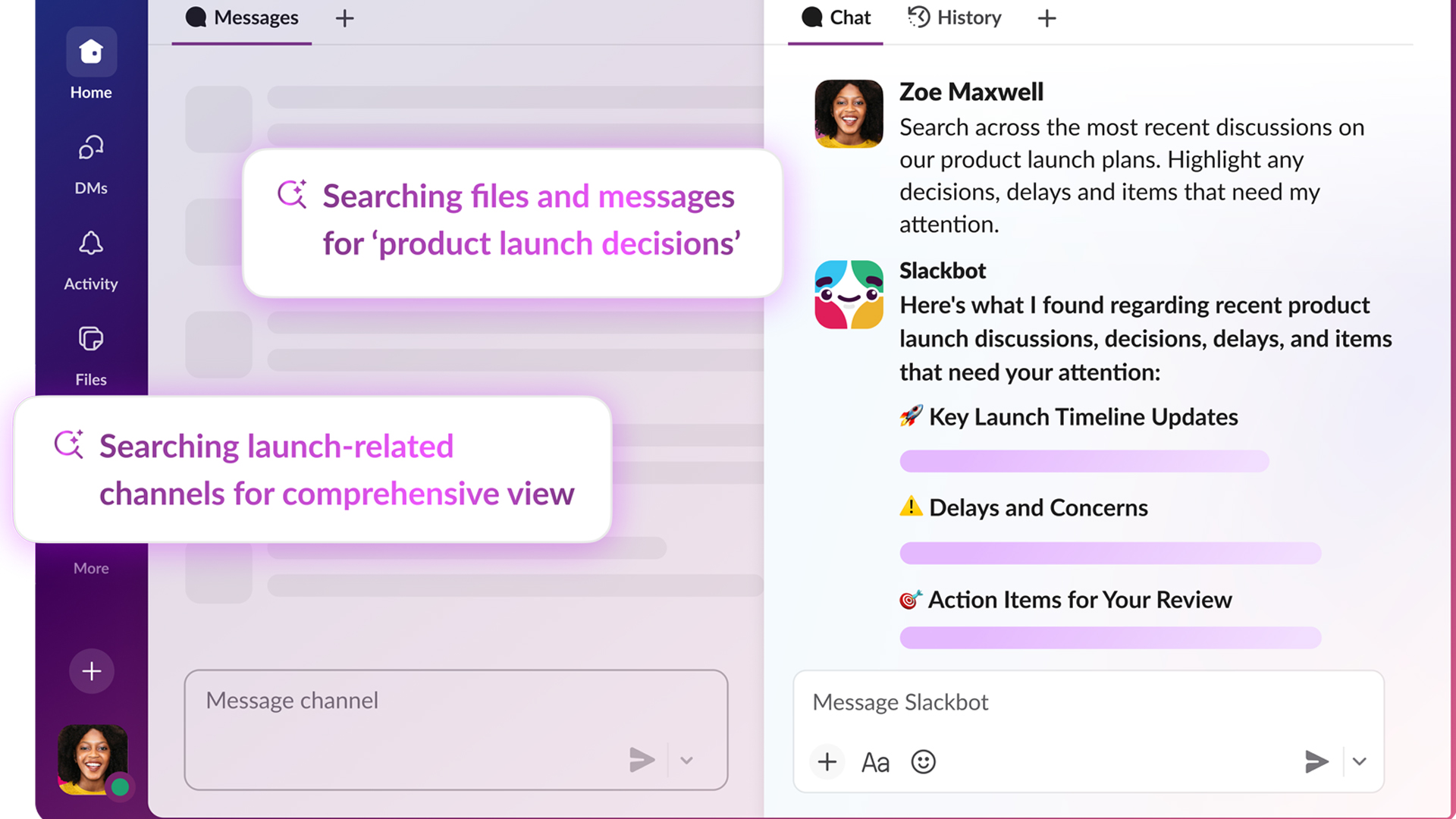

The AI-enabled Slackbot is now generally available – Salesforce says it could save more than a day’s work per week

The AI-enabled Slackbot is now generally available – Salesforce says it could save more than a day’s work per weekNews With an entirely overhauled model behind the chatbot, users can summarize channels and ask for highly personalized information

-

'It's slop': OpenAI co-founder Andrej Karpathy pours cold water on agentic AI hype – so your jobs are safe, at least for now

'It's slop': OpenAI co-founder Andrej Karpathy pours cold water on agentic AI hype – so your jobs are safe, at least for nowNews Despite the hype surrounding agentic AI, OpenAI co-founder Andrej Karpathy isn't convinced and says there's still a long way to go until the tech delivers real benefits.

-

‘I don't think anyone is farther in the enterprise’: Marc Benioff is bullish on Salesforce’s agentic AI lead – and Agentforce 360 will help it stay top of the perch

‘I don't think anyone is farther in the enterprise’: Marc Benioff is bullish on Salesforce’s agentic AI lead – and Agentforce 360 will help it stay top of the perchNews Salesforce is leaning on bringing smart agents to customer data to make its platform the easiest option for enterprises

-

Dreamforce 2025 live: All the latest updates from San Francisco

Dreamforce 2025 live: All the latest updates from San FranciscoNews We're live on the ground in San Francisco for Dreamforce 2025 – keep tabs on all of our rolling coverage from the annual Salesforce conference.

-

Salesforce just launched a new catch-all platform to build enterprise AI agents

Salesforce just launched a new catch-all platform to build enterprise AI agentsNews Businesses will be able to build agents within Slack and manage them with natural language