Now you can try out Google Gemini Pro for yourself

Devs can leverage Gemini Pro using Google AI Studio and Vertex AI

Developers have been offered a chance to try out Google’s powerful new Gemini Pro AI model following its high-profile arrival last week.

Google has said that Gemini can run on everything from data centers to smartphones.

The launch of the new model represents the tech giant’s best hope of catching up with OpenAI’s ChatGPT, which has been grabbing headlines – and user momentum – over the last year.

The first iteration of the model, Gemini 1.0, will come in three sizes - the lightweight Gemini Nano for smartphones and other small devices, Gemini Pro for scaling across a wide range of applications, and Gemini Ultra for highly complex tasks.

Google has touted good test results for Gemini Ultra, and noted that across areas such as natural image, audio, and video understanding and mathematical reasoning, it beats the current state-of-the-art results on 30 of the 32 top benchmarks for large language models.

The firm made sure to highlight where Gemini Ultra beats GPT-4. However, Ultra won’t be available until next year, while OpenAI's flagship model has been out since March.

Now Gemini Pro is available for developers and enterprises to build for their own use cases.

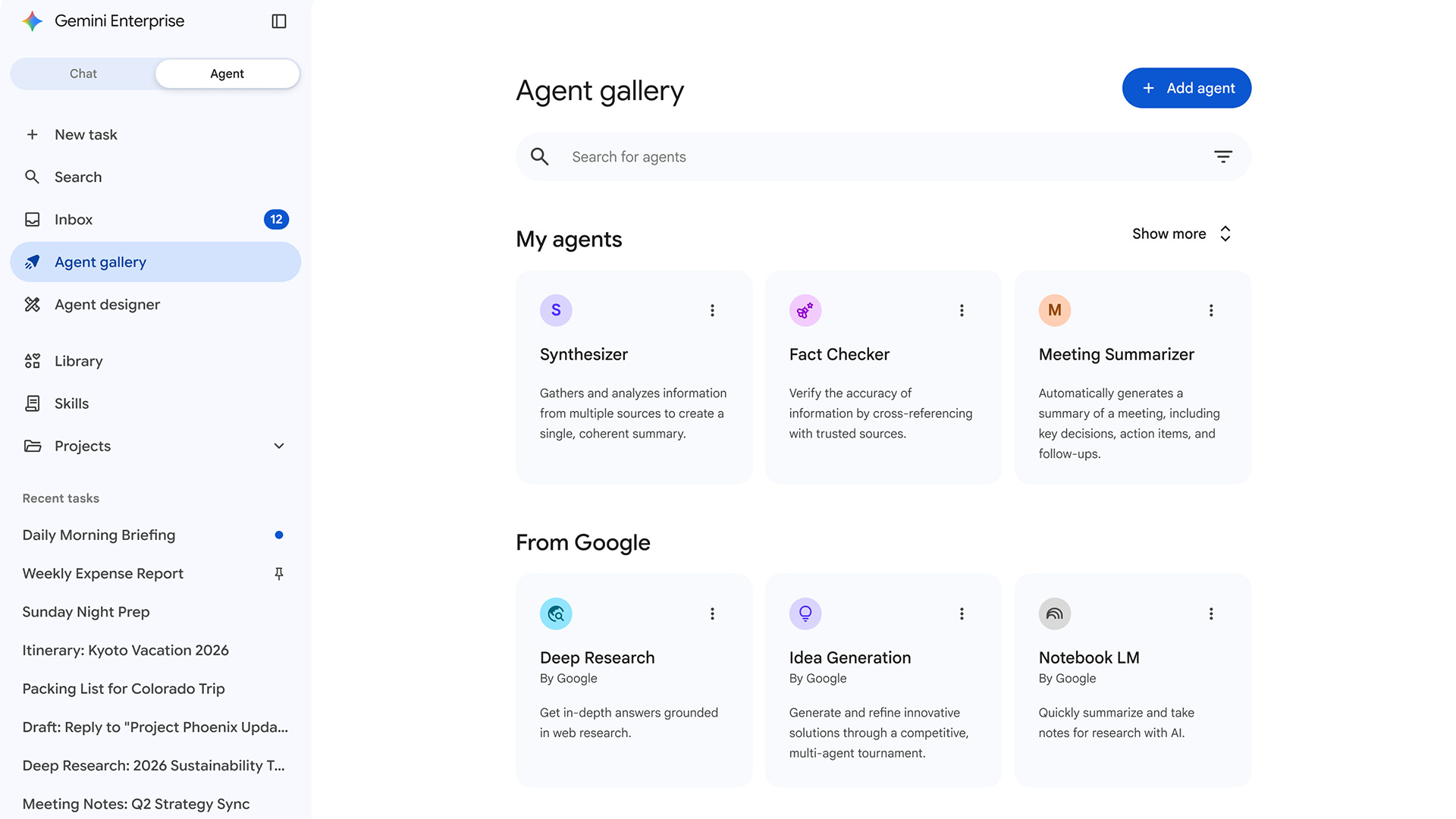

It’s available in two ways - via Google AI Studio, a free web-based tool for developers to build their prompts, and through Google’s more comprehensive Vertex AI platform, which allows companies to build production-grade agents using their own data.

Gemini Pro: What can users expect?

Google said Gemini Pro currently accepts text as input and generates text as output, although there is also a Gemini Pro Vision version available that accepts text and imagery as input, with text output.

Software Development Kits for Gemini Pro are now openly available and support a range of coding languages, including Python, Android (Kotlin), Node.js, Swift, and JavaScript.

The Google AI Studio developer tool offers a free limit of 60 requests per minute.

However, Google said that, to help it improve product quality, when developers use the free quota their API and Google AI Studio input and output “may be accessible” to trained reviewers, although the data is “de-identified” from their Google account and API key.
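For developers who want to stay inside the free limit of 60 requests per minute, one option is to throttle calls on the client side. The following is a minimal sketch of a sliding-window limiter, not part of any Google SDK: the class name `SlidingWindowLimiter` and its parameters are hypothetical, and it assumes the quota is counted over a rolling 60-second window, which Google's announcement does not specify.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Hypothetical helper: allow at most `max_calls` calls per rolling `period` seconds."""

    def __init__(self, max_calls=60, period=60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period = period
        self._clock = clock    # injectable so the limiter can be tested without waiting
        self._sleep = sleep
        self._stamps = deque()  # timestamps of calls still inside the window

    def _evict(self, now):
        # Drop timestamps that have aged out of the rolling window.
        while self._stamps and now - self._stamps[0] >= self.period:
            self._stamps.popleft()

    def acquire(self):
        """Block until another call is allowed; return the seconds spent waiting."""
        waited = 0.0
        now = self._clock()
        self._evict(now)
        while len(self._stamps) >= self.max_calls:
            # Wait until the oldest recorded call leaves the window.
            delay = self._stamps[0] + self.period - now
            self._sleep(delay)
            waited += delay
            now = self._clock()
            self._evict(now)
        self._stamps.append(now)
        return waited
```

In use, a developer would create one limiter and call `acquire()` immediately before each API request, so that a burst of requests slows down rather than exceeding the quota.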

With Vertex AI, developers have access to the same Gemini models, but will be able to tune them with their own company’s data or include up-to-the-minute information and extensions to take real-world actions.

Google has also unveiled an upgraded version of its image model, Imagen 2, which improves photorealism, text rendering, and logo generation capabilities.

Similarly, the firm announced MedLM, a family of foundation models fine-tuned for healthcare industry use cases.

When Gemini was first unveiled, Google chief executive Sundar Pichai said the company was “nearly eight years into our journey as an AI-first company”.

But it has also clearly been wrong-footed by the unexpected and huge success of ChatGPT. The wave of announcements around Gemini will be aimed at countering that – especially as OpenAI has been battling controversy in recent weeks.

Google released a video showing some of Gemini's capabilities. Although it is impressive, the firm noted that latency was reduced and Gemini's outputs were shortened for brevity, which makes the demo somewhat less impressive than it first appears.

Even so, Gemini is the latest entrant to the AI race. It was specifically designed as a multimodal model, which means it has been trained to recognize and understand text, images, and audio at the same time, so it better understands nuanced information and can answer questions relating to complicated topics.

This makes it better at explaining reasoning in complex subjects like math and physics, Google claimed. The first version of Gemini can explain and generate code in programming languages including Python, Java, C++, and Go.

Gemini Pro is already being put to use as part of Google’s Bard chatbot, while Gemini Nano is running on Google’s Pixel 8 Pro smartphone, where it powers the Summarize feature in the Recorder app and Smart Reply in Gboard.

Gemini Ultra is listed by Google as “coming soon” because it’s still undergoing extensive trust and safety checks. Ultra will be offered to some customers and developers for early experimentation and feedback before an eventual roll-out for developers and enterprise customers in early 2024.

Steve Ranger is an award-winning reporter and editor who writes about technology and business. Previously he was the editorial director at ZDNET and the editor of silicon.com.
