Technical standards bodies hope to deliver AI success with ethical development practices

The ISO, IEC, and ITU are working together to develop standards that can support the development and deployment of trustworthy AI systems

Three major international technical standardization bodies are working to introduce ethical considerations into their standards, with the release of four guiding principles.

The International Organization for Standardization (ISO), the International Electrotechnical Commission (IEC), and the International Telecommunication Union (ITU) last week launched the Seoul Statement at an event in South Korea.

This statement is aimed at advancing the development of safe, inclusive, and effective international standards for AI. These standards, the bodies said, should reflect global needs, support regulatory alignment, and foster interoperability, trust, and inclusion.

"It places international standards at the heart of AI governance," said Sung Hwan Cho, president of the ISO.

"We must systematically include social and human rights considerations into our standards work. We must collaborate across government, industry and civil society and academia to ensure all voices are heard."

The guiding principles for trustworthy AI

The statement is based on four core principles covering key areas spanning development, deployment, and long-term maintenance of AI systems.

For example, standards should actively incorporate sociological dimensions as well as technical ones. They should deepen the understanding of the interplay between international standards and human rights, recognizing both their importance and universality throughout the AI development lifecycle.


They should also help strengthen an inclusive, multi-stakeholder community to develop and apply international standards for the design, deployment, and governance of AI. Elsewhere, the organizations encouraged closer collaboration between public and private sector entities on AI capacity building.

"Standards are technical tools to uphold the principles we want to live by," said Seizo Onoe, director of the ITU Telecommunication Standardization Bureau.

"The vision set out by this joint statement calls for diverse expertise and global commitment to collaboration and consensus – exactly what drives our standards work and exactly the spirit needed to create the future we want."

Any regulatory framework will need to be forward-thinking and adaptable, the bodies noted, largely because the AI landscape is evolving so rapidly.

Ethical specifications will have to reflect related issues such as poor energy supply and a lack of compute power in developing countries.

Research highlighted by the standards bodies indicates that the developing world houses less than 1% of global data center capacity, underlining the need for greater investment to broaden compute capacity.

These nations are also struggling with a shortage of chipsets and AI components, a lack of public-private data sharing, and a severe shortage of training.

Tackling AI safety concerns

Next steps for the organizations include the drafting of standards on the storage of sensitive data.

The trio are also planning to look at the issues of election interference, deepfakes, and misinformation. The last of these areas is of particular interest, they noted, with most current deepfake detectors falling short.

Threat actors are already using deepfakes to dupe unsuspecting enterprise workers. A recent study from Ironscales found that 85% of cybersecurity and IT leaders had experienced at least one deepfake attack in the last year, a 10% increase on 2024 figures.

"How is a deepfake coming about, and how do we give the technical tools to make detecting it easier?" asked Philippe Metzger, CEO and secretary general of the IEC.

"Our role is making things more transparent from a technical point of view."

The statement was created following a UN recommendation last year, in the hope that standards convergence can reduce fragmentation and lower compliance burdens while keeping the focus on responsible AI development and deployment.

"Standards don't solve everything," said Metzger. "But we see ourselves as major contributors to AI governance."


Emma Woollacott is a freelance journalist writing for publications including the BBC, Private Eye, Forbes, Raconteur and specialist technology titles.

-

Sam Altman pours cold water on AI 'jobs apocalypse' concerns

Sam Altman pours cold water on AI 'jobs apocalypse' concernsNews OpenAI CEO Sam Altman “thought there would have been more impact” on white collar and entry-level jobs at this point

-

Everything you need to know about Euro-Office

Everything you need to know about Euro-OfficeNews Euro-Office offers a sovereign European rival to Microsoft Office and Google Docs

-

Dell raises annual forecasts as AI boom continues to reward hardware vendors

Dell raises annual forecasts as AI boom continues to reward hardware vendorsNews Supply chain adjustments and shrewd management of the memory chip shortage help Dell capitalize on increased demand for AI

-

Upskill your staff in AI or expect them to quit, says Gartner

Upskill your staff in AI or expect them to quit, says GartnerNews Organizations need to focus on targeted AI tools and training to make the most of their staff and succeed in transformation

-

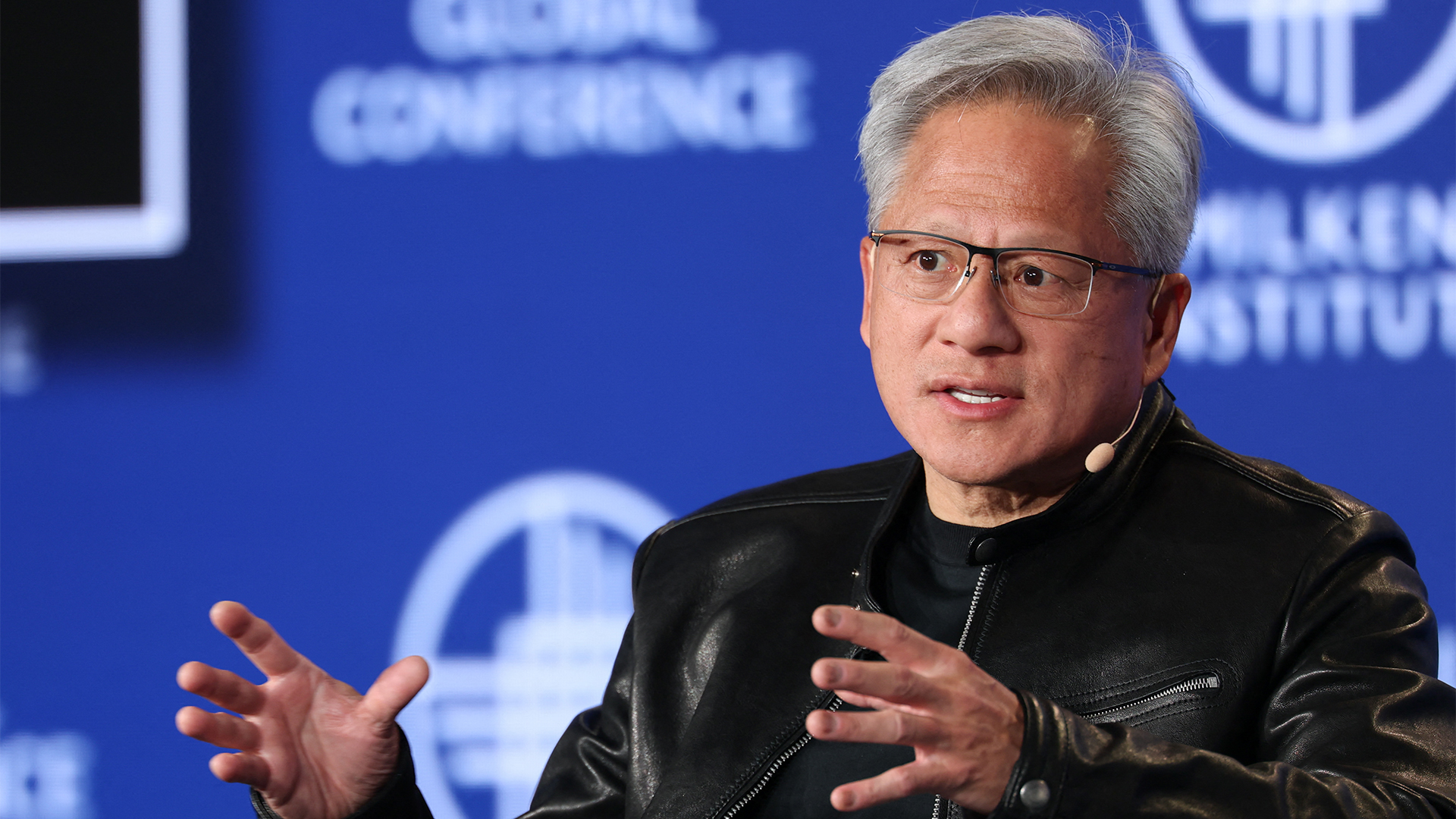

Nvidia CEO Jensen Huang says these professions will be the big winners of the generative AI boom

Nvidia CEO Jensen Huang says these professions will be the big winners of the generative AI boomNews White collar workers might be sweating, but Jensen Huang thinks skilled tradespeople will be in the vanguard of the AI revolution

-

AI adoption projects keep failing, but enterprise ‘FOMO’ means investment is still rising

AI adoption projects keep failing, but enterprise ‘FOMO’ means investment is still risingNews More than half of organizations say they're only deploying AI because their competitors do

-

‘Today’s actions are not a cost-cutting exercise’: Cloudflare is cutting 1,100 jobs as internal AI usage surges 600%

‘Today’s actions are not a cost-cutting exercise’: Cloudflare is cutting 1,100 jobs as internal AI usage surges 600%News The layoffs at Cloudflare come amid a 600% increase in internal AI usage

-

The first hurdle is the hardest in generative AI adoption – and businesses keep falling

The first hurdle is the hardest in generative AI adoption – and businesses keep fallingAnalysis AWS’ UK chief said AI advances “feel like magic” at its recent London summit, but many firms are facing the reality of sluggish gains

-

UK firms are grappling with mismatched AI productivity gains – employees are more efficient, but business performance is stagnating

UK firms are grappling with mismatched AI productivity gains – employees are more efficient, but business performance is stagnatingNews AI is providing value at an individual level, but “systems and workflows” need to be redesigned for business-wide gains

-

Dynatrace eyes IT observability gains with Bindplane acquisition

Dynatrace eyes IT observability gains with Bindplane acquisitionNews The vendor said Bindplane’s integration will help customers gain greater control over telemetry data across distributed environments