Legal professionals face huge risks when using AI at work

Lawyers were caught out with fake citations after using generative AI on a case, highlighting a lack of AI literacy in the field

US law firm Morgan & Morgan has told its staff to stop using AI because it can make up case law, warning that presenting fabricated cases in a court filing is a fireable offense.

The law firm, which specializes in personal injury claims, warned two staff members for using the technology earlier this month.

The lawyers in question have been sanctioned by a federal judge in Wyoming for citing non-existent cases in a filing against Walmart in a suit over a toy hoverboard, according to Reuters.

One of the lawyers admitted to using AI, saying it was an unintentional mistake.

While such mistakes remain rare, this is certainly not the first. Reuters cited seven instances where lawyers have been questioned or disciplined over using AI in a case in the US, while DoNotPay was slapped down for billing itself as "the world's first robot lawyer".

Meanwhile, a survey by Thomson Reuters showed 64% of law firms were already using AI for research, suggesting wide swathes of the legal profession are making use of the technology despite such serious concerns.

This isn’t the first time the potential risks of AI in the legal profession have been flagged. Last year, a report from US chief justice John Roberts highlighted the potential pitfalls and impact of generative AI in the legal field, as well as its long-term applications for workers.

Roberts’ report noted concerns over AI hallucinations and the potential damage they could cause.

Speaking to ITPro at the time, Adam Ryan, VP of Product at Litera, said legal professionals have an ethical obligation to use the technology responsibly.

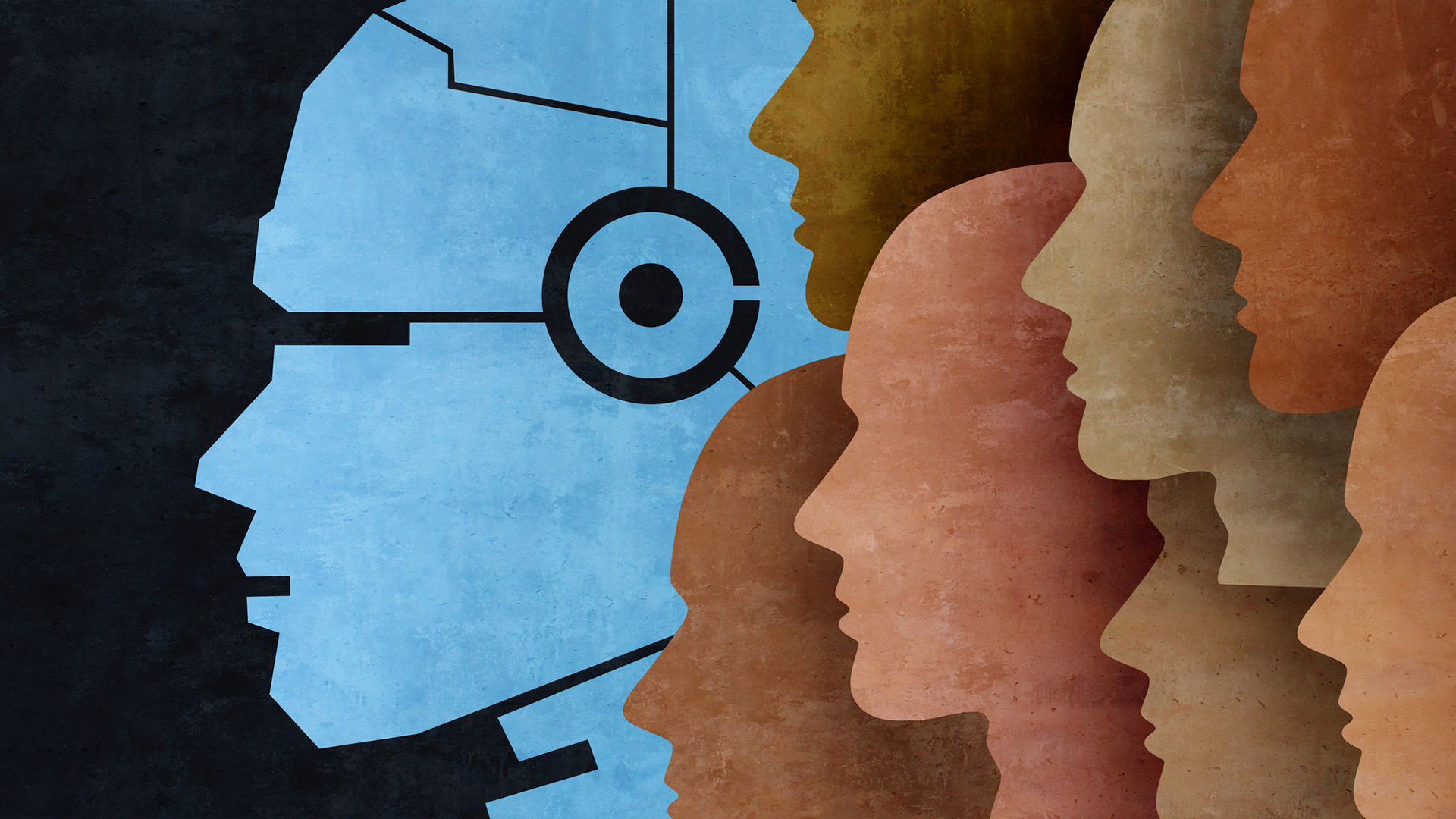

Generative AI tools such as ChatGPT use large language models (LLMs) to predict the next word, or fragment of a word, as they generate text, but they have no sense of accuracy or of what's true. To get around this, users ask for citations, which are necessary in the legal field, but LLMs will make those up too.

These fabrications are called "hallucinations", as though they were a bug, but they are simply a product of how the systems work. More advanced systems are better at returning accurate text and are now linked to the web for real-time sourcing, which makes fact checking easier.
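As a rough illustration of why a next-word predictor can produce a convincing citation that does not exist, here is a minimal Python sketch. It is a toy word-pair counter, not how ChatGPT or any production LLM is built, and the case names, `train_bigrams` and `generate` are all invented for this example.

```python
from collections import Counter, defaultdict

# Invented "case law" for illustration only; none of these are real cases.
CORPUS = [
    "Smith v. Brown (2015)",
    "Doe v. Jones (2010)",
    "Roe v. Jones (2010)",
]


def train_bigrams(lines):
    """Count, for each word, which words follow it in the training text."""
    followers = defaultdict(Counter)
    for line in lines:
        words = line.split()
        for current, nxt in zip(words, words[1:]):
            followers[current][nxt] += 1
    return followers


def generate(followers, start, max_words=6):
    """Repeatedly append the most frequent follower of the last word."""
    words = [start]
    while len(words) < max_words and words[-1] in followers:
        next_word = followers[words[-1]].most_common(1)[0][0]
        words.append(next_word)
    return " ".join(words)


model = train_bigrams(CORPUS)
citation = generate(model, "Smith")
print(citation)            # Smith v. Jones (2010)
print(citation in CORPUS)  # False: plausible-looking, but never seen in training
```

The toy model only knows which word tends to come next, so it stitches the most common pattern onto "Smith" and outputs a case that was never in its training text. Nothing in the procedure checks whether the result is true.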

The way LLMs work means they can help lawyers in their daily roles, but only if all facts are double-checked for accuracy – a point not all in the field appear to be aware of.

Testing AI on the law

To prove the point, British law firm Linklaters tested AI chatbots with 50 questions across 10 different areas of law, admitting the test was designed to be hard.

"They are the sort of questions that would require advice from a competent mid-level (two years’ post qualification experience) lawyer, specialized in that practice area; that is someone traditionally four years out of law school," the company said.

The second round of the benchmark, released earlier this month, included OpenAI o1 and Google Gemini 2.0, the most recent models from those companies. Senior lawyers marked each answer out of ten, including points for substance, clarity, and citations.

Back in 2023, out of GPT-2, GPT-3, GPT-4, and Google Bard, the best score was posted by Bard, though it only scored 4.4 out of ten. In this second round, Gemini scored six out of ten, while OpenAI o1 scored 6.4 out of ten.

"In both cases, this was driven by material increases in the scores for substance and the accuracy of citations," the company noted.

Given the low scores, it's no surprise that Linklaters warned against using either model for English law advice without expert human supervision.

"However, if that expert supervision is available, they are getting to the stage where they could be useful, for example by creating a first draft or as a cross-check. This is particularly the case for tasks that involve summarizing relatively well-known areas of law," Linklaters added.

So could these models get good enough to one day be useful on their own for legal interpretation? Linklaters noted that the rate of progression was significant, but it remained unclear if it would continue.

It's worth noting that these models are trained on generalist text, such as the wider internet. A law-bot trained specifically for use in a legal context could prove more useful than such universal tools.

Even then, LLMs might not be the best option for law firms, according to Linklaters.

"It is possible there are inherent limitations to LLMs – which are partly stochastic parrots regurgitating the internet (and other learned text) on demand," Linklaters added. "However, the fine tuning of this technology is likely to deliver performance improvements for years to come."

Lack of AI literacy

The real problem might not be AI, but the fact that so many lawyers use it without understanding its well-documented limitations.

Harry Surden, a law professor at the University of Colorado, told Reuters that these examples suggest a "lack of AI literacy", and the answer wasn't to ditch technology but to learn the limitations of generative AI.

"Lawyers have always made mistakes in their filings before AI… This is not new," he told the publication.

Indeed, for those lawyers who are aware of so-called hallucinations in LLMs, the challenge may be their own inability to spot mistakes — be they generated by humans or machines.

Dr Ilia Kolochenko, partner & cybersecurity practice lead at Platt Law LLP and fellow at the European Law Institute (ELI), said that given the widespread coverage of AI hallucinations, many legal professionals will be aware of the risk.

"The AI hallucinations phenomena has been so widely mediatized and enthusiastically discussed virtually by every single user of all social networks, that it would be unwise to hypothesize that lawyers are unaware of the hallucinations or other AI-related risks," he said.

"The true problem is that even after a thorough verification of AI-generated or AI-tuned legal content, say, a complex multijurisdictional M&A contract or a response to antitrust probe, some lawyers simply cannot spot legal mistakes related to both procedure and substance of the case."

That's one reason to avoid AI and instead speak to more experienced colleagues. But there is another: consulting colleagues is how lawyers learn, and by using AI, lawyers, as with any knowledge worker, risk over-relying on the technology.

"As a result, lawyers may not only provide defective and harmful legal advice to clients, but also gradually lose their intellectual capacities, as several recent researches consistently demonstrate," he said.

Indeed, recent research by Microsoft suggests the use of AI — including its own — at work can impact critical thinking.

AI might still have a role to play

That means that AI may have a role, but it needs to be carefully considered, Kolochenko said.

"In sum, while AI may automate and accelerate many routine legal tasks, it shall never fully replace human expertise or preclude legal professionals from continually honing their legal and related skills, otherwise, they may face harsh disciplinary actions and legal malpractice lawsuits."

Indeed, the Thomson Reuters survey suggests that there could be a wide range of uses for AI, with 47% of respondents using it for document review, 45% for disclosure, and 38% for document automation.

"The industry will gain confidence in GenAI as it continues to have a positive impact on how we work," Ryan told ITPro last year.

“Not using GenAI and LLM tools will put firms at a serious disadvantage as they will not be able to work nearly as quickly, accurately, or efficiently as firms that are leveraging these game-changing tools.”

Solomon Klappholz is a former staff writer for ITPro and ChannelPro. He has experience writing about the technologies that facilitate industrial manufacturing, which led to him developing a particular interest in cybersecurity, IT regulation, industrial infrastructure applications, and machine learning.
