Amazon Olympus could be the LLM to rival OpenAI and Google

With a reported two trillion parameters, Amazon Olympus would be among the most powerful models available

Amazon is working on the development of a new large language model (LLM) known as ‘Olympus’ in a bid to topple ChatGPT and Bard, according to reports.

Sources at the company told The Information that the tech giant is working on the LLM and has allocated both resources and staff from its Alexa AI and science teams to spearhead its creation.

Development of the model is being led by Rohit Prasad, the former head of Alexa who is now lead scientist for artificial general intelligence (AGI), according to Reuters.

Prasad moved into the role to specifically focus on generative AI development as the company seeks to contend with industry competitors such as Microsoft-backed OpenAI and Google.

According to sources, the Amazon Olympus model will have two trillion parameters. If correct, this would make it one of the largest and most powerful models currently in production.

By contrast, OpenAI’s GPT-4, the current market-leading model, reportedly has one trillion parameters.

Olympus could be rolled out as early as December and there is a possibility the model could be used to support retail, Alexa, and AWS operations.

Is Amazon Olympus the successor to Titan?

Amazon already has its Titan foundation models, which are available for AWS customers as part of its Bedrock framework.

Amazon Bedrock offers customers a variety of foundation models, including models from AI21 Labs and Anthropic, which the tech giant recently backed with a multi-billion-dollar investment.

Amazon Olympus could be the natural evolution of Amazon’s LLM ambitions. Earlier this year, the company revealed it planned to increase investment in the development of LLMs and generative AI tools.

ITPro has approached Amazon for comment.

Amazon Olympus deviates from Bedrock "ethos"

Olympus could be seen as a departure from Amazon’s AI strategy to date. Amazon Bedrock has set the firm apart from the AI ‘arms race’ between Google and Microsoft by focusing on providing as wide a range of third-party AI models as possible, in addition to its powerful Titan foundation models.

A core feature of Bedrock in demonstrations has been the ability to use multiple models across a single process like a production line, with models assigned to constituent tasks that play into their individual strengths.

The firm demonstrated this at its AWS Summit London conference, where a marketing department used a Titan model to SEO-optimize a product, Anthropic’s Claude to generate the product description, and Stability AI’s Stable Diffusion to produce the product image.
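For readers who want to picture the ‘production line’ idea, the following is a minimal sketch in Python. It does not call Bedrock or any real model: the three stage functions are hypothetical stubs that return placeholder strings, and the names `Stage` and `run_pipeline` are assumptions made for illustration only. It simply shows one task per model, with each stage able to read the output of the stages before it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Context = Dict[str, str]


@dataclass(frozen=True)
class Stage:
    output_key: str                 # where this stage's result is stored
    model_label: str                # which model the stage stands in for (label only)
    run: Callable[[Context], str]   # hypothetical stub, not a real model call


# Hypothetical stand-ins for the three models named in the demonstration.
def seo_stub(ctx: Context) -> str:
    return f"SEO title for {ctx['product']}"


def description_stub(ctx: Context) -> str:
    return f"Description based on: {ctx['seo_title']}"


def image_stub(ctx: Context) -> str:
    return f"Image prompt for: {ctx['product']}"


PIPELINE: List[Stage] = [
    Stage("seo_title", "Titan", seo_stub),
    Stage("description", "Claude", description_stub),
    Stage("image_prompt", "Stable Diffusion", image_stub),
]


def run_pipeline(stages: List[Stage], brief: Context) -> Context:
    """Run each stage in order, adding its output to a shared context."""
    ctx = dict(brief)  # copy so the caller's brief is not modified
    for stage in stages:
        ctx[stage.output_key] = stage.run(ctx)
    return ctx


result = run_pipeline(PIPELINE, {"product": "trail running shoe"})
for key, value in result.items():
    print(f"{key}: {value}")
```

In a real deployment each stub would be replaced by a call to the relevant model, but the structure of the hand-off between stages would look broadly similar.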

If the firm has diverted some of its attention to creating a ‘killer’ AI model, one that will be able to outperform most or all models on its own, one could question where that fits in with Bedrock’s ethos.

Rory Bathgate is Features & Multimedia Editor at ITPro, leading our in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

The reports stating that the model has two trillion parameters don’t stretch believability, and this would almost certainly cement Olympus as the largest LLM on the market at double the reported size of GPT-4.

Whether Olympus’ reportedly mammoth size translates into raw performance remains to be seen. LLMs are complex frameworks shaped as much by their training and fine-tuning as by the scale of the data they access, and experts in the field have been divided for years on whether bigger is better when it comes to generative AI.

For many years, it was thought that there could be a cutoff point for generative AI model size, beyond which the model would lose its specificity and begin providing vague, generalized answers. We have long since broken that barrier, and the success many companies have charted with their models has seemingly proved this sentiment wrong.

But there are also examples of smaller models performing as well as, or better than, their larger counterparts. Google’s PaLM 2, which powers its Bard chatbot, reportedly has 340 billion parameters compared to the original PaLM’s 540 billion, but has consistently outperformed its predecessor across all benchmarks and is competitive with GPT-4.
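To put the reported figures side by side, here is a short Python sketch of the arithmetic. The parameter counts are the reported numbers quoted in this article, not confirmed specifications, and the helper name `size_ratio` is an assumption made for illustration.

```python
# Reported (unconfirmed) parameter counts quoted in this article.
REPORTED_PARAMETERS = {
    "Olympus": 2_000_000_000_000,
    "GPT-4": 1_000_000_000_000,
    "PaLM": 540_000_000_000,
    "PaLM 2": 340_000_000_000,
}


def size_ratio(model: str, baseline: str) -> float:
    """Return how many times larger `model` is than `baseline`."""
    return REPORTED_PARAMETERS[model] / REPORTED_PARAMETERS[baseline]


print(f"Olympus vs GPT-4: {size_ratio('Olympus', 'GPT-4'):.2f}x")
print(f"PaLM 2 vs PaLM: {size_ratio('PaLM 2', 'PaLM'):.2f}x")
```

On these numbers, Olympus would be twice the reported size of GPT-4, while PaLM 2 is a little under two thirds the size of the model it outperformed.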

Its performance will speak louder than its spec sheet, and it’s too early to judge Olympus before its proper announcement. But the very existence of the model represents a possible major course correction for Amazon, and customers will be watching with anticipation in the months to come.

Ross Kelly is ITPro's News & Analysis Editor, responsible for leading the brand's news output and in-depth reporting on the latest stories from across the business technology landscape. Ross was previously a Staff Writer, during which time he developed a keen interest in cyber security, business leadership, and emerging technologies.

He graduated from Edinburgh Napier University in 2016 with a BA (Hons) in Journalism, and joined ITPro in 2022 after four years working in technology conference research.

For news pitches, you can contact Ross at ross.kelly@futurenet.com, or on Twitter and LinkedIn.

-

Why cyber resilience is business critical

Why cyber resilience is business criticalSponsored Podcast Leaders need to focus on resilience over prevention, in collaboration with a trusted partner

-

Why speed and performance are vital for extracting value from data

Why speed and performance are vital for extracting value from dataSponsored Businesses are mired in more complexity than ever, with the need to harness new technologies adding to the strain. But investing in enterprise infrastructure that encourages speed and high performance means faster results.

-

Microsoft joins competitors in handing over AI models for advanced testing

Microsoft joins competitors in handing over AI models for advanced testingNews US and UK government agencies will evaluate the firm's frontier models, along with those from Google and xAI

-

Google is building its own OpenClaw alternative — Remy ‘elevates the Gemini app into a true assistant’

Google is building its own OpenClaw alternative — Remy ‘elevates the Gemini app into a true assistant’News The OpenClaw-style agent, dubbed ‘Remy’, is reportedly being tested by developers internally

-

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your own

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your ownNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

Google Cloud Next 2026: Scaling AI agents

Google Cloud Next 2026: Scaling AI agentsITPro Podcast The hyperscaler is going all in on full-stack AI deployment, underpinned by in-house innovations such as TPUs

-

UK organizations are failing to move past basic AI use-cases

UK organizations are failing to move past basic AI use-casesNews Businesses in the UK are ramping up AI adoption, but they’re falling at key hurdles

-

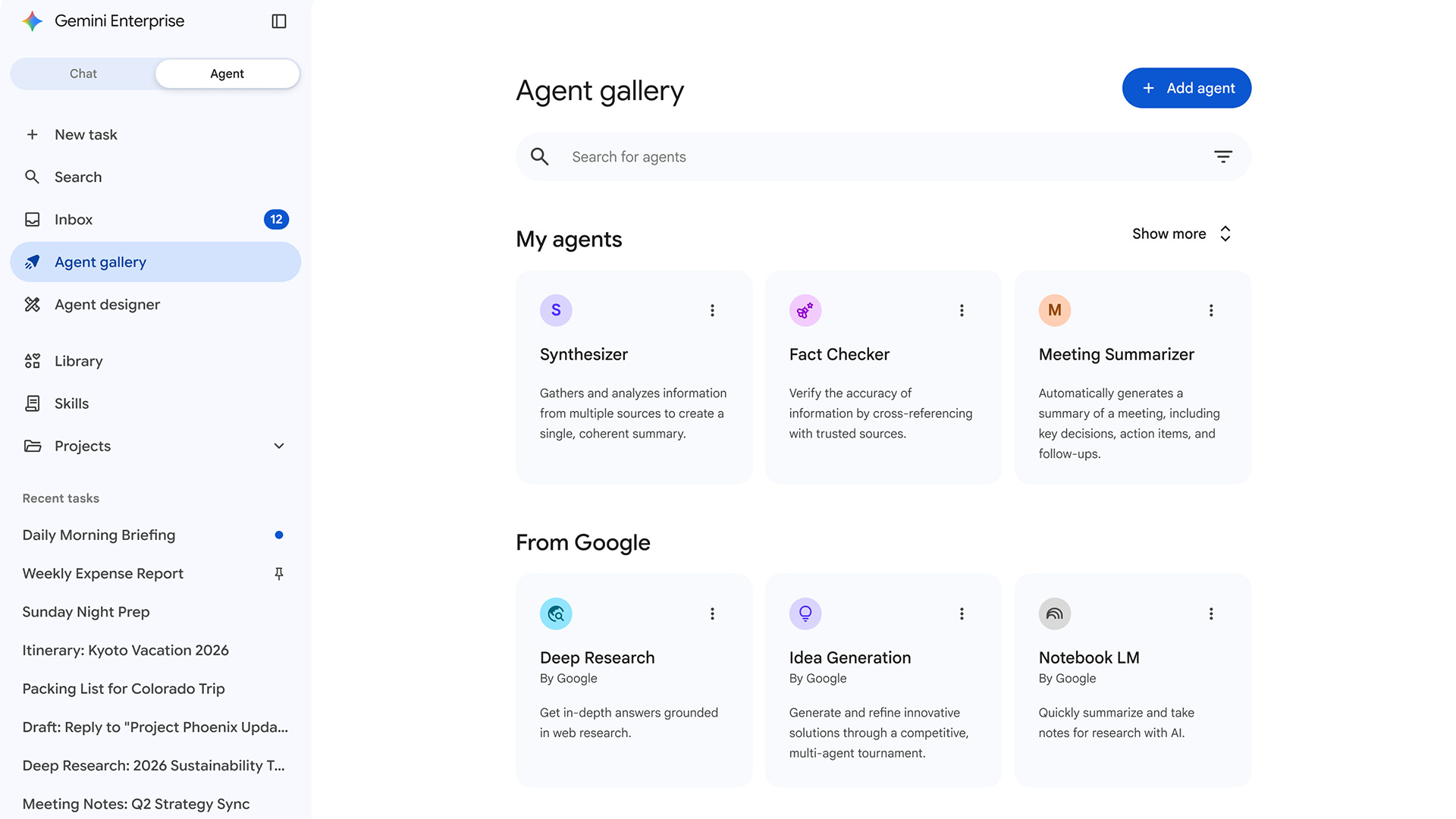

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regions

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regionsNews While some small businesses are seeing big benefits, many don't use AI at all

-

Microsoft has a new AI poster child in Anthropic – and it’s about time

Microsoft has a new AI poster child in Anthropic – and it’s about timeOpinion Microsoft is cosying up to Anthropic at a crucial time in the race to deliver on AI promises