Big Tech AI alliance has ‘almost zero’ chance of achieving goals, expert says

Companies like Microsoft, Google, and OpenAI all have competing objectives and approaches to openness, making true private-sector collaboration a serious challenge

A new partnership between Microsoft, Google, OpenAI, and Anthropic that aims to improve AI safety and responsibility is unlikely to meet its targets, according to an analyst.

The Frontier Model Forum has committed itself to the safe development of ‘frontier models’, which it defines as large machine learning models that exceed the capabilities of the most advanced models currently available.

It aims to identify AI best practices, assist the private sector, academics, and policymakers in the implementation of safety measures, educate the public, and deploy AI to fight issues such as climate change.

“It’s nice that these companies have formed this new body because the issues are challenging and common to all model creators and users,” said Avivah Litan, distinguished VP analyst at Gartner.

“But the chances that a group of diehard competitors will arrive at a common useful solution and actually get it to be implemented ubiquitously – across both closed and open source models that they and others control across the globe – is practically zero.”

At present, the Forum consists only of its founding members, but it has stated it would welcome applications from organizations that can commit to developing frontier models safely and assisting wider initiatives in the sector.

Meta, which has focused on ‘open’ AI through models such as Llama 2, and AWS, which has called for ‘democratized’ AI and favors a broad approach to model access, were absent from the group at the time of launch.


OpenAI and Microsoft have had a highly successful year of collaboration, with the industry-leading model GPT-4 having powered tools such as ChatGPT and 365 Copilot.

Google has heavily invested in its own models including PaLM 2, which it uses for its Bard chatbot. Its AI subsidiary Google DeepMind has publicly committed itself to AI safety, with CEO Demis Hassabis long having been a proponent of an ethical approach to the technology.


Anthropic has dominated headlines less than its forum co-founders, but has made waves in the industry since it was founded by ex-OpenAI members in 2021. It claims that its chatbot Claude is safer than alternatives, and has favored a cautious approach to market in contrast with its competitors.

Litan expressed hope that the companies could work to find solutions that could be enforced by governments, and stressed that an international governmental body is necessary to enforce global AI standards but will prove difficult to form.

“We haven’t seen such global government cooperation on climate change where the solutions are already known. I wouldn’t expect global cooperation and governance around AI to be any easier. In fact, it will be harder since solutions are yet unknown. At least these companies can strive to identify solutions, so that’s a good thing.”

AI regulation is progressing at different paces around the world. The UK has appointed a chair to its Foundation Model Taskforce, which seeks to identify guardrails for the technology that can be applied worldwide, but it may be falling behind the EU, which has already progressed its AI Act through several important rounds of voting.

Dr Kate Devlin, senior lecturer in social and cultural artificial intelligence in the Department of Digital Humanities at King's College London, told ITPro that the Frontier Model Forum is welcome “in principle”, and highlighted the intention of the companies involved to engage with wider partners as a good step.

“It is not yet clear what form this will take: The announcement seems to focus particularly on technical approaches, but there are much wider socio-technical aspects that need to be addressed when it comes to developing AI responsibly,” said Dr Devlin.

“It is important to ensure there is no conflict of interest when critically examining the impact this technology is having on the world.”

Dr Devlin is a member of Responsible AI UK, a program that aims to consolidate the responsible AI ecosystem and improve collaboration in the space.

Backed by the public body UK Research and Innovation, it has committed itself to research and innovation projects in socio-technical and creative fields in addition to STEM, in order to back the full potential of the UK’s AI ecosystem.

Other groups already aiming to provide safety guidelines for the use of AI include the Global Partnership on AI (GPAI), which brings together 29 countries under a rotating presidency to support the OECD’s Recommendation on Artificial Intelligence.

It works to make AI theory a reality, in line with the concerns of legislators, industry professionals, academics, and civil groups.

The Frontier Model Forum named the G7’s Hiroshima AI process as an initiative it will support. This was an agreement made at the G7 Summit in Japan, with world leaders in attendance asked to collaborate with the OECD and GPAI.

Rory Bathgate is Features and Multimedia Editor at ITPro, overseeing all in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

In his free time, Rory enjoys photography, video editing, and good science fiction. After graduating from the University of Kent with a BA in English and American Literature, Rory undertook an MA in Eighteenth-Century Studies at King’s College London. He joined ITPro in 2022 as a graduate, following four years in student journalism. You can contact Rory at rory.bathgate@futurenet.com or on LinkedIn.

-

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your own

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your ownNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

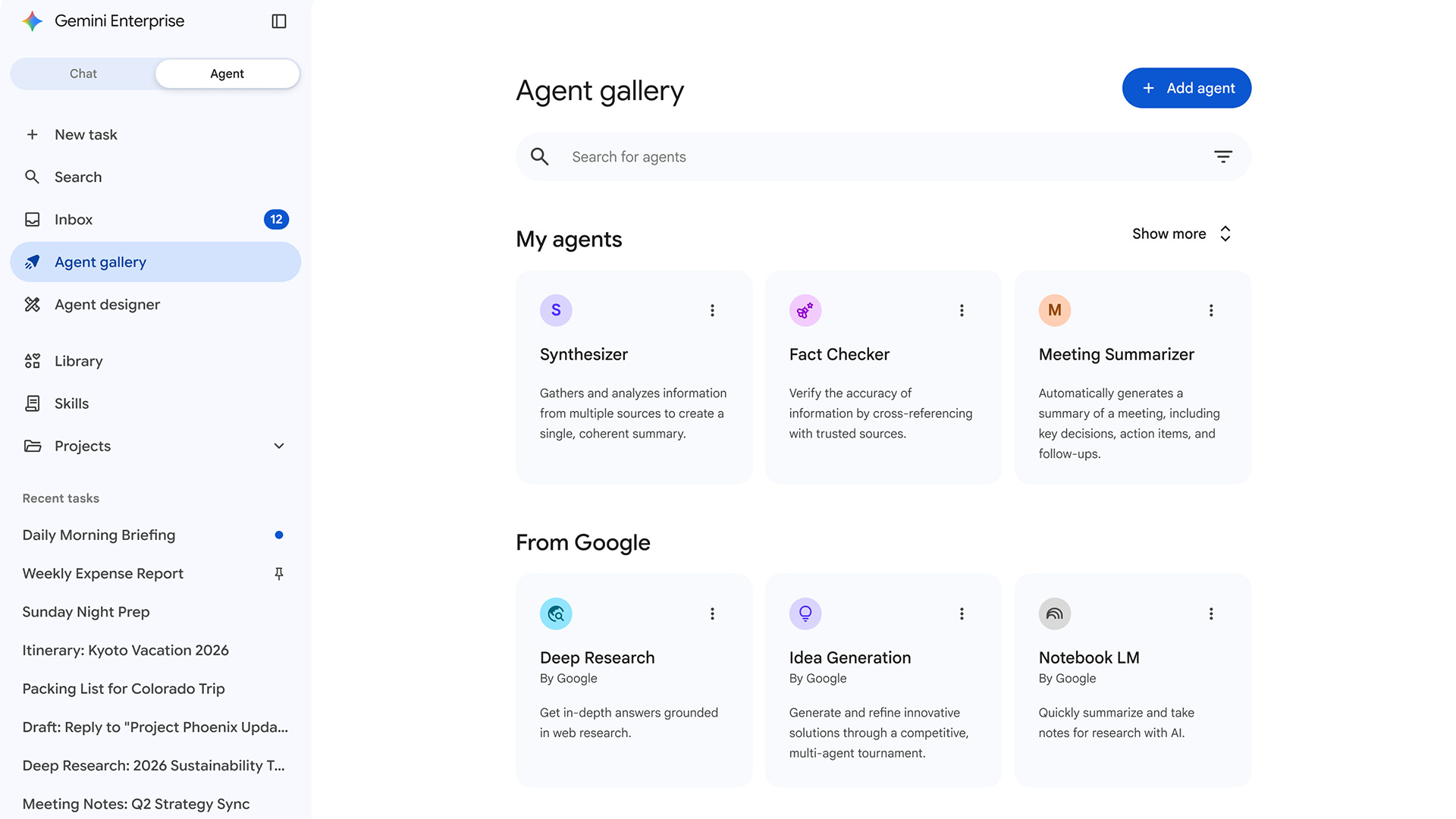

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regions

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regionsNews While some small businesses are seeing big benefits, many don't use AI at all

-

Microsoft has a new AI poster child in Anthropic – and it’s about time

Microsoft has a new AI poster child in Anthropic – and it’s about timeOpinion Microsoft is cosying up to Anthropic at a crucial time in the race to deliver on AI promises

-

Will AI hiring entrench gender bias?

Will AI hiring entrench gender bias?ITPro Podcast This International Women's Day, it's more important than ever to consider the inherent biases of training data

-

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise push

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise pushNews OpenAI and Amazon are both vying to offer customers DIY-style AI development services

-

February rundown: SaaS-pocalypse now?

February rundown: SaaS-pocalypse now?ITPro Podcast Geopolitical uncertainty is intensifying public and private sector focus on true sovereign workloads

-

‘A huge vote of confidence’: London set to host OpenAI's largest research hub outside US

‘A huge vote of confidence’: London set to host OpenAI's largest research hub outside USNews OpenAI wants to capitalize on the UK’s “world-class” talent in areas such as machine learning