Former Google CEO Eric Schmidt rejects claims AI scaling has peaked

AI doesn't have a scaling problem, despite reports to the contrary

How can the large language models (LLMs) driving the generative AI boom keep getting better? That's the question driving a debate around so-called scaling laws — and former Google CEO Eric Schmidt isn't concerned.

Scaling laws refer to how the accuracy and quality of a deep-learning model improve with size: bigger is better when it comes to the model itself, the amount of data it's fed, and the computing power behind it.

However, these scaling laws may have limits. Eventually, LLMs face diminishing returns: improvements become more expensive or stop being possible altogether, whether because of a limitation in the technology itself or for more practical reasons, such as a shortage of data.

Reports earlier this month suggested the next models from the major AI developers may reflect this, with smaller leaps forward than with previous releases.

First, a report from The Information suggested OpenAI was concerned about the next version of its model, dubbed Orion, with improvements more limited than in previous releases.

Because of that, the report claimed the company was using additional techniques for better performance beyond the model itself, including post-training tweaks.

A Bloomberg report noted similar challenges at both Google and Anthropic, with the next iteration of Google's Gemini model failing to meet expectations, while Anthropic’s challenges with the next version of its Claude model have reportedly delayed its release.

Is there a wall?

Not everyone agrees that there is a limit to these LLMs, however. Following the reports, OpenAI chief executive Sam Altman posted online insisting "there is no wall."

Altman's succinct argument was expanded on by the former leader of one of OpenAI's rivals, ex-Google CEO Eric Schmidt.

On the Diary of a CEO podcast, host Steven Bartlett asked Schmidt what worried him about LLMs in the near future. Schmidt began his answer by explaining that he believes these AI models won't face a scaling wall within the next five years.

"In five years, you'll have two or three more turns of the crank of these large language models," Schmidt said.

"These large models are scaling with an ability that is unprecedented; there's no evidence that the scaling laws, as they're called, have begun to stop. They will eventually stop, but we're not there yet," he added.

"Each one of these cranks looks like it's a factor of two, factor of three, factor of four of capability, so let's just say turning the crank all of these systems get 50 times or 100 times more powerful."

Continued scaling a "fantasy"

Given that ever-increasing power, Schmidt echoed AI safety concerns by discussing how LLMs could plan cyber attacks, create viruses, and exacerbate conflicts.

Others disagree that scaling will continue, including long-time AI skeptic Gary Marcus, a New York University professor, who wrote on his Substack that AI would hit a wall, and that continued progress at the same rate until reaching Artificial General Intelligence (AGI) was "a fantasy".

"The truth is that scaling is running out, and that truth is, at last coming out," he wrote, adding that venture capitalist Marc Andreessen had said publicly on a podcast that "we're not getting the intelligent improvements at all out of it", which Marcus interpreted as "basically VC-ese for 'deep learning is hitting a wall'."

That will mean troubling times for companies that have bet big on LLMs, such as OpenAI and Microsoft. Marcus predicted that LLMs are here to stay, but the increasing costs will change the market.

"LLMs such as they are, will become a commodity; price wars will keep revenue low," he wrote.

"Given the cost of chips, profits will be elusive. When everyone realizes this, the financial bubble may burst quickly; even Nvidia might take a hit, when people realize the extent to which its valuation was based on a false premise."

The costs associated with LLMs have also been flagged as a major concern by other industry stakeholders, such as Anthropic CEO Dario Amodei.

In July, Amodei noted that it already costs hundreds of millions of dollars to train models in some instances, and there are some “in training today” that cost closer to $1 billion.

These numbers pale in comparison to those expected in the coming years, however, with Amodei predicting that AI training costs will hit the $10 billion and $100 billion marks over the course of “2025, 2026, maybe 2027”.

Freelance journalist Nicole Kobie first started writing for ITPro in 2007, with bylines in New Scientist, Wired, PC Pro and many more.

Nicole is the author of a book about the history of technology, The Long History of the Future.

-

Kaseya shifts from AI ‘insights’ to autonomous action with new agentic platform

Kaseya shifts from AI ‘insights’ to autonomous action with new agentic platformNews The company aims to evolve from its suite of management tools into an autonomous operating system for MSPs

-

Accenture to roll-out Copilot to 700,000+ staff

Accenture to roll-out Copilot to 700,000+ staffNews Accenture will roll out Microsoft Copilot to nearly three quarters of a million employees after years of testing

-

Accenture has been trialling Microsoft Copilot since 2023 – now it’s rolling out the AI tool to all 743,000 staff

Accenture has been trialling Microsoft Copilot since 2023 – now it’s rolling out the AI tool to all 743,000 staffNews Accenture will roll out Microsoft Copilot to nearly three quarters of a million employees after years of testing

-

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your own

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your ownNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

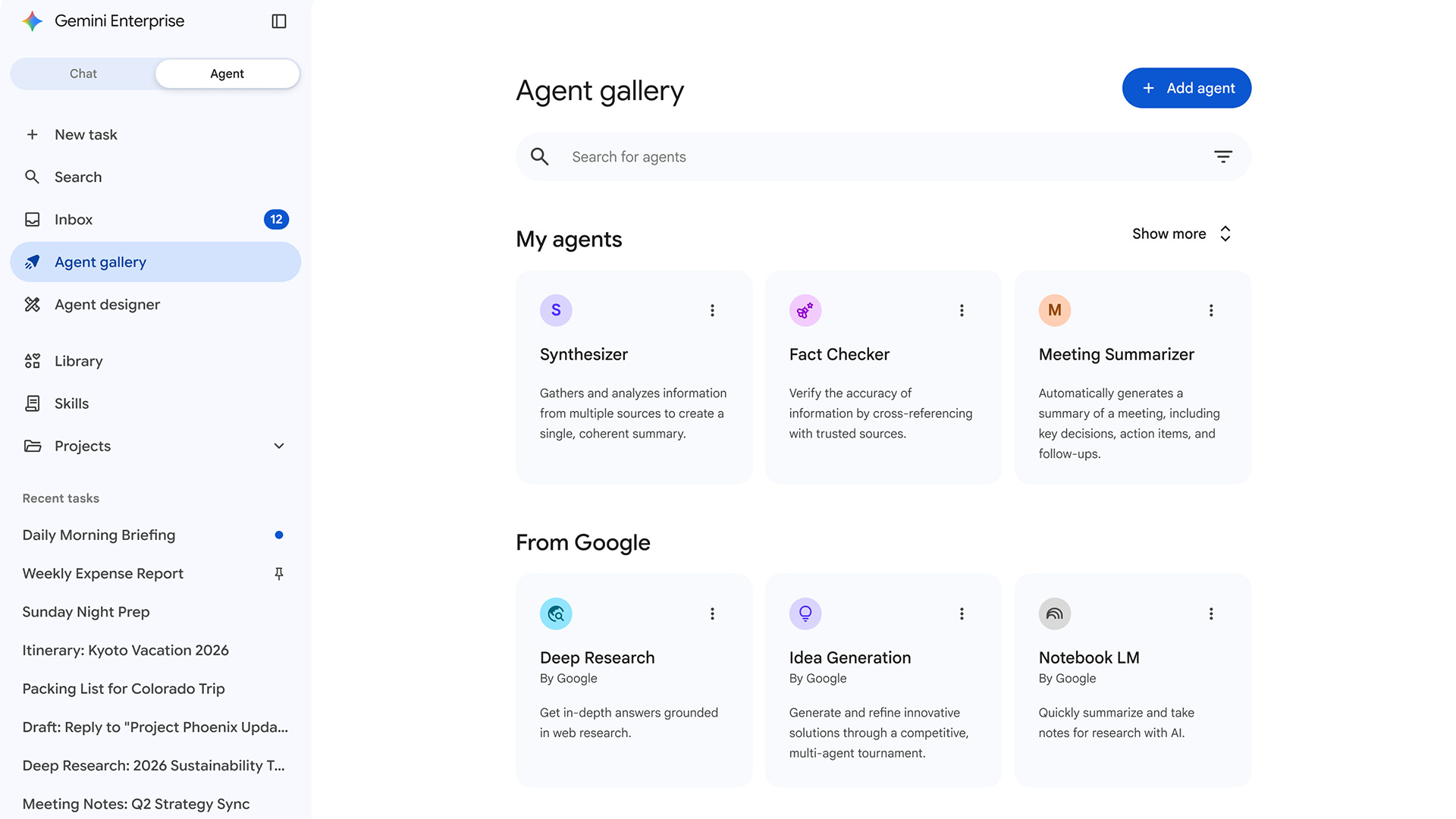

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

‘We experimented with efforts to differentially reduce these capabilities’: Anthropic toned down Opus 4.7’s cyber uses in wake of Claude Mythos release

‘We experimented with efforts to differentially reduce these capabilities’: Anthropic toned down Opus 4.7’s cyber uses in wake of Claude Mythos releaseNews Testing of new cyber-related safeguards for Anthropic’s Opus 4.7 model could shape the future public release of Claude Mythos

-

Anthropic is worried hackers could abuse its Claude Mythos AI model – so it's asking big tech partners to test it behind closed doors

Anthropic is worried hackers could abuse its Claude Mythos AI model – so it's asking big tech partners to test it behind closed doorsNews Anthropic’s Project Glasswing will give a host of leading tech companies access to its new Claude Mythos model for testing

-

'That language is no longer reflective of how Copilot is used today': Microsoft says Copilot isn't just for 'entertainment purposes only'

'That language is no longer reflective of how Copilot is used today': Microsoft says Copilot isn't just for 'entertainment purposes only'News Sharp-eyed users spotted Microsoft describing its Copilot AI as "for entertainment purposes only"

-

‘Fragmentation is poison’: How Microsoft is targeting disparate data to boost AI adoption

‘Fragmentation is poison’: How Microsoft is targeting disparate data to boost AI adoptionNews Amir Netz, the co-creator of Microsoft's Power BI service, tells ITPro that business context is key to effective AI deployment.

-

Microsoft is rolling out Copilot Cowork to more customers

Microsoft is rolling out Copilot Cowork to more customersNews Use of Copilot Cowork has been limited to select customers so far