Google Cloud targets ‘AI anywhere’ with Vertex AI Agents

AI choice across Google Cloud’s ecosystem has massively expanded, as the firm casts a wide net and emphasizes open models


Google Cloud has announced an end-to-end suite of AI updates across its platform, as it leans on its Vertex and Gemini offerings to help customers achieve ‘AI anywhere’.

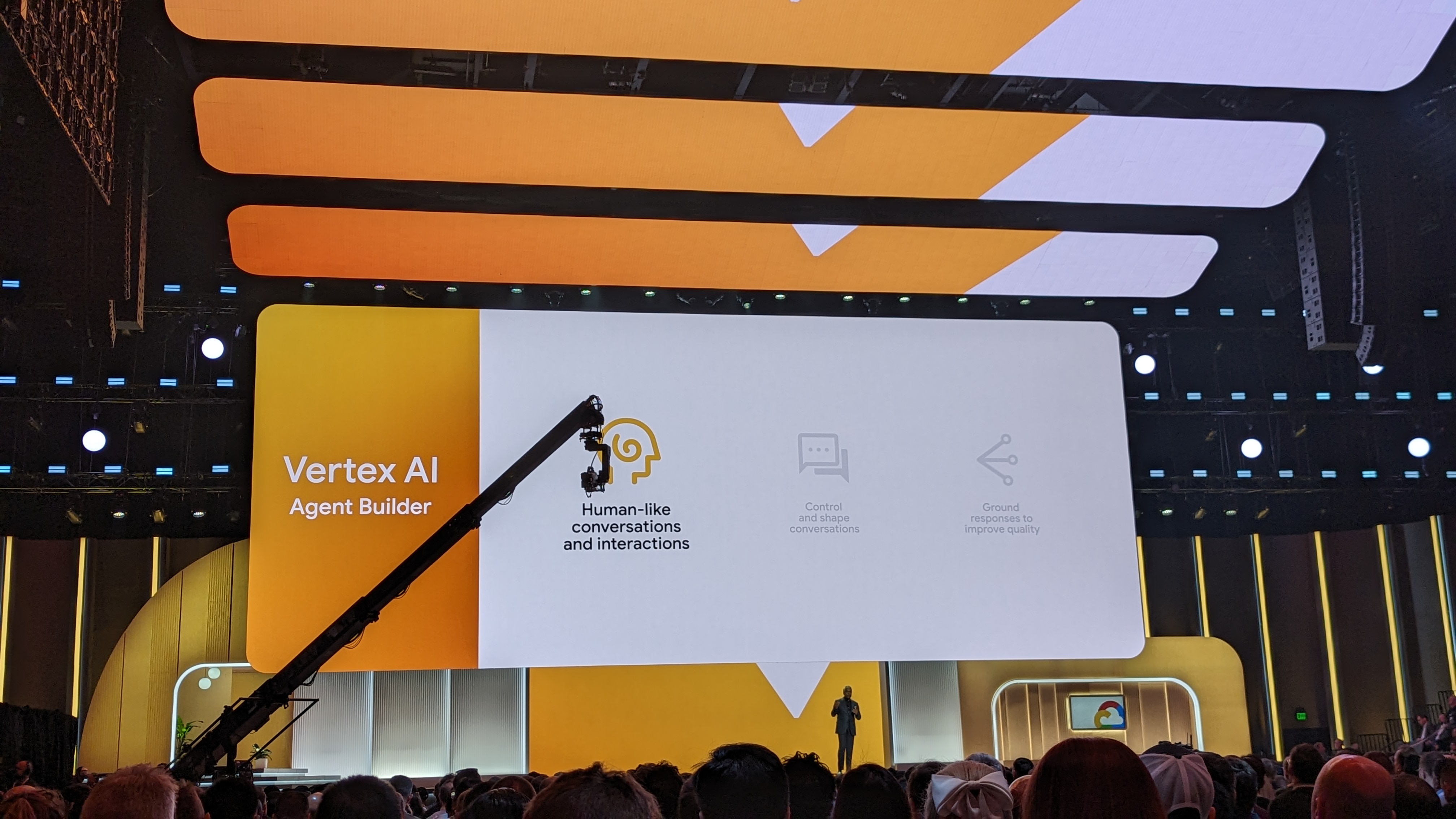

AI ‘agents’ accessible through the firm’s Vertex AI platform have been a core offering touted throughout Google Cloud Next, its annual conference, held this year at Mandalay Bay, Las Vegas.

Developers can set out custom tasks and capabilities for agents using natural language in Vertex AI Agent Builder, a new no-code solution for deploying generative AI assistants. Rooted in Google’s Gemini family of models, the agents can then complete simple or complex tasks as enterprises see fit.

In conversation with assembled media, Will Grannis, VP & chief technology officer at Google Cloud, described Vertex AI Agents as “at the top” of Google Cloud’s sophisticated AI ecosystem.

“Moving from natural language processing bots sitting on top of a website to intelligent agents that can take context from an organization, its brand guidelines, its knowledge of its own customers and use that to create new experiences, more efficient workflows, changing customer support significantly, agents as a manifestation of all that underlying innovation … it’s a world away from a year ago.”

In the opening keynote of the event, attendees were shown demos in which a Vertex AI agent could identify products within a company’s stock based on a text description of a video, or answer detailed employee questions on their company’s healthcare plan.

Via API connections, the agent could then recommend specific orthodontic clinics near to the user and pull through reviews from Google for each.


Agents are just one avenue for connecting AI with customer data, and Google Cloud has repeatedly stated its goal of providing as many choices for its customers as possible. Another can be found in advancements with Google Distributed Cloud (GDC).

An ‘AI anywhere’ approach via Google Distributed Cloud

Through updates to GDC, Google Cloud has doubled down on its desire for customers to plug AI into their data wherever it lies.

With generative AI search capabilities for GDC, businesses will be able to use natural language prompts to find enterprise data and content. Google Cloud said that as the service is based on Gemma, Google DeepMind’s lightweight, open model, all queries and outputs will remain on-prem to keep data secure.

Businesses will be able to search data in GDC edge and air-gapped environments using AI, in much the same way as Vertex AI Agents operate elsewhere in their stack.

It’s another avenue by which businesses can unlock AI in every corner of the working day, even when that involves using AI to trawl sensitive data that never interacts with the public cloud. The feature is expected to enter preview in Q2 2024.

Through its managed approach, Google Cloud also aims to bring its cloud services to its customers’ data, including at the edge. This means that, should they wish, customers can use GDC’s cross-cloud network functionality to search air-gapped data using natural language prompts as seamlessly as they could trawl data in the public cloud.

This uses Vertex AI models and a pre-configured API for optical character recognition (OCR), speech recognition, and translation.

Two more announcements centered on surfacing data with AI are Gemini in BigQuery and Gemini in Looker, which allow workers to use prompts to discover information for data analysis.

Both run on Gemini 1.5 Pro, the latest model in Google Cloud’s in-house Gemini family of large language models, which is now generally available on Vertex. The model features a sizeable 128k-token prompt window, alongside the capacity for a gigantic one million tokens in specific circumstances.
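To give a sense of scale for those two figures, the short Python sketch below estimates whether a piece of text would fit in the standard or the extended window. It is illustrative only: the four-characters-per-token ratio is a rough rule of thumb rather than Gemini's actual tokenizer, "128k" is taken to mean 128,000 tokens, and the function names are hypothetical.

```python
# Rough sizing sketch. All constants below are assumptions for illustration.
STANDARD_WINDOW = 128_000    # "128k" from the article, read as 128,000 tokens
EXTENDED_WINDOW = 1_000_000  # the one-million-token window cited for specific cases
CHARS_PER_TOKEN = 4          # crude heuristic, NOT Gemini's real tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate the token count by ceiling-dividing the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pick_window(text: str) -> str:
    """Return which window the text would need under the assumptions above."""
    tokens = estimate_tokens(text)
    if tokens <= STANDARD_WINDOW:
        return "standard"
    if tokens <= EXTENDED_WINDOW:
        return "extended"
    return "too_large"
```

Under these assumptions, a document of roughly half a million characters sits right at the edge of the standard window, while the extended window would hold around four million characters. A real deployment would count tokens with the model's own tokenizer instead of a character ratio.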

In the opening keynote, Google Cloud showed a demo in which Gemini in Looker could answer questions on correlation between sales and data and produce graphs based on this data. As Gemini 1.5 Pro is multimodal, the model can also draw information from images.

In the keynote demo, the user could find out product sales and supply chain information by asking for all results that looked similar to a specific product.

Similarly, Gemini in BigQuery can be used for powerful data analysis across an enterprise’s data using natural language inputs. As part of Google Cloud’s commitment to achieving ‘AI anywhere’ without the need for businesses to shift or sanitize their datasets, BigQuery and Looker integrate natively with Vertex AI so that models can work directly with enterprise data.

An open, mix-and-match approach to models

A key selling point of Google Cloud’s platform throughout Google Cloud Next so far has been its pursuit of the widest possible range of models, architecture choices, and avenues for training. A number of models are newly available on the platform, including all the iterations of Anthropic’s Claude 3, Google’s latest image generation model Imagen 2, and a new lightweight, open code completion model named CodeGemma.

Grannis described Vertex as a platform intended to provide customers with “almost any” model that exists and empower them to train, tune, and ground these on an enterprise’s unique data.

Businesses will be able to use Google Search to ground outputs from models, which Google Cloud maintains will help ensure models align with facts and more reliably provide verifiable information. Customers seeking to use their own data to ground models will be able to plug agents directly into information from platforms such as Salesforce or BigQuery.
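The general idea behind grounding can be sketched in a few lines of Python. The example below is a simplified illustration, not Vertex AI's implementation or API: it picks the most relevant snippets from a small in-memory list using plain keyword overlap, then builds a prompt that tells the model to answer only from those numbered sources. The function names and the sample documents are made up for the purpose.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, k=2):
    """Return up to k documents sharing the most words with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop non-matches
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def build_grounded_prompt(query, documents, k=2):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    lines = ["Answer using only the sources below and cite them by number."]
    for number, doc in enumerate(retrieve(query, documents, k), start=1):
        lines.append(f"[{number}] ({doc['id']}) {doc['text']}")
    lines.append(f"Question: {query}")
    return "\n".join(lines)


# Hypothetical enterprise snippets, invented for this example.
DOCS = [
    {"id": "hr-1", "text": "The dental plan covers orthodontic treatment up to a yearly limit."},
    {"id": "it-7", "text": "Laptops are refreshed every three years."},
]

prompt = build_grounded_prompt("Does the dental plan cover orthodontic work?", DOCS)
```

Here only the healthcare snippet is passed to the model, and the numbered source gives it something to cite. Production systems typically replace keyword overlap with semantic or web search, but the shape of the prompt stays much the same.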

“One of the more profound things in that layer is the grounding,” Grannis said.

“So Google Search native with the Gemini API as well as authoritative search for your own documents, your unstructured data, structured data, all part of Vertex AI. So taking a model and actually making it useful in the context of an industry or use case.”

“The real outcomes don’t come from the first shot,” said Grannis. “The real outcomes come from iterative development and exploring what these models can do, what they don’t do, what data you have and don’t have, and being able to combine that insight into your development workflow.”

“Think of Vertex as an opinionated MLOps platform that democratizes experimentation and generative AI,” added Grannis.

Rory Bathgate is Features and Multimedia Editor at ITPro, overseeing all in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

In his free time, Rory enjoys photography, video editing, and good science fiction. After graduating from the University of Kent with a BA in English and American Literature, Rory undertook an MA in Eighteenth-Century Studies at King’s College London. He joined ITPro in 2022 as a graduate, following four years in student journalism. You can contact Rory at rory.bathgate@futurenet.com or on LinkedIn.
