Google’s Hugging Face partnership shows the future of generative AI rests on open source collaboration

Closer ties between Google and Hugging Face show the tech giant is increasingly bullish on open source AI development

Google’s new partnership with Hugging Face marks another significant nod of approval from big tech on the potential of open source AI development, industry experts have told ITPro.

The tech giant recently announced a deal that will see the New York-based startup host its AI development services on Google Cloud.

Hugging Face, which describes the move as part of an effort to “democratize good machine learning”, will now collaborate with Google on open source projects using the tech giant’s cloud services.

With such a sizable number of models already available on Hugging Face, the attraction for Google is clear, Gartner VP analyst Arun Chandrasekaran told ITPro. The deal is also another example of a major industry player forging closer ties with high-growth AI startups.

“Hugging Face is the largest hosting provider of open source models on the planet - it's basically GitHub for AI,” he said.

“This is why big firms like Google want to partner with it, because it can leverage the huge range of models available on the platform,” he added.

For Hugging Face, the deal will offer speed and efficiency for its users through Google’s cloud services.

Many Hugging Face users already use Google Cloud, the firm said, and this collaboration will grant them a greater level of access to AI training and deployment through Google Kubernetes Engine (GKE) and Vertex AI.

Google is also nipping at the heels of AWS with this partnership. In February 2023, Amazon’s cloud subsidiary announced a similar agreement with Hugging Face to accelerate the training of LLMs and vision models.

Again, the MO was one of AI altruism. Both Hugging Face and AWS cited a desire to make it “easier for developers to access AWS services and deploy Hugging Face models specifically for generative AI applications”.

Big tech firms have sharpened their focus on open source AI development over the last year, and Chandrasekaran pointed to a number of contributing factors, particularly the push for broader adoption of generative AI.

“Rewind back nine months, and the AI landscape was dominated by closed source LLMs - think OpenAI or, to a lesser extent, Google,” he said.

“Since then, open source models have increased in volume and quality. Increasingly, they’re being used for enterprise because open source content is often licensed for commercial use,” he added. “At the same time, the quality of the actual models is improving.”

While open source models aren’t licensed for commercial use by definition, they are more likely to be than their closed source counterparts. This makes them an attractive option for enterprises, as businesses know they can roll out models freely within the company or on the open market.

Meta, for example, was among the first major tech firms to make a big statement on open source AI development last year with the launch of Llama 2.

Google eyes open source as the ticket to overcoming 'AI obstacles'

With issues of cost and scalability, as well as increasing regulation on the horizon, open source appears to address many of the big questions hanging over AI.

“Why is everyone interested in open source AI? The same reason they’re interested in open source in general,” Chandrasekaran said.

“It allows customizability, ease of use, and adaptability in the face of regulation,” he added.

Matt Barker, global head of cloud native services at Venafi, told ITPro there’s already a symbiotic relationship between open source and AI.

“Open source is already inherent in the foundations of AI,” he said. “Open source is foundational to how they run. Kubernetes, for example, underpins OpenAI,” he added.

“Open source code itself is also getting an efficiency boost thanks to the application of AI to help build and optimize it. Just look at the power of applying Copilot by GitHub to coding, or K8sGPT to Kubernetes clusters. This is just the beginning.”

George Fitzmaurice is a former Staff Writer at ITPro and ChannelPro, with a particular interest in AI regulation, data legislation, and market development. After graduating from the University of Oxford with a degree in English Language and Literature, he undertook an internship at the New Statesman before starting at ITPro. Outside of the office, George is both an aspiring musician and an avid reader.

-

Cohere's Aleph Alpha merger could create a transatlantic sovereign AI powerhouse

Cohere's Aleph Alpha merger could create a transatlantic sovereign AI powerhouseAnalysis The merger between Cohere and Aleph Alpha aims to capitalize on the burgeoning sovereign AI market

-

Everything you need to know about OpenAI's new workspace agents

Everything you need to know about OpenAI's new workspace agentsNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

UK organizations are failing to move past basic AI use-cases

UK organizations are failing to move past basic AI use-casesNews Businesses in the UK are ramping up AI adoption, but they’re falling at key hurdles

-

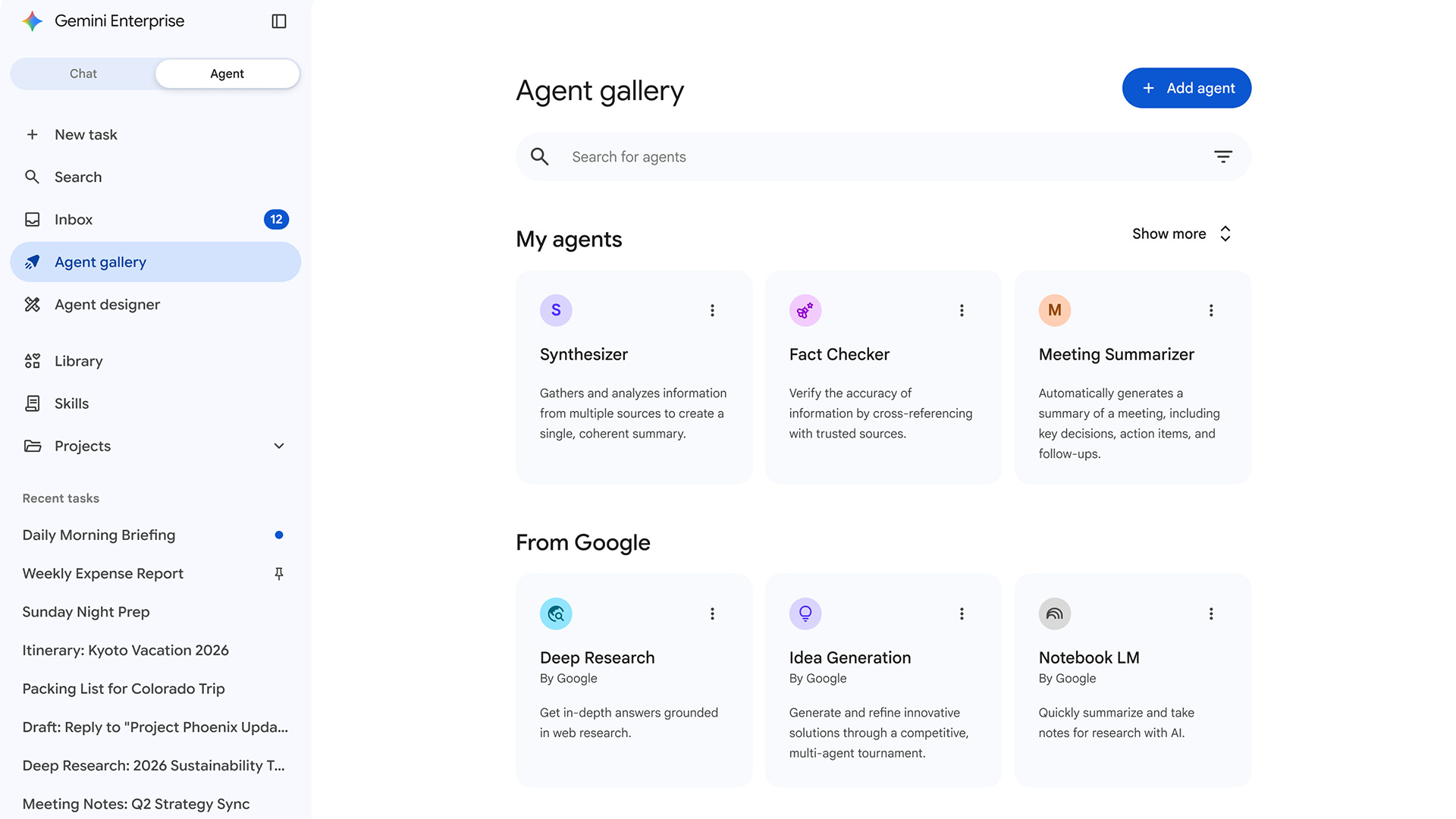

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise push

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise pushNews OpenAI and Amazon are both vying to offer customers DIY-style AI development services

-

Google says hacker groups are using Gemini to augment attacks – and companies are even ‘stealing’ its models

Google says hacker groups are using Gemini to augment attacks – and companies are even ‘stealing’ its modelsNews Google Threat Intelligence Group has shut down repeated attempts to misuse the Gemini model family

-

‘The fastest adoption of any model in our history’: Sundar Pichai hails AI gains as Google Cloud growth, Gemini popularity surges

‘The fastest adoption of any model in our history’: Sundar Pichai hails AI gains as Google Cloud growth, Gemini popularity surgesNews The company’s cloud unit beat Wall Street expectations as it continues to play a key role in driving AI adoption

-

Amazon’s rumored OpenAI investment points to a “lack of confidence” in Nova model range

Amazon’s rumored OpenAI investment points to a “lack of confidence” in Nova model rangeNews The hyperscaler is among a number of firms targeting investment in the company

-

‘In the model race, it still trails’: Meta’s huge AI spending plans show it’s struggling to keep pace with OpenAI and Google – Mark Zuckerberg thinks the launch of agents that ‘really work’ will be the key

‘In the model race, it still trails’: Meta’s huge AI spending plans show it’s struggling to keep pace with OpenAI and Google – Mark Zuckerberg thinks the launch of agents that ‘really work’ will be the keyNews Meta CEO Mark Zuckerberg promises new models this year "will be good" as the tech giant looks to catch up in the AI race

-

DeepSeek rocked Silicon Valley in January 2025 – one year on it looks set to shake things up again with a powerful new model release

DeepSeek rocked Silicon Valley in January 2025 – one year on it looks set to shake things up again with a powerful new model releaseAnalysis The Chinese AI company sent Silicon Valley into meltdown last year and it could rock the boat again with an upcoming model