What is big data?

Big data is a big deal for the business world - here's what it's all about...

Tech giants are the new robber barons, a term once applied to the railroad operators and oil prospectors of the 19th century, and their stock in trade isn't gold or land but data.

It's being created every time you type a URL into a browser, file an email, or organize a work flight. It's filling data centers around the world and driving demand for new ones, with 2023 seeing record construction in the North American data center market, per CBRE data.

So-called because of its vastness, big data is the art and science of organizations, governments, and businesses learning more than they ever realized they needed to know about their markets and users.

Historical context of big data

The advent of the computer during the Second World War, connected digital networks in the 1960s, and the World Wide Web in the 1990s laid the groundwork for modern big data.

Digital services have created a sea of information about the way people engage with peers, products, and society, and forward-thinking digital operators soon realised that competitive advantages capable of making or breaking entire business models could be teased out of it.

That meant collecting data, converting countless file types into compatible formats, and applying all-new tools to shine a light on our collective behaviour, buying patterns, social concerns, and preferences on a mass scale. The result was the hottest information science of the 21st century.

Elements of big data

Big data doesn't just mean a lot of data – it has unique and specific properties. Definitions depend, to some extent, on the application, but big data can usually be defined by the five 'Vs'.

Volume

Volume is big data's most notable characteristic. Most data in the past was generated by employees in organisations, but today it comes from systems, networks, social media, internet of things (IoT) devices, and ecommerce.

The McKinsey Institute recognised back in 2016 that the world's data was doubling every two years, and as of last year we were generating 1,000 petabytes every day.

Velocity

Because of the speed of markets and consumer behaviour today, data is out of date as soon as it's generated.

So the term big data doesn't only refer to quantity – it's also about constant generation and propagation. Getting the most out of it means controlling and shaping its pipeline into your organisation. This allows you to make decisions based on up-to-the-minute information that accounts for changes and themes as they emerge in real time.
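One common way to keep a metric "up to the minute" is to count only the events that fall inside a recent time window and discard the rest. The sketch below is illustrative only: the class name `SlidingWindowCounter`, the 60-second window, and the assumption that events arrive in timestamp order are all hypothetical choices, not part of any particular product.

```python
from collections import deque


class SlidingWindowCounter:
    """Counts events seen in the last `window_seconds` (illustrative sketch).

    Assumes timestamps are given in seconds and arrive in non-decreasing order.
    """

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events = deque()  # timestamps still inside the window

    def add(self, timestamp: float) -> None:
        """Record one event and drop any that have aged out of the window."""
        self._events.append(timestamp)
        self._evict(timestamp)

    def count(self, now: float) -> int:
        """Return how many recorded events are still inside the window at `now`."""
        self._evict(now)
        return len(self._events)

    def _evict(self, now: float) -> None:
        # Events at or before (now - window) are considered stale.
        cutoff = now - self.window_seconds
        while self._events and self._events[0] <= cutoff:
            self._events.popleft()


# Hypothetical usage: four page views, counted over a 60-second window.
counter = SlidingWindowCounter(window_seconds=60)
for ts in (0, 10, 50, 70):
    counter.add(ts)
recent_views = counter.count(now=70)
```

Real streaming platforms handle out-of-order events, persistence, and scale, none of which this sketch attempts.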

Variety

Pre-digital data was structured – entries in a ledger or addresses in a list – and was recorded and handled according to its intended use. Today, data in popular database formats is similarly organised, but big data includes all the unstructured information as well: the chaos of social media posts, JPEG images, email files and more, whose underlying formats weren't devised to communicate easily with each other.

Organisations don't only need a new kind of integration infrastructure to bring it all under control, but also people with the know-how. This creates demand for a new class of data professional.

Veracity

Sometimes data can come into the organisation at such pace and volume that it can be hard to be sure of its quality. The term 'rubbish in, rubbish out' very much applies, and if you're using subpar or incomplete data you might end up with an outcome that's incomplete or flat out wrong.

That means every big data effort needs a QA process to verify, and in some cases 'clean', data to ensure the best possible analysis.
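As a rough illustration of what such a QA step might look like, the sketch below splits incoming records into clean and rejected sets using three simple rules. The field names (`user_id`, `timestamp`, `amount`) and the rules themselves are assumptions made up for the example; a real pipeline would define its own schema and checks.

```python
from typing import Iterable

# Hypothetical required fields for an illustrative transaction record.
REQUIRED_FIELDS = ("user_id", "timestamp", "amount")


def clean_records(records: Iterable[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into (clean, rejected) using simple quality rules."""
    clean, rejected = [], []
    seen = set()
    for record in records:
        # Rule 1: every required field must be present and non-empty.
        if any(record.get(field) in (None, "") for field in REQUIRED_FIELDS):
            rejected.append(record)
            continue
        # Rule 2: the amount must parse as a non-negative number.
        try:
            amount = float(record["amount"])
        except (TypeError, ValueError):
            rejected.append(record)
            continue
        if amount < 0:
            rejected.append(record)
            continue
        # Rule 3: reject repeats of the same (user_id, timestamp) pair.
        key = (record["user_id"], record["timestamp"])
        if key in seen:
            rejected.append(record)
            continue
        seen.add(key)
        # Store the amount in a consistent numeric type.
        clean.append({**record, "amount": amount})
    return clean, rejected
```

Keeping the rejected records, rather than silently dropping them, makes it possible to measure how much of the incoming data fails each check.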

Value

Capturing and collating data is half the job. The other half is a robust analysis plan that establishes exactly what you're going to ask your data to do. That means you need to be sure the expense and time will be worth the value you'll get back. Simulate, test, and weigh up the costs and benefits carefully before you scale.

Benefits of big data

Imagine conducting a survey twice over. The first time, you ask three close friends who are of similar age, gender, and socioeconomic status, and in the second survey, you poll tens of thousands of participants online.

The first result will be next to useless because larger sample sizes give you greater accuracy thanks to ever-finer detail in the metric ranges. Big data is like the biggest survey ever conducted where, not only are we looking for the answer, the data itself helps us formulate the questions.
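The effect of sample size can be put into rough numbers with the standard margin-of-error formula for a survey proportion at 95% confidence. This is a sketch under stated assumptions: it uses the normal approximation (which is crude for very small samples), assumes a simple random sample, and takes 20,000 as a stand-in for "tens of thousands". It says nothing about the separate problem of bias from asking three similar friends.

```python
import math


def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a surveyed proportion.

    Uses the normal approximation z * sqrt(p * (1 - p) / n).
    proportion=0.5 is the worst case (widest margin).
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


# Compare the two surveys described above (20,000 is an assumed figure).
for n in (3, 20_000):
    print(f"n = {n:>6}: +/- {margin_of_error(n):.1%}")
```

With three respondents the margin is around 57 percentage points either way, which is effectively no information; with 20,000 it falls below one point.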

The greatest benefit it offers is a deeper understanding of what's going on in your market or sector and the way customers engage with your company or even individual products. Trends and patterns you might not have foreseen (or even known existed) will reveal themselves, letting you capitalize on them faster and more efficiently.

Big data also lets you isolate and action individual elements of your business to make improvements. If you store sensitive data, it can protect you (and users) against fraudulent transactions. It can tell you what products or services your users want. It can even be used to make your data storage needs cheaper and more efficient by telling you what data to retain.

Challenges of big data

With volume comes complexity. Even putting aside the countless different file formats, wrangling it all into a coherent whole that we can plumb for its secrets is no small feat.

This is why we have seen the rise of the data analyst and data scientist, specialised roles in very technical domains. While data is easy to produce and getting easier to collect, it's certainly not cheap to action when you take the standard salaries into account – $103k for a data scientist and $80k-$110k for a data analyst. It's no wonder the roles are a permanent feature on our annual list of the highest-paying technology jobs.

That means the biggest challenge is doing your cost/benefit analysis. Will the financial advantage of big data be worth the cost of using it?

Once you have your data handler installed, the sheer size and intricacy of the job is the next hurdle. Big data doesn't just come from everywhere; it can be coming into your organisation constantly, and it's getting faster all the time. In 2020 we created or shared 64.2 zettabytes of data. This year we're projected to hit 147ZB, and next year, 181ZB.

RELATED WHITEPAPER

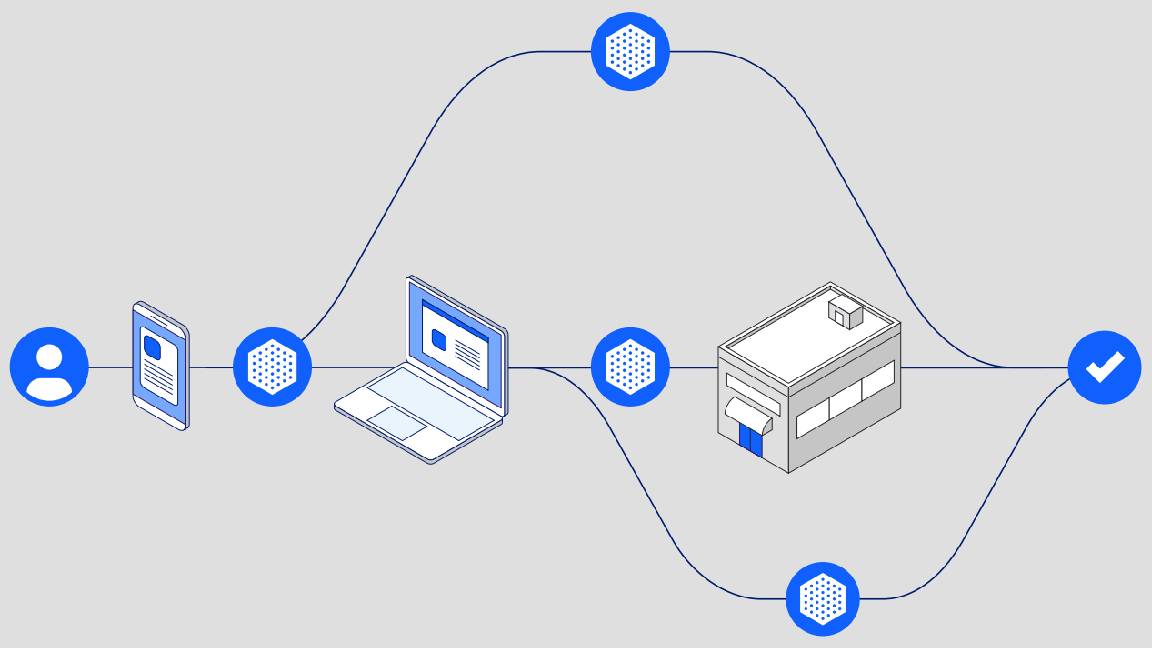

That means big data initiatives need platforms to shape information from disparate sources into a usable form, and the right intelligence tools to get accurate answers. That's not only an extra expense, it also takes considerable time to action. Therefore, the next challenge is figuring out if your big data efforts will enable you to move fast enough to get ahead of trends, problems, opportunities, or competitors.

And once you have all that in place, how do you keep it secure? Big data means ports and channels open to countless other source domains, and a rapidly expanding attack surface. You might also face strict data control laws like Europe's GDPR, if you host private information or operate in certain regions, which will place restrictions and expectations on your data policies. Security should be one of your top five big data initiatives.

Even bigger data

According to one market research firm, the big data market was worth US$164.5 billion in 2021 and will reach US$337.2 billion by 2028, a CAGR of a little over 10%.
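The compound annual growth rate (CAGR) can be checked from the two market figures quoted above. The function below is a minimal sketch of the standard formula, (end / start) ^ (1 / years) - 1; the function and variable names are chosen for illustration.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the text, in billions of US dollars, over 2021 to 2028.
market_cagr = cagr(164.5, 337.2, 2028 - 2021)
print(f"{market_cagr:.1%}")  # prints 10.8%
```

The result, about 10.8% a year, is consistent with the "little over 10%" quoted.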

But even that might be a conservative estimate. AI is only now going mainstream, and it's prompting a more frenzied demand for next-gen microchips, data centres, and bandwidth. Algorithms won't only generate an entirely new wave of information, they'll be essential partners in collecting and interrogating it for insight. This will have enormous implications for big data and the considerations listed above.

More on big data

- What is big data analytics?

- How Copenhagen Airport became a big data powerhouse

- How LaLiga championed big data to transform data analytics in sport

Drew Turney is a freelance journalist who has been working in the industry for more than 25 years. He has written on a range of topics including technology, film, science, and publishing.

At ITPro, Drew has written on the topics of smart manufacturing, cyber security certifications, computing degrees, data analytics, and mixed reality technologies.

Since 1995, Drew has written for publications including MacWorld, PCMag, io9, Variety, Empire, GQ, and the Daily Telegraph. In all, he has contributed to more than 150 titles. He is an experienced interviewer, features writer, and media reviewer with a strong background in scientific knowledge.
