Dell CTO: AI is nothing compared to the oncoming quantum storm

Dell CTO John Roese says it's 'disturbing' how badly prepared businesses are to make the most of AI, with poorly curated data fueling current models

Businesses must be more aware of the data requirements for artificial intelligence (AI), and use this period of focus on AI risks to prepare for the quantum computing ‘threat’.

That’s according to John Roese, global chief technology officer (CTO) at Dell, who shines a light on the main challenges businesses face when adopting AI models, and the lessons they can learn from the deployment of generative AI.

Roese acknowledges the computing bottleneck associated with training AI models, but denies this is the main hurdle holding businesses back when deploying the technology.

“The bigger issue for an enterprise use of a large language model (LLM) is in order to train it, you have to have access to the right data and provide the data to the training infrastructure,” he tells ITPro at Dell Technologies World 2023. “Most customers have not done enough work on their data management.”

Stripping out bias

As an example of good data management, Roese cites Dell’s work over the past four years to eliminate non-inclusive language, including labels like ‘whitelist’ and ‘blacklist’, from its content library and internal code environment.

If an LLM were trained on the firm’s content repository, Roese explains, it would likely incorporate the biases of these words. Firms that don’t curate data before using it to train AI models intended for products such as chatbots could inadvertently create services that reflect an inherent racism or misogyny, as demonstrated by Microsoft’s Tay scandal.

“If you want to create a chatbot or an LLM that reflects your dataset, it will reflect your dataset – the good, the bad, and the ugly – and it’s important to know that you've created a dataset that is reflecting your values.”
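The kind of curation Roese describes can be sketched in a few lines of Python. This is a minimal illustration, not Dell's actual process: only the terms ‘whitelist’ and ‘blacklist’ come from the article, while the suggested replacements and the function names `find_flagged_terms` and `curate` are assumptions made for the example.

```python
import re

# Hypothetical term map. Only 'whitelist' and 'blacklist' are named in the
# article; the replacement terms are illustrative assumptions.
REPLACEMENTS = {
    "whitelist": "allowlist",
    "blacklist": "denylist",
}

# Match any flagged term at the start of a word, ignoring case,
# so that 'whitelisted' is caught as well as 'whitelist'.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, REPLACEMENTS)) + r")",
    re.IGNORECASE,
)


def find_flagged_terms(text):
    """Return (line_number, term) pairs for every flagged term in text."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in _PATTERN.finditer(line):
            hits.append((line_number, match.group(0).lower()))
    return hits


def curate(text):
    """Replace each flagged term with its suggested alternative.

    Replacements are always lowercase; original capitalization is not kept.
    """
    return _PATTERN.sub(lambda m: REPLACEMENTS[m.group(0).lower()], text)


if __name__ == "__main__":
    sample = "Add the host to the whitelist.\nThe blacklist is empty."
    print(find_flagged_terms(sample))
    print(curate(sample))
```

A report from something like `find_flagged_terms` would let a team review hits before changing anything, which matters because a blind find-and-replace can break identifiers in code or alter quoted material.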

Roese also notes LLMs work best with unstructured data, as neural networks seek to create connections of their own rather than relying on arbitrary structures. As a result, he says, businesses need to ensure that their data is “sitting in the right place” to be used for training to avoid having to spend lots of time restructuring data down the line.

Are the majority of firms aware of these requirements at present? “No, they're not,” Roese admits. “And that's very disturbing to be perfectly honest.”

AI is a dress rehearsal for quantum

Generative AI has been hailed as one of the most significant technological developments of the century. At Dell Technologies World 2023, CEO Michael Dell compared it to the invention of the internet or PC.

In recent months, many have highlighted the risks of generative AI, with analysts calling it an existential threat, and pioneers calling for a temporary development pause.

But Roese recommends businesses use the big upheaval generative AI has triggered as a “learning experience” to better position themselves for future technologies that will disrupt the sector to a far greater extent.

“Everybody's talking about generative AI as if it is the destination – but it isn’t,” Roese stresses, arguing people are so “shell-shocked” by the headline-grabbing technology that they have failed to give proper thought to what comes next. “The answer to that is actually quite simple in my mind, it's quantum,” he continues.

The primary use case for quantum, Roese explains, is clear: quantum machine learning. He notes that while generative AI is branded as “disruptive” and is sparking fear in some, it’s just the logical progression of existing technologies.

“Imagine if it now ran at five orders of magnitude higher performance. And that, inevitably, is coming.”

What is the 'steal now, crack later' threat?

Although we don't expect quantum computers to be widely available for many years, cyber criminals are already stealing encrypted data in the hope of decrypting it once the technology matures.

Maintaining cyber security in the face of developments in quantum computing is something that will become increasingly important as we approach the 2030s.

Experts suggest it’s a matter of when, not if, encryption standards such as AES break down. Once encryption is cracked, the security of the data it protects will be wholly undermined.

The private sector isn’t alone in the race to quantum, as many nation-states have already announced huge investments aimed at proactive quantum development and adoption.

In the Spring Statement, UK Chancellor of the Exchequer Jeremy Hunt announced a £900 million ($1.1 billion) fund for quantum computing research, as part of a wider £2.5 billion ($3.1 billion) investment program for UK quantum.

Companies will have to navigate this disruption in the near future, Roese warns, and would do well to use the choppy waters of generative AI as a dress rehearsal for weathering the coming storm of quantum computing.

Rory Bathgate is Features and Multimedia Editor at ITPro, overseeing all in-depth content and case studies. He can also be found co-hosting the ITPro Podcast with Jane McCallion, swapping a keyboard for a microphone to discuss the latest learnings with thought leaders from across the tech sector.

In his free time, Rory enjoys photography, video editing, and good science fiction. After graduating from the University of Kent with a BA in English and American Literature, Rory undertook an MA in Eighteenth-Century Studies at King’s College London. He joined ITPro in 2022 as a graduate, following four years in student journalism. You can contact Rory at rory.bathgate@futurenet.com or on LinkedIn.

-

Lloyds Bank touts quantum potential in anti-fraud activities

Lloyds Bank touts quantum potential in anti-fraud activitiesNews The bank said quantum algorithms showed long‑term promise, especially when used to complement AI and classical machine learning

-

IBM is targeting 'quantum advantage' in 12 months – and says useful quantum computing is just a few years away

IBM is targeting 'quantum advantage' in 12 months – and says useful quantum computing is just a few years awayNews Leading organizations are already preparing for quantum computing, which could upend our understanding of linear mathematical problems

-

Sundar Pichai thinks commercially viable quantum computing is just 'a few years' away

Sundar Pichai thinks commercially viable quantum computing is just 'a few years' awayNews The Alphabet exec acknowledged that Google just missed beating OpenAI to model launches but emphasized the firm’s inherent AI capabilities

-

Future-proofing cybersecurity: Understanding quantum-safe AI and how to create resilient defenses

Future-proofing cybersecurity: Understanding quantum-safe AI and how to create resilient defensesIndustry Insights Practical steps businesses can take to become quantum-ready today

-

IBM and AMD are teaming up to champion 'quantum-centric supercomputing' – but what is it?

IBM and AMD are teaming up to champion 'quantum-centric supercomputing' – but what is it?News The plan is to integrate the two technologies to create scalable, open source platforms

-

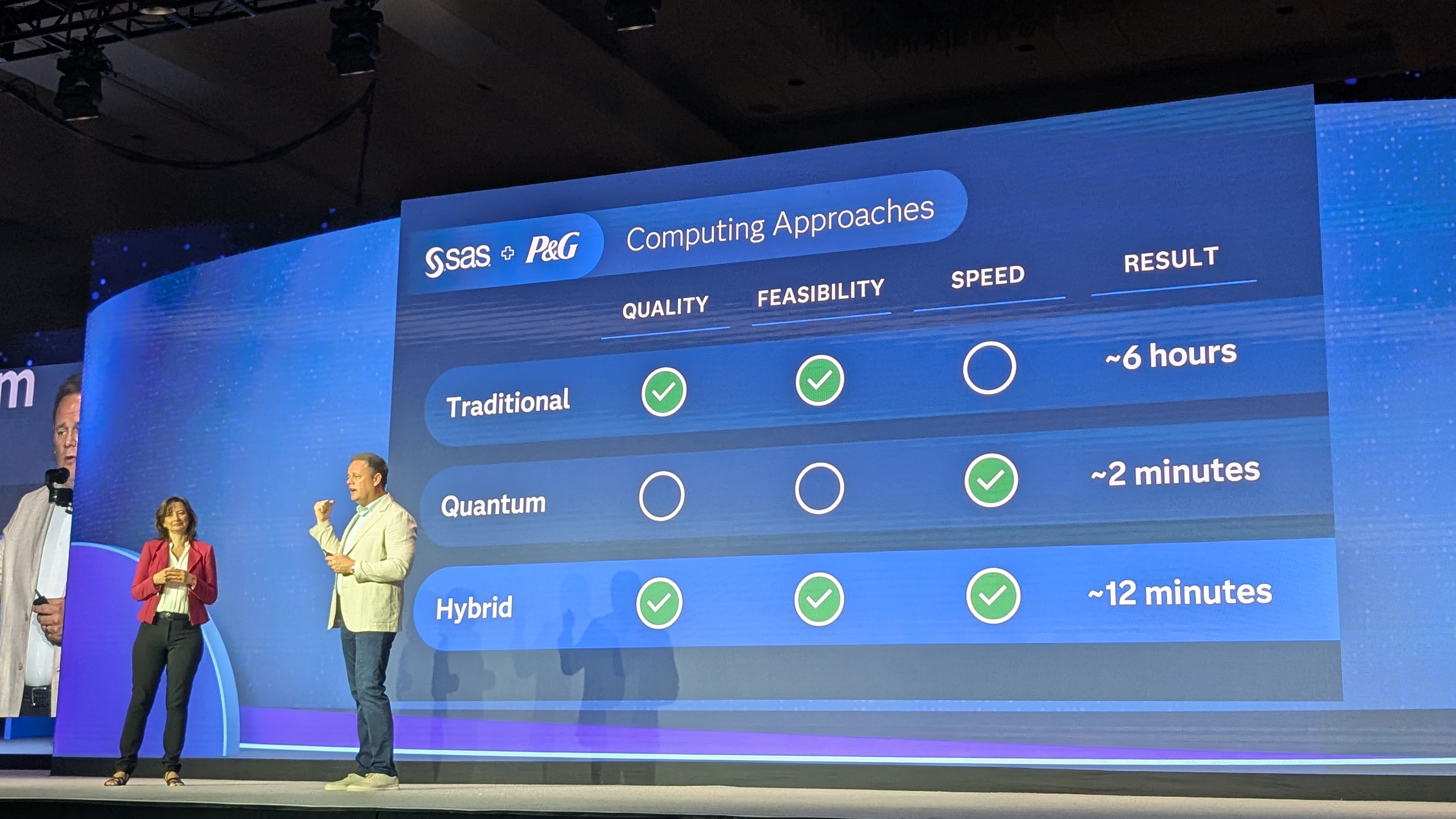

SAS thinks quantum AI has huge enterprise potential – here's why

SAS thinks quantum AI has huge enterprise potential – here's whyNews The analytics veteran has shone a light on three crucial quantum partnerships, as it warns organizations must innovate or fall prey to new threats

-

The UK government wants quantum technology out of the lab and in the hands of enterprises

The UK government wants quantum technology out of the lab and in the hands of enterprisesNews The UK government has unveiled plans to invest £121 million in quantum computing projects in an effort to drive real-world applications and adoption rates.

-

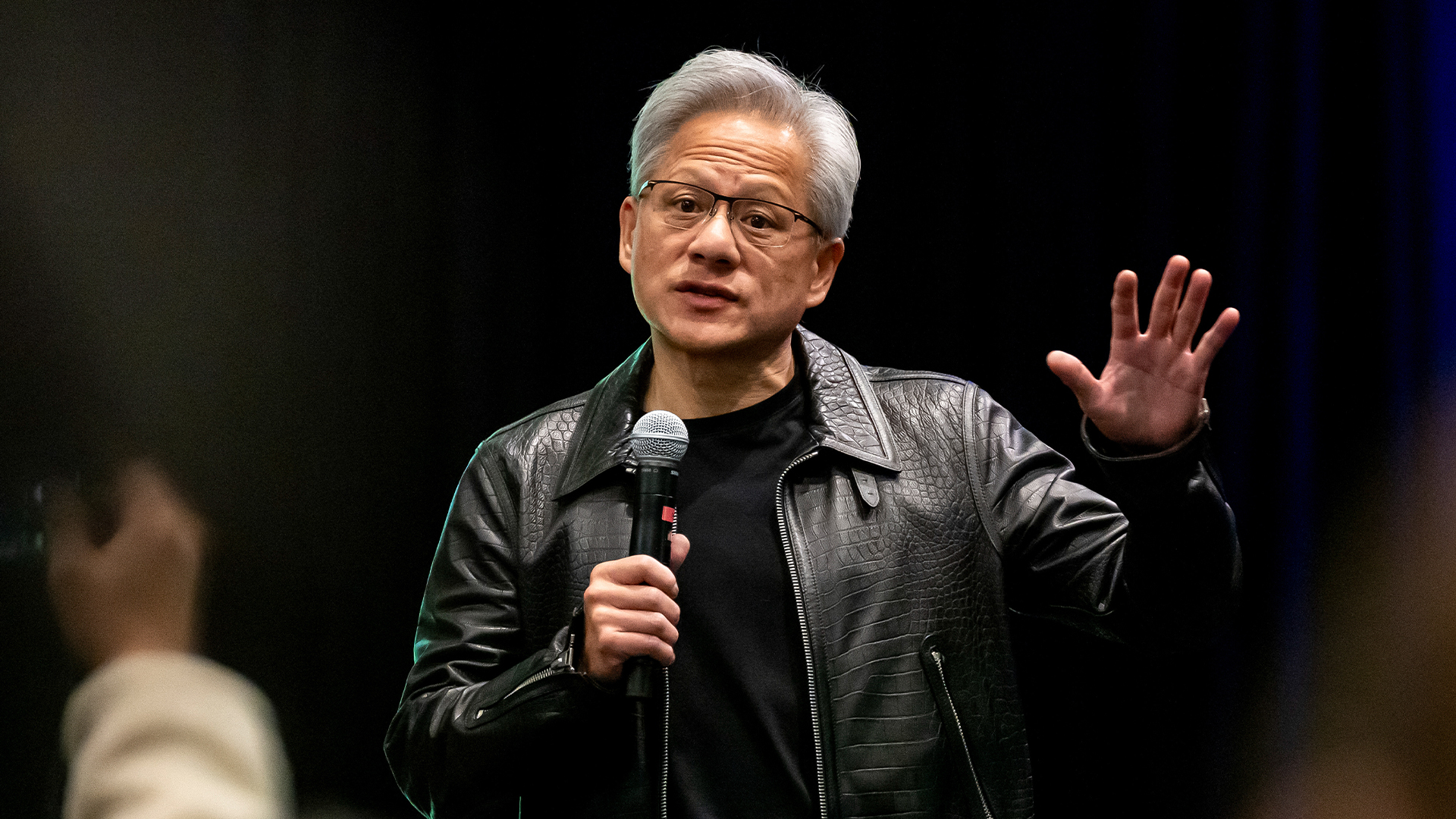

‘This is the first event in history where a company CEO invites all of the guests to explain why he was wrong’: Jensen Huang changes his tune on quantum computing after January stock shock

‘This is the first event in history where a company CEO invites all of the guests to explain why he was wrong’: Jensen Huang changes his tune on quantum computing after January stock shockNews Nvidia CEO Jensen Huang has stepped back from his prediction that practical quantum computing applications are decades away following comments that sent stocks spiraling in January.