Shadow AI and the new visibility gap in software development

Shadow AI adoption creates security risks and visibility gaps in development

Generative AI is becoming increasingly central to how software is developed. While this integration can vastly improve productivity, a problem is emerging alongside it: shadow AI, the use of AI tools without the organization's approval or oversight.

Recent research shows that shadow AI is already mainstream, with a Software AG study finding that half of workers rely on unapproved tools. In the UK, adoption is even more widespread: over 70% of employees admit to using unauthorized AI, and more than half do so weekly.

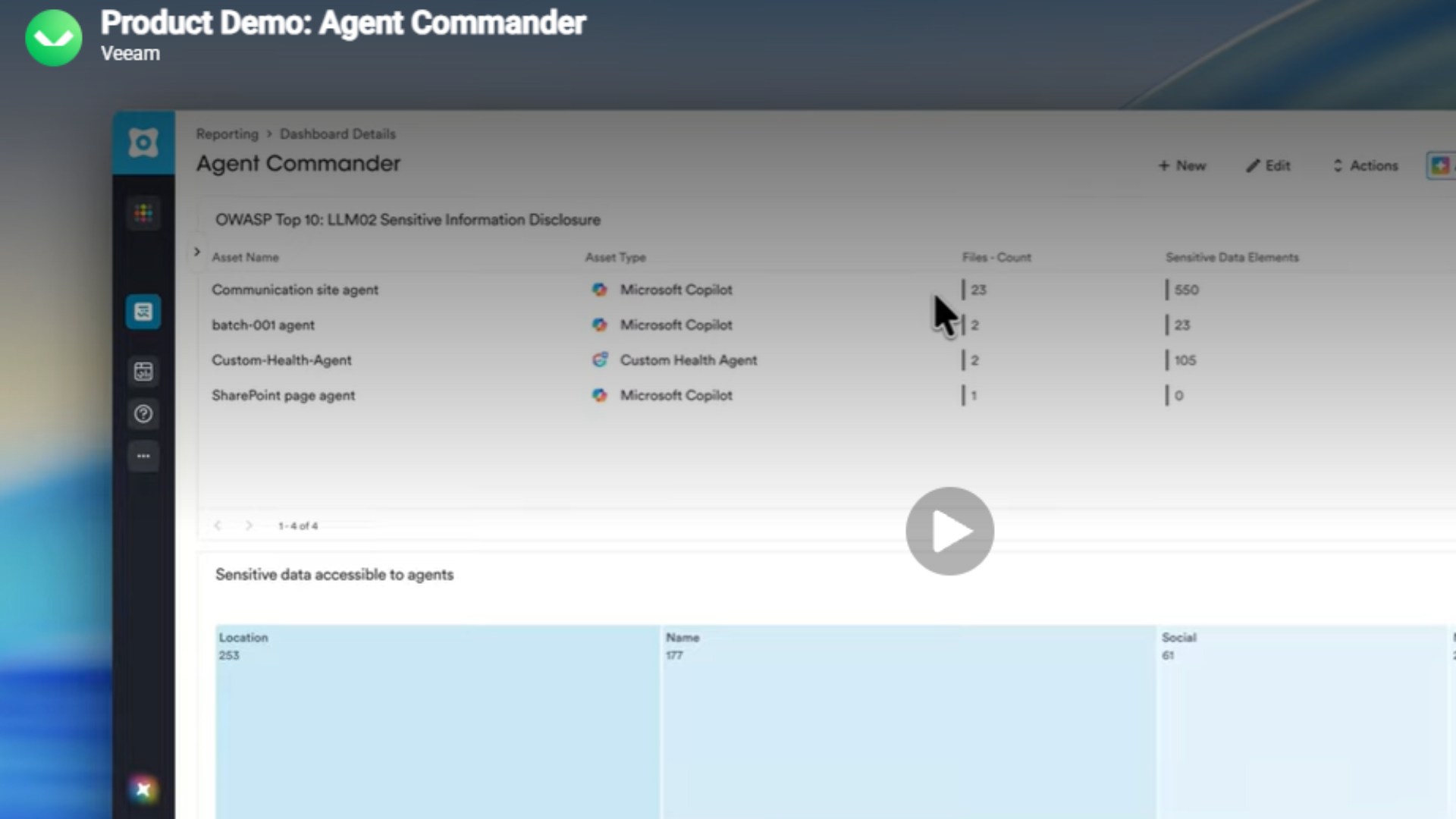

This is a familiar scenario in the tech sector, where shadow IT has long threatened the security of on-premises and cloud networks. Shadow AI, however, introduces more complicated issues, in particular the “lethal trifecta”. AI agents often combine three capabilities: access to private data, the ability to communicate externally, and exposure to untrusted content, which is the channel for prompt injection. When all three are present at once, malicious instructions hidden in that content can trick the agent into leaking sensitive data, which not only exposes the data to attack but can also put the organization at odds with its compliance requirements.
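To make the idea concrete, the trifecta can be expressed as a simple check over what an agent is able to do. This is a minimal sketch: the `AgentCapabilities` type and its field names are hypothetical, invented for illustration rather than taken from any agent framework. It shows that the risky combination disappears when any one of the three capabilities is removed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical summary of what an AI agent can do in a given setup."""
    reads_private_data: bool         # e.g. source code, customer records, secrets
    ingests_untrusted_content: bool  # e.g. web pages, issues, emails that could carry injected instructions
    communicates_externally: bool    # e.g. outbound HTTP requests, sending email


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three risk factors are present at the same time."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_content
        and caps.communicates_externally
    )


# An agent that reads the repo, browses the web, and can make HTTP calls.
coding_agent = AgentCapabilities(True, True, True)
# The same agent with outbound network access removed.
offline_agent = AgentCapabilities(True, True, False)

print(has_lethal_trifecta(coding_agent))   # True
print(has_lethal_trifecta(offline_agent))  # False
```

In practice the hard part is knowing these three values for every agent in use, which is exactly what shadow AI obscures.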

AI systems are now actively integrated across workflows, with agents and AI assistants writing code, executing commands, and automating tasks. They effectively stand in for human developers when writing software, yet they operate at the level of trust granted to the software that runs them. Importantly, that is not the same level of trust afforded to a human developer.

The two-way flow of data that characterizes AI usage in companies also increases the risk of confidential information leaking. Developers may, sometimes inadvertently, send proprietary code, confidential information, or credentials such as passwords to external AI models. Meanwhile, potentially vulnerable AI-generated code flows back into the codebase, where, if not correctly governed, it can introduce vulnerabilities, weaknesses, or unsafe patterns.
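One mitigation for the outbound half of this flow is to scan prompts for credentials before they leave the developer's environment. The sketch below assumes a simple regex-based redaction step; the rule names and patterns are illustrative and far from exhaustive, and a filter like this catches only well-formed secrets, so it complements rather than replaces a dedicated secret scanner. `AKIAIOSFODNN7EXAMPLE` is the example access key ID used in AWS documentation, not a real credential.

```python
import re

# Illustrative patterns only; production secret scanners use much larger, tested rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "password_assignment": re.compile(r"(?i)\b(password|passwd|pwd)\s*[:=]\s*\S+"),
}


def redact_secrets(prompt: str) -> tuple[str, list[str]]:
    """Replace likely secrets with placeholders and report which rules matched."""
    findings = []
    for name, pattern in SECRET_PATTERNS.items():
        prompt, count = pattern.subn(f"[REDACTED:{name}]", prompt)
        if count:
            findings.append(name)
    return prompt, findings


clean, hits = redact_secrets("password: hunter2\naws_key=AKIAIOSFODNN7EXAMPLE")
print(hits)  # ['aws_access_key_id', 'password_assignment']
```

Returning the list of matched rules, not just the cleaned text, lets a team log or alert on near-misses without storing the secrets themselves.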

How shadow AI is creating a visibility gap

For managed service providers (MSPs) and DevOps channel partners, this creates a gap in what they can monitor and prevent. Many MSPs have adapted to manage traditional shadow IT effectively, using tools such as cloud access security brokers (CASBs), SaaS discovery platforms, endpoint monitoring, and network traffic analysis.

These approaches are designed to identify unsanctioned applications by detecting unusual access patterns, monitoring known SaaS usage, or enforcing policies at the network and device level. With shadow AI, however, these methods are far less effective. Shadow AI usually lives inside engineering processes that standard monitoring does not cover: developers running agents on personal laptops or using personal API keys may be completely outside the reach of existing monitoring tools.


As a result, MSPs lack a clear path to detect or resolve unauthorized AI use in software development environments. Without new approaches, they risk losing control over one of the fastest-growing parts of their customers’ technology stacks.

How to close the visibility gap

One approach is to help organizations assess where they stand using structured maturity models. Reputable AI maturity assessments can help enterprises measure the impact of adding AI agents to software development processes and identify where governance, security controls, and operating processes must evolve. For partners, these frameworks move the discussion beyond risk alone. Rather than trying to stop AI use outright, which is seldom effective, companies can put appropriate limits in place while still allowing developers to use AI to write code.

This can be achieved by controlling how AI models are accessed: routing usage through centrally managed infrastructure rather than personal accounts or API keys gives organizations visibility into which tools are being used, by whom, and what data is flowing through them.
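A minimal sketch of such a routing layer, assuming an in-process gateway object: `AIGateway`, `AuditRecord`, the model names, and the audit fields are all hypothetical, and the forwarding step is a stub rather than a real provider call. The idea it illustrates is a single choke point that both enforces a model allowlist and records who used which tool and model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One entry per request: who asked, through which tool, to which model."""
    timestamp: str
    user: str
    tool: str
    model: str
    prompt_chars: int
    allowed: bool


@dataclass
class AIGateway:
    """Hypothetical central gateway that all AI traffic is routed through."""
    approved_models: set[str]
    audit_log: list[AuditRecord] = field(default_factory=list)

    def handle(self, user: str, tool: str, model: str, prompt: str) -> str:
        allowed = model in self.approved_models
        # Record every request, including rejected ones, so usage is visible.
        self.audit_log.append(
            AuditRecord(
                timestamp=datetime.now(timezone.utc).isoformat(),
                user=user,
                tool=tool,
                model=model,
                prompt_chars=len(prompt),
                allowed=allowed,
            )
        )
        if not allowed:
            raise PermissionError(f"model {model!r} is not on the approved list")
        return self._forward(model, prompt)

    def _forward(self, model: str, prompt: str) -> str:
        # Stub: a real gateway would call the model provider here using an
        # organization-managed credential rather than a personal API key.
        return f"<response from {model}>"


gateway = AIGateway(approved_models={"approved-model"})
print(gateway.handle("alice", "ide-assistant", "approved-model", "explain this function"))
```

This sketch logs prompt size rather than prompt content, which is a judgment call: full content logging improves auditability but creates another store of sensitive data to protect. Because the organization's credential lives in the gateway rather than on developer machines, rotating or revoking it in this design does not depend on tracking down personal keys.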

When governance is part of the underlying infrastructure, and largely invisible to developers, organizations can ensure consistent, safe use of AI across code, data, and systems while still giving teams the freedom to experiment and innovate.

The other half of the challenge is agent behavior at runtime. AI agents need network access, but that access can be scoped with process-level controls that restrict them to approved services only, so that a compromised or misdirected agent cannot reach unauthorized endpoints or exfiltrate data. This significantly reduces the impact of risks such as prompt injection.
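The policy decision behind such a restriction can be sketched as an egress allowlist check. The host names below are hypothetical placeholders. This shows only the decision logic: in practice enforcement would sit in a sandbox, proxy, or firewall around the agent process rather than in the agent's own code, since a compromised agent could simply skip an in-process check.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the organization's AI gateway and internal package mirror.
ALLOWED_HOSTS = {"ai-gateway.example.internal", "packages.example.internal"}
# Hypothetical wildcard rule: any subdomain of corp.example.com (not the bare domain).
ALLOWED_SUFFIXES = (".corp.example.com",)


def egress_allowed(url: str) -> bool:
    """Return True if an agent may open a connection to this URL's host."""
    host = (urlsplit(url).hostname or "").lower()
    if host in ALLOWED_HOSTS:
        return True
    # Match on the full suffix, including the leading dot, so that
    # "build.corp.example.com.attacker.net" is not treated as internal.
    return any(host.endswith(suffix) for suffix in ALLOWED_SUFFIXES)


print(egress_allowed("https://ai-gateway.example.internal/v1/chat"))  # True
print(egress_allowed("https://attacker.example.net/collect"))         # False
```

Defaulting to deny, and allowing only named destinations, is what limits the damage from a successful prompt injection: even if the agent is tricked, it has nowhere unapproved to send the data.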

When governance is embedded at the infrastructure layer rather than enforced through policy or training, the attack surface shrinks significantly. Developers keep their preferred tools, AI agents continue to operate as intended, and organizations gain full governance without forcing changes to developer workflows.

This change also gives partners the opportunity to expand their advisory role. As companies go through different iterations of AI integration, there is a clear three-stage evolution that creates revenue opportunities during specification, implementation, and the delivery of managed services.

- Migrate: Move from legacy environments to cloud-based development
  - At this stage, partners support customers in modernizing their development infrastructure, which includes migrating workloads to cloud environments, standardizing developer workspaces, and establishing secure, centrally managed platforms.
- Modernize: Incorporate governed and audited AI into the development lifecycle
  - Here, the focus is on embedding AI safely into workflows. Partners can introduce controlled access to AI models, implement audit and compliance mechanisms, and integrate governance into development pipelines. The goal is to help customers adopt AI without increasing risk.
- Multiply: Extend agentic workflows to handle repetitive, low-value workloads
  - Once governance is well established, organizations can expand their use of AI agents to automate testing, coding, and operational tasks. For partners, this creates opportunities to design and manage agent workflows, optimize performance, and deliver ongoing managed services that improve efficiency over time.

However, the most important change for partners may be where they focus their attention. The AI landscape is changing rapidly, and organizations will inevitably change models, tools, and suppliers over time.

Governance plans that depend on controlling specific tools can quickly become obsolete. Instead, partners should focus on the core processes developers use to build, test, and ship software. When governance is built around those fundamental processes rather than individual AI products, it remains effective even as the AI landscape changes.

AI use in development environments will only increase, and developers will always be tempted to try new tools if they speed up delivery. For MSPs, this makes partner selection critical. Vendors that prioritize security, governance, and observability, and that enable enterprise customers to use AI tools safely, will be best positioned to deliver the next generation of secure, AI-driven software development.

Simon Gregory is a channel and alliances leader at Coder, where he focuses on identifying, building, and scaling high-value strategic partnerships to drive growth and enhance client outcomes across EMEA.

With over 20 years of experience in partnerships and alliances, Simon has led international ecosystem development and expansion initiatives at organizations including Xebia, Laiye, and Stardog. His expertise spans partner strategy, indirect sales, and building collaborative frameworks that accelerate market reach and revenue.
