HPE partners with NVIDIA to accelerate AI model training

HPE said the partnership will help rapidly accelerate the training of AI models


Hewlett Packard Enterprise (HPE) has announced the launch of a new supercomputing solution designed specifically for training and fine-tuning generative AI models.

The new offering from HPE will provide large enterprises, research institutions, and government organizations with an out-of-the-box and scalable solution for the computationally intensive workloads generative AI requires.

Designed in partnership with Nvidia, the platform will comprise a software suite for users to train and fine-tune AI models, liquid-cooled supercomputers, accelerated compute, networking, storage, and services, all aimed at enabling organizations to leverage the power of AI.

The software suite will contain three tools to facilitate accelerated development and deployment of AI models for organizations.

HPE’s Machine Learning Development Environment will simplify data preparation and help customers integrate their models with popular machine learning (ML) frameworks.

The HPE Cray Programming Environment will provide stability and sustainability with a set of tools for developing, porting, debugging, and refining code.

NVIDIA’s AI platform, NVIDIA AI Enterprise, offers customers over 50 frameworks, pretrained models, and development tools to both accelerate and simplify AI development and deployment.


Based on the HPE Cray EX2500 exascale-class system, this will be the first system to feature a quad configuration of NVIDIA’s Grace Hopper GH200 Superchips, each of which boasts 72 Arm cores, 4 PFLOPS of AI performance, and 624GB of high-speed memory.

This will enable the solution to scale up to thousands of GPUs, with the ability to allocate the full capacity of nodes to a single AI workload for faster time-to-value, and to speed up training by 2-3x.

HPE’s Slingshot Interconnect networking solution will help organizations meet the scalability demands of AI model deployment, with an Ethernet-based, high-performance network made to support exascale-class workloads.

Finally, HPE will bundle in its Complete Care Services package to ensure customers receive expert advice on setup, installation, and ongoing support.

Justin Hotard, executive vice president and general manager, HPC, AI & Labs at HPE, said AI-tailored solutions are required to support the widespread deployment of the technology.

“To support generative AI, organizations need to leverage solutions that are sustainable and deliver the dedicated performance and scale of a supercomputer to support AI model training. We are thrilled to expand our collaboration with NVIDIA to offer a turnkey AI-native solution that will help our customers significantly accelerate AI model training and outcomes,” he said.

Sustainability a key to the future of supercomputing

According to estimates from Schneider Electric’s Energy Management Research Center, AI workloads will grow to require 20GW of power within data centers by 2028.


This means organizations looking to implement AI while maintaining their ESG goals will need to add compute capacity incrementally and in a carbon-neutral way.

HPE said it has integrated a direct liquid-cooling solution to address these concerns.

The firm already delivers the majority of the world’s top 10 most efficient supercomputers using its direct liquid cooling (DLC) capabilities to lower the energy consumption for compute-intensive applications.

It estimates that its DLC capabilities will be able to drive up to a 20% performance improvement per kilowatt over air-cooled solutions, while consuming 15% less power.
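To see how those two figures relate, the arithmetic can be sketched as below. This is an illustrative calculation only, not HPE's methodology: it assumes both estimates apply at once to the same system and are measured against the same air-cooled baseline, and the function name `relative_throughput` is hypothetical.

```python
def relative_throughput(perf_per_kw_gain: float, power_reduction: float) -> float:
    """Throughput relative to an air-cooled baseline of 1.0.

    Assumption (not stated in the article): both percentages apply
    simultaneously to the same system and the same baseline.
    """
    relative_efficiency = 1.0 + perf_per_kw_gain  # performance per kilowatt
    relative_power = 1.0 - power_reduction        # kilowatts consumed
    return relative_efficiency * relative_power


# Figures quoted by HPE: up to 20% more performance per kW, 15% less power.
ratio = relative_throughput(0.20, 0.15)
print(f"Throughput relative to air-cooled baseline: {ratio:.2f}x")
```

Under these assumptions the liquid-cooled system would deliver roughly the same total throughput as the air-cooled baseline while drawing 15% less power, which suggests the gain shows up as energy saved, not as extra performance.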

HPE’s supercomputing solution will be generally available in December 2023 in more than 30 countries.

Solomon Klappholz is a former staff writer for ITPro and ChannelPro. He has experience writing about the technologies that facilitate industrial manufacturing, which led to him developing a particular interest in cybersecurity, IT regulation, industrial infrastructure applications, and machine learning.

-

Why your best engineers are doing the wrong work

Why your best engineers are doing the wrong workIndustry Insights Why MSPs should adopt platform engineering to free engineers for more strategic work.

-

How Tim Cook turned Apple into a 'durable' tech industry powerhouse

How Tim Cook turned Apple into a 'durable' tech industry powerhouseNews Tim Cook might not boast the same tech visionary status as Steve Jobs, but the company’s growth has been remarkable

-

Nvidia targets open source interoperability with new model coalitions, agentic frameworks

Nvidia targets open source interoperability with new model coalitions, agentic frameworksNews A new open model development coalition and agentic frameworks target enterprise interoperability

-

Will AI hiring entrench gender bias?

Will AI hiring entrench gender bias?ITPro Podcast This International Women's Day, it's more important than ever to consider the inherent biases of training data

-

February rundown: SaaS-pocalypse now?

February rundown: SaaS-pocalypse now?ITPro Podcast Geopolitical uncertainty is intensifying public and private sector focus on true sovereign workloads

-

HPE and Nvidia launch first EU AI factory lab in France

HPE and Nvidia launch first EU AI factory lab in FranceNews The facility will let customers test and validate their sovereign AI factories

-

Dell Technologies doubles down on AI with SC25 announcements

Dell Technologies doubles down on AI with SC25 announcementsAI Factories, networking, storage and more get an update, while the company deepens its relationship with Nvidia

-

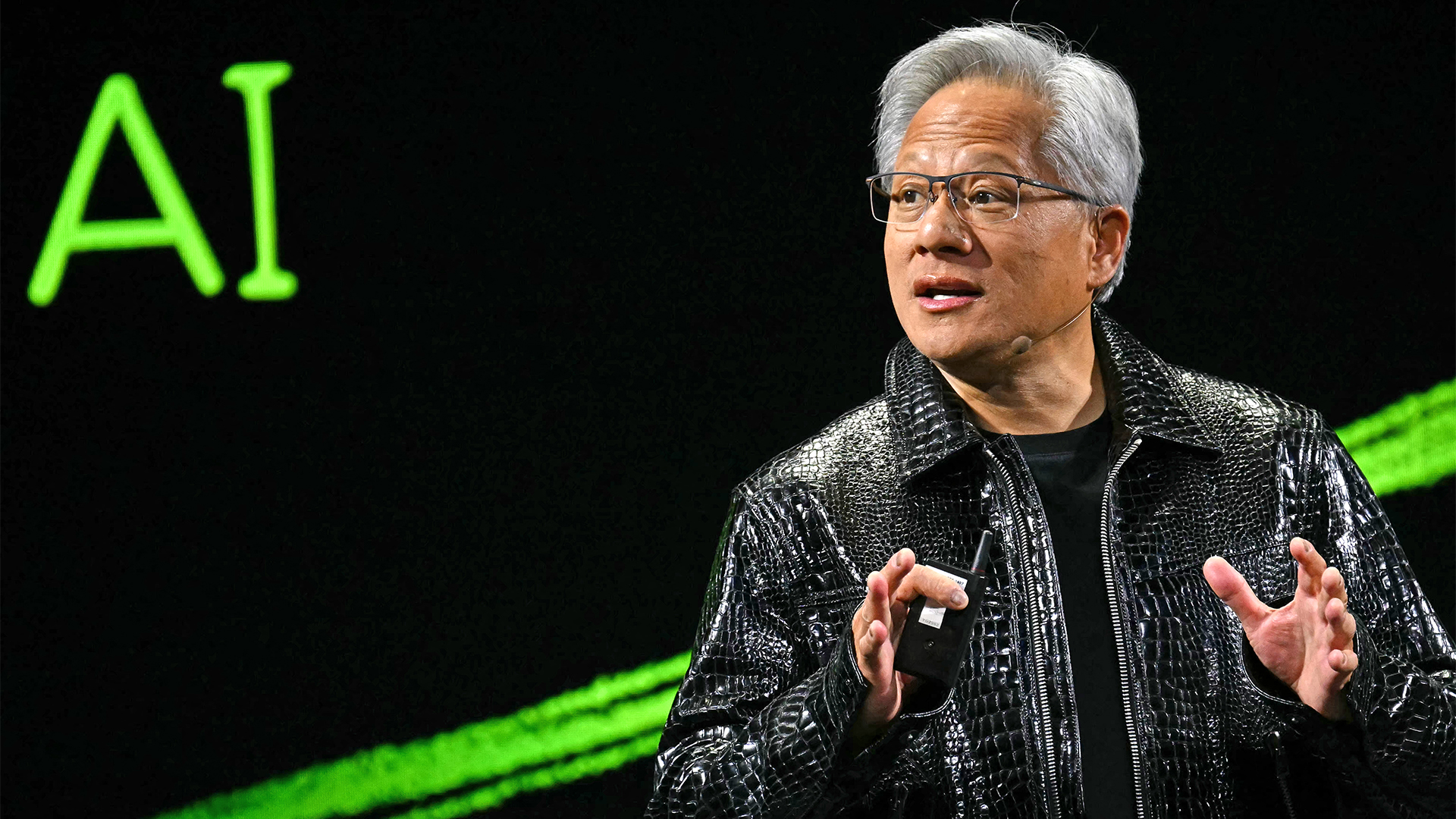

Nvidia CEO Jensen Huang says future enterprises will employ a ‘combination of humans and digital humans’ – but do people really want to work alongside agents? The answer is complicated.

Nvidia CEO Jensen Huang says future enterprises will employ a ‘combination of humans and digital humans’ – but do people really want to work alongside agents? The answer is complicated.News Enterprise workforces of the future will be made up of a "combination of humans and digital humans," according to Nvidia CEO Jensen Huang. But how will humans feel about it?

-

OpenAI signs another chip deal, this time with AMD

OpenAI signs another chip deal, this time with AMDnews AMD deal is worth billions, and follows a similar partnership with Nvidia last month

-

Why Nvidia’s $100 billion deal with OpenAI is a win-win for both companies

Why Nvidia’s $100 billion deal with OpenAI is a win-win for both companiesNews OpenAI will use Nvidia chips to build massive systems to train AI