Hugging Face just launched an open source alternative to OpenAI’s custom GPT builder, and it’s free

Hugging Face looks primed to position its new assistant creation platform as a direct competitor with OpenAI’s GPT Store, but how does it compare?

Rory Bathgate

Hugging Face has unveiled a new feature called ‘Hugging Chat Assistants’ that allows users to create and customize their own AI chatbots in an apparent bid to provide an open source alternative to OpenAI’s ‘GPT Store’.

The announcement was made on X by Hugging Face’s technical lead and LLM director, Philipp Schmid, who highlighted the efficiency of Hugging Face’s chat assistants.

Introducing Hugging Chat Assistant! 🤵 Build your own personal Assistant in Hugging Face Chat in 2 clicks! Similar to @OpenAI GPTs, you can now create custom versions of @huggingface Chat! 🤯 An Assistant is defined by 🏷️ Name, Avatar, and Description 🧠 Any available open… pic.twitter.com/9XaReKgg9m February 2, 2024

Schmid said that personalized chatbots could be created in just “two clicks,” complete with custom names, avatars, and system prompts.

Notably, he also touted Hugging Face’s newfound capacity to rival other AI companies, candidly describing the new feature as “similar” to OpenAI’s custom GPT offering.

OpenAI's custom GPT builder framework, offered through the GPT Store, enables users to create their own AI assistants tailored to specific business or personal needs.

Hugging Face’s offering, meanwhile, will provide public access to a similar collection of third-party Hugging Chat Assistants.

Hugging Face vs OpenAI: What’s better for building custom chatbots?

Both Hugging Face and OpenAI are catering to an ever-increasing demand for generative AI tools that fulfill specific use cases, though there are a couple of key differences with the launch of this latest framework.

On a purely financial level, OpenAI levies a range of charges for its GPT builder, while Hugging Chat Assistants are free to use.

OpenAI’s cheapest offering is ChatGPT Plus for $20 a month, followed by ChatGPT Team at $25 a month and ChatGPT Enterprise, the cost of which depends on the size and scope of the enterprise user.

Part of the reason Hugging Face is able to offer such a competitive alternative is that it uses open source large language models (LLMs).

OpenAI's GPT builder and GPT Store rely entirely on its proprietary, closed source LLMs: GPT-4, GPT-4 Vision, and GPT-4 Turbo.

Hugging Face users, by contrast, are offered a wider variety of models, including Mistral’s Mixtral and Meta’s Llama 2.

While Llama 2 can’t quite outperform GPT-4 yet, it runs at a similar level and, without the hefty price tag, it’s an attractive alternative.

There are still a few advantages to OpenAI's GPTs, however, such as the ability to generate images using OpenAI's in-house DALL-E 3 engine.

Other weaknesses of Hugging Chat Assistants include the lack of web search or retrieval augmented generation (RAG) support.

Despite its shortcomings, though, Hugging Face’s open source offering is still impressive. It looks set to bolster Hugging Face’s position in the ever-accelerating AI gold rush, which recently saw the company secure a landmark partnership with Google.

Hugging Face “cuts out the heavy lifting” for open source AI development

This is a significant announcement not only for open source AI, but also for the entire tech sector. Throughout 2023, enthusiasm for generative AI among business leaders was steadily tempered by the rising cost of generative AI platforms. Ben Wood, chief analyst and CMO at CCS Insight, told ITPro that many small firms would find AI “prohibitively expensive”.

In bringing customized chatbots to businesses for free, backed by powerful open source models, Hugging Face has put generative AI back on the table for firms that cannot afford the ongoing costs of public AI or the sizable funds necessary to train a model of their own.

Open source AI isn’t new in itself, as Hugging Face has been platforming free machine learning and generative AI models for some time.

But this new announcement cuts out the heavy lifting of implementing an open source model, allowing firms with smaller IT teams or limited time and resources to adopt AI for free. This is a big step toward ‘democratizing’ AI, a term many supporters of open source AI have come to use in the past year and a half.

Llama 2’s license does carry a restriction on the very largest companies, but for the vast majority of firms this will not come into play. It has been interpreted by many as an ‘anti-hyperscaler’ clause to prevent competitors such as Google and Microsoft from using the model directly. Smaller firms, which is to say almost every other company in the world, stand to benefit from the powerful, free model.

Mistral claims that Mixtral not only outperforms Llama 2 on “most benchmarks”, but is also superior to GPT-3.5 on the standard benchmarks. This claim is borne out in some of its published data, with the model achieving 70.6% in the text reasoning Massive Multitask Language Understanding (MMLU) benchmark against Llama 2 70B’s 69.9% and GPT-3.5’s 70%.

In other benchmarks such as WinoGrande, which pits LLMs against 44,000 reasoning problems, Mixtral’s score of 81.2% could not match Llama 2’s 83.2%. Neither model can match Google’s Gemini or OpenAI’s GPT-4, but they may not need to.

For many firms, functionality competitive with the free tier of ChatGPT will be good enough, particularly as part of a customer service funnel that redirects users to a human contact if problems aren’t resolved after a set window.

There’s also no reason to believe that Hugging Face won’t continue to update the platform with better models as they arrive. Meta is reportedly working on an even more powerful Llama 3 and Mistral aims to become a “European champion” for AI.

The benefits and potential of open source AI will continue to be explored in the coming weeks and months, as firms make models such as Llama 2 and Mixtral part of their software stack. Hugging Chat Assistants look set to make a meaningful contribution to that effort at the enterprise level.

George Fitzmaurice is a former Staff Writer at ITPro and ChannelPro, with a particular interest in AI regulation, data legislation, and market development. After graduating from the University of Oxford with a degree in English Language and Literature, he undertook an internship at the New Statesman before starting at ITPro. Outside of the office, George is both an aspiring musician and an avid reader.

- Rory Bathgate, Features and Multimedia Editor

-

Five Eyes agencies sound alarm over risky agentic AI deployments

Five Eyes agencies sound alarm over risky agentic AI deploymentsNews Security agencies have urged organizations to establish clear boundaries and guardrails for AI agents

-

What is Dell PowerProtect?

What is Dell PowerProtect?Dell PowerProtect gives enterprises modern, cyber-resilient backup and recovery capabilities for edge, on-prem, and multi-cloud environments

-

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your own

Four things you need to know about OpenAI’s new workspace agents for ChatGPT – including how to build your ownNews New ‘workspace agents’ from OpenAI will automate tasks for workers and can be customized for specific roles

-

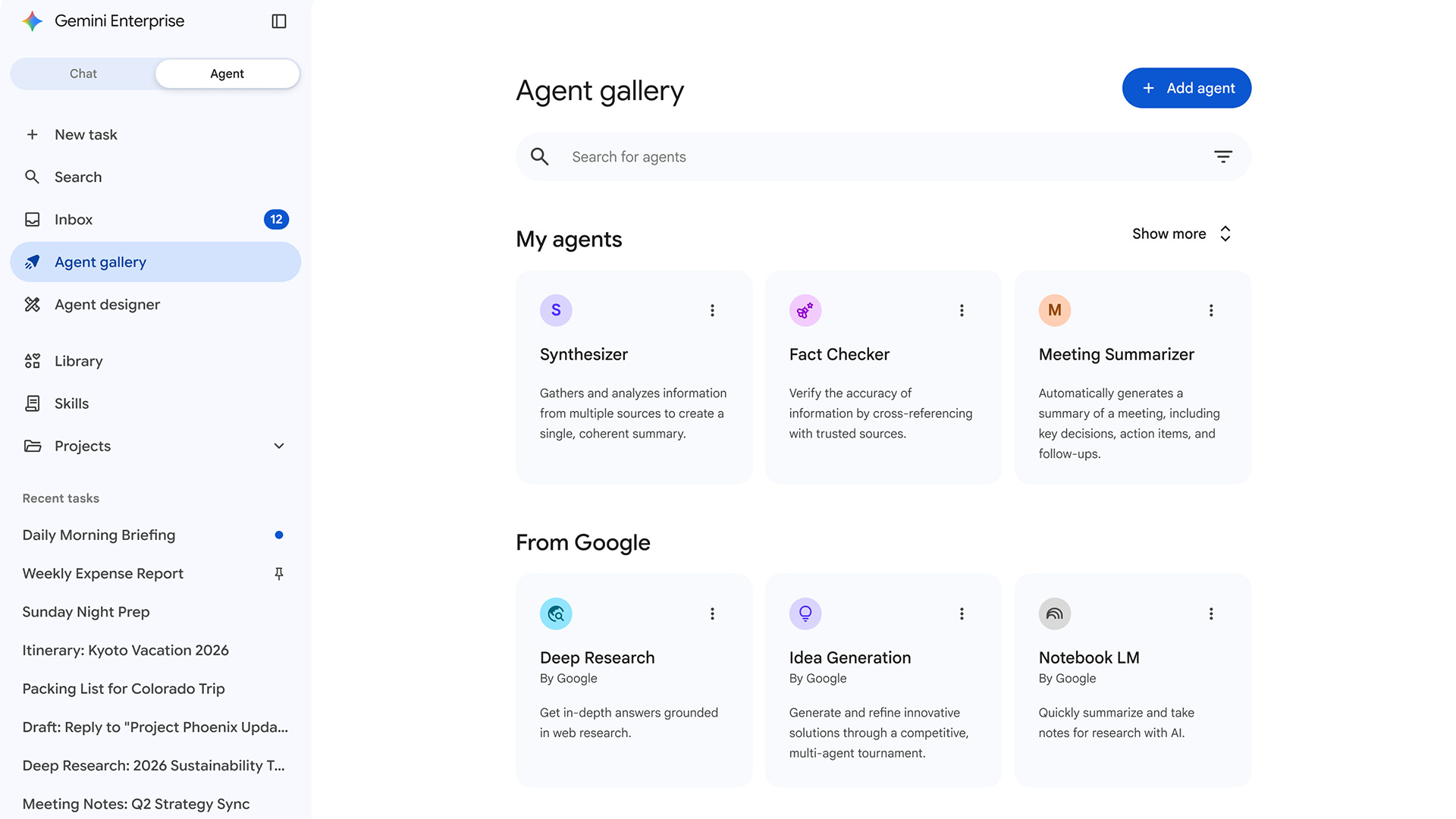

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deployment

Google expands Gemini Enterprise, consolidates Vertex AI services to simplify agent deploymentNews Gemini Enterprise Agent Platform aims to help organizations to build, scale, govern, and optimize AI agents

-

Meta engineer trusted advice from an AI agent, ended up exposing user data

Meta engineer trusted advice from an AI agent, ended up exposing user dataNews The internal security incident exposed sensitive user data to unauthorized employees

-

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regions

OpenAI says AI tools are paying dividends for small businesses, but uptake is sluggish in several UK regionsNews While some small businesses are seeing big benefits, many don't use AI at all

-

Microsoft has a new AI poster child in Anthropic – and it’s about time

Microsoft has a new AI poster child in Anthropic – and it’s about timeOpinion Microsoft is cosying up to Anthropic at a crucial time in the race to deliver on AI promises

-

Will AI hiring entrench gender bias?

Will AI hiring entrench gender bias?ITPro Podcast This International Women's Day, it's more important than ever to consider the inherent biases of training data

-

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise push

Why Amazon’s ‘go build it’ AI strategy aligns with OpenAI’s big enterprise pushNews OpenAI and Amazon are both vying to offer customers DIY-style AI development services

-

February rundown: SaaS-pocalypse now?

February rundown: SaaS-pocalypse now?ITPro Podcast Geopolitical uncertainty is intensifying public and private sector focus on true sovereign workloads