Meta unveils two new GPU clusters used to train its Llama 3 AI model — and it plans to acquire an extra 350,000 Nvidia H100 GPUs by the end of 2024 to meet development goals

Meta is expanding its GPU infrastructure with the help of Nvidia in a bid to accelerate development of its Llama 3 large language model

Meta has announced two new GPU clusters, giving the firm improved infrastructure for handling the taxing compute demands of artificial intelligence (AI) systems.

Marking a “major investment in Meta’s AI future,” the firm announced the addition of two 24k GPU data center-scale clusters that boast heightened throughput and reliability for AI workloads.

These GPUs will support both Meta’s current Llama 2 model and its upcoming Llama 3 model, as well as the company’s wider research and development projects across generative AI and other areas.

The firm described the announcement as “one step in our ambitious infrastructure roadmap,” which will see the tech giant acquire 350,000 Nvidia H100 GPUs by the end of 2024.

Meta said the expansion project will deliver a total compute power equivalent to nearly 600,000 H100s upon completion.

“As we look to the future, we recognize that what worked yesterday or today may not be sufficient for tomorrow’s needs,” the firm said in a statement.

“That’s why we are constantly evaluating and improving every aspect of our infrastructure, from the physical and virtual layers to the software layer and beyond.”

How do Meta’s GPUs stack up for large-scale use?

Meta focused on building “end-to-end” AI systems in its latest pair of GPU clusters, emphasizing researcher and developer experience as a means of guiding production.

With high-performance network fabrics working alongside 24,576 Nvidia H100 Tensor Core GPUs in each cluster, the new clusters are able to support “larger and more complex” models than Meta’s previous RSC clusters.
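To put those figures in context, the short Python sketch below works through the arithmetic. The cluster size, cluster count, and planned purchase come from the article; the figure of eight GPUs per server node is an assumption made purely for illustration, and the function name `cluster_summary` is hypothetical.

```python
# Figures quoted in the article.
GPUS_PER_CLUSTER = 24_576        # the "24k" clusters: 24 * 1,024 GPUs
NUM_CLUSTERS = 2
PLANNED_H100_PURCHASE = 350_000

# Assumption, not stated in the article: each server node holds 8 GPUs.
ASSUMED_GPUS_PER_NODE = 8


def cluster_summary(gpus_per_cluster, num_clusters, gpus_per_node, planned_purchase):
    """Return basic scale figures for a set of identical GPU clusters."""
    total_gpus = gpus_per_cluster * num_clusters
    nodes_per_cluster, leftover_gpus = divmod(gpus_per_cluster, gpus_per_node)
    return {
        "total_gpus": total_gpus,
        "nodes_per_cluster": nodes_per_cluster,
        "leftover_gpus": leftover_gpus,
        "share_of_planned_purchase": total_gpus / planned_purchase,
    }


summary = cluster_summary(
    GPUS_PER_CLUSTER, NUM_CLUSTERS, ASSUMED_GPUS_PER_NODE, PLANNED_H100_PURCHASE
)
print(f"Total GPUs across both clusters: {summary['total_gpus']:,}")
print(f"Nodes per cluster (assumed 8 GPUs each): {summary['nodes_per_cluster']:,}")
print(f"Share of planned purchase: {summary['share_of_planned_purchase']:.1%}")
```

Under these assumptions, the two clusters together hold 49,152 GPUs, or roughly 14% of the 350,000 H100s Meta plans to acquire.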

One of the new clusters was built with “remote direct memory access (RDMA) over converged Ethernet (RoCE),” while the other features an “Nvidia Quantum 2 InfiniBand fabric,” both geared towards improved network functionality.

Both clusters were built using Meta’s in-house open GPU hardware platform Grand Teton, which itself builds on generations of AI systems that integrate “power, control, compute, and fabric interfaces into a single chassis for better overall performance.”

“Grand Teton allows us to build new clusters in a way that is purpose-built for current and future applications at Meta,” the firm said.

Generative AI also consumes data in huge volumes, the firm said, meaning the next generation of GPU clusters needs to take storage into account.

Meta addresses this in its latest GPU clusters with a “home-grown” Linux storage system, which works alongside a version of Meta’s Tectonic distributed storage solution.

Though Meta reports that there were initial performance issues with these larger clusters, changes to its internal job scheduler helped optimize both GPU clusters to “achieve great and expected performance.”

George Fitzmaurice is a former Staff Writer at ITPro and ChannelPro, with a particular interest in AI regulation, data legislation, and market development. After graduating from the University of Oxford with a degree in English Language and Literature, he undertook an internship at the New Statesman before starting at ITPro. Outside of the office, George is both an aspiring musician and an avid reader.

-

Samsung averts strike action with AI-funded bonuses for chip workers

Samsung averts strike action with AI-funded bonuses for chip workersNews Deal with unions, mediated by the South Korean government, ends long-running dispute over bonus cap

-

'One-size-fits-all' agent governance sets enterprises up to fail

'One-size-fits-all' agent governance sets enterprises up to failNews Gartner recommends a graded approach for agents, depending on their level of autonomy

-

Arm’s new CPU represents a major shift for the AI data center market – what does it mean for UK tech?

Arm’s new CPU represents a major shift for the AI data center market – what does it mean for UK tech?Analysis With established expertise and an open approach, Arm could capture rising demand for CPUs

-

The Asus Ascent GX10 is a MiniPC with supercomputer ambitions for AI developers – but it's not cheap

The Asus Ascent GX10 is a MiniPC with supercomputer ambitions for AI developers – but it's not cheapReviews The Ubuntu-on-ARM operating system could limit its appeal, but for both serious and neophyte AI developers, it's a great desktop box

-

Nvidia’s Intel investment just gave it the perfect inroad to lucrative new markets

Nvidia’s Intel investment just gave it the perfect inroad to lucrative new marketsNews Nvidia looks set to branch out into lucrative new markets following its $5 billion investment in Intel.

-

Nvidia hails ‘another leap in the frontier of AI computing’ with Rubin GPU launch

Nvidia hails ‘another leap in the frontier of AI computing’ with Rubin GPU launchNews Set for general release in 2026, Rubin is here to solve the challenge of AI inference at scale

-

Nvidia braces for a $5.5 billion hit as tariffs reach the semiconductor industry

Nvidia braces for a $5.5 billion hit as tariffs reach the semiconductor industryNews The chipmaker says its H20 chips need a special license as its share price plummets

-

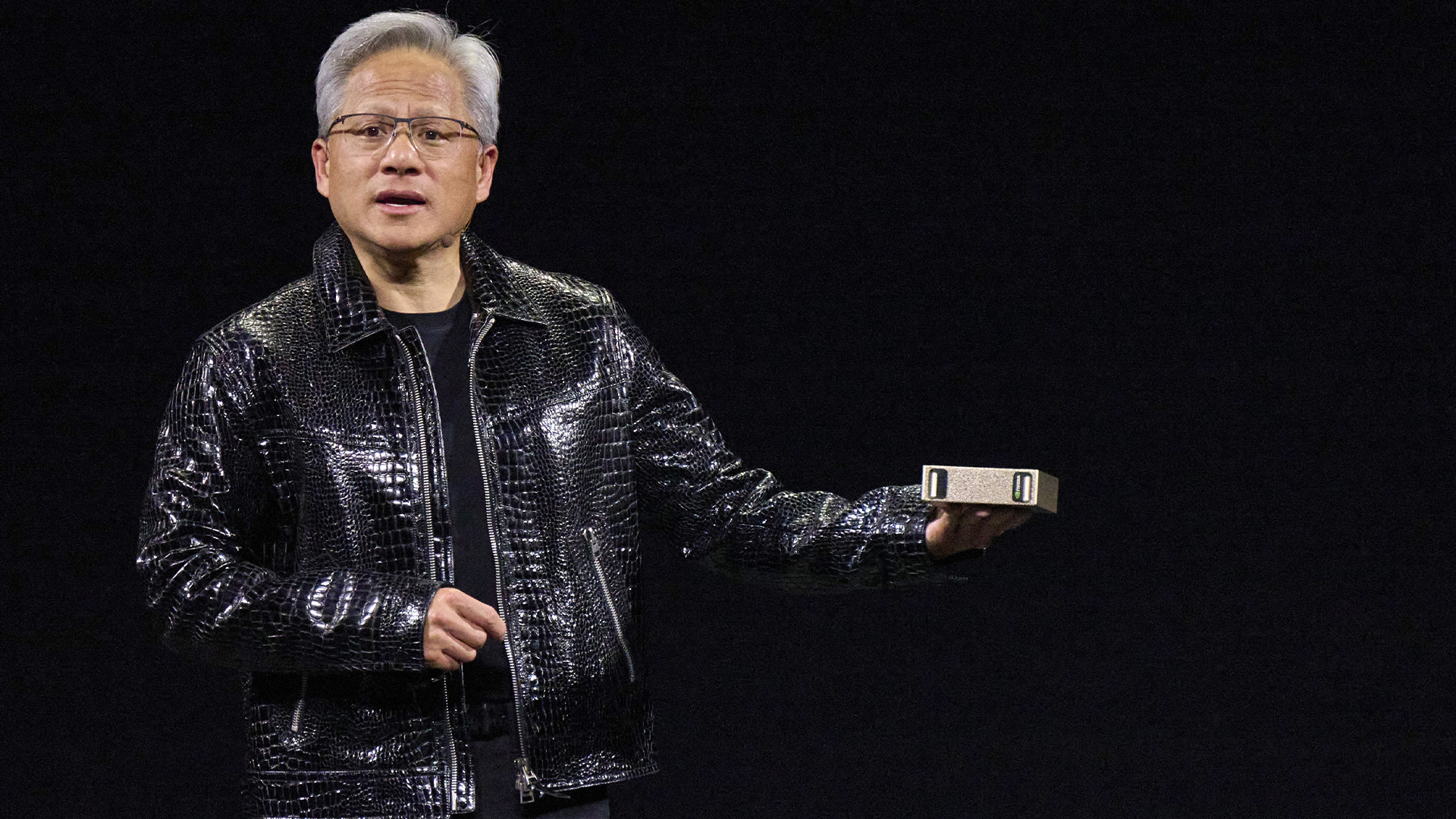

“The Grace Blackwell Superchip comes to millions of developers”: Nvidia's new 'Project Digits' mini PC is an AI developer's dream – but it'll set you back $3,000 a piece to get your hands on one

“The Grace Blackwell Superchip comes to millions of developers”: Nvidia's new 'Project Digits' mini PC is an AI developer's dream – but it'll set you back $3,000 a piece to get your hands on oneNews Nvidia unveiled the launch of a new mini PC, dubbed 'Project Digits', aimed specifically at AI developers during CES 2025.

-

Intel just won a 15-year legal battle against EU

Intel just won a 15-year legal battle against EUNews Ruled to have engaged in anti-competitive practices back in 2009, Intel has finally succeeded in overturning a record fine

-

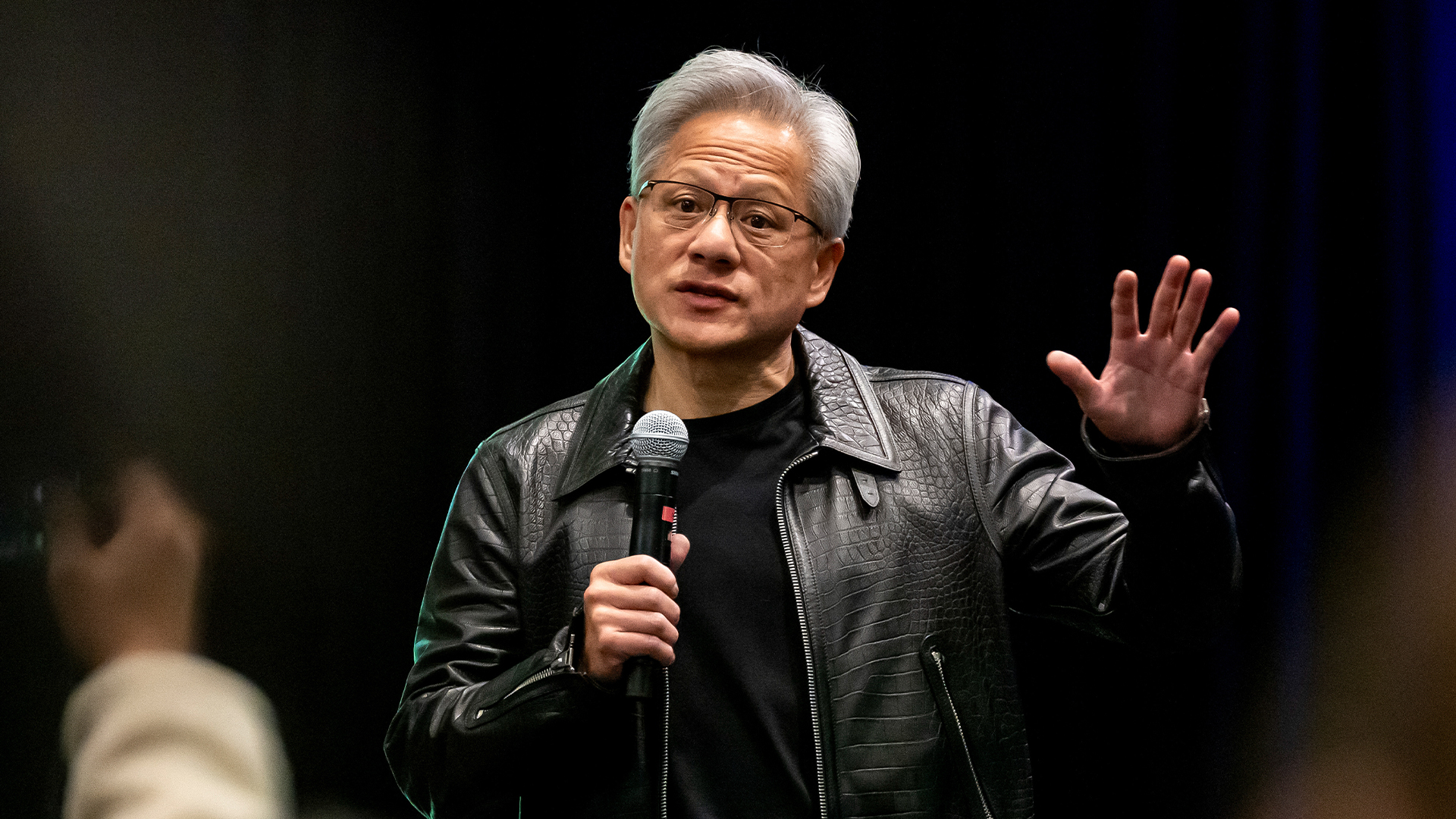

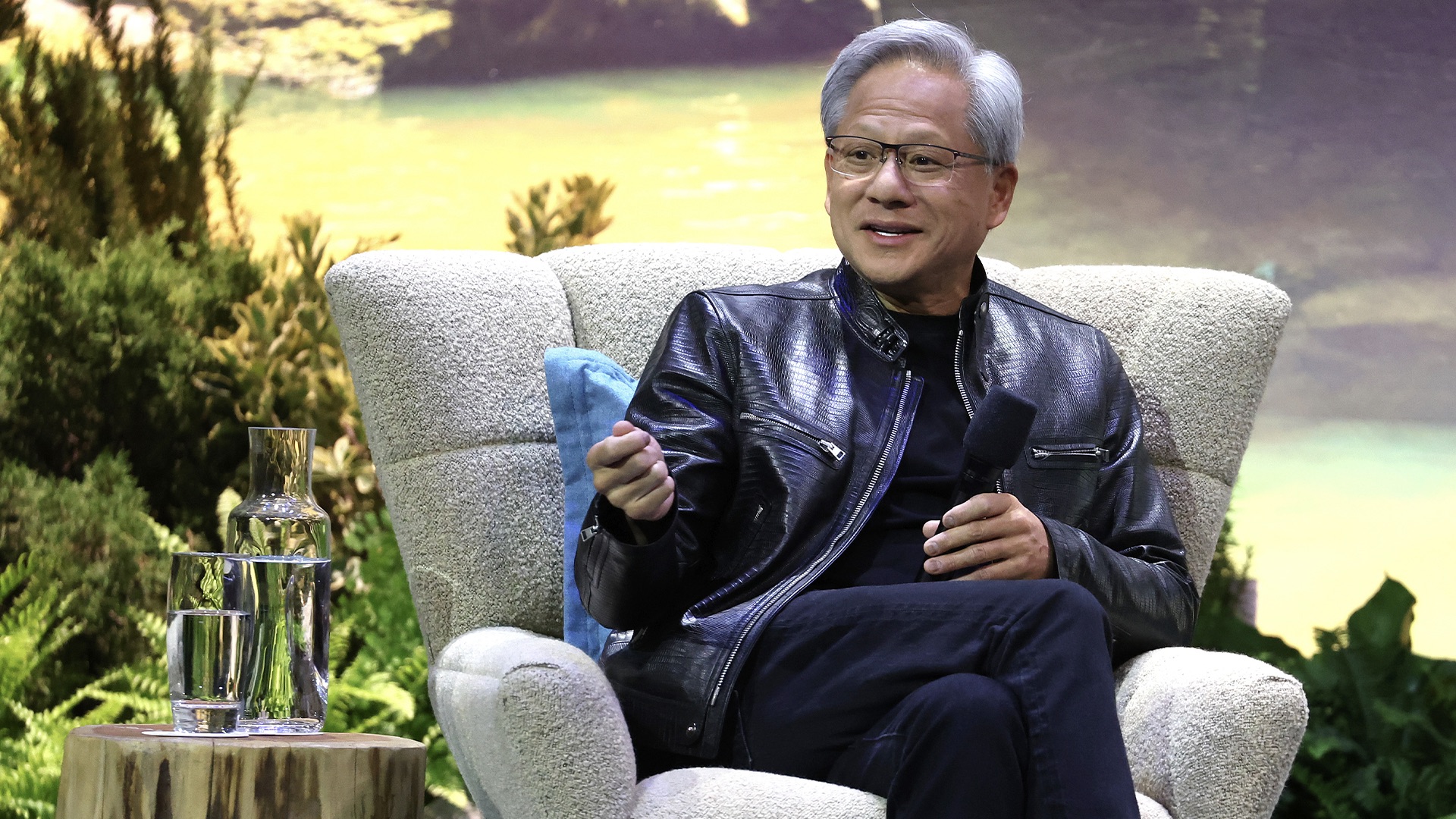

Jensen Huang just issued a big update on Nvidia's Blackwell chip flaws

Jensen Huang just issued a big update on Nvidia's Blackwell chip flawsNews Nvidia CEO Jensen Huang has confirmed that a design flaw that was impacting the expected yields from its Blackwell AI GPUs has been addressed.